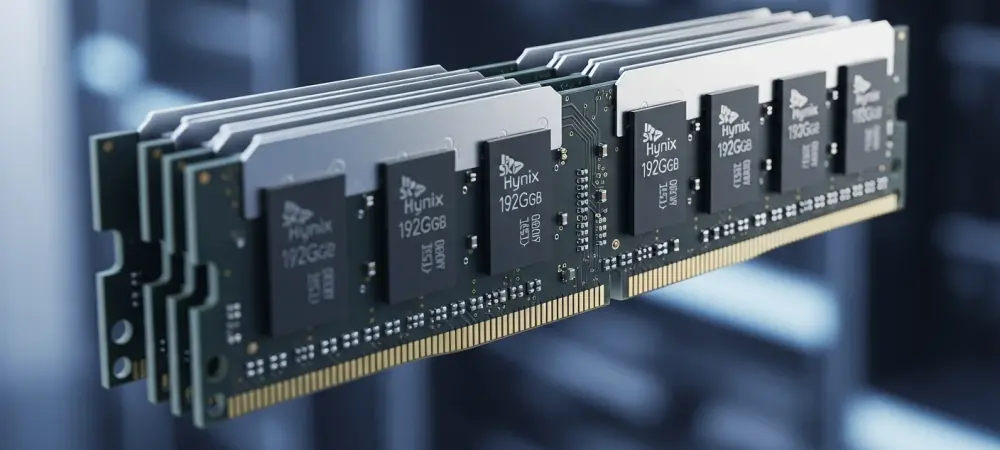

The persistent battle against thermal throttling and data congestion in the world's most powerful server racks has reached a turning point with the arrival of high-density modular memory. SK Hynix has officially entered mass production of its 192GB SOCAMM2 memory modules, a move that signals a shift in how data centers handle the heavy lifting of artificial intelligence. Specifically engineered to support NVIDIA's upcoming Vera Rubin platform, these modules represent more than just a capacity increase; they are a redesign of server memory architecture for the Agentic AI era. By using the advanced 1cnm process, the sixth generation of 10-nanometer-class DRAM technology, SK Hynix is bridging the gap between the low-power requirements of mobile devices and the high-performance demands of enterprise servers. This analysis explores how the new modules address the bottlenecks currently hindering the next leap in machine learning and global data center efficiency.

The Evolution of Memory in the Age of Large Language Models

To understand the significance of the 192GB SOCAMM2, one must look at the historical struggle to balance bandwidth with power consumption. For years, the industry relied on standard RDIMM solutions, which, while reliable, began to struggle under the immense data throughput required by Large Language Models. As AI moved from simple pattern recognition to the resource-heavy training of models with hundreds of billions of parameters, the energy cost of moving data became a primary concern for Cloud Service Providers.

The shift toward LPDDR5X DRAM within a server context represents a strategic pivot, adapting high-efficiency mobile technology to meet the grueling thermal and computational needs of modern AI clusters. This transition reflects a broader recognition that traditional scaling is no longer sufficient; the physical limitations of existing memory formats necessitated a radical departure to sustain the exponential growth of neural network complexity.

Analyzing the Impact of SOCAMM2 Technology

Breakthroughs in Bandwidth and Power Efficiency

The primary technical achievement of the 192GB SOCAMM2 lies in its ability to outperform traditional RDIMM solutions while significantly reducing the carbon footprint of the data center. By delivering more than double the bandwidth of conventional modules, SK Hynix has cleared a path for faster data processing during both the training and inference phases of AI development. Even more impressive is the 75% improvement in power efficiency. For global Cloud Service Providers, this reduction in energy consumption is not merely an environmental win; it is an economic necessity that allows for higher density in server racks without exceeding thermal limits or infrastructure power caps.
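To put those two headline figures in perspective, the short Python sketch below works through the arithmetic. It rests on an assumption the announcement does not spell out: that a "75% improvement in power efficiency" means 1.75 times the work per unit of energy. The function names are illustrative only, and the inputs are the relative figures quoted above rather than measured data.

```python
def relative_energy(efficiency_gain: float) -> float:
    """Energy needed for the same work, as a fraction of the baseline.

    Assumes efficiency_gain is a fractional improvement in work per unit
    of energy (0.75 means 1.75x work per joule). This interpretation is
    an assumption, not something stated in the source.
    """
    if efficiency_gain <= -1.0:
        raise ValueError("efficiency_gain must be greater than -1.0")
    return 1.0 / (1.0 + efficiency_gain)


def relative_transfer_time(bandwidth_multiplier: float) -> float:
    """Time to move a fixed amount of data, as a fraction of the baseline."""
    if bandwidth_multiplier <= 0.0:
        raise ValueError("bandwidth_multiplier must be positive")
    return 1.0 / bandwidth_multiplier


if __name__ == "__main__":
    energy = relative_energy(0.75)
    print(f"Energy for the same work: {energy:.1%} of baseline")
    print(f"Energy saved: {1.0 - energy:.1%}")
    # "More than double" the bandwidth, so 2.0 is a conservative lower bound.
    print(f"Transfer time at 2x bandwidth: {relative_transfer_time(2.0):.0%} of baseline")
```

Under that reading, a 75% efficiency gain corresponds to roughly 43% less energy for the same workload, not 75% less. That distinction matters when estimating how many additional modules fit under a fixed rack power cap.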

Modularity and the Compression Connector Innovation

A critical hurdle in high-density server environments has always been the physical integrity of the memory connection and the ease of hardware maintenance. The SOCAMM2 form factor introduces a slim design that utilizes a compression connector rather than traditional pins. This innovation serves two purposes: it enhances signal integrity by reducing electrical interference and facilitates easier module replacement. This modularity is a departure from previous soldered-down low-power memory configurations, giving data center operators the flexibility to upgrade or repair systems without replacing entire motherboards, thereby extending the hardware lifecycle.

Strategic Supply Chain Diversification for the Vera Rubin Era

The mass production of these modules is also a story of market stability and strategic sourcing. NVIDIA is expected to diversify its supply chain by sourcing SOCAMM2 components from a trio of industry leaders: SK Hynix, Samsung, and Micron. This approach ensures that the rollout of the Vera Rubin platform is not hampered by single-source bottlenecks. By fostering a competitive environment among memory manufacturers, the industry can ensure a steady flow of high-capacity components. This diversification is essential as the global demand for AI-optimized hardware continues to outpace supply, providing a safeguard for the rapid deployment of next-generation AI services.

Future Trends in AI Hardware and Energy Management

Looking ahead, the transition to SOCAMM2 technology suggests that the future of AI will be defined by low-power, high-density architectures. As Agentic AI (AI that can autonomously perform complex tasks) becomes more prevalent, demand for always-on, high-performance memory is likely to grow sharply. We can expect future innovations to focus on even tighter integration between memory and processing units to further reduce latency. Furthermore, as regulatory scrutiny over the energy consumption of AI increases, the industry may come under pressure to match the efficiency levels that 1cnm-based modules demonstrate, which could make low-power DRAM an increasingly common choice for new server deployments.

Strategic Takeaways for the Enterprise Sector

For businesses and technology professionals, the arrival of 192GB SOCAMM2 modules offers several actionable insights. First, when planning infrastructure upgrades, decision-makers should prioritize modularity to ensure their systems remain adaptable to evolving AI requirements. Second, there is a clear benefit to pivoting from simple inference-optimized setups to hardware that can also handle resource-intensive training, as this is likely to be a key differentiator in the AI market. Finally, organizations should optimize their software stacks to take full advantage of the increased bandwidth and efficiency these new modules offer, so that hardware capabilities translate into operational speed.

The Foundations of the Next AI Revolution

The mass production of 192GB SOCAMM2 memory marks the end of the experimental phase for next-generation AI infrastructure and the beginning of large-scale deployment. By addressing the dual challenges of thermal management and data bottlenecks, this technology provides an essential foundation for the Vera Rubin platform and beyond. Industry stakeholders have recognized that hardware has to catch up to the ambitions of software developers to prevent a plateau in machine learning capabilities. These advancements help keep infrastructure resilient, scalable, and capable of supporting the autonomous digital ecosystems that are beginning to define the technological landscape. Moving forward, the focus is shifting from mere capacity to the sustainable integration of intelligence and energy efficiency.