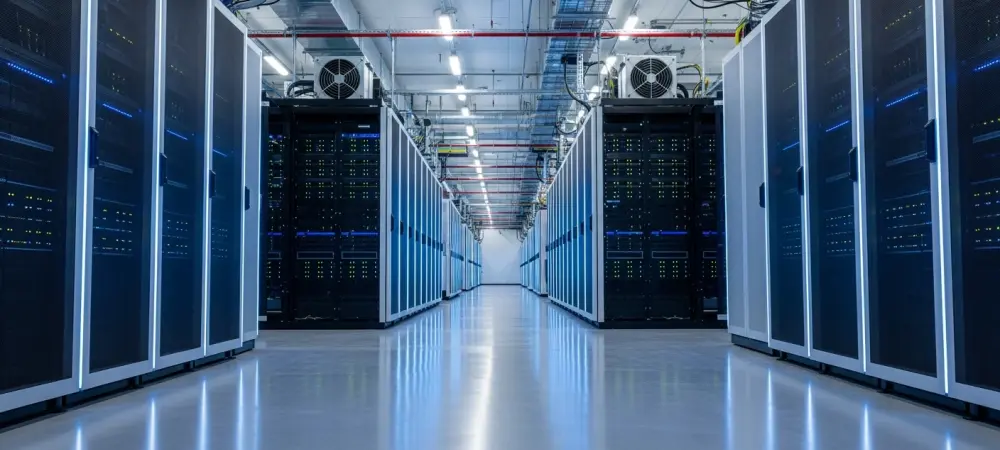

The global race for artificial intelligence dominance is no longer restricted to sophisticated algorithms or neural network architectures; it has moved into the physical realm of industrial steel and high-voltage power. While software development remains the public face of the industry, the survival of the AI revolution depends entirely on massive, specialized infrastructure investments that can handle the sheer heat and electrical load of modern chips. This shift marks a transition from general-purpose data storage to high-density computing hubs designed to sustain the next generation of digital intelligence.

As AI workloads become increasingly complex, traditional data center designs are reaching their thermal and electrical limits. Modern facilities must now accommodate unprecedented kilowatt-per-rack requirements, leading to the birth of “AI-ready” architecture. This article explores the rise of these specialized hubs, using the $1.4 billion Metrobloks expansion as a lens on the migration toward regional metro edge facilities.

The Evolving Landscape: High-Density AI Computing

Market Growth: The Rise of AI-Ready Facilities

Current demand for infrastructure is shifting toward facilities specifically engineered for the high-density requirements of modern GPUs. Standard server racks that once pulled five kilowatts are being replaced by high-performance configurations demanding fifty kilowatts or more. This tenfold jump in power density forces developers to prioritize advanced liquid cooling systems and large backup power systems that were once considered niche requirements.
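The jump from five to fifty kilowatts per rack can be made concrete with a little arithmetic. The sketch below is illustrative only: the 1 MW power budget and the function names are hypothetical, and it assumes that essentially all electrical power drawn by a rack ends up as heat the cooling system must remove. Only the 5 kW and 50 kW figures come from the text above.

```python
# Illustrative sketch: everything except the 5 kW and 50 kW rack figures
# is an assumption, not data from any specific facility.

KW_TO_BTU_PER_HR = 3412.14  # 1 kW of load is roughly 3,412 BTU/hr of heat


def racks_supported(it_capacity_kw: float, rack_kw: float) -> int:
    """Number of full racks a fixed IT power budget can feed."""
    return int(it_capacity_kw // rack_kw)


def rack_heat_btu_per_hr(rack_kw: float) -> float:
    """Heat one rack rejects, assuming all electrical power becomes heat."""
    return rack_kw * KW_TO_BTU_PER_HR


if __name__ == "__main__":
    budget_kw = 1000.0  # hypothetical 1 MW data hall
    for rack_kw in (5.0, 50.0):
        count = racks_supported(budget_kw, rack_kw)
        heat = rack_heat_btu_per_hr(rack_kw)
        print(f"{rack_kw:>4.0f} kW racks: {count} racks, {heat:,.0f} BTU/hr each")
```

Under these assumptions the same power budget feeds a tenth as many racks, while each rack sheds ten times the heat in the same footprint, which is why air cooling alone tends to fall short at these densities.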

Furthermore, secondary markets are becoming the new frontier for these digital powerhouses. Cities like Kansas City and various locations throughout the Midwest offer a competitive edge through lower operational costs and stable energy grids. State-level incentives, particularly Missouri’s Data Center Sales Tax Exemption Program, provide the regulatory support necessary to transform these regions into national tech corridors.

Case Study: Metrobloks and the Liberty Expansion

The $1.4 billion Metrobloks project in Liberty, Missouri, represents a significant leap in regional infrastructure capability. Spanning nearly 570,000 square feet in Clay County, this three-building campus is specifically tailored for intensive AI workloads. The first phase consists of a 177,000-square-foot facility that utilizes municipal bond support to accelerate construction and deployment.

Strategic site selection played a pivotal role in the development of the 29-acre Old Hughes Road location. By positioning the campus near robust fiber networks and existing energy assets, Metrobloks ensures low-latency connectivity and reliable power. This Missouri facility acts as a scalable blueprint for the company’s broader global strategy, which includes similar high-density developments in Miami, Phoenix, and Paris.

Strategic Industry Perspectives: Regional Migration

The Shift: The Move to the Metro Edge

Industry leaders, including Metrobloks CEO Ernest Popescu, emphasize a strategic pivot away from traditional hyperscale models toward “metro edge” facilities. These centers bring high-density compute power closer to urban hubs and end-users without the congestion of coastal markets. This approach reduces latency for real-time AI applications while bypassing the space constraints found in older tech capitals.
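The latency argument for metro edge siting rests largely on distance. As a rough sketch, light in optical fiber covers on the order of 200 km per millisecond, so a lower bound on round-trip time can be estimated from route length alone. The distances and the function name below are hypothetical, and real latency will be higher once routing, switching, and processing are included.

```python
# Rough lower bound on network round-trip time from fiber distance alone.
# Assumption: light in fiber travels about 200 km per millisecond
# (roughly two thirds of its speed in a vacuum).

FIBER_KM_PER_MS = 200.0


def round_trip_ms(distance_km: float) -> float:
    """Minimum round-trip propagation delay over a fiber route, in ms."""
    return 2.0 * distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Hypothetical route lengths: a nearby metro site vs. a distant hub.
    for label, km in (("metro edge", 50.0), ("distant hub", 1500.0)):
        print(f"{label}: at least {round_trip_ms(km):.1f} ms round trip")
```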

The Midwest advantage is becoming a central theme in economic discussions. Governor Mike Kehoe and local leaders view this migration as a foundational element of a new digital economy. By providing reliable energy and a supportive business environment, inland regions are successfully attracting billion-dollar investments that were previously reserved for Silicon Valley or Northern Virginia.

Investor Confidence: Financing the Future

A successful financing round led by groups like Canopy Generations Fund signals a deep institutional belief in the longevity of specialized AI assets. Investors are increasingly viewing physical data centers as the essential “real estate” of the modern era. This capital influx ensures that the physical foundations of AI can keep pace with the rapid acceleration of hardware capabilities and data processing needs.

Future Outlook: The Decentralization of AI Power

The Regional Hub Explosion: Beyond Coastal Markets

The coming years will likely see continued growth in regional hubs as tech companies move out of saturated coastal markets. Strategic inland locations offer the land and power resources required for massive expansion. This decentralization could ease pressure on congested coastal grids and broaden access to high-performance computing across different geographic zones.

However, balancing these massive power requirements with sustainability remains a significant hurdle. Operators must find ways to integrate renewable energy sources to power their cooling systems and server banks. The socio-economic impact is equally profound, as these projects create high-quality technical jobs and foster innovation ecosystems in regions that were once overlooked by the tech sector.

Evolving Hardware: Preparing for New Chips

As AI chip designs continue to evolve, the physical configurations of data centers must remain flexible. Future iterations of silicon will likely require even more specialized spatial layouts and advanced immersion cooling techniques. Facilities that can adapt to these changing mechanical requirements will become the most valuable assets in the global digital supply chain.

The success of artificial intelligence remains inextricably linked to the physical facilities that house it. The Kansas City metropolitan area and similar regional hubs are positioned to lead the next phase of the global digital economy. Moving forward, the industry will need to prioritize the integration of sustainable power solutions and modular facility designs to maintain the momentum of the intelligence age.