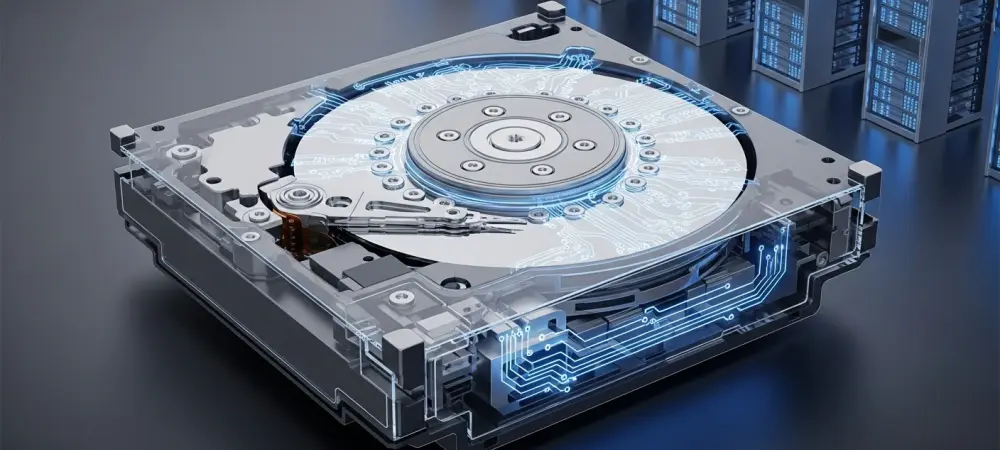

The astronomical growth of artificial intelligence and high-performance computing has pushed global data center energy consumption toward a critical breaking point where supply often struggles to meet demand. As of 2026, hyperscale facilities are increasingly constrained by power delivery limitations and cooling capacities rather than physical floor space, forcing a radical rethink of hardware efficiency. Hard disk drives have historically been a significant contributor to this energy drain because they must remain spinning at high speeds to ensure immediate data availability. This constant mechanical rotation generates heat and consumes electricity even when the drive is not actively reading or writing information. Western Digital has addressed this inefficiency by introducing a power-optimized drive technology that allows disks to enter a low-power state without the prohibitive latency traditionally associated with waking them up. By bridging the gap between performance and conservation, this innovation offers a path toward sustainable scaling for the world’s most demanding digital infrastructures.

Modern storage environments have long avoided aggressive power management for mechanical drives due to the severe performance penalties that occur when a disk must accelerate from a standstill. When a traditional hard drive spins down to save energy, the process of returning to full rotational speed can take several seconds, a delay that often triggers application timeouts or system-level errors. Because enterprise software stacks expect near-instantaneous response times, data center operators have typically chosen to keep millions of drives spinning 24/7, regardless of actual workload requirements. This practice leads to immense waste, particularly for “cool” data that is accessed infrequently but must remain online. The new technology developed by Western Digital eliminates these historical barriers by fine-tuning the transition between power states. This allows the hardware to respond to incoming requests with a minimal impact on latency that remains well within the operational thresholds required by hyperscale software environments, effectively ending the forced trade-off between energy savings and system reliability.

Overcoming Traditional Latency Barriers

The breakthrough in Western Digital’s approach lies in its ability to manage the delicate mechanics of the drive motor and read-write heads with unprecedented precision. Historically, any attempt to reduce power by lowering the spindle speed resulted in a “spin-up” delay that was simply too long for production databases and real-time analytics engines to handle. By optimizing the firmware and mechanical response times, these new drives can now transition from a deep sleep or low-power idle state back to a fully active status in a fraction of the time previously required. This means that the software layer, which manages thousands of simultaneous requests, does not perceive a stall significant enough to drop connections or report hardware failures. This hardware-centric solution allows for a more dynamic allocation of power across a storage rack, ensuring that only the disks currently processing data are drawing full wattage. This technical achievement marks a transition from static power consumption models to a more intelligent, demand-driven energy profile for massive storage arrays.

Furthermore, this technology creates a unique middle ground in the storage hierarchy, serving as a functional bridge between high-speed solid-state drives and traditional high-capacity archival disks. While flash storage provides the highest performance, its cost remains prohibitive for the massive volumes of secondary data that hyperscalers must retain. Conversely, traditional high-capacity hard drives are excellent for density but inefficient when kept in a permanent high-power state. By allowing high-capacity mechanical drives to behave more like on-demand resources, Western Digital has effectively enabled a new tier of energy-efficient storage. This tier is particularly well-suited for social media archives, backup repositories, and large-scale telemetry logs where millisecond-level latency is less critical than total cost of ownership. The ability to park data on these drives while they are in a low-power state, yet have that data accessible almost immediately, represents a significant shift in how architects design storage clusters to balance performance, capacity, and environmental impact.

Maximizing Density Within Power Envelopes

Beyond the obvious benefit of reduced utility bills, this power-optimized technology allows data center operators to significantly increase their storage density without upgrading their existing electrical infrastructure. Most modern server racks are governed by a strict “power envelope,” which limits the total number of devices that can be installed based on the maximum electricity the rack can safely draw and cool. Because Western Digital’s new drives lower the average power footprint per unit, operators can now pack more drives into a single rack while staying within the same energy budget. This means that a facility can increase its total petabytes of storage without needing to build new data halls or install additional cooling units. This optimization is particularly valuable in 2026, as the demand for storage continues to outpace the rate at which new power grids can be commissioned. By doing more with the same amount of electricity, organizations can maximize the return on their physical assets while simultaneously reducing their carbon footprint.
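The power-envelope argument comes down to simple arithmetic, sketched below. Every figure in the usage example (rack budget, fixed overhead, per-drive wattage, active fraction) is a hypothetical placeholder rather than a Western Digital specification, and the function names are illustrative only.

```python
def average_drive_power(active_w: float, low_power_w: float, active_fraction: float) -> float:
    """Time-weighted average draw of one drive, in watts.

    active_fraction is the share of time the drive spends fully active;
    the remainder is assumed to be spent in the low-power state.
    """
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be between 0 and 1")
    return active_fraction * active_w + (1.0 - active_fraction) * low_power_w


def drives_per_rack(rack_budget_w: float, overhead_w: float, avg_drive_w: float) -> int:
    """Whole number of drives that fit in the rack's power envelope.

    overhead_w covers everything that is not a drive (hypothetical figure).
    """
    if avg_drive_w <= 0:
        raise ValueError("avg_drive_w must be positive")
    usable_w = rack_budget_w - overhead_w
    if usable_w <= 0:
        return 0
    return int(usable_w // avg_drive_w)


# Hypothetical inputs: 10 kW rack, 2 kW overhead, 8 W active, 2 W low-power,
# drives active 25% of the time.
always_on = drives_per_rack(10_000, 2_000, 8.0)
optimized_avg = average_drive_power(8.0, 2.0, 0.25)
optimized = drives_per_rack(10_000, 2_000, optimized_avg)
print(always_on, optimized_avg, optimized)
```

Sizing a rack on average draw alone is optimistic: a real deployment would also need to confirm that the rack stays within its limit if many drives wake at the same moment.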

The implementation of this technology does not require a massive overhaul of existing software architectures, making it an attractive proposition for the world’s largest cloud providers. Hyperscalers have traditionally been cautious about adopting new drive technologies that require custom drivers or complex management layers. Western Digital has engineered this solution to be as transparent as possible to the host system, allowing the drives to handle power state transitions internally. This ease of integration has sparked genuine interest from industry leaders who are looking for immediate ways to meet sustainability targets while managing the explosive growth of data generated by the latest generation of machine learning models. As these drives undergo rigorous testing in real-world environments, the focus is shifting toward verifying long-term mechanical durability under frequent power-cycling conditions, ensuring that the energy savings do not come at the cost of the drive’s lifespan.

Strategic Implementation and Future Considerations

To capitalize on these advancements, data center architects should begin by identifying workloads characterized by bursty access patterns or long periods of dormancy. Deploying these power-optimized drives in secondary storage tiers, such as object storage and large-scale content delivery networks, can reduce operational expenses without requiring a shift to more expensive flash-based alternatives. Engineering teams should run thorough pilot programs to map the specific latency profiles of their applications against the drive’s wake-up cycles. By using predictive analytics to anticipate data access needs, organizations can move toward a model where drives are pre-woken just before a request arrives, further smoothing the transition and reducing perceptible lag. This proactive management strategy helps keep the infrastructure responsive while it operates at the lowest practical energy state.
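A pilot of the kind described above might start from two small checks: whether an application's timeout leaves enough headroom for a drive wake-up, and when a drive should be woken ahead of a predicted request. The sketch below is a minimal illustration, assuming the wake latency has been measured in the pilot. The class, the function names, the safety factor, and the margin are all hypothetical and do not correspond to any vendor API.

```python
from dataclasses import dataclass


@dataclass
class AppProfile:
    """Hypothetical description of an application's I/O tolerance."""
    name: str
    timeout_s: float  # how long the app waits before reporting an I/O error


def tolerates_wake(app: AppProfile, wake_latency_s: float, safety_factor: float = 2.0) -> bool:
    """True if the app's timeout covers the wake latency with headroom."""
    return app.timeout_s >= wake_latency_s * safety_factor


def prewake_time(predicted_access_s: float, wake_latency_s: float, margin_s: float = 0.5) -> float:
    """Time (seconds from now) at which to start waking the drive.

    The drive is woken early enough to be ready margin_s before the
    predicted access; the result is clamped at 0 (wake immediately).
    """
    return max(0.0, predicted_access_s - wake_latency_s - margin_s)


# Hypothetical pilot data: a measured wake latency of 1.0 s.
apps = [AppProfile("backup", 30.0), AppProfile("realtime-db", 1.5)]
for app in apps:
    print(app.name, tolerates_wake(app, wake_latency_s=1.0))
print(prewake_time(predicted_access_s=10.0, wake_latency_s=1.0))
```

The quality of the access prediction is the hard part and is out of scope here; a mistimed prediction either wastes energy on an early wake or exposes the full wake latency to the request.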

Moving forward, the industry must view energy efficiency as a core component of the hardware specification rather than an optional feature. As data volumes continue to scale from 2026 to 2028 and beyond, the focus will likely shift toward even deeper integration between the storage firmware and the data orchestration layer. Future developments should explore standardized communication protocols that allow the software to signal upcoming data requests to the hardware with greater granularity. For now, the transition to power-optimized mechanical storage represents a vital step in maintaining the feasibility of massive data centers. Stakeholders who prioritize the adoption of these technologies will be better positioned to handle the next wave of data expansion while mitigating the risks associated with rising energy costs and increasingly stringent environmental regulations. The shift toward intelligent, low-power mechanical storage is a necessary evolution in the quest for a sustainable and scalable digital future.