Dominic Jainy has spent the better part of two decades at the intersection of high-performance computing and disruptive technologies. As an IT professional with a deep specialization in artificial intelligence and machine learning, he has witnessed the evolution of graphics processing from the early days of simple pixel pushing to the current era of neural rendering and blockchain integration. Our discussion focuses on the ten-year legacy of the Pascal architecture, a milestone that many enthusiasts still consider the “golden age” of GPU craftsmanship. We explore how this era defined the standards for power efficiency and raw rasterization before the industry pivoted toward the AI-driven world of ray tracing and DLSS.

The following conversation touches upon the technical leaps of the Pascal series, the market disruption caused by the legendary GTX 1080 Ti, and the fundamental shift in design philosophy as the industry transitioned from the GTX to the RTX branding. We also examine the trade-offs between pure hardware power and the software-based solutions that define modern gaming today.

The Pascal series introduced clock speeds exceeding 2 GHz and significant efficiency gains over previous architectures. How did these technical leaps translate to real-world performance in titles like DOOM or The Witcher 3, and what specific architectural shifts allowed for such a massive jump in power efficiency?

When the Pascal architecture arrived, it felt like someone had finally unlocked the true potential of silicon, pushing clock speeds past that iconic 2 GHz barrier for the first time. In a fast-paced title like DOOM (2016), this translated into a visceral experience where we saw frame rates blow past the 200 FPS mark, creating a level of fluid motion that was simply unheard of at the time. For more cinematic, dense worlds like The Witcher 3, specialized features such as HairWorks hair and fur rendering were finally able to run without the massive performance penalties we had grown accustomed to. This leap wasn’t just about raw speed; the Pascal series was meticulously designed to outclass the already efficient GTX 900 “Maxwell” GPUs by getting more performance out of every watt consumed. It was a rare moment where a GPU family offered superb overclocking headroom while simultaneously maintaining unmatched levels of power efficiency, making the hardware feel remarkably cool and stable even under heavy loads.

When the GTX 1080 Ti entered the market, it disrupted the competitive landscape and largely overshadowed the release of the RX Vega series. What specific performance metrics allowed this card to maintain its dominance for years, and how did its arrival force a pivot in the industry’s design philosophy?

The arrival of the GTX 1080 Ti was a seismic event that caught the entire industry, and especially the Radeon camp, completely off guard. While the competition was busy preparing the RX Vega series to compete with the standard GTX 1080, NVIDIA dropped the Ti variant, which offered a performance leap so large that it essentially rendered the rival’s roadmap obsolete before Vega even launched. With its 11 GB of VRAM and a core that could handle almost anything thrown at it, the 1080 Ti established a level of dominance that lasted for years, forcing competitors to go back to the drawing board. It shifted the philosophy from “incremental improvements” to “overwhelming force,” proving that a flagship card could stay relevant for multiple generations if the initial craftsmanship was high enough. This card didn’t just win a benchmark war; it became a symbol of a time when top-tier GPUs provided incredible value and longevity, setting a bar that many feel hasn’t been cleared in the same way since.

Before the transition to Tensor and RT cores, GPU design focused primarily on raw compute power and high VRAM capacities. What were the practical benefits of prioritizing pure rasterization for the games of that era, and how did this focus influence the overall longevity and reliability of the hardware?

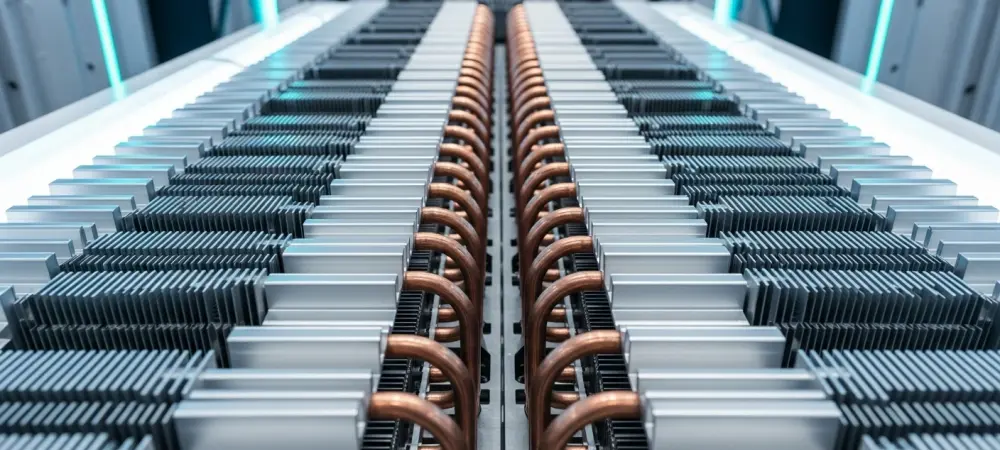

Prioritizing pure rasterization meant that nearly every transistor on the die was dedicated to the immediate task of rendering pixels and calculating geometry, leading to a very “honest” form of performance. During the Pascal era, the focus was on raising memory capacities, from the humble 2 GB on the GT 1010 to the staggering 12 GB on the Titan Xp, to pave the way for high-texture, high-fidelity gaming without the need for software tricks. This approach resulted in hardware that felt incredibly robust and reliable, as gamers didn’t have to worry about modern issues like melting power connectors or buggy upscaling artifacts. By focusing solely on adding more compute and shader capabilities, manufacturers delivered a product that aged gracefully because its power was rooted in physical hardware rather than evolving software algorithms. There was a certain peace of mind in knowing your GPU’s performance was constant and predictable, anchored by solid craftsmanship and a focus on the fundamental building blocks of graphics.

Modern titles like Cyberpunk 2077 and Alan Wake 2 now use path tracing and neural rendering to achieve visual fidelity that was once impossible. How does the immersion provided by these AI-driven technologies compare to the high-texture standards of the past, and which specific advancements feel most transformative?

The leap from the high-texture standards of the past to the path-traced environments of today is nothing short of breathtaking, even if it feels like we’ve entered a completely different world. Back in the day, Crysis was the pinnacle of visual achievement, but modern giants like Cyberpunk 2077 or Alan Wake 2 simply crush those old benchmarks in every visual respect. The immersion provided by neural rendering and path tracing creates lighting and shadows that behave much as they do in the real world, moving beyond the “baked-in” effects we used to praise. While I still feel nostalgic for the games I played ten years ago, the visual fidelity in newer titles like Pragmata or Expedition 33 demonstrates how far we have come in terms of atmospheric depth. These AI-driven technologies allow for a level of detail where light bounces off surfaces with a physical accuracy that makes the virtual world feel tangible and lived-in.

The shift from the GTX series to the RTX branding marked a fundamental change in how games are rendered and upscaled. Looking back at the craftsmanship of the Pascal era, what were the trade-offs in moving away from pure hardware power toward software-based solutions like DLSS and frame generation?

Moving away from the “pure” hardware era of Pascal toward the AI-enhanced “Turing” and subsequent RTX generations involved a significant trade-off between traditional horsepower and intelligent reconstruction. In the Pascal days, if you wanted better performance, you needed a faster clock or more shaders; today, we rely on DLSS and frame generation to bridge the gap between what the hardware can draw and what we see on the screen. This shift changed the landscape from a focus on raw, unadulterated compute power to a reliance on software-based solutions that can sometimes introduce artifacts or latency if not handled correctly. However, the benefit is that we can now achieve levels of visual quality, like real-time ray tracing, that would be impractical with brute-force rendering alone within a reasonable power budget. While we lost the “simpler times” of pure rasterization, we gained a toolkit that allows games to look like pre-rendered movies, even if it means we are no longer purely reliant on the physical silicon to do all the heavy lifting.
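
To put a rough number on that gap between what the hardware draws and what reaches the screen, here is a minimal back-of-the-envelope sketch. It is not taken from the interview or from any vendor's implementation: the function name `rendered_fraction`, the per-axis scale of 2/3, and the one-generated-frame-per-rendered-frame ratio are all illustrative assumptions.

```python
def rendered_fraction(out_w, out_h, axis_scale, generated_per_rendered=0):
    """Share of displayed pixels that are rendered conventionally.

    axis_scale: hypothetical internal-resolution scale per axis (1.0 = native).
    generated_per_rendered: hypothetical number of interpolated frames
        shown for every conventionally rendered frame.
    """
    # Internal resolution the GPU actually rasterizes before upscaling.
    internal_w = round(out_w * axis_scale)
    internal_h = round(out_h * axis_scale)
    spatial = (internal_w * internal_h) / (out_w * out_h)
    # Only one frame out of every (1 + generated) is rendered at all.
    temporal = 1 / (1 + generated_per_rendered)
    return spatial * temporal


# 4K output, upscaled from an assumed 2560x1440 internal resolution.
print(f"{rendered_fraction(3840, 2160, 2 / 3):.1%}")     # 44.4%
# Same setup with one generated frame per rendered frame.
print(f"{rendered_fraction(3840, 2160, 2 / 3, 1):.1%}")  # 22.2%
```

Under these assumed settings, well under half of the pixels on screen come from traditional rendering, which is the trade-off in a nutshell: the reconstructed remainder is where both the performance headroom and the potential artifacts or added latency come from.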

What is your forecast for the future of GPU architectures over the next decade?

I believe the next decade will see the complete blurring of the line between hardware rendering and AI-generated imagery, where the GPU becomes more of a “neural engine” than a traditional graphics processor. We will likely move away from the current model of rendering every pixel and instead transition to a system where the hardware only calculates the most essential data points while AI fills in the rest with perfect accuracy. This will allow for photorealistic environments in VR and AR that are indistinguishable from reality, powered by architectures that prioritize “intelligence per watt” over raw clock speeds. As we saw with the leap from Pascal to RTX, the focus will continue to shift toward software-defined performance, but I expect the physical craftsmanship to return to the forefront as we reach the limits of silicon miniaturization. Ultimately, the GPUs of 2034 will not just be about playing games; they will be the primary engines for personal AI assistants and real-time world simulation, making the 2 GHz breakthrough of the Pascal era look like the first flickering of a lightbulb compared to a sun.