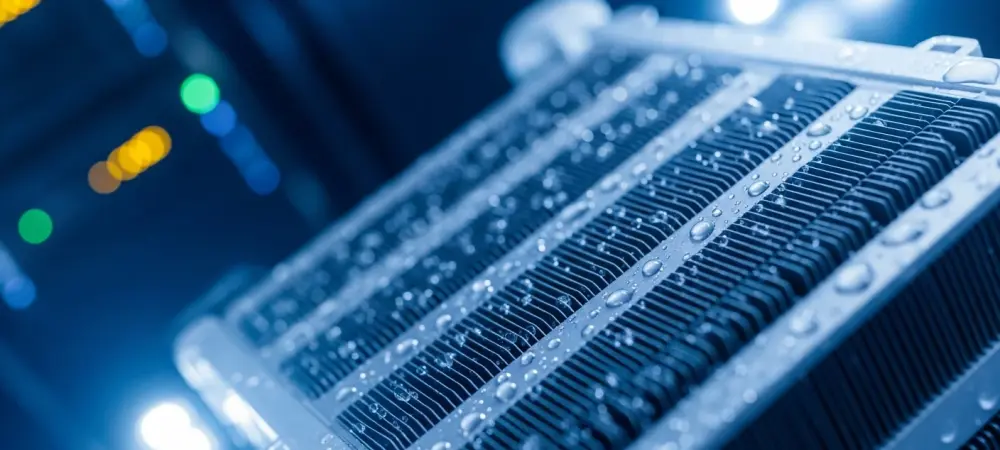

The sprawling expansion of global artificial intelligence has turned water management into the silent cornerstone of digital reliability, demanding more than simple plumbing to keep systems alive. As high-density chips generate unprecedented heat, the tech sector is reaching a breaking point where traditional cooling methods no longer suffice. This shift has elevated water from a utility expense to a critical infrastructure component, forcing a total reimagining of how data centers interact with local environments.

The Rise of Integrated Water Life Cycle Systems

1. Market Growth: The Shift to Unified Frameworks

Fragmented vendor models are rapidly giving way to integrated platforms like HyperSolved, which manage the entire water journey from sourcing to discharge. Projections indicate that the data center vertical will account for 25% of major treatment firms’ portfolios by 2027. This economic pivot reflects a move toward treating water as a strategic asset rather than a secondary concern. Moreover, the “once-in-a-generation” AI build-out is accelerating demand for containerized, modular treatment solutions. These systems allow for rapid deployment, keeping pace with the breakneck speed of server installation while ensuring that the cooling infrastructure is just as resilient as the power grid.

2. Real-World Applications: Global Deployments

Hyperscale operators are already demonstrating the efficacy of closed-loop systems in high-demand hubs like Didcot, UK. These facilities use AI-driven tools to balance sourcing and treatment within a single framework. By prioritizing reused municipal water over freshwater, companies are securing site flexibility even in water-stressed regions.

Expert Perspectives on Sustainable Scaling

1. The Infrastructure Benchmark: Lessons From History

Industry leaders frequently compare the current digital expansion to the 19th-century railroad build-out, stressing that reliability is the only metric that matters. To achieve this, water must be treated with the same accountability as power systems. Avoiding fragmentation is no longer optional; it is an operational necessity.

2. The Sustainability Mandate: Achieving ESG Standards

Industry thought leaders emphasize that low-risk scaling is the only way to meet environmental, social, and governance (ESG) standards. Experts argue that decoupling digital growth from freshwater consumption is essential for long-term viability. This transition is meant to ensure that the surge in computing power does not come at the expense of local community resources.

The Future of Data Center Utility Management

1. Evolution of Site Selection: Geography and Climate

Advanced treatment allows data centers to operate in diverse climates where freshwater is scarce or unavailable. The ability to use alternative sources means that geography is far less of a constraint for hyperscale development. Future facilities will likely feature fully autonomous, self-healing water loops that require minimal human intervention to maintain peak efficiency.

2. Broader Implications: Innovation vs. Scarcity

The tension between high-density cooling needs and global conservation efforts will define the next phase of innovation. While resource scarcity poses a significant risk, it also drives the development of more efficient, circular water economies. Navigating this balance is the primary challenge for engineers in the coming years.

Summary of Key Trends: Decoupling Growth From Consumption

The industry is shifting away from disconnected vendors toward holistic, AI-driven management frameworks. This transition is intended to keep digital expansion sustainable while protecting local ecosystems from the strain of cooling requirements. Stakeholders are moving beyond simple conservation and focusing on total water circularity as a non-negotiable standard for excellence. These advancements offer a blueprint for scaling infrastructure without exhausting vital natural resources.