Dominic Jainy brings a wealth of knowledge in scaling massive IT infrastructures, with a focus on the intersection of high-performance computing and physical data center architecture. With experience navigating the complexities of multi-billion-dollar site developments, he offers a unique perspective on how the physical world of steel and fiber meets the virtual world of artificial intelligence. Today, we explore the logistical triumphs behind the Fairwater project and the shift toward high-density, multi-story facilities on industrial brownfields.

The Fairwater site is reportedly going live ahead of schedule despite its massive scale. What specific project management strategies allow for such an accelerated timeline, and how do you successfully integrate hundreds of thousands of GB200s into a single seamless cluster while maintaining stability?

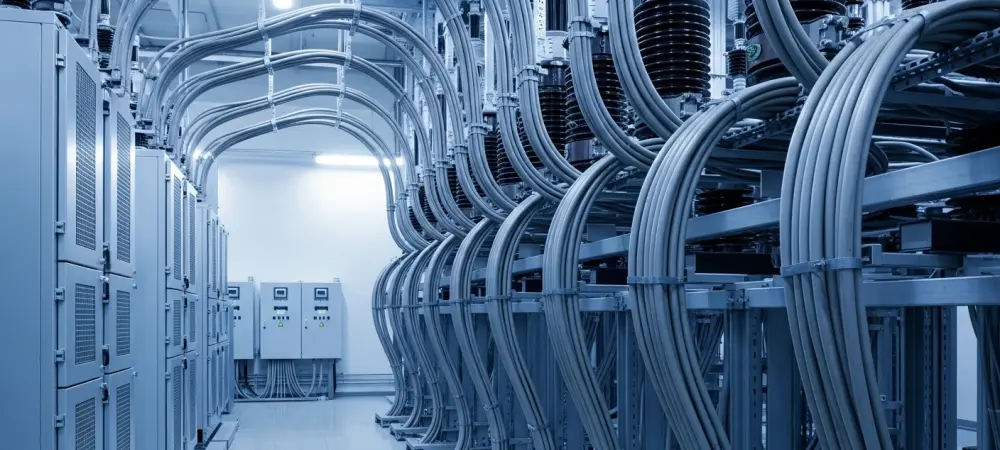

To get a project of this magnitude online early, you have to move away from sequential construction and embrace highly parallelized workflows. This involves pre-positioning critical components and using modular designs for the 1.2 million square feet of floor space, so that hardware can be rolled in the moment the shell is secured. Integrating hundreds of thousands of GB200 GPUs into a single cluster is a feat of network engineering, requiring a fabric that can handle unprecedented east-west traffic without bottlenecks. We focus heavily on thermal management and consistent power distribution to ensure that this massive density doesn’t compromise the operational stability of the world’s most powerful AI data center.

Investing $7.3 billion into a single campus requires massive quantities of structural steel and underground cabling. How do you coordinate the logistics for such a scale, and what are the primary technical trade-offs when deploying enough fiber to wrap the planet four times?

Coordinating the arrival and installation of 26.5 million pounds of structural steel requires a precision supply chain where every beam is tracked from the mill to the foundation piles. We are managing 120 miles of medium-voltage underground cable and 72.6 miles of mechanical piping simultaneously, which means the underground city must be perfectly mapped before the first slab is poured. Deploying enough fiber to wrap the planet four times over is a staggering requirement that forces us to look at high-density ribbon cables and advanced splicing techniques. The primary trade-off is between signal latency and physical space; while we need massive bandwidth for AI workloads, managing that much glass without creating signal degradation is a constant engineering challenge.
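For a sense of scale, the figures quoted above can be converted with a few lines of arithmetic. This is only a rough sketch: it assumes "wrapping the planet" means Earth's equatorial circumference of roughly 40,075 km, and the function names are illustrative rather than taken from any project tooling.

```python
# Rough scale check for the quantities quoted in the interview.
# Assumption: "wrap the planet" refers to Earth's equatorial circumference.
EARTH_EQUATORIAL_CIRCUMFERENCE_KM = 40_075  # approximate value
KM_PER_MILE = 1.609344                      # exact by definition
KG_PER_POUND = 0.45359237                   # exact by definition


def fiber_length_km(wraps: float) -> float:
    """Length of fiber needed to circle the equator `wraps` times."""
    return wraps * EARTH_EQUATORIAL_CIRCUMFERENCE_KM


def miles_to_km(miles: float) -> float:
    """Convert statute miles to kilometers."""
    return miles * KM_PER_MILE


def pounds_to_tonnes(pounds: float) -> float:
    """Convert pounds to metric tonnes."""
    return pounds * KG_PER_POUND / 1000


if __name__ == "__main__":
    fiber_km = fiber_length_km(4)
    print(f"Fiber, four wraps: about {fiber_km:,.0f} km "
          f"({fiber_km / KM_PER_MILE:,.0f} miles)")
    print(f"Structural steel: about {pounds_to_tonnes(26.5e6):,.0f} tonnes")
    print(f"Medium-voltage cable: about {miles_to_km(120):,.0f} km")
    print(f"Mechanical piping: about {miles_to_km(72.6):,.0f} km")
```

On that assumption, four wraps works out to roughly 160,000 km of fiber, which is more than 800 times the length of the medium-voltage cable run.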

Development in Mount Pleasant has expanded to over 1,000 acres with plans for fifteen additional buildings. What are the long-term infrastructure implications of this footprint, and what step-by-step processes do you follow to ensure local power grids can support this concentrated AI demand?

Expanding to a 1,000-acre footprint transforms the local landscape into a primary hub for global compute, necessitating a multi-decade view of infrastructure durability. When planning for fifteen additional buildings, we start with a deep-foundation strategy, similar to the 46.6 miles of piles used in the initial phase, to ensure the ground can support the weight of evolving server densities. Ensuring the power grid can handle this AI demand involves a step-by-step collaboration with utility providers to build dedicated on-site substations and redundant feeds. We also analyze the thermal plume of the entire campus to ensure that the cumulative heat from hundreds of thousands of GPUs doesn’t impact the efficiency of neighboring buildings.

International projects like the Skelton Grange site involve three-story data centers on former power station land. How does the engineering approach change for high-density multi-story facilities, and what metrics determine the feasibility of repurposing industrial brownfield sites for modern cloud infrastructure?

Building three-story data centers, like the 424,000-square-foot facilities planned for Leeds, requires a significant shift in structural engineering to handle the immense weight of liquid-cooling systems and battery arrays on upper floors. We move away from traditional horizontal layouts and focus on vertical power busbars and riser-based cooling distribution to make the most of the space. When repurposing a former power station site, the primary feasibility metrics are proximity to the existing grid and the cost of environmental remediation. These industrial brownfields are often ideal because they already possess the heavy-duty electrical infrastructure needed for modern operations, even if construction there doesn’t start until early 2027.

What is your forecast for AI data center expansion?

I anticipate a shift toward mega-campuses where the distinction between a data center and a power plant becomes increasingly blurred as we see more $7.3 billion investments like Fairwater. We will likely see a move toward even higher vertical density in industrial zones, with multi-story designs used to fit more compute power into smaller geographic footprints. The integration of specialized AI silicon will drive a revolution in liquid cooling and on-site energy generation to keep up with the exponential demand for training large-scale models. Ultimately, the physical infrastructure will become a primary competitive advantage, with speed to market and power density being the two most critical metrics for success in the next decade.