The global digital economy no longer runs on simple warehouses of silent servers. It runs on a living ecosystem in which every watt and every airflow pattern matters. As the pace of industry accelerates, the physical foundation of the internet is undergoing a quiet but thorough revolution. Operators are moving away from manual inspections and reactive fixes toward facilities that monitor their own health. By embedding intelligence directly into the electrical and mechanical bones of the building, the industry is turning once-inert metal and copper into a continuous stream of actionable data. The intelligent data center reflects a shift in philosophy: the facility itself becomes an active participant in uptime. In a landscape defined by high-frequency trading and autonomous systems, the “static” data center has become a liability, and the conversation now centers on how a building can sense a problem before it becomes a failure. This transformation marks the convergence of physical durability and digital intelligence, so that the infrastructure supporting our digital lives is as sophisticated as the code it hosts.

The End of the “Dumb” Data Center

The era of the passive warehouse, where servers sat in unmonitored racks and cooling was a matter of guesswork, has officially ended. Today, a single localized hot spot caused by a high-density artificial intelligence chip can jeopardize millions of dollars in hardware within seconds. This reality has forced a move away from the “dumb” data center toward environments where every component is vocal about its status. The physical layer is no longer just a shell; it is a networked entity that provides real-time feedback on its internal environment, moving the industry toward a state of total awareness.

Modern facilities use these data streams to replace the labor-intensive walkthroughs that once defined data center management. Instead of technicians checking gauges once a shift, sensors embedded in the infrastructure now report conditions continuously. This shift turns the entire building into a digital asset and offers a level of transparency that was previously impossible. By making the invisible visible, such as the micro-vibrations in a cooling pump or rising resistance in a power cable, operators can maintain peak performance without routine trips onto the data hall floor.

Furthermore, this intelligence allows the facility to adapt to the workload it carries. When a cluster of servers ramps up for a massive computational task, the surrounding infrastructure responds in kind. The transition from a static to a sentient environment means that the physical building finally moves at the speed of software. This adaptability is the hallmark of the modern era, where the infrastructure is no longer a bottleneck but a facilitator of digital innovation and reliability.

Why Intelligent Foundations Are Non-Negotiable in the AI Era

The surge in high-performance cloud computing and artificial intelligence has pushed power densities to their limits. In this high-stakes environment, the gap between “static” infrastructure, where parts are replaced on a calendar schedule, and “dynamic” infrastructure, where parts are serviced according to their measured condition, is the difference between seamless uptime and catastrophic failure. As power draw per rack climbs to unprecedented levels, traditional methods of thermal management and power distribution are proving insufficient. Continuous visibility into voltage fluctuations and airflow patterns has shifted from a luxury to a baseline requirement.

As facilities grow larger, and are often sited in remote locations to take advantage of renewable energy, the ability to manage them from afar becomes essential. Intelligent foundations bridge the physical site and the remote operations center, so that distance does not compromise oversight. Without these smart systems, the risk of cascading failures rises sharply, because operators have no reliable way to detect the early warning signs of heat soak or electrical fatigue in a high-density environment.

The economic implications of this shift are equally profound. In the AI era, downtime is measured not just in lost minutes, but in lost competitive advantage and massive financial penalties. By adopting an intelligent foundation, organizations can ensure that their physical assets are utilized to their maximum potential without crossing the line into failure. This precise management of resources allows for higher density and better performance, directly impacting the bottom line of the modern enterprise.

Breaking Down the Digital-Physical Convergence

Modern data center design now prioritizes converting physical variables into digital insights at the source. This transformation is visible across several layers of the infrastructure, beginning with granular visibility at the rack level. Sensors and smart relays embedded in power distribution units (PDUs) and enclosures let operators pinpoint thermal anomalies down to the individual rack and, where server telemetry is integrated, the individual chip. This level of detail ensures that cooling resources are directed exactly where they are needed, preventing energy waste and protecting sensitive components from heat damage.

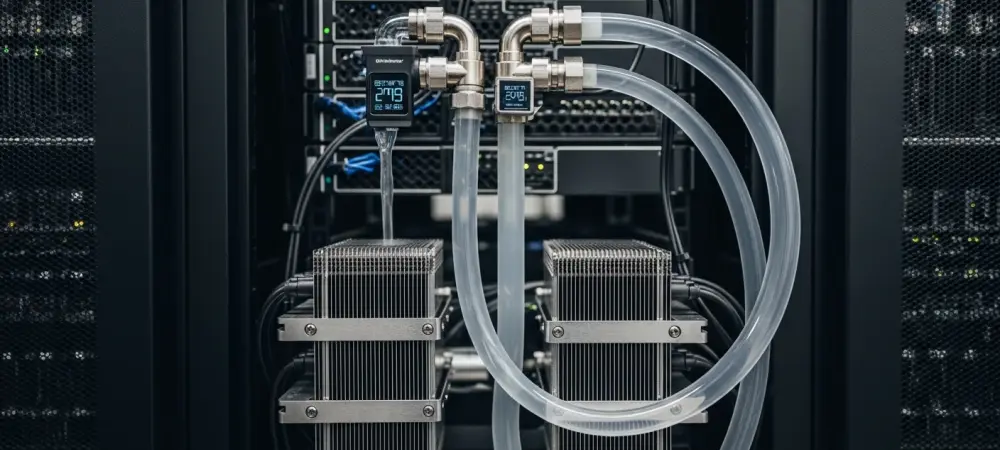
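To make rack-level hot-spot detection concrete, here is a minimal Python sketch. It assumes inlet-temperature readings are already being collected per rack (the rack IDs and values are hypothetical) and flags a rack if it exceeds an absolute limit or runs well above the median of the hall. The 27 °C limit borrows the upper end of ASHRAE's recommended inlet range as an illustrative assumption; the 3 °C margin is arbitrary.

```python
from statistics import median

# Illustrative thresholds only; real limits come from the hardware vendor's
# specification and the facility's own operating envelope.
ABSOLUTE_LIMIT_C = 27.0   # assumption: upper end of ASHRAE's recommended inlet range
RELATIVE_MARGIN_C = 3.0   # hypothetical: how far above the hall median counts as a hot spot


def find_hot_spots(inlet_temps_c: dict[str, float]) -> list[str]:
    """Return sorted rack IDs whose inlet temperature looks anomalous.

    A rack is flagged if it exceeds the absolute limit, or if it runs
    noticeably hotter than the median of all racks in the hall.
    """
    if not inlet_temps_c:
        return []
    hall_median = median(inlet_temps_c.values())
    flagged = [
        rack
        for rack, temp in inlet_temps_c.items()
        if temp > ABSOLUTE_LIMIT_C or temp - hall_median > RELATIVE_MARGIN_C
    ]
    return sorted(flagged)


# Hypothetical snapshot of rack inlet temperatures (degC).
readings = {"A01": 22.5, "A02": 23.0, "A03": 26.8, "B01": 22.8, "B02": 28.1}
print(find_hot_spots(readings))  # ['A03', 'B02']
```

Here B02 breaches the absolute limit, while A03 is still under it but runs 3.8 °C above the hall median, which is often the earlier sign of a localized airflow problem.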

As liquid cooling becomes standard for intensive workloads, IoT-enabled thermal controls manage closed-loop systems with high precision. These systems do not just move fluid; they interpret sensor data to prevent hardware degradation and optimize the cooling cycle. This precision allows a much tighter thermal equilibrium, which is critical given the rapidly shifting heat output of modern processors. Because the sensors sit directly in the cooling loop, the system can respond to changes automatically and far faster than any manual adjustment.
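Closed-loop control of this kind is often built on a proportional-integral (PI) controller. The sketch below illustrates the idea under stated assumptions and is not any vendor's implementation: it drives a hypothetical pump-speed setting to hold coolant return temperature at a setpoint, against a toy thermal model whose constants (heat gain, cooling coefficient, controller gains, nominal pump speed) are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class PIController:
    """Proportional-integral controller with output clamping (simple anti-windup)."""
    kp: float            # proportional gain (% pump speed per degC of error)
    ki: float            # integral gain (% pump speed per degC*second)
    setpoint: float      # target coolant return temperature, degC
    bias: float = 50.0   # hypothetical nominal pump speed, %
    out_min: float = 0.0
    out_max: float = 100.0
    integral: float = 0.0

    def update(self, measurement: float, dt: float) -> float:
        # Positive error: the loop is hotter than the target, so pump harder.
        error = measurement - self.setpoint
        candidate = self.integral + error * dt
        output = self.bias + self.kp * error + self.ki * candidate
        # Commit the integral only while the actuator is unsaturated, so the
        # integral term cannot "wind up" while the pump is pinned at a limit.
        if self.out_min <= output <= self.out_max:
            self.integral = candidate
        return max(self.out_min, min(self.out_max, output))


def simulate(steps: int = 600, dt: float = 1.0) -> tuple[float, float]:
    """Run the controller against a toy first-order thermal model.

    Hypothetical model: the IT load adds heat at a constant rate, and the
    loop removes heat in proportion to pump speed times the difference
    between return and supply coolant temperature.
    """
    supply_temp = 20.0     # degC, assumed constant
    heat_gain = 2.0        # degC/s added by the load (assumed)
    cooling_coeff = 0.5    # heat-removal coefficient (assumed)
    temp = 35.0            # start hot
    ctrl = PIController(kp=4.0, ki=0.4, setpoint=30.0)
    pump = ctrl.bias
    for _ in range(steps):
        pump = ctrl.update(temp, dt)
        d_temp = heat_gain - cooling_coeff * (pump / 100.0) * (temp - supply_temp)
        temp += d_temp * dt  # forward-Euler step of the toy model
    return temp, pump


final_temp, final_pump = simulate()
print(f"return temp {final_temp:.2f} degC at pump speed {final_pump:.1f}%")
```

The integral term is what lets the loop settle on the setpoint even though the nominal pump speed (50%) differs from the roughly 40% this toy load actually needs; the clamp keeps that term from winding up if the pump ever saturates at 0% or 100%.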

The industry is also moving away from off-the-shelf components toward bespoke, engineering-led solutions that support this convergence, including tailored enclosures with transparent inspection panels and precision-drilled wiring paths designed for specific high-density configurations. These intelligent components are built to withstand electrical noise and moisture while complying with hazardous-substance regulations such as the EU's RoHS and REACH, so the “brains” of the system are as rugged as the hardware they control.

Driving Reliability Through Predictive Analytics and Expert Insights

The most significant operational leap IoT provides is the move from a “break-fix” model to predictive maintenance. By analyzing historical performance data alongside real-time inputs, systems can flag a failing power relay or a rising temperature gradient before it causes a failure. Industry experts point out that this proactive stance greatly reduces the risk of cascading failures in mission-critical environments. The shift also depends on sensors with high Ingress Protection (IP) ratings, which keep them functional even as liquid cooling brings fluid into the data hall.
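The article does not say which analytics are used. As a deliberately simple stand-in, the sketch below fits an ordinary least-squares line to recent readings, for example the temperature of a relay's contacts, and extrapolates it to estimate how long until a threshold is crossed. The sample data and the 70 °C threshold are hypothetical, and production systems may use considerably richer models.

```python
from typing import Optional


def hours_until_threshold(samples: list[tuple[float, float]], threshold: float) -> Optional[float]:
    """Estimate hours until a rising reading crosses `threshold`.

    samples: (hour, reading) pairs from one sensor.
    Fits a least-squares line and extrapolates it forward from the latest sample.
    Returns None if there is too little data or the trend is flat or falling,
    and 0.0 if the fitted line is already past the threshold.
    """
    n = len(samples)
    if n < 2:
        return None
    mean_t = sum(t for t, _ in samples) / n
    mean_y = sum(y for _, y in samples) / n
    sxx = sum((t - mean_t) ** 2 for t, _ in samples)
    if sxx == 0:
        return None  # all samples share one timestamp; no trend to fit
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in samples)
    slope = sxy / sxx
    if slope <= 0:
        return None  # not trending toward the threshold
    intercept = mean_y - slope * mean_t
    crossing_t = (threshold - intercept) / slope
    latest_t = max(t for t, _ in samples)
    return max(0.0, crossing_t - latest_t)


# Hypothetical hourly readings from a relay-contact temperature sensor (degC).
samples = [(0, 60.0), (1, 60.5), (2, 61.0), (3, 61.5), (4, 62.0)]
print(hours_until_threshold(samples, threshold=70.0))  # 16.0
```

A result such as 16 hours gives the team room to schedule a replacement in the next maintenance window rather than responding to an outage.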

This predictive capability turns the data center engineer from a firefighter into a strategist. Instead of reacting to alarms, the team uses data to schedule maintenance during planned windows, replacing components that show early signs of wear long before they can cause an outage. This approach reduces the opportunities for human error, which remains one of the leading causes of data center downtime. By relying on measured condition rather than fixed calendar schedules, the facility reaches a higher standard of reliability.

Moreover, combining expert insight with automated data streams creates a powerful feedback loop. When a sensor detects an anomaly, the reading can be compared against a broader database of performance patterns to help characterize the risk. This allows a more nuanced response, such as shifting workloads to another part of the facility while a specific cooling unit is tuned, and keeps the data center a stable platform for critical applications.

Strategies for Implementing a Smart Infrastructure Framework

Transitioning to an IoT-enabled physical infrastructure requires a structured approach to integration and scaling. The journey begins with a comprehensive audit for data gaps, identifying points in the power and cooling chain where manual monitoring is still the primary source of information. Pinpointing these blind spots lets organizations prioritize where to install the first wave of intelligent hardware, so that the most critical components are brought into the digital fold first and deliver the earliest return on the investment.

The next phase moves beyond catalog buying toward collaboration with specialized engineering partners. These experts help design customized enclosures and distribution units that fit unique facility layouts, placing IoT sensors where they are most effective.

Establishing a predictive maintenance loop is also vital. Integrating IoT data streams into central management software makes it possible to generate automated alerts for hardware that deviates from normal operating parameters, giving technicians a clear path to follow when an issue is detected.

Finally, sustainability becomes a core part of the framework. Through micro-adjustments in cooling and power draw, facilities can maintain thermal equilibrium with minimal energy expenditure, cutting both carbon footprint and operating costs and showing that intelligence serves environmental goals as well as operational ones. Together, these strategies give organizations a blueprint for the transition to a smart framework, so that as digital demand grows, the physical infrastructure is ready to meet it with precision and resilience.
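Automated alerts for hardware that deviates from normal operating parameters can be sketched with a rolling baseline: keep the most recent readings from a sensor and raise an alert when a new reading sits too many standard deviations from their mean. This is one common technique, not necessarily what any particular management platform uses; the window size, z-score limit, noise floor, and the pump-vibration values below are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


class DeviationAlarm:
    """Flag readings that deviate from a rolling baseline of recent values."""

    def __init__(self, window: int = 30, z_limit: float = 3.0, min_std: float = 0.1):
        self.history = deque(maxlen=window)  # recent "normal" readings
        self.z_limit = z_limit               # std devs from the mean that count as abnormal
        self.min_std = min_std               # floor so a very steady signal doesn't alarm on noise

    def check(self, value: float) -> bool:
        """Return True if `value` is abnormal relative to the baseline."""
        alarm = False
        if len(self.history) == self.history.maxlen:  # only judge once the baseline is full
            baseline = list(self.history)
            mu = mean(baseline)
            sigma = max(pstdev(baseline), self.min_std)
            alarm = abs(value - mu) / sigma > self.z_limit
        # Keep alarming readings out of the baseline, so a developing fault
        # cannot gradually redefine what "normal" looks like.
        if not alarm:
            self.history.append(value)
        return alarm


# Hypothetical pump-vibration readings (mm/s) during normal operation.
alarm = DeviationAlarm(window=30, z_limit=3.0)
for reading in [2.0, 2.1, 1.9] * 10:
    alarm.check(reading)      # fills the baseline; no alerts while warming up
print(alarm.check(2.2))       # False: small wobble
print(alarm.check(3.5))       # True: large jump
```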