The rapid proliferation of public generative artificial intelligence models across the global workforce has forced modern enterprises to fundamentally rethink how they manage and protect their proprietary digital knowledge assets. In 2026, the initial fascination with general-purpose chatbots has been replaced by a rigorous commitment to internal governance and data integrity. Organizations are witnessing a significant pushback against the use of unverified external models, which often provide inconsistent or factually incorrect information. Instead, the focus has shifted toward building bespoke AI assistants that operate within a controlled corporate sandbox. These tools are designed to serve as the primary interface for employees who need immediate, accurate answers when interacting with clients or managing complex internal workflows. By prioritizing authorized intelligence over public convenience, businesses are effectively insulating themselves from the legal and reputational risks associated with AI-generated misinformation. This strategic pivot ensures that every piece of advice given to a customer is backed by verified data, rather than the unpredictable patterns of a model trained on the open internet.

Transitioning to Component-Based Content Architectures

Legacy systems that rely on static, monolithic documents like hundred-page PDF manuals or long-form training modules are increasingly viewed as obstacles to efficient AI integration. To feed an intelligent assistant effectively, content must be re-engineered into a component-based architecture where information exists as modular, reusable topics often referred to as learning nuggets. This transition allows the AI to parse through highly specific data points rather than struggling to summarize an entire chapter to find one relevant sentence. For instance, a technical description of a product feature is no longer buried in a single manual; instead, it resides in a central repository as a discrete entity that can be pulled for a sales pitch, a troubleshooting guide, or an automated support response. By adopting this granular approach, companies are ensuring that their content is inherently machine-readable, which significantly reduces the latency between a user’s query and a precise, context-aware answer from the generative system.
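To make the idea of a discrete, reusable topic concrete, here is a minimal sketch of a component repository. It is illustrative only: the class names, field names, and sample content are assumptions made for this example, not part of any particular content management product.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ContentComponent:
    """One modular topic ("learning nugget"). All field names are hypothetical."""
    component_id: str
    title: str
    body: str
    tags: frozenset = field(default_factory=frozenset)


class ComponentRepository:
    """In-memory stand-in for a central content repository."""

    def __init__(self):
        self._components = {}

    def add(self, component):
        self._components[component.component_id] = component

    def get(self, component_id):
        return self._components[component_id]

    def find_by_tag(self, tag):
        # Return components carrying the tag, sorted by id for stable output.
        return sorted(
            (c for c in self._components.values() if tag in c.tags),
            key=lambda c: c.component_id,
        )


repo = ComponentRepository()
repo.add(ContentComponent(
    component_id="feature-autosave",
    title="Autosave",
    body="Autosave stores a draft every 30 seconds.",
    tags=frozenset({"feature", "editor"}),
))
repo.add(ContentComponent(
    component_id="troubleshoot-login",
    title="Login failures",
    body="Reset the password from the sign-in page.",
    tags=frozenset({"troubleshooting"}),
))

# The same component is pulled for two different deliverables.
feature = repo.get("feature-autosave")
sales_pitch = f"Never lose work: {feature.body}"
support_reply = f"About {feature.title}: {feature.body}"
```

Because the feature description lives in one place, the sales pitch and the support reply cannot drift apart; both are assembled from the same stored text.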

This modularity also facilitates the rise of multi-modal customer experiences in which a single verified content component powers several media formats at once, without manual rework. In the current operational environment, a “topic” regarding a safety protocol can be automatically transformed into an interactive video script, a real-time notification for a field technician, or a voice-over script for an automated training assistant. This versatility keeps the information consistent across all touchpoints, whether the customer is reading a text message or watching a generated instructional clip. Furthermore, when a procedure changes, a content manager only needs to update the single source component to synchronize the entire digital ecosystem. This capability provides a level of scalability that was impractical when updates required manual revision of multiple documents and video files. The shift toward these dynamic assets represents a fundamental change in how corporate knowledge is maintained, turning static libraries into living datasets that evolve in real time.
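The single-source pattern described above might look something like the following sketch. The renderer functions, the output formats, and the safety text are all hypothetical; a real pipeline would hand the text to video or speech tooling rather than simple string templates.

```python
# Hypothetical single-source store: component id -> verified text.
components = {"safety-lockout": "Disconnect power before opening the panel."}


def render_notification(text):
    # Short alert for a field technician.
    return f"ALERT: {text}"


def render_video_script(text):
    # Narration line for a generated instructional clip.
    return f"[NARRATOR] {text}"


def render_voice_over(text):
    # Line for an automated training assistant.
    return f"VOICE: {text}"


RENDERERS = {
    "notification": render_notification,
    "video_script": render_video_script,
    "voice_over": render_voice_over,
}


def render_all(component_id):
    """Render every output format from the one source component."""
    text = components[component_id]
    return {name: render(text) for name, render in RENDERERS.items()}


before = render_all("safety-lockout")

# A content manager edits the source once; every format picks up the change.
components["safety-lockout"] = (
    "Disconnect power and wait 60 seconds before opening the panel."
)
after = render_all("safety-lockout")
```

The renderers never store their own copy of the text, so a single update to the source is enough to bring every format back in sync.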

Optimizing Technical Infrastructure and Metadata Standards

The efficacy of an AI-driven knowledge base is fundamentally tied to the quality of the metadata and indexing strategies that govern its retrieval processes. Without comprehensive descriptive tags, even the most sophisticated generative models can struggle to find the exact piece of information required to satisfy a complex customer request. Consequently, there is an industry-wide push to elevate tagging from a secondary administrative task to a core competency within technical and marketing teams. These tags include not only keywords but also parameters regarding the target audience, the specific product version, and the regulatory environment relevant to the information. This rigorous metadata application creates a structured map that allows the AI to navigate vast enterprise ecosystems with high precision. Moreover, this cultural shift ensures that every piece of content created is born with the necessary identifiers to be discovered and utilized by automated systems, thereby maximizing the return on investment for every hour spent on original research and data development.
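As a rough illustration of how such tags narrow retrieval, the sketch below filters a small catalog by keyword, audience, product version, and regulatory jurisdiction. The field names, the sample entries, and the exact-match rule are assumptions chosen for brevity; production systems typically combine metadata filters with full-text or vector search.

```python
from dataclasses import dataclass


@dataclass
class TaggedComponent:
    """A content component with hypothetical metadata fields."""
    component_id: str
    body: str
    keywords: set
    audience: str
    product_version: str
    jurisdiction: str


def retrieve(components, keyword, audience, product_version, jurisdiction):
    """Return ids of components whose metadata matches every filter."""
    return [
        c.component_id
        for c in components
        if keyword in c.keywords
        and c.audience == audience
        and c.product_version == product_version
        and c.jurisdiction == jurisdiction
    ]


# Placeholder catalog: three warranty topics that differ only in metadata.
catalog = [
    TaggedComponent("warranty-eu-v2", "Warranty terms, EU, version 2.0.",
                    {"warranty"}, "customer", "2.0", "EU"),
    TaggedComponent("warranty-us-v2", "Warranty terms, US, version 2.0.",
                    {"warranty"}, "customer", "2.0", "US"),
    TaggedComponent("warranty-eu-v1", "Warranty terms, EU, version 1.0.",
                    {"warranty"}, "customer", "1.0", "EU"),
]

matches = retrieve(catalog, "warranty", "customer", "2.0", "EU")
```

A keyword search alone would return all three warranty topics; the version and jurisdiction tags are what let the assistant select the one that applies.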

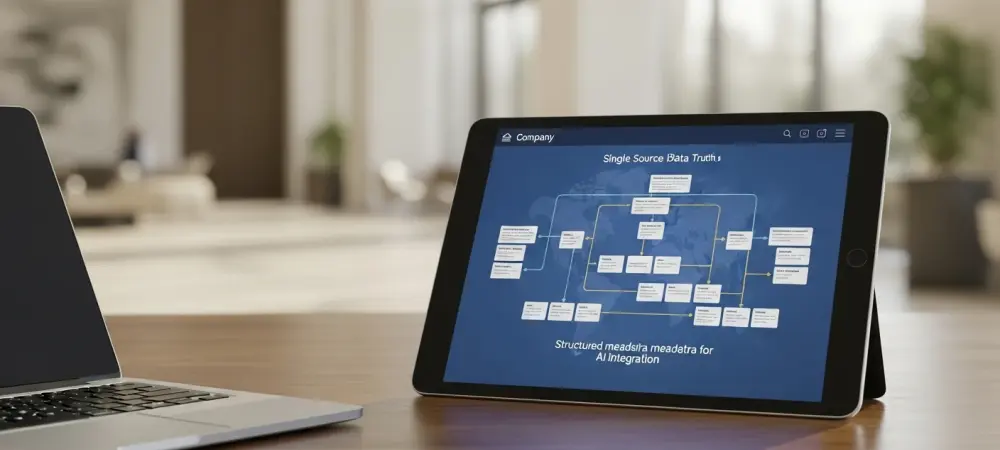

Beyond the organization of individual data points, the physical and logical storage of information must be unified through advanced aggregation layers and modern Enterprise Content Management systems. Historically, critical company data has remained trapped in disparate silos, such as marketing databases, technical documentation repositories, and separate human resources platforms. To overcome these barriers, organizations are implementing robust application programming interfaces and out-of-the-box connectors that bridge these gaps, creating a single source of truth for the AI to query. This unified pipeline eliminates the friction of fragmented information and ensures that the AI does not provide conflicting answers based on which database it happens to access first. By centralizing the governance of these data flows, IT departments can monitor how information is consumed and pinpoint exactly where gaps in the knowledge base exist. This infrastructure-first approach provides the necessary stability for deploying AI at scale, turning a collection of disconnected files into a cohesive engine for operational intelligence.
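One way to picture an aggregation layer is as a thin router in front of per-silo connectors. The sketch below is a simplified assumption of how that could work: connectors are consulted in a fixed priority order so the answer does not depend on which store responds first, and every lookup is logged so unanswered queries surface as knowledge gaps. The class names and sample records are hypothetical.

```python
class DictConnector:
    """Hypothetical connector wrapping one silo, here just a dict."""

    def __init__(self, name, records):
        self.name = name
        self._records = records

    def fetch(self, key):
        return self._records.get(key)


class AggregationLayer:
    """Queries connectors in a fixed priority order and logs each lookup."""

    def __init__(self, connectors):
        self._connectors = list(connectors)
        self.query_log = []  # entries are (key, source name or None)

    def query(self, key):
        for connector in self._connectors:
            value = connector.fetch(key)
            if value is not None:
                self.query_log.append((key, connector.name))
                return value
        self.query_log.append((key, None))
        return None

    def gaps(self):
        # Keys that no silo could answer: candidate gaps in the knowledge base.
        return [key for key, source in self.query_log if source is None]


layer = AggregationLayer([
    DictConnector("technical_docs",
                  {"reset-procedure": "Hold the button for 5 seconds."}),
    DictConnector("marketing",
                  {"reset-procedure": "Resetting is easy!",
                   "tagline": "Built to last."}),
])

answer = layer.query("reset-procedure")  # technical_docs wins by priority
layer.query("return-policy")             # no silo has this key
```

The explicit ordering is the design choice that matters here: when two silos disagree, the layer resolves the conflict the same way every time, and the log gives IT a record of which source served each answer.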

Evolving Roles: From Content Creation to Expert Curation

As generative artificial intelligence matures into its role as the primary engine for drafting initial communications, the traditional job description of the content creator is being replaced by the content curator. These professionals no longer spend the majority of their time writing first drafts from scratch; instead, they focus on the strategic assembly and verification of the modular components that fuel the AI. This new role involves managing complex content stores to ensure there is no redundancy and that every piece of information aligns with the current brand voice and legal requirements. Curators act as the final gatekeepers of “authorized intelligence,” ensuring that the automated outputs are not just technically accurate but also contextually appropriate for the end user. This transition requires a significant mental shift, moving away from a focus on individual creativity toward a broader perspective on information management and systemic consistency. By overseeing the entire content lifecycle, curators maintain the high standards that differentiate premium brands from those relying on unvetted AI tools.

The quality control measures required in this new era are significantly more rigorous than the peer reviews of the past because generative AI can bypass traditional verification stages. Curators are now responsible for implementing automated factual verification pipelines and cross-referencing AI outputs against sanctioned internal datasets. They are tasked with identifying informational gaps that the AI might attempt to fill with hallucinations, proactively creating the missing “nuggets” to ensure the system never has to guess. Furthermore, these specialists are increasingly involved in the feedback loop between the end-user and the data source, analyzing which content components are performing well and which ones need refinement based on real-world interactions. This active management of the information supply chain ensures that the AI remains a reliable asset for both employees and customers. Ultimately, the move toward curation reflects a maturing understanding of AI’s limitations, placing human expertise at the center of a technological framework that prioritizes safety, accuracy, and long-term brand equity.
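A deliberately simple sketch of such a verification step is shown below. It assumes the AI draft has already been broken into (topic, statement) pairs and that the sanctioned dataset maps each topic to one approved statement; both assumptions, along with the function names and sample data, are hypothetical. Real pipelines would need far more tolerant matching than the normalized string comparison used here.

```python
def normalize(text):
    # Lowercase and collapse whitespace so trivial differences do not matter.
    return " ".join(text.lower().split())


def verify_claims(claims, sanctioned):
    """Sort (topic, statement) claims into verified, conflicts, and gaps."""
    report = {"verified": [], "conflicts": [], "gaps": []}
    for topic, statement in claims:
        approved = sanctioned.get(topic)
        if approved is None:
            # No approved nugget exists, so the model had nothing to ground on.
            report["gaps"].append(topic)
        elif normalize(statement) == normalize(approved):
            report["verified"].append(topic)
        else:
            # The draft differs from the approved text and needs human review.
            report["conflicts"].append(topic)
    return report


sanctioned_facts = {
    "battery-life": "The battery lasts 10 hours.",
    "warranty": "The warranty covers parts and labor.",
}

draft_claims = [
    ("battery-life", "the battery lasts  10 hours."),
    ("warranty", "The warranty covers accidental damage."),
    ("water-resistance", "The device is waterproof."),
]

report = verify_claims(draft_claims, sanctioned_facts)
```

The "gaps" list is the part that feeds the curator's backlog: each topic in it marks a missing nugget that should be written and approved before the assistant is asked about it again.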

Strategic Next Steps: Moving Toward Authorized Intelligence

The journey toward mastering generative artificial intelligence through governed content reached a critical milestone as organizations moved away from experimental chat interfaces. Leaders in the field implemented structured data models that allowed their AI systems to draw from verified internal sources, substantially reducing the risks associated with public models. This transition was supported by a heavy investment in metadata standards and the unification of previously disconnected data silos. Content teams embraced their new roles as curators, focusing on the precision and modularity of information rather than the sheer volume of output. These actions collectively established a robust foundation for what is now known as authorized intelligence, a framework where every interaction is grounded in verified corporate data. By prioritizing the integrity of the underlying data, businesses avoided the pitfalls of misinformation and strengthened their relationships with both employees and clients. The focus on governance proved to be the deciding factor in whether AI became a source of liability or a catalyst for growth.