The traditional architecture of global intelligence is currently undergoing a radical relocation as the primary engines of artificial intelligence begin their ascent from the overburdened power grids of the Earth to the pristine vacuum of Low Earth Orbit. This migration is not merely a technical experiment but a fundamental reimagining of how a digital economy functions when terrestrial constraints such as land availability, water for cooling, and fossil-fuel dependency become insurmountable barriers to progress. India, a nation that has historically bypassed several stages of traditional industrialization to embrace digital-first solutions, is now positioning its domestic aerospace and AI sectors to lead this orbital transition. By leveraging the unique physical properties of space, the country seeks to resolve the persistent infrastructure bottlenecks that threaten to cap the expansion of generative AI and real-time autonomous systems.

The move toward orbital compute facilities marks the beginning of an era where the metaphorical “cloud” becomes a literal physical reality. As of early 2026, the global market has begun to recognize that the sheer volume of data processing required for the next generation of intelligence cannot be sustained by ground-based infrastructure alone. Terrestrial datacentres are increasingly viewed as environmental liabilities, consuming vast quantities of natural resources while struggling to provide the millisecond-scale response times necessary for global edge computing. India’s strategic focus on space-based hardware represents a bid for a new kind of technological sovereignty, one that ensures national data security and high-performance compute capabilities remain independent of the geopolitical and physical vulnerabilities inherent to the Earth’s surface.

Bridging the Gap Between Earthly Constraints and Orbital Opportunities

The trajectory of data processing has always been a relentless struggle against the physical limitations of the material world. For decades, the industry accepted that datacentres must be tethered to the ground, requiring massive physical footprints and constant proximity to high-capacity energy grids. However, the current landscape has reached a critical inflection point where the depletion of terrestrial resources and the surging demand for immediate AI processing necessitate a departure from this historical model. The convergence of satellite miniaturization and the commercialization of heavy-lift launch vehicles has finally made it possible to consider the vacuum of space as a viable environment for heavy-duty computation.

These background factors are significant because they mark a shift from viewing space primarily as a medium for communication to viewing it as a medium for processing. In the past, satellites acted merely as relays, passing data from one terrestrial point to another with minimal onboard manipulation. The modern paradigm moves the actual “brain” of the network into orbit. This evolution allows for a decentralized digital architecture that remains resilient against natural disasters, terrestrial power outages, and localized infrastructure failures. For India, understanding this historical transition is vital for recognizing why proponents regard the move to space as the logical next step for its burgeoning digital economy.

Redefining Efficiency and Performance in the Vacuum

The Thermodynamics of Space-Based Computation: A New Efficiency Standard

A primary driver for relocating AI workloads to orbit is the resolution of the deepening energy and cooling crisis currently plaguing terrestrial facilities. On the ground, less efficient datacentres can spend a third or more of their total energy on cooling and other overhead rather than on computation, and the industry as a whole draws billions of gallons of water for evaporative cooling. Space, by contrast, offers an environment where solar energy is abundant and the thermal management process can be entirely redefined. Because a vacuum rules out convection, an orbital facility must reject its heat by radiation: radiator panels shed excess thermal energy into the void of space as infrared light, with no evaporative water loss and no fans.
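To give a sense of scale for radiative cooling, the sketch below applies the Stefan-Boltzmann law to estimate how much radiator area a given heat load would need. It is an idealized illustration, not a design method: the function name, the emissivity of 0.9, the two-sided panel, and the 3 K deep-space sink are all assumptions, and the model ignores heat absorbed from sunlight and from the Earth.

```python
# Stefan-Boltzmann constant in W / (m^2 K^4)
SIGMA = 5.670374419e-8


def radiator_area_m2(heat_load_w, radiator_temp_k,
                     emissivity=0.9, sink_temp_k=3.0, sides=2):
    """Estimate the flat-panel area needed to reject heat purely by radiation.

    Assumptions (illustrative only): uniform panel temperature, a cold
    deep-space sink, and no absorbed sunlight or Earth infrared.
    """
    if heat_load_w < 0:
        raise ValueError("heat load must be non-negative")
    # Net radiated power per square metre of emitting surface.
    net_flux = emissivity * SIGMA * (radiator_temp_k ** 4 - sink_temp_k ** 4)
    if net_flux <= 0:
        raise ValueError("radiator must be warmer than its surroundings")
    # A panel that radiates from both faces needs half the physical area.
    return heat_load_w / (net_flux * sides)


if __name__ == "__main__":
    # Hypothetical 100 kW compute module with radiators held at 300 K.
    area = radiator_area_m2(100_000, 300.0)
    print(f"Radiator area: {area:.1f} m^2")
```

Under these assumptions a 100 kW module needs on the order of 120 square metres of two-sided radiator. Because radiated power scales with the fourth power of temperature, running the panels hotter shrinks the required area quickly, which is one reason radiator materials matter so much for compute density.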

This shift in thermal management allows for a theoretical Power Usage Effectiveness (PUE) that approaches a perfect 1.0. This metric, which represents the ratio of total energy used to the energy delivered to the compute hardware, is a target that terrestrial facilities can never truly reach due to the inherent inefficiency of atmospheric cooling. In an orbital setting, almost every watt of harvested solar power is directed toward actual AI inferencing rather than the auxiliary systems required to prevent hardware meltdowns. For a nation like India, which faces significant power stability challenges in various regions, this orbital efficiency provides a sustainable path toward scaling AI without further straining the domestic energy grid.
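The PUE ratio described above is simple enough to compute directly. The following sketch uses hypothetical function names and made-up energy figures purely to show how the metric behaves; it is not drawn from any specific facility.

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / energy reaching IT hardware."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_fraction(pue_value):
    """Share of total energy spent on cooling and other overhead: 1 - 1/PUE."""
    if pue_value < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 - 1.0 / pue_value


if __name__ == "__main__":
    # Hypothetical facility: 1,500 kWh drawn in total, 1,000 kWh reaching the servers.
    value = pue(1500, 1000)
    print(f"PUE = {value:.2f}, overhead share = {overhead_fraction(value):.0%}")
```

A PUE of 2.0 means half of all energy goes to overhead, while a PUE of exactly 1.0 means none does, which is why the metric can approach 1.0 but never fall below it.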

Latency and the Mandate for Real-Time Inference: Scaling Global Connectivity

As the AI industry transitions from the energy-intensive training of large models toward the “inference” phase, the speed at which a model responds to a query becomes the most critical performance metric. Traditional terrestrial networks are often slowed by routing delays, congestion, and long fiber paths that can push round-trip latencies to 100 milliseconds or more, a delay that is unacceptable for time-sensitive applications like autonomous drone navigation or remote surgical procedures. By deploying compute modules in Low Earth Orbit (LEO), India aims to provide a distributed network that offers sub-15 ms response times across the globe.

This proximity enables a sophisticated form of global edge computing, allowing high-speed AI services to be delivered to aircraft, maritime vessels, and remote rural outposts that are currently bypassed by fiber-optic networks. The strategic advantage of this low-latency infrastructure cannot be overstated; it democratizes access to high-performance AI, ensuring that geographic isolation is no longer a barrier to digital participation. By situating the compute hardware just hundreds of miles above the user rather than thousands of miles across a terrestrial network, the orbital model provides a seamless experience for the next generation of real-time digital services.
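The distance argument can be checked with a rough propagation-delay calculation. The sketch below compares a round trip to a satellite directly overhead with a round trip over a long fiber route. The 550 km altitude, the 10,000 km route, and the 0.67 velocity factor for light in fiber are illustrative assumptions, and the figures cover propagation only, leaving out processing, queueing, and the longer slant path to a satellite that is not directly overhead.

```python
# Speed of light in vacuum, km/s.
C_VACUUM_KM_S = 299_792.458
# Assumption: light in silica fiber travels at roughly two thirds of c.
FIBER_VELOCITY_FACTOR = 0.67


def round_trip_ms(path_km, speed_km_s):
    """Round-trip propagation delay in milliseconds over a one-way path length."""
    if path_km < 0 or speed_km_s <= 0:
        raise ValueError("path must be non-negative and speed positive")
    return 2 * path_km / speed_km_s * 1000


def leo_round_trip_ms(altitude_km):
    """Best case: radio or laser link to a satellite directly overhead."""
    return round_trip_ms(altitude_km, C_VACUUM_KM_S)


def fiber_round_trip_ms(route_km):
    """Propagation delay along a terrestrial fiber route of the given length."""
    return round_trip_ms(route_km, C_VACUUM_KM_S * FIBER_VELOCITY_FACTOR)


if __name__ == "__main__":
    print(f"LEO at 550 km:      {leo_round_trip_ms(550):.2f} ms")
    print(f"Fiber over 10,000 km: {fiber_round_trip_ms(10_000):.2f} ms")
```

With these inputs the overhead satellite link comes to under 4 ms of propagation delay, against roughly 100 ms for the long fiber route. That leaves headroom for on-board processing within a sub-15 ms budget, though real-world figures depend heavily on satellite geometry and network load.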

Strategic Sovereignty: Protecting National Assets through Orbital Isolation

Beyond the technical and economic benefits, orbital datacentres offer a unique solution to the problem of national data sovereignty. In an increasingly fragmented geopolitical landscape, the security of critical data—ranging from healthcare records to national defense intelligence—is a paramount concern. Terrestrial datacentres, while physically secure, are susceptible to localized conflicts, cyber-attacks on physical grid infrastructure, and foreign interference in the supply chain. Establishing a presence in orbit creates an effective “air-gap” from these terrestrial vulnerabilities, making space the preferred high ground for sovereign data storage and processing.

This approach addresses a common misconception that space is merely a tool for telecommunications, repositioning it as a fortress for digital assets. By maintaining control over sovereign hardware in orbit, India ensures that its critical AI ecosystems remain operational even if ground-based international links are compromised. This strategic isolation provides a level of security that is difficult to replicate on Earth, where the interconnected nature of power and data lines creates a web of potential failure points. Orbital facilities, operating on independent solar power and communicating through encrypted satellite links, offer a resilient alternative for the most sensitive workloads.

Emerging Trends in Orbital Hardware and Sustainability

The current trajectory of the orbital compute industry is being defined by a rapid evolution in hardware philosophy and environmental stewardship. One of the most significant trends is the development of “repurposeable” rocket architecture. Rather than treating the upper stages of launch vehicles as expendable debris, pioneering Indian firms are designing these structures to host compute payloads once they have fulfilled their primary delivery mission. This method significantly reduces the cost of deployment and minimizes the introduction of new objects into the orbital environment, creating a more sustainable ecosystem for long-term growth.

Furthermore, the industry is shifting away from the traditional model of satellite repair toward a continuous hardware refresh cycle. Given the pace at which AI hardware evolves, the idea of maintaining a single satellite for fifteen years is no longer economically or technically viable. Instead, the current model favors the deployment of smaller, modular units that are designed to de-orbit and burn up in the atmosphere after a three-to-five-year lifespan. This ensures that the orbital datacentre fleet always utilizes the most advanced chips and cooling technologies, treating the orbital server more like a piece of high-end consumer electronics than a permanent piece of civil infrastructure.

Strategic Recommendations for an Orbital Economy

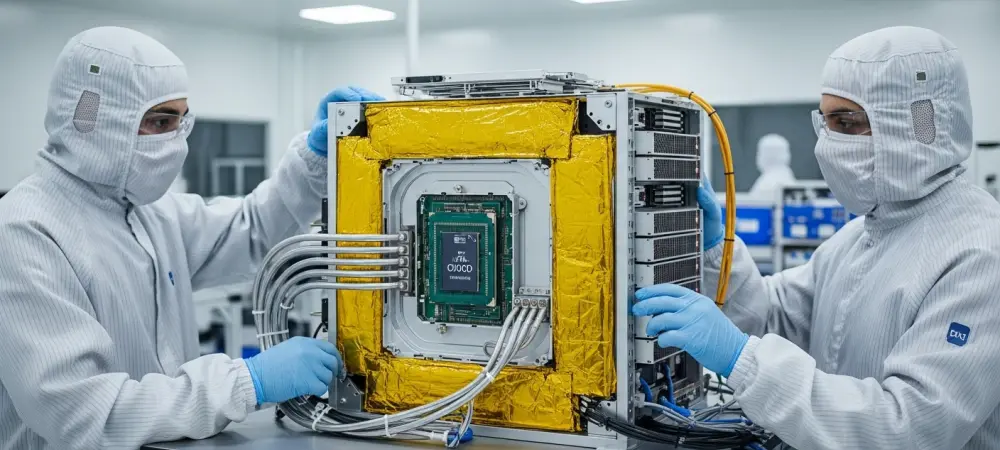

For organizations and policymakers looking to capitalize on this transition, the primary focus must be on the development of specialized hardware tailored for the rigors of the space environment. This includes the mass production of radiation-hardened AI processors that can withstand the bombardment of cosmic rays without compromising data integrity. Additionally, investments should be prioritized in the field of advanced materials for radiative cooling, which will be the primary bottleneck for increasing compute density in future orbital nodes. Businesses that align their AI deployment strategies with these emerging orbital capabilities will likely find a significant competitive advantage in the coming years.

The economic potential of this sector is substantial, with projections suggesting a $50 billion market opportunity by 2040 for nations that can successfully integrate spacetech and AI. To realize this, India must continue to foster a collaborative environment between its sovereign cloud providers and its emerging private aerospace startups. A phased approach, starting with localized pilot programs and scaling to a distributed global network of hundreds of orbital nodes, will be essential for managing the risks associated with this frontier. By treating space as a logical extension of the national infrastructure, India can build a resilient digital backbone that is immune to many of the limitations that define the terrestrial age.

Navigating the Final Frontier of Digital Intelligence

The development of space-based datacentres would be a significant milestone, reflecting an ambitious bid for technological independence and global leadership. By addressing the physics-based limitations of the Earth, specifically the constraints of heat, power, and terrestrial distance, the initiative aims to build a resilient and sovereign ecosystem for the AI era. Its proponents see the transition as the logical progression for a global society that is outgrowing its terrestrial boundaries. If the marriage of aerospace engineering and high-performance computing matures as planned, it will help keep the digital services of the future operational and secure.

If these orbital facilities are implemented, they will show that the “cloud” is not just a metaphorical marketing term but a necessary evolution of physical infrastructure. The move toward the vacuum of space targets the critical cooling and energy problems that threaten to halt progress on the ground. By securing the high ground for digital intelligence, the nation intends to ensure that its technological future is not tethered to the vulnerabilities of the surface. This strategic pivot could establish a new standard for efficiency and security, providing a roadmap for how a modern economy can thrive by looking beyond the horizon for its most essential resources.