The Paradigm Shift in AI-Driven Therapy

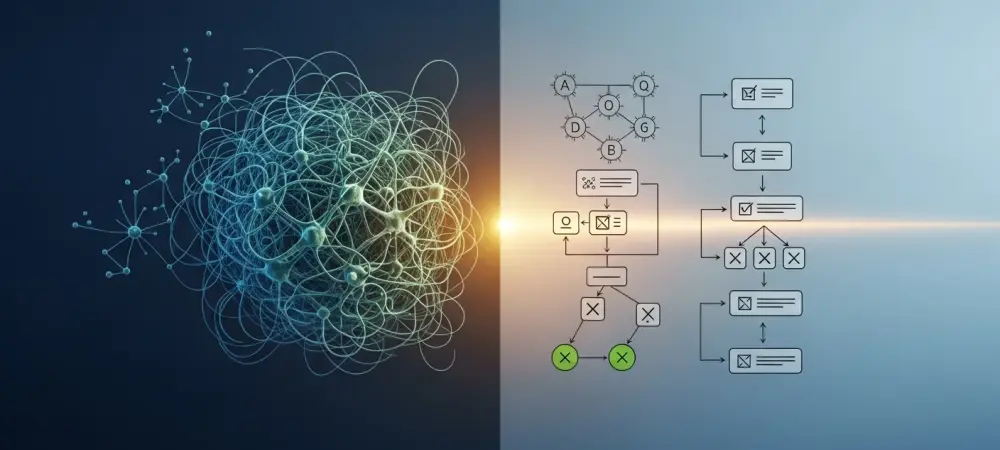

The rapid integration of Large Language Models into the delicate sphere of emotional wellness has sparked a significant debate regarding the safety and clinical validity of automated counseling. For several years, the tech industry has relied on purely generative systems that operate as intricate black boxes, making it difficult to understand how they arrive at specific conclusions. This lack of transparency is particularly problematic in a clinical context where the difference between a helpful suggestion and a harmful one can be subtle yet devastating. As a result, the focus of development has begun to shift toward neuro-symbolic AI, a hybrid model that promises to bridge the gap between human-like conversation and the rigorous safety standards required for medical support.

The transition toward this hybrid architecture represents a fundamental acknowledgment that linguistic fluency does not equate to psychological wisdom. While standard neural networks excel at mimicking the cadence of human speech, they are fundamentally predictive engines that lack a genuine understanding of causality or ethics. By incorporating a symbolic layer into these models, engineers are effectively giving these systems a set of non-negotiable rules. This shift addresses the ethical dilemmas currently facing AI-supported counseling, moving the industry away from unpredictable, data-driven patterns and toward a framework that clinicians can trust.

Furthermore, this evolution in AI architecture is not merely a technical upgrade but a necessary response to the volatile nature of purely neural systems. In sensitive clinical scenarios, a model that relies solely on statistical probability is prone to hallucinations and inconsistent advice. The adoption of neuro-symbolic systems introduces a layer of accountability that was previously absent, ensuring that every interaction is grounded in established psychological principles rather than just massive datasets of internet text. This paradigm shift is the foundation for a more stable and ethically sound future for digital mental health interventions.

Background and Context of the Hybrid AI Approach

The current landscape of global mental health is defined by a staggering disparity between the demand for services and the availability of qualified human practitioners. This supply-and-demand gap has driven millions of individuals to seek immediate emotional support from general-purpose artificial intelligence, often using these tools as a primary source of guidance. However, the use of general-purpose models in this capacity constitutes a massive, unregulated experiment on a vulnerable population. The inherent unpredictability of these systems means they often lack the logical guardrails necessary to identify crisis situations or prevent the spread of medical misinformation.

Traditional Large Language Models, while remarkably capable of generating empathetic-sounding responses, operate without a structured understanding of medical boundaries. They may inadvertently encourage harmful behaviors or provide incorrect pharmacological advice because they are simply following the most likely linguistic path rather than a set of medical instructions. This research is vital because it highlights the necessity of symbolic AI—the “rules-based” logic that characterized early computer science—to serve as a corrective force. By merging this old-school logic with the power of modern neural networks, the field can transition into a regulated framework for digital well-being that prioritizes patient safety over conversational flair.

The hybrid approach seeks to rectify the limitations of early expert systems, which were often too rigid to be useful in a therapeutic setting, by pairing them with the fluidity of modern neural networks. While the neural component handles the nuances of sentiment and language, the symbolic component ensures that the system stays within the bounds of clinical safety. This development is particularly relevant as the public demand for 24/7 mental health support continues to grow, necessitating a solution that is both accessible and medically responsible. It represents the maturation of the field, moving beyond the initial excitement of generative technology and into a phase of disciplined, ethical application.

Research Methodology, Findings, and Implications

Methodology

The methodology of this research involves a comprehensive technical analysis of neuro-symbolic architectures, specifically contrasting them with the pure pattern recognition of standard Artificial Neural Networks. The study evaluates the structural differences between these two approaches by examining how each processes sensitive inputs related to trauma, depression, and anxiety. A significant portion of the analysis focuses on “if-then” logic layers that are superimposed onto the neural engine, creating a dual-process system. The research also reviews industry developments, including the reported integration of symbolic logic into agentic frameworks in recent updates to high-performing models.
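The dual-process idea can be illustrated with a minimal sketch. It assumes a toy setup that does not come from the study: `Rule`, `neural_reply`, `respond`, and the single keyword rule are all hypothetical names, and the "neural engine" is a stub rather than a real model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Rule:
    """One if-then rule: if condition(text) is true, reply with response."""
    rule_id: str
    condition: Callable[[str], bool]
    response: str


def neural_reply(user_text: str) -> str:
    """Stand-in for a generative model call (hypothetical; no real model is used)."""
    return f"It sounds like a lot is going on. Tell me more about: {user_text}"


def respond(user_text: str, rules: List[Rule]) -> str:
    """The symbolic layer runs first; the neural stub answers only if no rule fires."""
    lowered = user_text.lower()
    for rule in rules:
        if rule.condition(lowered):
            return rule.response
    return neural_reply(user_text)


# Illustrative rule only; a real rule set would be written with clinicians.
RULES = [
    Rule(
        rule_id="no-dosage-advice",
        condition=lambda t: "dosage" in t or "dose" in t,
        response="Medication doses are a question for your prescriber or pharmacist.",
    ),
]
```

In this sketch the rule check sits in front of the generator, so a matching rule decides the reply regardless of what the model would have produced.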

To ensure a robust comparison, the research team evaluated a dozen distinct factors, including the explainability of the AI’s internal processes, the efficacy of safety constraints, and the ability to comply with evolving medical regulations. The study also looked at the technical feasibility of surgical updates, where specific rules can be modified without the need for a full retraining of the underlying model. By utilizing comparative testing across various clinical scenarios, the methodology aimed to determine which architecture most consistently adhered to established psychiatric protocols. This systematic evaluation provided a clear view of how symbolic logic functions as a persistent monitor over the more volatile neural output.
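A surgical update of this kind might look like the following sketch. The rule store, the rule identifiers, and the `MODEL_VERSION` placeholder are assumptions made for illustration; the point is only that one rule changes while everything else, including the model, is left alone.

```python
from typing import Dict

# Hypothetical rule store: rule id -> fixed response text.
rule_store: Dict[str, str] = {
    "crisis-resources": "Please contact your local emergency number.",
    "no-dosage-advice": "Please ask your prescriber about medication doses.",
}

MODEL_VERSION = "neural-engine-v1"  # placeholder label; never modified below


def update_rule(store: Dict[str, str], rule_id: str, new_response: str) -> Dict[str, str]:
    """Return a new store with one rule changed; all other rules are copied as-is."""
    if rule_id not in store:
        raise KeyError(f"unknown rule: {rule_id}")
    updated = dict(store)
    updated[rule_id] = new_response
    return updated


new_store = update_rule(
    rule_store,
    "crisis-resources",
    "Please contact your local emergency number or a crisis line.",
)
```

Returning a copy rather than mutating the store in place keeps the previous rule set available for comparison or rollback, which is one possible design choice among several.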

Findings

The findings of the study indicate that neuro-symbolic AI provides twelve distinct advantages over conventional models, most notably in traceable reasoning and the implementation of hard constraints. One of the most significant discoveries was the high level of traceability: unlike standard models, which can only provide a post-hoc rationalization for their answers, hybrid systems show the exact clinical rule or logical path that triggered a response. This allows human supervisors to audit the AI’s behavior in real time and to link each piece of advice to a verified medical guideline.

Furthermore, the research found that symbolic layers act as a non-negotiable safety net during crisis intervention. In scenarios where a user expressed thoughts of self-harm, the symbolic component was able to override the neural network’s conversation flow and provide immediate, standardized crisis resources, a step that purely generative models do not take consistently.

The findings also highlighted the effectiveness of logic-based filters in mitigating demographic and cultural biases that are often baked into the massive internet datasets used to train neural networks. By enforcing rules of neutrality and inclusivity at the symbolic level, the AI can be prevented from reflecting the prejudices of its training data.
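The traceability and crisis-override findings can be sketched together. This is a toy illustration under stated assumptions: the phrase list, the `Decision` record, and the `decide` function are hypothetical, and simple keyword matching is used only to keep the example short; it is not how a clinically validated system would detect a crisis.

```python
from dataclasses import dataclass
from typing import List, Optional

# Toy phrase list for illustration only; not a validated screening method.
CRISIS_PHRASES = ("hurt myself", "end my life")
CRISIS_MESSAGE = (
    "You deserve immediate support. "
    "Please contact local emergency services or a crisis line now."
)


@dataclass(frozen=True)
class Decision:
    reply: str
    source: str              # "symbolic" or "neural"
    rule_id: Optional[str]   # which rule fired, if any


def decide(user_text: str, draft_from_model: str, audit_log: List[Decision]) -> Decision:
    """Override the model's draft when a crisis rule fires, and log every decision."""
    lowered = user_text.lower()
    if any(phrase in lowered for phrase in CRISIS_PHRASES):
        decision = Decision(CRISIS_MESSAGE, "symbolic", "crisis-override")
    else:
        decision = Decision(draft_from_model, "neural", None)
    audit_log.append(decision)
    return decision
```

Because each `Decision` records its source and the rule that fired, a supervisor reviewing `audit_log` can see which replies came from a rule and which came from the model's draft.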

In addition to safety and ethics, the research identified improvements in structured personalization. Hybrid models utilize organized data stores to maintain a consistent history of user interactions, overcoming the “forgetting” issues common in standard models with limited context windows. This means the AI can track a user’s progress over months or even years, identifying long-term patterns in mood and behavior. The ability to maintain this longitudinal view of a patient’s health, while staying within the bounds of pre-programmed clinical theories, makes the neuro-symbolic approach significantly more effective for sustained therapeutic support than its purely neural counterparts.
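As a rough illustration of an organized data store that outlives a context window, the sketch below keeps dated mood scores per user and averages them over a date range. The `MoodHistory` class, the 1 to 10 scale, and the method names are assumptions for this example, not details reported by the research.

```python
from dataclasses import dataclass, field
from datetime import date
from statistics import mean
from typing import Dict, List, Tuple


@dataclass
class MoodHistory:
    """Per-user mood scores kept outside the model's context window."""
    entries: Dict[str, List[Tuple[date, int]]] = field(default_factory=dict)

    def record(self, user_id: str, day: date, score: int) -> None:
        """Store one self-reported score on a hypothetical 1-10 scale."""
        if not 1 <= score <= 10:
            raise ValueError("score must be between 1 and 10")
        self.entries.setdefault(user_id, []).append((day, score))

    def average_between(self, user_id: str, start: date, end: date) -> float:
        """Average the user's scores recorded from start to end, inclusive."""
        scores = [s for d, s in self.entries.get(user_id, []) if start <= d <= end]
        if not scores:
            raise ValueError("no entries in range")
        return mean(scores)
```

Comparing `average_between` for two different months is one simple way such a store could surface a long-term trend that a single conversation would not reveal.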

Implications

The implications of these findings suggest that a fundamental shift is necessary in the way AI is developed for high-stakes human services. For developers and healthcare providers, this research demonstrates that “governed” AI is the only viable path for legal and ethical compliance. Practically, this means that organizations can implement surgical updates to AI behavior, adjusting to new clinical findings or changing legal requirements, without the immense cost and unpredictability of retraining entire large-scale models. This flexibility is crucial in the fast-moving field of psychology, where new research and updated guidelines emerge regularly.

Theoretically, the research reconciles the two oldest and most divergent schools of AI thought, indicating that the future of the technology lies in synthesis rather than the dominance of one method over the other. It establishes a blueprint for how AI can be integrated into healthcare systems without compromising patient safety or increasing the provider’s liability.

By providing a clear path toward transparency and accountability, these findings pave the way for a more collaborative relationship between human clinicians and digital tools. The ultimate implication is that AI-driven mental health support can finally move out of the experimental phase and into a role as a legitimate, regulated component of the global health infrastructure.

Reflection and Future Directions

Reflection

The shift toward neuro-symbolic models reflects a growing realization that linguistic fluency is not a reliable proxy for clinical competence. For a time, the rapid scaling and impressive performance of “black box” neural networks obscured the inherent risks of using them in sensitive contexts. However, as the limitations of these models became more apparent, the industry began to understand that transparency is not just a technical luxury but a clinical necessity. The challenge that remains is the delicate task of balancing the fluid, human-like interaction that makes users feel heard with the rigid nature of symbolic logic that keeps them safe.

Initial adoption of AI in therapy was characterized by a rush to provide accessibility at all costs, but in hindsight those early stages show that safety must be the primary metric of success. The difficulty in implementing neuro-symbolic systems lies in translating nuanced human emotions and clinical theories into code-based rules. It requires close collaboration between software engineers and psychological experts to ensure that the symbolic layer is sophisticated enough to handle the ambiguities of human conversation without becoming overly robotic. This tension between fluidity and structure is the defining characteristic of the current era of AI development.

Future Directions

Looking ahead, future research should investigate the “Agentic AI” frontier, exploring how hybrid systems can safely perform complex therapeutic workflows with minimal human supervision. This includes developing systems that can coordinate between different types of therapy, such as transitioning a user from Cognitive Behavioral Therapy techniques to mindfulness exercises based on real-time emotional data. Additional studies are needed to determine how to better translate complex, nuanced psychological theories into structured symbolic logic, ensuring that the “rules” of the AI reflect the most current and diverse schools of psychological thought.

There is also a significant opportunity to explore how cultural nuances can be programmed into the symbolic layer to enhance global accessibility. Mental health is not a one-size-fits-all field, and what constitutes a supportive response in one culture may be perceived differently in another. Future development could focus on creating modular symbolic layers that can be swapped out to reflect the specific cultural and social values of different regions. Finally, longitudinal studies are required to assess the long-term psychological impact of interacting with neuro-symbolic systems compared to both human therapy and purely generative AI, ensuring that the technology is truly fostering long-term resilience and well-being.
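One way to picture swappable symbolic layers is a registry keyed by region, with a fallback when no regional layer exists. Everything in this sketch is hypothetical: the `CulturalLayer` fields, the region names, and the placeholder texts are illustrative and do not describe any real deployment.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class CulturalLayer:
    """A self-contained bundle of region-specific settings for the symbolic layer."""
    region: str
    greeting: str
    crisis_resource: str


# Placeholder regions and texts; real layers would be authored with local experts.
LAYERS: Dict[str, CulturalLayer] = {
    "region-a": CulturalLayer(
        "region-a",
        "Hello, how are you feeling today?",
        "Region A crisis line (placeholder)",
    ),
    "region-b": CulturalLayer(
        "region-b",
        "Welcome. How have you and your family been?",
        "Region B crisis line (placeholder)",
    ),
}


def select_layer(region: str, default: str = "region-a") -> CulturalLayer:
    """Pick the layer for a region, falling back to the default when none exists."""
    return LAYERS.get(region, LAYERS[default])
```

Keeping each layer as an immutable record means a regional rule set can be replaced as a unit without touching the others.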

The Future of AI-Driven Well-being

Neuro-symbolic AI represents the superior path for mental health support by providing the steering wheel and brakes to the powerful engine of generative AI. This research reaffirms that for artificial intelligence to be a trustworthy partner in therapy, it has to move beyond statistical guessing and embrace the rigor of logical consistency and clinical accountability. With this foundation of safety in place, the technology can shift from a source of potential harm to a reliable tool for global health. The findings indicate that the most critical task for the next generation of mental health technology is the successful integration of human-defined ethics into the heart of automated systems.

The move toward these hybrid models also addresses the problem of transparency that has hindered the widespread adoption of AI in clinical settings. Practitioners can validate the logic behind the AI’s suggestions, which allows digital tools to be integrated more smoothly into traditional care models. The research demonstrates that when symbolic logic governs the creative potential of neural networks, the resulting system is both more effective and more resilient. This evolution helps ensure that the rapid growth of AI in the mental health sector is grounded in a commitment to doing no harm while maximizing the potential for positive change.

Ultimately, the study concludes that the future of digital well-being depends on the industry’s ability to maintain this balance between innovation and discipline. The shift to neuro-symbolic architecture is not just a technical change but a moral one, reflecting a prioritization of user safety over the pursuit of sheer conversational power. By moving toward a more structured and explainable form of intelligence, the field of AI-driven mental health support can secure its place as a cornerstone of modern healthcare. This research paves the way for a future where high-quality emotional support is available to everyone, without the risks that accompany the use of unconstrained digital models.