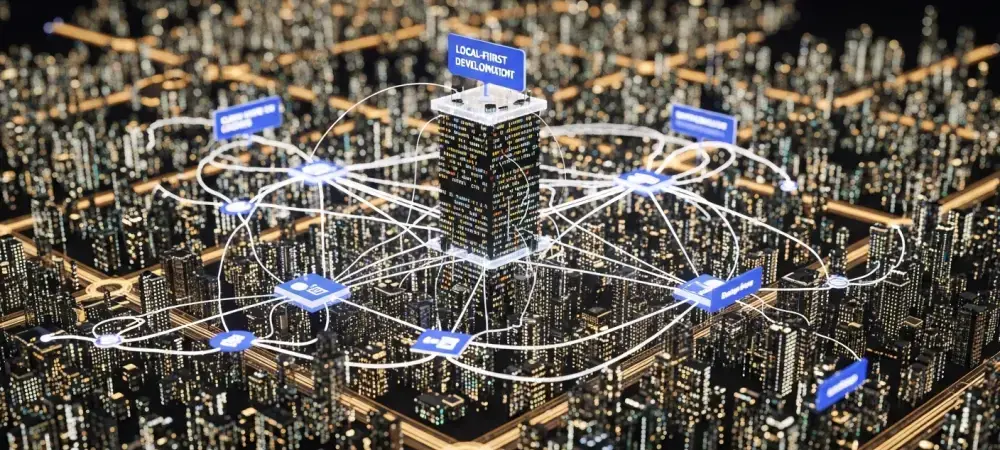

The modern browser has evolved from a simple viewing window into a runtime capable of hosting high-performance database engines, narrowing the gap between traditional web applications and native desktop software. This transformation marks the rise of local-first architecture, a paradigm shift where the primary data management happens on the user’s device rather than on a remote server. By prioritizing local state, developers are tackling the persistent “offline-sync” problem, so that applications remain functional regardless of internet stability. This transition reduces server-side complexity and removes the network round trip from most interactions, making the user experience feel nearly instantaneous.

Modern web engineering is increasingly shaped by the maturation of the technologies behind this shift: in-browser databases, faster runtimes, and fine-grained reactivity. The trend points toward client-server symmetry, where the browser is no longer a passive recipient of data but an active, sovereign participant in the application’s lifecycle. Industry adoption of these patterns suggests a lasting change in how JavaScript-based ecosystems handle data, moving the focus from thin clients toward robust, data-dense local environments.

The Shift Toward Client-Side Sovereignty

The significance of client-side sovereignty lies in its ability to decouple user interaction from network reliability. Historically, web applications relied on a constant heartbeat with a central database, leading to “loading spinners” and fragile state management. Local-first architecture upends this model by embedding high-performance storage directly within the browser context. This approach simplifies the development process by allowing engineers to write code that interacts with a local source of truth, leaving the background synchronization to specialized middleware that manages conflict resolution automatically.
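The split between a local source of truth and background synchronization can be sketched in a few lines. This is a minimal illustration rather than the design of any particular sync library: the names `LocalFirstStore`, `Change`, `pendingChanges`, and `acknowledge` are hypothetical, the store lives in memory only, and conflict resolution is left out entirely.

```typescript
// Hypothetical shape of a change waiting to be uploaded.
type Change<T> = { id: number; key: string; value: T };

class LocalFirstStore<T> {
  private data = new Map<string, T>(); // local source of truth
  private outbox: Change<T>[] = [];    // changes the server has not confirmed yet
  private nextId = 1;

  // Writes land locally first, so the UI never waits on the network.
  set(key: string, value: T): void {
    this.data.set(key, value);
    this.outbox.push({ id: this.nextId++, key, value });
  }

  // Reads are always served from local state.
  get(key: string): T | undefined {
    return this.data.get(key);
  }

  // The sync layer calls this to see what still has to be uploaded.
  pendingChanges(): Change<T>[] {
    return this.outbox.slice();
  }

  // Called once the server confirms every change up to and including lastId.
  acknowledge(lastId: number): void {
    this.outbox = this.outbox.filter((change) => change.id > lastId);
  }
}

// Usage: both writes succeed immediately, even with no connection.
const store = new LocalFirstStore<string>();
store.set("note:1", "draft written offline");
store.set("note:2", "second note");

// Later, a background task uploads store.pendingChanges() and then
// calls store.acknowledge(...) with the last confirmed id.
```

Keeping the outbox separate from the data means the interface code only ever calls `set` and `get`, while the transport (fetch, WebSocket, or anything else) stays behind the two sync methods.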

Moreover, this architectural evolution reflects a broader trend toward decentralization in software design. As the browser gains the capacity to run complex relational queries and persistent storage tasks, the backend’s role is being redefined. Instead of handling every minor UI update, the server is becoming a coordination layer for authentication, global state backup, and multi-user collaboration. This shift not only improves performance but also enhances privacy, as sensitive user data can remain on the device until it is explicitly required for external processing.

The Growth and Practical Application of Local-First Tech

Market Trajectory: Adoption of In-Browser Databases

Foundational technologies like WebAssembly and the Origin Private File System have provided the necessary infrastructure for this local-first revolution. WebAssembly allows languages like C++ and Rust to run at near-native speeds within the browser, enabling the porting of robust database engines that were once restricted to server environments. The Origin Private File System provides a dedicated, high-performance storage area that bypasses the limitations of older browser storage methods like IndexedDB, allowing for the direct manipulation of binary files.

In a surprising turn, the industry is witnessing the “Revenge of SQL” within the JavaScript ecosystem. Developers are increasingly moving back to relational databases like PGlite (a WebAssembly build of PostgreSQL) and SQLite for client-side storage because of their reliability and structured querying capabilities. These tools allow for a unified data model where the same SQL logic used on the backend can be mirrored in the browser. Furthermore, performance-centric tools such as the Rust-based Rolldown bundler and the Bun runtime are gaining significant traction, keeping builds and startup fast as applications take on this additional client-side complexity without compromising the developer experience.
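One way to mirror SQL logic on both sides is to keep schema and queries in a module that the browser bundle and the server both import. The sketch below assumes Postgres-style `$1` placeholders; the `todos` table, the `SqlQuery` type, and the `openTodos` helper are hypothetical names used only for illustration.

```typescript
// A query described as plain data, so any SQL client can execute it.
interface SqlQuery {
  text: string;
  params: unknown[];
}

// Shared schema: run once in the browser database and once on the server.
const CREATE_TODOS = `
  CREATE TABLE IF NOT EXISTS todos (
    id    TEXT PRIMARY KEY,
    title TEXT NOT NULL,
    done  BOOLEAN NOT NULL DEFAULT FALSE
  )`;

// Shared query: the same text and parameter order on both sides.
function openTodos(limit: number): SqlQuery {
  return {
    text: "SELECT id, title FROM todos WHERE done = $1 ORDER BY title LIMIT $2",
    params: [false, limit],
  };
}

const query = openTodos(20);
```

In the browser the descriptor could be passed to a PGlite instance as `db.query(query.text, query.params)`, and on the server to a node-postgres pool in the same way. A SQLite-based client would likely need a different placeholder style, so this particular sketch fits the PostgreSQL pairing best.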

Real-World Implementation: Architectural Shifts

The practical application of these technologies is perhaps best illustrated by the emergence of Electrobun, which is currently challenging the dominance of Electron. By leveraging the Bun runtime, Electrobun enables the creation of leaner desktop applications that avoid the excessive resource consumption typically associated with bundled Chromium instances. This shift toward more efficient runtimes allows developers to build local-first applications that feel like native software while maintaining the flexibility of a JavaScript codebase.

Simultaneously, state management is undergoing a refinement through the use of “Signals.” This mechanism provides fine-grained reactivity, replacing the heavy Virtual DOM diffing processes that characterized earlier framework generations. By tracking dependencies at the atomic level, Signals ensure that only the specific parts of the UI that need updating are re-rendered. This efficiency is critical for local-first apps that manage large volumes of data on the fly, ensuring that the interface remains responsive even during heavy local database operations.
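The dependency tracking behind Signals can be shown with a deliberately small implementation. This is a teaching sketch, not the API of any specific framework: `createSignal` and `createEffect` are names chosen for illustration, and real libraries add batching, cleanup, and derived values on top.

```typescript
type Effect = () => void;

// The effect currently running, if any. A signal read while this is set
// records that effect as a subscriber.
let activeEffect: Effect | null = null;

function createSignal<T>(initial: T): [() => T, (next: T) => void] {
  let value = initial;
  const subscribers = new Set<Effect>();

  const read = (): T => {
    if (activeEffect) subscribers.add(activeEffect);
    return value;
  };

  const write = (next: T): void => {
    if (next === value) return; // unchanged value: nothing to re-run
    value = next;
    // Iterate over a copy so re-subscription during a run cannot loop.
    for (const effect of Array.from(subscribers)) effect();
  };

  return [read, write];
}

function createEffect(fn: () => void): void {
  const run: Effect = () => {
    const previous = activeEffect;
    activeEffect = run;
    try {
      fn();
    } finally {
      activeEffect = previous;
    }
  };
  run(); // the first run registers the effect with every signal it reads
}

// Usage: only effects that read a signal re-run when it changes.
const [count, setCount] = createSignal(0);
const [label] = createSignal("items");
const log: string[] = [];

createEffect(() => {
  log.push(count() + " " + label());
});

setCount(1); // re-runs the effect, because it read count()
setCount(1); // same value, so nothing re-runs
// log is now ["0 items", "1 items"]
```

Because each signal holds its own subscriber set, a change touches only the effects that actually read it, with no tree-wide diff to compute.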

Industry Perspectives: Maturing Ecosystem

Core maintainers of the most influential tools are currently guiding a transition of the core development stack toward high-performance languages. There is a visible trend where the tooling itself is being rewritten; for instance, the TypeScript compiler is being ported to Go and Vite is adopting the Rust-based Rolldown bundler, reflecting a demand for compilation speeds that JavaScript-based tooling has struggled to deliver. This evolution helps the development environment keep pace with the increasing complexity of client-side applications, allowing for real-time feedback during the coding process.

Expert opinions suggest that cross-language integration, such as Project Detroit, is now essential for maintaining enterprise-grade JavaScript applications. This initiative facilitates better interoperability between JavaScript and other ecosystems like Java or Python, which is vital for organizations that need to share logic across different platforms. Additionally, the rise of AI-driven development has necessitated the creation of specialized documentation and tooling designed for machine consumption, allowing Large Language Models to perform more accurate code inspection and automated debugging.

The Future: Local-First and High-Performance Web

Looking ahead, the impending engine shift in TypeScript 7.0 toward a Go-based core is expected to substantially improve development velocity. This transition will likely ease the bottlenecks currently associated with large-scale type checking, making massive codebases far more manageable. Furthermore, the integration of AI agents directly within frameworks like Next.js suggests a future where the development cycle is significantly augmented by specialized CLI tools that can suggest architectural improvements and identify potential data synchronization conflicts before they reach production.

However, the path forward is not without its hurdles. Addressing data security within the browser and managing complex synchronization conflicts in multi-device environments remain significant challenges. As local databases grow in size and complexity, the hardware requirements for running these applications will become a more prominent consideration for developers. Nevertheless, the blurring lines between web, desktop, and AI operations will likely solidify JavaScript’s position as an all-purpose engineering language capable of handling sophisticated enterprise workloads.
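To make the synchronization problem concrete, here is a sketch of one of the simplest strategies, a per-field last-writer-wins merge. It is shown only as an illustration, not as the approach any tool named above takes. The `Versioned` and `Doc` shapes are hypothetical, and the sketch assumes device clocks are reasonably close, which is often not true in practice.

```typescript
// A field value tagged with when and where it was written.
interface Versioned<T> {
  value: T;
  timestamp: number; // e.g. milliseconds since epoch on the writing device
  deviceId: string;
}

type Doc<T> = Record<string, Versioned<T>>;

// Pick the newer write. Timestamp ties are broken by deviceId so that
// every replica chooses the same winner regardless of merge order.
function pickWinner<T>(a: Versioned<T>, b: Versioned<T>): Versioned<T> {
  if (a.timestamp !== b.timestamp) return a.timestamp > b.timestamp ? a : b;
  return a.deviceId >= b.deviceId ? a : b;
}

// Merge two replicas field by field.
function mergeDocs<T>(local: Doc<T>, remote: Doc<T>): Doc<T> {
  const merged: Doc<T> = { ...local };
  for (const key of Object.keys(remote)) {
    const mine = merged[key];
    merged[key] = mine === undefined ? remote[key] : pickWinner(mine, remote[key]);
  }
  return merged;
}

// Usage: the phone edited the title later and added a tag.
const laptop: Doc<string> = {
  title: { value: "Draft", timestamp: 100, deviceId: "laptop" },
};
const phone: Doc<string> = {
  title: { value: "Final", timestamp: 250, deviceId: "phone" },
  tag: { value: "work", timestamp: 120, deviceId: "phone" },
};

const merged = mergeDocs(laptop, phone);
// merged.title.value is "Final" and merged.tag.value is "work"
```

Last-writer-wins silently discards the older of two concurrent edits, which is why richer approaches such as CRDTs exist; the sketch is meant to show where the difficulty lies rather than to resolve it.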

Conclusion: New Era for the JavaScript Powerhouse

The transition from rudimentary scripting to a robust, local-first architecture marks a turning point for the web. JavaScript’s maturation is being solidified through the adoption of faster runtimes and the integration of SQL databases within the browser environment. These developments let developers build applications that are not only faster but also significantly more resilient to fluctuations in network availability. The shift toward fine-grained reactivity, in turn, helps user interfaces keep up with the demands of local data processing.

As these technologies move into the mainstream, the focus is shifting toward optimizing the developer experience and ensuring cross-language compatibility. High-performance tooling keeps the ecosystem competitive, while AI-ready documentation paves the way for more intelligent automation. Together, these changes offer a roadmap for future-proofing applications, one built on a commitment to local performance. For organizations seeking to deliver high-quality digital experiences in an increasingly complex landscape, embracing this shift is becoming essential.