In the high-stakes environment of modern commerce, an organization’s survival depends not only on its physical assets but also on how effectively it harnesses the information flowing through its systems. Companies that treat data as a byproduct of business operations rather than as their most valuable capital often find themselves drowning in noise while competitors extract precise, actionable signals. Closing that gap requires a move away from simple information gathering toward a robust, data-first culture where every decision is anchored in verified intelligence.

The transition to a data-centric model is not merely a technical upgrade; it is a fundamental strategic pivot that requires a departure from traditional management styles. Historically, data was relegated to the IT department as a cost center, but in the current landscape it has become the primary engine of value creation. This roundup explores the critical perspectives and architectural shifts necessary to build a sustainable data ecosystem that survives the volatility of modern markets. By examining the interplay between technology and human capital, we can begin to see how a unified strategy transforms raw numbers into a durable competitive advantage.

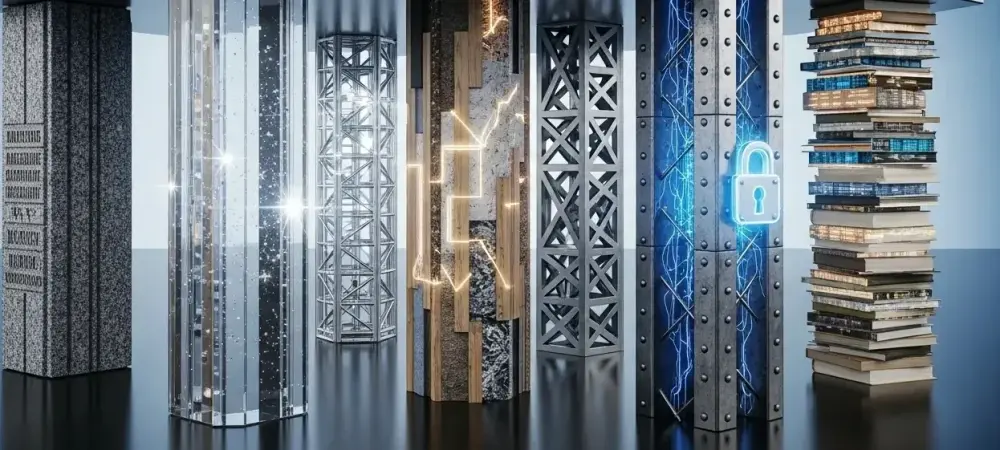

Architecting a Modern Ecosystem for Sustainable Competitive Advantage

Establishing Data Integrity Through Centralized Catalogs and Structural Hygiene

A pervasive challenge in the digital age is the proliferation of “dark data,” which consists of information that is collected and stored but never utilized because its existence or location is unknown to decision-makers. To combat this, industry leaders emphasize the implementation of centralized data catalogs that act as a single source of truth for the entire enterprise. These catalogs utilize sophisticated metadata to bridge the gap between technical storage environments and business-centric users, ensuring that anyone within the organization can find the information they need without a deep background in database administration.
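To make the catalog idea concrete, here is a minimal sketch of a metadata registry that lets a business user search by plain-language terms instead of storage paths. It is an illustration only: the class names, fields, and sample datasets are assumptions and do not correspond to any particular catalog product.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Metadata describing one dataset. All field names are hypothetical."""
    name: str
    location: str       # technical storage location
    owner: str          # accountable team
    description: str    # business-friendly summary
    tags: set[str] = field(default_factory=set)


class DataCatalog:
    """A toy in-memory catalog acting as a single lookup point."""

    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        self._entries[entry.name] = entry

    def search(self, term: str) -> list[CatalogEntry]:
        """Case-insensitive match against descriptions and tags."""
        needle = term.lower()
        return [
            entry for entry in self._entries.values()
            if needle in entry.description.lower()
            or needle in {tag.lower() for tag in entry.tags}
        ]


catalog = DataCatalog()
catalog.register(CatalogEntry(
    name="crm_contacts",
    location="warehouse.sales.crm_contacts",
    owner="sales-ops",
    description="Customer contact records synced from the CRM",
    tags={"customer", "pii"},
))
catalog.register(CatalogEntry(
    name="plant_sensor_logs",
    location="lake/raw/sensors/",
    owner="manufacturing",
    description="Hourly machine telemetry",
    tags={"iot"},
))

# A non-technical user searches by concept, not by table path.
print([entry.name for entry in catalog.search("customer")])
```

The point of the sketch is that the search runs against descriptions and tags, so the storage location stays an implementation detail that the user never needs to know in advance.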

However, the creation of a catalog is only half the battle; maintaining structural hygiene is the other. Many experts argue that data strategy must prioritize quality control and validation at the point of ingestion to prevent the “garbage in, garbage out” cycle. This involves establishing rigorous standards for data integration and cleansing, ensuring that every record is accurate, timely, and compliant with internal standards. While some organizations find the initial investment in these processes daunting, the long-term cost of making decisions based on fragmented or incorrect information far outweighs the upfront expenditure on data integrity.
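Validation at the point of ingestion can be sketched as a small gate that checks each record for completeness, basic accuracy, and timeliness before it is accepted. The required fields, the 30-day freshness threshold, and the quarantine approach below are all assumptions chosen for illustration, not a prescribed standard.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical schema and freshness rule; real standards would be set internally.
REQUIRED_FIELDS = ("customer_id", "email", "updated_at")
MAX_AGE = timedelta(days=30)


def validate_record(record: dict, now: datetime) -> list:
    """Return a list of problems; an empty list means the record passes."""
    problems = []
    for name in REQUIRED_FIELDS:
        if not record.get(name):
            problems.append(f"missing {name}")
    email = record.get("email")
    if email and "@" not in email:
        problems.append("malformed email")
    updated_at = record.get("updated_at")
    if updated_at and now - updated_at > MAX_AGE:
        problems.append("stale record")
    return problems


def ingest(records: list, now: datetime) -> tuple:
    """Split incoming records into accepted and quarantined groups."""
    accepted, quarantined = [], []
    for record in records:
        problems = validate_record(record, now)
        if problems:
            quarantined.append((record, problems))
        else:
            accepted.append(record)
    return accepted, quarantined


now = datetime(2024, 1, 31, tzinfo=timezone.utc)
incoming = [
    {"customer_id": "C1", "email": "a@example.com",
     "updated_at": datetime(2024, 1, 20, tzinfo=timezone.utc)},
    {"customer_id": "C2", "email": "no-at-sign",
     "updated_at": datetime(2023, 10, 1, tzinfo=timezone.utc)},
    {"customer_id": "", "email": "b@example.com",
     "updated_at": datetime(2024, 1, 30, tzinfo=timezone.utc)},
]
accepted, quarantined = ingest(incoming, now)
for record, problems in quarantined:
    print(record["customer_id"] or "<no id>", problems)
```

Quarantining failed records with their reasons, rather than silently dropping them, keeps the evidence needed to fix the upstream source.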

Balancing Technological Governance with User-Centric Software Autonomy

The tension between centralized IT control and the agility required by individual departments often creates friction within large enterprises. In the past, strict governance meant that users were forced to work with a rigid set of pre-approved tools, which often stifled innovation and led to the adoption of “shadow IT.” Today, a more nuanced approach is emerging, where organizations distinguish between the heavy-duty infrastructure managed by IT and the flexible, front-end visualization tools used by business analysts. This allows for a “bring your own analytics” environment where data scientists can use the specific methodologies they prefer while still operating within a secure, governed framework.

This balance of autonomy and oversight reduces the risk of fragmented data silos while empowering employees to move at the speed of the market. For instance, while a marketing team might use a specialized tool for customer sentiment analysis, the underlying data must still come from a verified, governed warehouse. This hybrid model minimizes the risk of users accidentally creating insecure data replicas or using non-compliant software. By defining clear boundaries for tool usage based on professional roles, businesses can foster a culture of innovation that does not compromise the security or integrity of the master data sets.
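One way to picture role-based boundaries is a simple allowlist check: the tool must be approved for the user's role, and the data must come from a governed source. The role names, tool names, and source identifiers here are hypothetical placeholders, and a real deployment would likely enforce this in an access-management platform rather than in application code.

```python
from __future__ import annotations

# Hypothetical role-to-tool allowlist and list of governed sources.
APPROVED_TOOLS = {
    "marketing_analyst": {"sentiment_tool", "dashboard_tool"},
    "data_scientist": {"notebook", "dashboard_tool"},
}
GOVERNED_SOURCES = {"warehouse.customers", "warehouse.orders"}


def check_access(role: str, tool: str, source: str) -> tuple[bool, str]:
    """Allow a request only if the tool fits the role and the source is governed."""
    if tool not in APPROVED_TOOLS.get(role, set()):
        return False, f"{tool} is not approved for role {role}"
    if source not in GOVERNED_SOURCES:
        return False, f"{source} is not a governed source"
    return True, "ok"


# Marketing may use its specialized tool, but only against governed data.
print(check_access("marketing_analyst", "sentiment_tool", "warehouse.customers"))
print(check_access("marketing_analyst", "sentiment_tool", "laptop_export.csv"))
print(check_access("marketing_analyst", "notebook", "warehouse.customers"))
```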

Navigating the Ethics of Advanced Analytics and Predictive Methodologies

As organizations move beyond simple reporting toward predictive modeling and cluster analysis, the ethical implications of their data usage become increasingly complex. While these advanced techniques offer incredible potential for optimizing supply chains or anticipating customer needs, they also introduce risks related to bias and transparency. Some practitioners suggest that the application of predictive algorithms should be subject to the same level of scrutiny as financial audits, especially when dealing with sensitive information or personal identifiers. The goal is to ensure that automated insights do not reinforce existing prejudices or violate the trust of the consumer base.

Regional differences in regulatory environments also play a significant role in how these methodologies are deployed. In some jurisdictions, the right to an explanation for automated decisions is a legal requirement, forcing companies to move away from “black box” models toward more interpretable AI. Future-proofing a data strategy involves building an ethical framework that anticipates these shifts rather than reacting to them. This means training personnel not only in the technical execution of analytics but also in the sociotechnical impact of their work, ensuring that technological progress remains aligned with corporate values and public expectations.

Cultivating Data Literacy and the Democratization of Knowledge Assets

The democratization of data is often discussed in terms of access, but true democratization requires a foundation of high data literacy across the entire workforce. It is no longer sufficient for only a handful of specialists to understand how to interpret a dashboard; instead, employees from human resources to the factory floor must be equipped with the skills to ask the right questions of the information available to them. This cultural shift transforms data from a niche technical asset into a universal language that facilitates clearer communication across disparate departments.

Moreover, a notable trend in modern data strategy is the move toward crowdsourced data management. By involving subject matter experts in the definition of master data, such as what constitutes a “customer” or a “product,” organizations ensure that their systems reflect the practical realities of the business. This collaborative approach prevents the disconnect that often occurs when IT teams build systems in isolation. When data literacy is high, users are more likely to take ownership of the data they produce, leading to a self-sustaining cycle of high-quality information and more insightful analysis that enhances the collective knowledge of the enterprise.

Operationalizing the Framework Through Policy, Auditing, and Human Capital

Moving from a theoretical framework to an operational reality requires a disciplined approach to documentation and policy. A cohesive strategy acts as a roadmap for every project, asking whether the data usage is appropriate, approved, and purposeful before any work begins. These policies are not meant to be bureaucratic hurdles but are instead the essential guardrails that protect the organization from legal and security liabilities. Retrospective auditing of these policies allows leaders to refine their approach over time, ensuring that the data infrastructure remains lean and effective as the business scales.
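The three questions above (appropriate, approved, purposeful) can be expressed as a pre-project gate that also records each decision for later audit. This is a sketch under stated assumptions: the request fields, the "documented legal basis" test for appropriateness, and the in-memory audit log are illustrative choices, not requirements drawn from any specific policy.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DataUsageRequest:
    """A proposed use of data. All fields are hypothetical."""
    project: str
    dataset: str
    purpose: str                  # stated business purpose
    approved_by: Optional[str]    # approver's name, or None if unapproved
    contains_personal_data: bool
    has_legal_basis: bool         # assumed stand-in for "appropriate"


def policy_gate(request: DataUsageRequest) -> list[str]:
    """Return the policy questions the request fails; empty means it may proceed."""
    failures = []
    if request.contains_personal_data and not request.has_legal_basis:
        failures.append("not appropriate: personal data without a documented basis")
    if not request.approved_by:
        failures.append("not approved: no approver recorded")
    if not request.purpose.strip():
        failures.append("not purposeful: no stated purpose")
    return failures


audit_log: list[tuple[str, list[str]]] = []


def review(request: DataUsageRequest) -> bool:
    """Apply the gate and keep a record for retrospective auditing."""
    failures = policy_gate(request)
    audit_log.append((request.project, failures))
    return not failures


ok = review(DataUsageRequest(
    project="churn-model", dataset="warehouse.customers",
    purpose="Predict renewal risk", approved_by="data-governance-board",
    contains_personal_data=True, has_legal_basis=True,
))
blocked = review(DataUsageRequest(
    project="side-experiment", dataset="warehouse.customers",
    purpose="  ", approved_by=None,
    contains_personal_data=True, has_legal_basis=False,
))
print(ok, blocked)
print(audit_log[-1])
```

Because every decision lands in the log along with its reasons, a later audit can see which rules block the most projects and adjust the policy accordingly.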

Furthermore, the human element remains the most significant variable in the success of a data strategy. While cloud-based systems have automated many routine tasks, the need for skilled architects, engineers, and savvy analysts has never been greater. These individuals are responsible for translating high-level business objectives into the physical architecture that supports daily operations. Investing in continuous professional development ensures that the workforce remains capable of navigating new technologies and methodologies as they emerge, securing the organization’s future in an increasingly data-dependent world.

The Future of the Data-Driven Enterprise: A Unified Vision for Growth

The integration of these foundational pillars creates a pathway for organizations to transform their informational burdens into strategic assets. By synthesizing data integrity, tool autonomy, ethical analytics, and universal literacy, enterprises establish a framework that is both resilient to change and flexible enough to foster innovation. The convergence of these elements means that the strategy is no longer a standalone IT initiative but a central component of the broader corporate vision, influencing everything from budget allocation to market positioning.

This unified vision produces businesses that are better equipped to handle the complexities of a volatile economy. Decision-makers gain the ability to act with confidence, knowing that their insights are grounded in a secure and transparent ecosystem. As the landscape continues to shift, the ongoing focus on the human-data relationship will likely remain the defining factor for organizational success. Moving forward, the most effective leaders will be those who prioritize the development of specialized “data translators” who can bridge the gap between technical capabilities and strategic business outcomes. This approach does not just improve internal efficiencies; it fundamentally redefines the role of information as a driver of global growth and long-term sustainability.