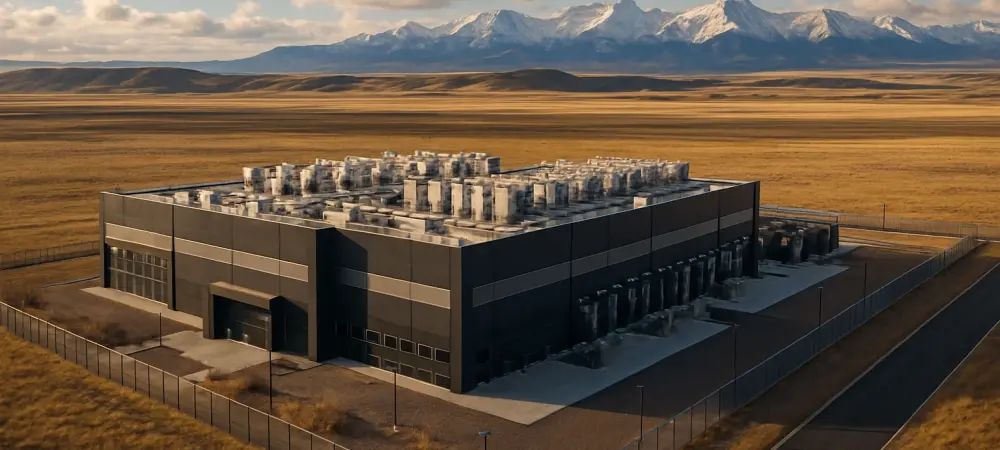

I’m thrilled to sit down with Dominic Jainy, a seasoned IT professional whose deep expertise in artificial intelligence, machine learning, and blockchain has positioned him as a thought leader in cutting-edge technology applications. Today, we’re diving into an exciting project he’s closely following: Prometheus Hyperscale’s new AI data center campus in Casper, Wyoming. Our conversation explores the strategic choices behind this development, the innovative sustainability measures being implemented, and the broader implications for the future of digital infrastructure. From the massive scale of the project to the groundbreaking carbon capture technologies, Dominic offers invaluable insights into how this campus aims to redefine hyperscale computing while prioritizing environmental responsibility.

How did Prometheus Hyperscale decide on Casper, Wyoming, for their second AI data center campus?

Casper was a strategic pick for several reasons. It’s the second-largest city in Wyoming, which means access to a larger labor pool and more established infrastructure than more remote areas can offer. Wyoming itself is attractive due to its business-friendly policies and relatively low energy costs, which are critical for data centers with massive power needs. I also believe the proximity to natural gas resources played a role, as the campus will rely on natural gas for power, albeit with a sustainable twist. Compared to their flagship campus in Evanston, Casper offers a different dynamic: potentially better connectivity to larger markets and a bit more urban support, which can help with scaling operations.

What can you tell us about the projected 1.5GW of IT capacity for this campus and the kind of demand it’s meant to address?

That 1.5GW capacity is staggering—it’s a clear signal that Prometheus is targeting hyperscalers, particularly those running intensive AI workloads. We’re talking about major tech companies or AI-driven enterprises that need immense computational power for training models or processing vast datasets. This scale positions the Casper campus as one of the heavyweights, not just regionally but nationally. Most data centers in the region operate at a fraction of that capacity, so this project is really setting a new benchmark for what’s possible in terms of meeting the skyrocketing demand for AI infrastructure.

With a $500 million investment to kick off this project, how do you see that funding shaping the early stages of development?

That half-a-billion-dollar investment is a significant commitment and likely comes from a mix of private equity, strategic partnerships, and possibly some incentives from local or state government, given Wyoming’s push for economic development. In the initial phases, I’d expect the bulk of this money to go toward land acquisition, site preparation, and the foundational infrastructure—think power systems and early construction of high-density compute halls. There’s also likely a chunk allocated to integrating the advanced cooling systems they’ve mentioned, which are crucial for energy efficiency at this scale.

The timeline has the first IT-ready power arriving in 2026. What are some of the key steps needed to hit that target?

Getting to IT-ready power by 2026 means they’ve got a tight schedule. First, they’ll need to finalize permits and environmental assessments, which can be tricky given the scale and the use of natural gas. Then there’s the construction of the power generation and distribution systems, alongside the data halls themselves. Partnerships with tech providers for carbon capture will need to be locked in early to ensure integration. Challenges like supply chain delays for equipment or unexpected regulatory hurdles could slow things down, but with a well-coordinated plan, it’s achievable.

Prometheus claims the campus will be carbon negative despite using natural gas. How do you think they’re planning to pull that off?

It’s an ambitious claim, but it hinges on their collaboration with carbon capture specialists. They’re deploying direct air capture and other technologies not only to offset the emissions from natural gas but also to remove more carbon from the atmosphere than they produce. The idea is to capture emissions from power generation at the source, use direct air capture to pull additional CO2 out of the ambient air, and store all of it permanently in sequestration wells. If done right, this could set a precedent for fossil fuel-powered data centers to operate sustainably, though the tech’s scalability and cost-effectiveness are still being proven at this level.

Can you shed some light on the partnerships with carbon capture firms and how they contribute to this project?

These partnerships are central to the carbon-negative goal. One firm focuses on providing net-zero electricity through advanced capture methods, ensuring the power generation itself is clean. The other likely handles the logistics of storing captured carbon in geological formations, making sure it’s locked away for good. Working with two specialized firms allows Prometheus to cover both ends of the carbon management spectrum—capture and sequestration—which is a smart way to hedge against the limitations of any single technology.

There’s mention of a related initiative aiming to be the world’s largest carbon capture project. How does that tie into the Casper campus?

That initiative is a massive undertaking in Wyoming, and it’s directly linked to the power and sustainability strategy for the Casper campus. It’s about creating a blueprint for large-scale carbon removal that can support high-energy facilities like data centers. By tying into this project, Prometheus benefits from cutting-edge infrastructure and expertise, which boosts their credibility in claiming carbon negativity. It also positions Wyoming as a hub for sustainable tech innovation, which could draw more investment to the state.

Looking ahead, what’s your forecast for the role of sustainable data centers in the future of AI and digital infrastructure?

I think we’re at a turning point where sustainability isn’t just a nice-to-have—it’s becoming a core requirement for data centers, especially as AI workloads continue to explode. The energy demands are only going to grow, and with that comes scrutiny on environmental impact. Projects like Casper, if successful, could prove that you can balance massive computational needs with ecological responsibility. My forecast is that within the next decade, carbon-negative or net-zero data centers will become the industry standard, driven by both regulation and market demand for greener tech solutions. We’ll see more innovation in power sources and capture tech, and places like Wyoming could lead the charge.