The persistent global shortage of DRAM has forced one of the industry’s leading players into a dramatic pivot in its manufacturing strategy, reshaping which graphics cards will be readily available to consumers. Amid this tight supply, NVIDIA is reportedly adopting a new approach that prioritizes graphics card production by profitability relative to memory consumption. This strategy, as outlined by Gigabyte CEO Eddie Lin, goes beyond simply meeting demand and instead aims to maximize the financial return from every gigabyte of scarce VRAM. The shift signals a significant change in the consumer GPU landscape: the availability of a given model will no longer be dictated by popularity or performance tier alone, but by how much revenue it generates from a limited pool of memory. The implications are far-reaching, pointing to a market where mid-range options may become scarcer while budget and ultra-premium cards receive preferential treatment.

A New Calculus for Production

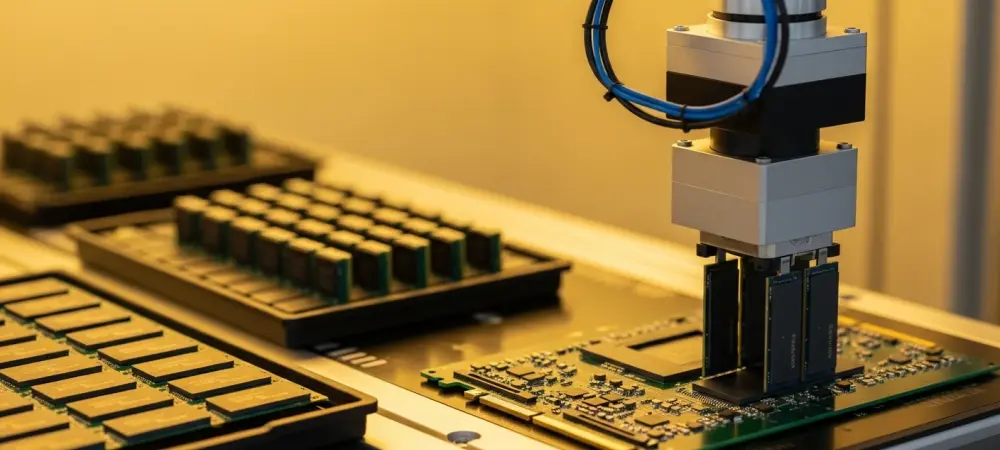

At the heart of this strategic realignment is a formula referred to as the “Profit/GB” model, which calculates the revenue generated per gigabyte of VRAM for each GPU segment and uses it as a proxy for profitability. This metric lets the company direct its limited memory allocation toward the products that yield the highest return. An illustrative example shows the logic: a hypothetical $400 graphics card with 8GB of VRAM contributes $50 of revenue for every gigabyte of memory it uses ($400 / 8GB). In contrast, a more powerful $500 GPU with 16GB of VRAM contributes only about $31 per gigabyte ($500 / 16GB = $31.25). When memory is the primary production bottleneck, the cheaper 8GB model is therefore the more attractive product to build. The model suggests that NVIDIA’s manufacturing lines will increasingly favor 8GB models, such as the GeForce RTX 5060 and 5060 Ti, alongside the highest-end SKUs like the RTX 5090, whose premium pricing offsets their large VRAM allocation and keeps their profit-per-gigabyte ratio high.
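The arithmetic behind this comparison can be sketched in a few lines of Python. This is a minimal illustration that assumes the metric is nothing more than price divided by VRAM capacity, as in the example above; the actual internal formula has not been published. The names `GpuSku`, `revenue_per_gb`, `revenue_from_vram_budget` and the 1,600GB memory budget are hypothetical, chosen only to show why a fixed memory supply favors the 8GB card.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSku:
    """A hypothetical graphics card SKU, described only by price and VRAM."""
    name: str
    price_usd: float
    vram_gb: int


def revenue_per_gb(sku: GpuSku) -> float:
    """Revenue per gigabyte of VRAM: card price divided by memory size."""
    return sku.price_usd / sku.vram_gb


def revenue_from_vram_budget(sku: GpuSku, budget_gb: int) -> float:
    """Total revenue if an entire fixed memory budget goes to one SKU.

    Only whole cards can be built, so leftover memory is ignored.
    """
    cards_built = budget_gb // sku.vram_gb
    return cards_built * sku.price_usd


def rank_by_revenue_per_gb(skus: list[GpuSku]) -> list[tuple[str, float]]:
    """Order SKUs from highest to lowest revenue per gigabyte of VRAM."""
    scored = [(sku.name, revenue_per_gb(sku)) for sku in skus]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# The two hypothetical cards from the article's example.
card_8gb = GpuSku("hypothetical 8GB card", 400.0, 8)
card_16gb = GpuSku("hypothetical 16GB card", 500.0, 16)
skus = [card_16gb, card_8gb]

# Assumed memory budget (hypothetical) shared by both production options.
BUDGET_GB = 1600

for name, per_gb in rank_by_revenue_per_gb(skus):
    print(f"{name}: ${per_gb:.2f} per GB")

for sku in skus:
    total = revenue_from_vram_budget(sku, BUDGET_GB)
    print(f"{sku.name}: ${total:,.0f} from {BUDGET_GB}GB of VRAM")
```

With the same 1,600GB of memory, the sketch builds 200 of the 8GB cards ($80,000) but only 100 of the 16GB cards ($50,000), which is the bottleneck logic the “Profit/GB” model relies on. A real allocation decision would also weigh margins, demand, and die supply, none of which this toy model includes.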

The market effects of this profitability-focused strategy are already beginning to surface, and they offer a coherent explanation for several recent, seemingly unrelated industry rumors. Reports that NVIDIA may restart production of older-generation cards like the RTX 3060 fit a model that favors lower-VRAM configurations. Likewise, whispers of a significant MSRP increase for the flagship RTX 5090 can be read as an adjustment to keep its large VRAM pool profitable under the new calculus. Consumers may also see the quiet discontinuation of mid-range SKUs whose price is low relative to their VRAM. The result could be a market well stocked with older, lower-VRAM GPUs that score well on this metric, while the supply of modern, VRAM-heavy mid-tier cards dwindles. The company might even turn to older GDDR5 memory modules, which see little demand from the booming AI sector, as a stopgap for certain product lines to work around the current GDDR memory shortage.

The AI Shadow Over Gaming

This strategic pivot underscores a broader trend within the company: the explosive growth and profitability of the AI and data center business now cast a long shadow over the consumer graphics card division. Allocating scarce resources by a “Profit/GB” metric is a clear signal that the consumer segment, historically a cornerstone of the business, has taken a secondary role to the demands of the AI industry. The availability of gaming GPUs is becoming increasingly dependent on how well each product fits a corporate strategy optimized for enterprise-level profits. Future product roadmaps and supply chain decisions for consumer hardware will likely be influenced, and in some cases dictated, by the resource needs of the more lucrative AI sector, fundamentally altering the long-standing relationship between the company and its gaming audience.