Attackers did not wait for a proof-of-concept or a weekend lull. They turned a fresh advisory into a working exploit chain in roughly half a day, demonstrating how AI-serving stacks have become fast-moving targets for SSRF-driven reconnaissance and lateral movement. The case centered on CVE-2026-33626, a high-severity flaw (CVSS 7.5) in LMDeploy’s vision-language module present in versions 0.12.0 and earlier, where the load_image() function in lmdeploy/vl/utils.py fetched arbitrary URLs without filtering internal or private address spaces. That single oversight enabled access to sensitive cloud metadata endpoints and internal services. Reported by Orca Security’s Igor Stepansky, the bug was exploited in the wild only 12 hours and 31 minutes after disclosure, collapsing the disclosure-to-exploitation window and signaling that detailed advisories can now act as playbooks for adversaries and automated exploit synthesis pipelines.

The Exploit: What Happened

SSRF Root Cause and Rapid Abuse

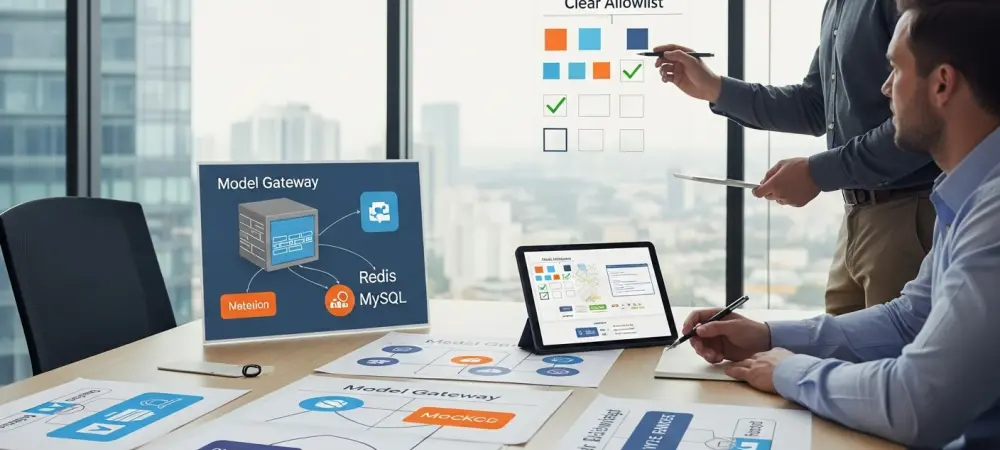

The vulnerability hinged on LMDeploy’s image loading pathway accepting remote URIs and trusting them by default, with no guardrails to block requests to loopback, link-local, or RFC1918 ranges. In practice, that meant a model-serving workflow became a generic SSRF primitive: a user-supplied image URL could quietly pivot to 169.254.169.254 for the AWS Instance Metadata Service (IMDS), 127.0.0.1 for internal admin surfaces, or 10.0.0.0/8 for east-west reconnaissance. Sysdig observed a session from 103.116.72[.]119 that lasted only eight minutes yet covered a broad set of targets. The actor hit IMDS endpoints, enumerated Redis and MySQL, and reached for an internal HTTP admin interface. Out-of-band (OOB) DNS callbacks to requestrepo[.]com confirmed that blind probes had landed, while loopback port scans mapped available footholds. No public PoC had surfaced, yet the advisory’s file paths and parameter names sufficed to reconstruct reliable requests.
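The missing guardrail described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not LMDeploy's actual patch: the helper names `is_public_address`, `resolve_host`, and `is_safe_image_url` are hypothetical, and the check only covers the address classes named in this article (loopback, link-local, RFC1918, and similar non-public ranges).

```python
import ipaddress
import socket
from urllib.parse import urlsplit

ALLOWED_SCHEMES = {"http", "https"}


def is_public_address(addr: str) -> bool:
    """Return True only if addr parses as a non-internal IP address."""
    try:
        ip = ipaddress.ip_address(addr)
    except ValueError:
        return False
    # Treat IPv4-mapped IPv6 (e.g. ::ffff:127.0.0.1) as the IPv4 it wraps.
    if ip.version == 6 and ip.ipv4_mapped is not None:
        ip = ip.ipv4_mapped
    return not (
        ip.is_private          # RFC1918 and other private-use ranges
        or ip.is_loopback      # 127.0.0.0/8, ::1
        or ip.is_link_local    # 169.254.0.0/16 (cloud metadata), fe80::/10
        or ip.is_multicast
        or ip.is_reserved
        or ip.is_unspecified
    )


def resolve_host(host: str, port: int) -> list[str]:
    """Resolve a hostname to every address it maps to."""
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    return [info[4][0] for info in infos]


def is_safe_image_url(url: str, resolver=resolve_host) -> bool:
    """Hypothetical pre-fetch check: reject URLs that resolve to internal space."""
    try:
        parts = urlsplit(url)
        port = parts.port or (443 if parts.scheme == "https" else 80)
    except ValueError:
        return False
    if parts.scheme not in ALLOWED_SCHEMES or not parts.hostname:
        return False
    try:
        addresses = resolver(parts.hostname, port)
    except OSError:
        return False
    # Every resolved address must be public; one internal record is enough to refuse.
    return bool(addresses) and all(is_public_address(a) for a in addresses)
```

A check like this is only a first layer. The fetcher still has to connect to the address that was validated, because a second DNS lookup at fetch time reopens the door to DNS rebinding. Redirects need the same validation at every hop.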

Tradecraft, Evasion, and the New Tempo

Beyond the primitive itself, the operator rotated between two VLMs, internlm-xcomposer2 and OpenGVLab/InternVL2-8B, suggesting a basic evasion tactic meant to sidestep platform-specific logging heuristics and reduce the chance of tripping alerts tied to a single model pipeline. That choice also indicated familiarity with how inference gateways route requests, where swapping model identifiers can change execution paths and briefly hinder correlation. The flows reflected a routine playbook: test metadata, sweep for state stores like Redis, peek at SQL, and probe internal web consoles that often surface in AI-serving clusters. Crucially, the effort began within hours, not days, revealing how commercial LLMs and scripts can transform advisory breadcrumbs (function names, paths, or permissive URI handlers) into working exploit scaffolds. This tempo left defenders reacting to reconnaissance already in motion, and it showed that advisory transparency, while valuable, now materially shapes attacker time-to-weaponization.
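One way to blunt model rotation is to correlate gateway logs by source address rather than by model pipeline. The sketch below assumes a hypothetical log record shape (dicts with `src_ip`, `ts` in epoch seconds, and `model`); real gateway logs will differ. Legitimate clients also use several models, so this is a weak signal that is best combined with others.

```python
from collections import defaultdict


def sources_rotating_models(records, window_seconds=600, min_models=2):
    """Map each source IP to the models it touched, if it used at least
    `min_models` distinct models within any `window_seconds` span."""
    by_source = defaultdict(list)
    for rec in records:
        by_source[rec["src_ip"]].append((rec["ts"], rec["model"]))

    flagged = {}
    for src, events in by_source.items():
        events.sort()
        start = 0
        for end in range(len(events)):
            # Slide the window start forward until it spans <= window_seconds.
            while events[end][0] - events[start][0] > window_seconds:
                start += 1
            models = {model for _, model in events[start:end + 1]}
            if len(models) >= min_models:
                flagged[src] = sorted(models)
                break
    return flagged
```

Keying on the source address means a session like the eight-minute one described earlier shows up as one cluster of activity, not as two unrelated trickles in per-model dashboards.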

The Wider Campaigns

Parallel Campaigns Across Web and ICS

The same rhythm surfaced across unrelated but thematically aligned targets. WordPress sites faced pressure from two plugin flaws: Ninja Forms – File Upload (CVE-2026-0740) and Breeze Cache (CVE-2026-3844). In practice, those bugs enabled arbitrary file upload and remote code execution, culminating in full site compromise when misconfigurations or weak isolation compounded the impact. Opportunistic operators blended mass scanning with selective follow-through, staging webshells, cron-based persistence, and CDN abuse to mask payload delivery. Meanwhile, a wave of scanning struck Modbus-enabled PLCs exposed on the public internet across 70 countries, splitting into broad, automated sweeps alongside quieter, target-specific fingerprinting. Several scanners geo-located to China, while many IPs carried low reputation scores consistent with rotating infrastructure. Together, the signals pointed to a converging ecosystem: automated enumeration at scale feeding rapid, modular exploit deployment.

Implications for AI Stacks and Concrete Defenses

The LMDeploy episode demonstrated why AI-adjacent middleware now sits on the front line. SSRF endures because it stretches across trust boundaries, turning outward-facing parsers into bridges to metadata services, internal control planes, or ephemeral caches. Practical defenses call for specifics rather than slogans: restrict URL fetchers with explicit allowlists; block RFC1918, loopback, link-local, and metadata endpoints; enforce IMDSv2 or cloud-equivalent protections; segment Redis and MySQL behind service meshes with mTLS; and narrow egress while capturing high-fidelity DNS logs for OOB detection. Fast patch cycles matter, but so does posture: strip internet exposure from admin consoles, require token-bound requests for model APIs, and treat model gateways as Tier 0 assets. Together, these steps shrink the SSRF blast radius, constrain lateral paths, and turn eight-minute reconnaissance windows into noisy, contained dead ends.
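As an illustration of the DNS-logging point, the sketch below flags lookups of known out-of-band callback domains. It assumes a hypothetical whitespace-separated log format of `timestamp client_ip query_name`. The suffix list holds only the domain named in this article and would need to be extended from threat-intelligence feeds in practice.

```python
# Callback domain taken from the article (defanged there as requestrepo[.]com).
OOB_SUFFIXES = ("requestrepo.com",)


def is_oob_lookup(qname: str, suffixes=OOB_SUFFIXES) -> bool:
    """True if qname is one of the listed domains or a subdomain of one."""
    name = qname.rstrip(".").lower()
    return any(name == s or name.endswith("." + s) for s in suffixes)


def flag_oob_queries(log_lines):
    """Return (timestamp, client_ip, qname) for each suspicious lookup.

    Assumes each line is 'timestamp client_ip qname'; malformed lines are skipped.
    """
    hits = []
    for line in log_lines:
        fields = line.split()
        if len(fields) < 3:
            continue
        ts, client, qname = fields[0], fields[1], fields[2]
        if is_oob_lookup(qname):
            hits.append((ts, client, qname))
    return hits
```

Matching on a dot-delimited suffix avoids false positives on lookalike names such as `notrequestrepo.com`. A hit coming from an inference host is worth treating as evidence that a blind SSRF probe succeeded.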