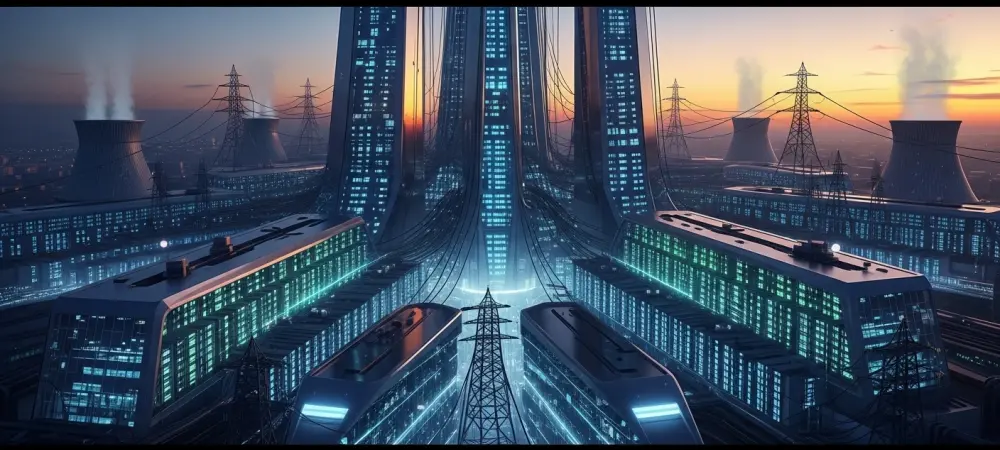

As the digital landscape undergoes its most radical transformation since the dawn of the internet, the balance of power is shifting decisively toward a few major players. Dominic Jainy, a seasoned IT professional with a deep background in artificial intelligence and infrastructure, joins us to discuss the implications of a world where hyperscalers are projected to control two-thirds of global data center capacity by 2031. The conversation covers the decline of traditional on-premise facilities, the massive financial stakes of the AI arms race, the rising costs of energy, and the growing tension between technological expansion and local community resources.

Hyperscalers are projected to own two-thirds of global data center capacity by 2031 while on-premise facilities continue to shrink. How does this shift affect enterprise control over proprietary data, and what specific steps should IT leaders take to manage this transition without losing operational flexibility?

The shift toward hyperscale dominance represents a stinging reality for traditional IT departments, as we watch the on-premise share of capacity plummet from 56% in 2018 to a projected 19% by 2031. This migration means that the physical “moat” around proprietary data is evaporating, replaced by a logical one maintained by a third party. To keep their footing, IT leaders must transition from being hardware gatekeepers to becoming masters of hybrid architecture and cloud governance. By the time we reach 2031, hyperscalers will have 14 times the capacity they held in 2018, which creates a massive gravity well for data. Leaders should respond by implementing rigorous multi-cloud strategies and data egress plans that ensure they aren’t locked into a single provider’s ecosystem. It is vital to maintain a “cloud-adjacent” strategy for the most sensitive workloads while leveraging the 32% of capacity that will remain in non-hyperscale or on-prem environments for high-security tasks.

Over $500 billion is being invested in AI infrastructure, yet compute capacity remains a constrained resource for many organizations. How should leaders prioritize their internal workloads during these shortages, and what are the primary technical trade-offs when shifting from own-built facilities to leased or cloud environments?

We are witnessing an unprecedented financial surge, with more than $500 billion in capital expenditures earmarked for AI infrastructure in fiscal year 2026 alone. Despite this staggering sum, organizations are hitting a “capacity crunch” that feels like a physical wall, as even giants like Microsoft struggle with shortages expected to last through the entire fiscal year. Leaders must prioritize workloads by distinguishing between “existential” AI projects that drive revenue and “experimental” ones that can be throttled or delayed. When you move from an own-built facility to a leased or cloud environment, you are essentially trading absolute sovereignty for rapid scalability. While hyperscalers are doubling their footprint to meet demand, the trade-off involves navigating shared resource contention and potential latency issues that didn’t exist when the server was ten feet down the hall.
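
The "existential" versus "experimental" split described above can be sketched as a simple allocation rule. This is a minimal illustration, not a method from the interview: the `Workload` class, the `allocate` function, the workload names, and the GPU-hour figures are all hypothetical, and a real scheduler would also weigh deadlines, cost, and data residency.

```python
from dataclasses import dataclass
from typing import Dict, List

# Lower number = served first. The two tier names follow the interview;
# everything else in this sketch is an assumption made for illustration.
TIER_ORDER = {"existential": 0, "experimental": 1}


@dataclass
class Workload:
    name: str
    tier: str          # "existential" or "experimental"
    gpu_hours: float   # requested compute for the planning period


def allocate(workloads: List[Workload], capacity: float) -> Dict[str, float]:
    """Grant capacity to existential workloads first, then throttle the rest."""
    grants: Dict[str, float] = {}
    remaining = capacity
    # sorted() is stable, so workloads keep their listed order within a tier.
    for w in sorted(workloads, key=lambda w: TIER_ORDER[w.tier]):
        granted = min(w.gpu_hours, remaining)
        grants[w.name] = granted
        remaining -= granted
    return grants


requests = [
    Workload("chatbot-pilot", "experimental", 40),
    Workload("fraud-scoring", "existential", 70),
    Workload("pricing-model", "existential", 50),
]
plan = allocate(requests, capacity=100)
```

With 100 GPU-hours available against 160 requested, the two existential workloads absorb all of the capacity and the experimental pilot is delayed, which is the throttling behavior the answer describes.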

Data center energy demand could trigger electricity price hikes of nearly 80% in certain regions within the next few years. What are the long-term implications for companies participating in ratepayer protection pledges, and how can facilities be redesigned to mitigate the financial impact on local communities?

The sheer hunger of these facilities is becoming a public concern, especially with forecasts suggesting price hikes of up to 79% in high-demand areas like Texas by 2027. Companies joining the Ratepayer Protection Pledge are essentially entering a social contract to ensure their expansion doesn’t bankrupt the local residents who live in the shadow of these massive “Stargate” projects. To mitigate this, we have to look at facility redesigns that go beyond simple cooling and move toward total energy circularity. This includes investing in onsite renewable generation and grid-balancing technologies that allow data centers to act as massive batteries for the community during peak hours. The industry is already seeing a massive push for these voluntary commitments from leaders like Google, Meta, and Amazon to prove they can grow without leaving the local population in the dark or under a mountain of debt.

Some local governments are beginning to pass referendums and construction freezes to halt data center expansion due to community concerns. How can infrastructure providers better align with regional interests to avoid these legal roadblocks, and what alternative locations or technologies could alleviate the pressure on saturated markets?

The pushback is no longer just a series of isolated complaints; it has evolved into legal freezes in places like Maine, which could halt construction until late 2027, and anti-data center referendums in states like Wisconsin. To avoid these roadblocks, providers must stop viewing local communities as just “real estate” and start acting as infrastructure partners that provide tangible benefits like job training or water restoration. We are also seeing a shift toward “edge” locations and secondary markets where the power grid is less strained and the local government is more welcoming of the tax revenue. Technologies like liquid cooling and modular data centers can also help by reducing the physical footprint and the noise pollution that often irritates neighbors in residential areas like Northern Virginia. If providers don’t adapt to these community anxieties, they will find themselves locked out of the very regions where the fiber-optic infrastructure is most robust.

With major providers planning to double their data center footprint in the next two years, the industry faces massive scaling challenges. What are the logistical hurdles in deploying thousands of new AI-specific chips, and how can organizations ensure their software remains compatible as underlying hardware architectures rapidly evolve?

Scaling up to 1,360 large data centers by the end of 2025 is a logistical feat that borders on the impossible, especially when you consider the specialized cooling and power delivery required for thousands of new AI chips. We are seeing a massive rush for specialized silicon, such as the TPU chips being secured by Anthropic through 2027, which creates a frantic race for supply chain dominance. Organizations must build their software stacks with an abstraction layer that allows code to run across different architectures, whether they are using AMD’s gigawatt-scale capacity or specialized chips from Broadcom. Running thousands of these processors side by side creates a thermal challenge that requires a complete rethinking of airflow and rack density. If a company doesn’t stay hardware-agnostic, it risks its entire software investment becoming obsolete the moment a hyperscaler shifts its underlying silicon strategy.
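
To make the idea of an abstraction layer concrete, here is a minimal sketch in which application code depends only on a small interface and the hardware-specific backend is chosen at runtime. All names (`ComputeBackend`, `register`, `get_backend`, the backend labels) are hypothetical, and the "accelerator" backend is a stand-in that reuses the CPU code; a real one would call the relevant vendor's SDK.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, List, Sequence, Type

Matrix = List[List[float]]


class ComputeBackend(ABC):
    """Hypothetical interface: the only thing application code depends on."""

    @abstractmethod
    def matmul(self, a: Matrix, b: Matrix) -> Matrix:
        ...


_BACKENDS: Dict[str, Type[ComputeBackend]] = {}


def register(name: str) -> Callable[[Type[ComputeBackend]], Type[ComputeBackend]]:
    """Class decorator that records a backend under a lookup name."""
    def wrap(cls: Type[ComputeBackend]) -> Type[ComputeBackend]:
        _BACKENDS[name] = cls
        return cls
    return wrap


@register("cpu")
class CpuBackend(ComputeBackend):
    def matmul(self, a: Matrix, b: Matrix) -> Matrix:
        cols = list(zip(*b))  # columns of b
        return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


@register("example-accelerator")
class ExampleAcceleratorBackend(CpuBackend):
    """Placeholder for vendor-specific silicon (assumption: same results as CPU)."""


def get_backend(preferred: Sequence[str]) -> ComputeBackend:
    """Return the first registered backend from an ordered preference list."""
    for name in preferred:
        if name in _BACKENDS:
            return _BACKENDS[name]()
    raise LookupError(f"no registered backend among {list(preferred)}")


def score(backend: ComputeBackend, weights: Matrix, inputs: Matrix) -> Matrix:
    """Application logic: unaware of which hardware runs the multiplication."""
    return backend.matmul(weights, inputs)


# "unavailable-chip" is not registered, so the lookup falls back to "cpu".
backend = get_backend(["unavailable-chip", "cpu"])
result = score(backend, [[1, 2], [3, 4]], [[5, 6], [7, 8]])
```

Because `score` never names a chip, swapping the underlying silicon only means registering a new backend and changing the preference list, not rewriting application code.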

What is your forecast for the future of enterprise on-premise data centers?

I believe we are entering an era of the “Surgical Data Center,” where on-premise facilities will no longer be the general-purpose workhorses they were in 2018, but rather highly specialized vaults for high-compliance and low-latency workloads. While the capacity share will shrink to 19% by 2031, those remaining facilities will be significantly more powerful, packed with dense GPU clusters that allow companies to run proprietary AI models without their data ever touching the public internet. We will see a “re-shoring” of specific data types as companies realize the cost and security benefits of keeping their most valuable intellectual property under their own roof. Ultimately, the successful enterprise of the future will use the cloud for scale and on-premise for its “crown jewels,” creating a balanced ecosystem that values both the massive reach of hyperscalers and the ironclad control of local infrastructure.