The long-awaited synthesis of physical dexterity and cognitive reasoning is arriving, ending the age when machines were confined to the rigid, repetitive motions of factory assembly lines. For decades, the primary limitation of robotics was a lack of adaptability; a minor change in an object’s position could halt an entire production sequence. Today, the integration of generative models supplies the missing link, enabling robots to interpret context and learn from their surroundings. The shift redefines autonomy: it is measured not by how faithfully a machine follows pre-set scripts, but by its ability to reason through the unexpected.

The Cognitive Revolution: Mapping the Modern Robotics Landscape

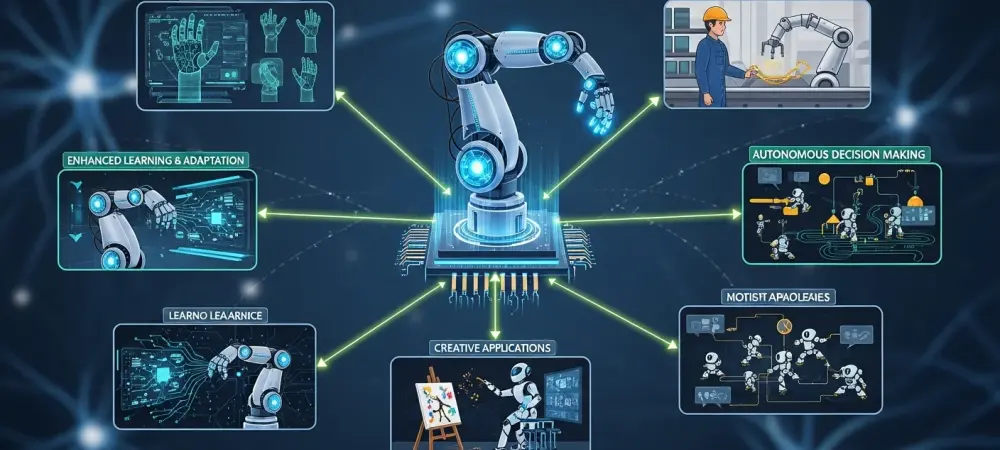

The current landscape is characterized by a transition from pre-programmed automation to generative, adaptive intelligence. In the industrial sector, this means arms no longer just move to coordinates; they recognize the specific properties of the materials they handle. Service and humanoid robotics are also attracting heavy investment as developers move away from narrow AI toward systems that can generalize across different environments. This ecosystem is thriving because sophisticated neural architectures have lowered the barrier between digital logic and physical action.

At the center of this revolution are Large Language Models (LLMs) and Multimodal AI, which function as the new robotic brain. These two pillars allow a machine to process a single command, such as “clean up the spilled liquid,” and understand that it needs to find a towel, navigate to the mess, and apply the correct pressure to the floor.

Market leadership is currently a mix of tech giants providing the cloud infrastructure and specialized startups focused on the edge computing necessary for real-time motion. Meanwhile, global manufacturing hubs are racing to integrate these systems to stay competitive in an increasingly automated economy.

Regulatory frameworks are struggling to keep pace with the sheer speed of innovation. International safety standards are being rewritten to account for robots that no longer follow a predictable path, shifting the focus from hardware compliance to algorithmic transparency. These guidelines are essential for ensuring that as robots enter our streets and hospitals, they remain under human oversight. The goal is to create a standardized language of safety that allows for cross-border collaboration while protecting the physical integrity of human bystanders.

From Execution to Intuition: Major Trends and Market Projections

Emerging Trends Transforming Robotic Capabilities

The evolution of Sim2Real (simulation-to-reality) technology has changed how robots are trained, moving the bulk of research and development into highly realistic virtual environments. Instead of risking expensive hardware in physical trials, developers use generative simulations to subject robots to millions of diverse scenarios in a fraction of the time physical testing would take. This process not only accelerates the timeline for deployment but also allows machines to master edge cases that would be too dangerous or rare to replicate in the real world. Consequently, the cost of bringing a sophisticated robot to market can fall substantially.
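One widely used technique for generating varied training scenarios in simulation is domain randomization, in which physical and visual parameters are resampled for every episode. The following is a minimal sketch of that idea, not a description of any particular simulator: the `SimScenario` fields, their ranges, and the function names are all hypothetical and chosen only for illustration.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SimScenario:
    """One randomized simulation episode (all fields are illustrative assumptions)."""
    friction: float          # surface friction coefficient
    object_mass_kg: float    # mass of the object to be handled
    light_intensity: float   # relative scene brightness
    object_offset_m: float   # positional error of the object along one axis


def sample_scenario(rng: random.Random) -> SimScenario:
    """Draw each parameter uniformly from a hypothetical plausible range."""
    return SimScenario(
        friction=rng.uniform(0.2, 1.0),
        object_mass_kg=rng.uniform(0.1, 5.0),
        light_intensity=rng.uniform(0.3, 1.5),
        object_offset_m=rng.uniform(-0.05, 0.05),
    )


def generate_scenarios(count: int, seed: int = 0) -> List[SimScenario]:
    """Generate a reproducible batch of randomized scenarios."""
    rng = random.Random(seed)
    return [sample_scenario(rng) for _ in range(count)]


if __name__ == "__main__":
    for scenario in generate_scenarios(3):
        print(scenario)
```

Seeding the generator keeps a batch reproducible, so a failure observed in one scenario can be replayed exactly. A real pipeline would pass each scenario to a physics simulator, which is outside the scope of this sketch.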

Natural interaction is another trend turning machines into accessible tools for the general public. We are moving beyond code and specialized interfaces toward a world where verbal commands and simple gestures suffice. Robots now synthesize visual, tactile, and auditory data simultaneously through multimodal perception. This allows them to understand that a “fragile” box requires a different grip than a steel component, even if the weight is identical. This level of intuition is making the boundary between human intent and robotic execution nearly invisible.

Market Growth and the Economic Impact of Generative Robotics

Data-driven forecasts suggest that the AI-driven robotics market will maintain a substantial compound annual growth rate through 2030. Investment heatmaps indicate that capital is flowing most heavily into surgical robotics and last-mile logistics, where the demand for precision and efficiency is highest. Investors are no longer looking for simple labor replacement; they are seeking labor optimization that can scale without a linear increase in overhead. This financial backing is a vote of confidence in the long-term viability of generative systems.

Performance indicators are shifting from simple speed metrics to more complex measures of return on investment, such as reduced downtime and autonomous problem-solving. A robot that can adapt its own workflow when a sensor fails is far more valuable than one that requires a technician for every minor hiccup. By maximizing precision and minimizing human intervention, companies are seeing a fundamental change in how they calculate the value of their automated fleets. The economic impact is already visible in the stabilization of supply chains that were once vulnerable to labor shortages.

Navigating the Friction: Technological and Strategic Hurdles

Despite the optimism, the computation paradox remains a significant hurdle for mobile platforms. Sophisticated generative models require immense energy and processing power, which often clashes with the need for robots to be lightweight and untethered. Balancing high-performance edge computing with battery longevity is a constant struggle for engineers. Until hardware efficiency catches up with algorithmic complexity, many robots will remain limited by their power cycles or reliance on constant high-bandwidth connections.

The accuracy gap, often manifesting as AI “hallucinations,” presents a life-and-death risk in high-stakes environments like heavy industry. A robot misinterpreting a visual cue could lead to a catastrophic failure or injury. Furthermore, the scarcity of high-quality physical datasets makes it difficult to train models that are truly robust. Unlike text-based AI, which has the entire internet to learn from, physical robots require data that describes the messy, unpredictable nuances of the three-dimensional world, which is much harder to collect and label.

The Rulebook of Autonomy: Governance, Ethics, and Security

Safety protocols are now being built directly into robotic architectures in the form of “fail-safe” mechanisms. When operating in public spaces, a robot must be able to default to a safe state the moment its generative model produces an uncertain or high-risk output.

Data privacy is another growing concern, as robots equipped with high-resolution cameras and sensors move through our private offices and homes. Ensuring that the information captured is processed locally and not leaked into broader datasets is a primary focus for security experts.

Liability and accountability laws are entering a new phase of complexity as generative systems begin making autonomous errors. If a robot makes a decision that leads to property damage, determining whether the fault lies with the hardware manufacturer, the software developer, or the user is a legal labyrinth. Global standardization efforts are currently underway to align these regulations, ensuring that a robot manufactured in one country can safely and legally operate in another. This legal clarity is a key requirement for the mass adoption of autonomous systems.
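The fail-safe behavior described at the start of this section, defaulting to a safe state when the model's output is uncertain or high-risk, can be sketched as a simple gate placed between the generative model and the actuators. This is only an illustration under stated assumptions: the `Proposal` type, the `gate` function, and the threshold values are hypothetical, and a certified safety system would involve far more than a pair of numeric checks.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    EXECUTE = "execute"
    SAFE_STOP = "safe_stop"


@dataclass(frozen=True)
class Proposal:
    """A command proposed by the generative model (hypothetical structure)."""
    command: str
    confidence: float  # model's self-reported confidence, expected in [0, 1]
    risk: float        # estimated risk of the command, expected in [0, 1]


def gate(proposal: Proposal,
         min_confidence: float = 0.9,
         max_risk: float = 0.2) -> Action:
    """Allow execution only when both checks pass; otherwise stop safely.

    The condition is written positively so that malformed values such as
    NaN fail the comparison and fall through to SAFE_STOP.
    """
    if proposal.confidence >= min_confidence and proposal.risk <= max_risk:
        return Action.EXECUTE
    return Action.SAFE_STOP


if __name__ == "__main__":
    print(gate(Proposal("move_arm", confidence=0.95, risk=0.05)))
    print(gate(Proposal("cross_walkway", confidence=0.60, risk=0.05)))
```

The design choice worth noting is that the safe state is the default branch: execution has to be earned by passing every check, rather than stopping having to be triggered by a detected fault.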

The Road Ahead: Toward Ubiquitous and Collaborative Systems

The rise of smart urbanism will likely see autonomous robots becoming the invisible backbone of city infrastructure. In the coming years, these systems could manage resource distribution and maintain public utilities with minimal human oversight. We are also moving toward a period of human-robot synergy, where the machine is no longer just a tool but a collaborative partner in creative and technical fields. Imagine a laboratory where a robot suggests new molecular combinations or a construction site where the machine adapts the blueprints in real time to account for weather changes.

Mass personalization will allow generative AI to adapt robotic behavior to the unique needs of a specific household or patient. Rather than a one-size-fits-all approach, robots will learn the preferences and routines of their human companions, providing a level of care and assistance that feels truly intuitive. Looking even further, generative design may lead to self-repairing systems that can diagnose their own mechanical wear and 3D-print their own replacement parts. This level of self-sufficiency would mark the ultimate achievement in robotic autonomy.

Synthesizing the Future: Final Outlook on Generative Robotics

The convergence of generative intelligence and physical automation is fundamentally altering the trajectory of global productivity. Success in this field depends on the development of “world models” that allow machines to understand physics as intuitively as humans do. Developers should prioritize the creation of open-source physical datasets to overcome current data scarcity issues. Strategic investments must shift toward modular hardware that can be easily updated as software models continue to evolve. Ultimately, the industry is moving toward a collaborative model in which the primary goal is augmenting human capability rather than simple replacement. If that shift holds, the benefits of automation can be distributed across the workforce, fostering a more resilient and creative economy.