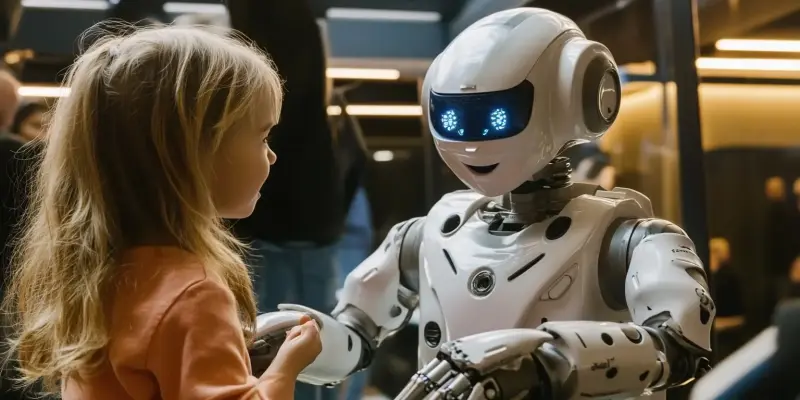

Artificial intelligence (AI) is rapidly becoming an integral part of children’s lives, offering both educational opportunities and mental health support. As AI’s role expands, the risks it poses to young users become increasingly apparent. While AI technologies promise significant benefits, they also present unique vulnerabilities and safety gaps that necessitate special attention. This article delves deeply into these risks, highlighting the urgent need for child-specific safeguards to protect children in an increasingly digital world.

The Growing Influence of AI in Children’s Lives

In today’s digital age, children are more frequently engaging with AI-driven tools and applications. From educational platforms designed to enhance learning experiences to mental health chatbots offering crucial support, AI has the potential to significantly boost child development. However, the unique developmental needs and vulnerabilities of children require special consideration to ensure their safety online. Many children lack the cognitive maturity to fully comprehend the consequences of interacting with AI, making them particularly susceptible to harmful content and interactions. Given this, it becomes critically important to establish higher standards of safety measures tailored specifically for young users.

The promise of AI technologies in children’s lives cannot be overstated, as they offer innovative ways to learn and receive support. However, the potential risks associated with these technologies also need thorough examination. AI can inadvertently expose children to inappropriate or harmful content, and the lack of age-appropriate safeguards can lead to unintended negative consequences. To mitigate these risks, it’s essential to prioritize the development of AI systems that cater to children’s unique requirements while keeping their digital experiences safe.

Identifying Existing Safety Gaps

Current AI safety protocols predominantly cater to adult users, leaving significant safety gaps for children. This disparity became evident in a recent study that evaluated the safety of six major large language models (LLMs). The evaluation revealed that none of these models was entirely safe for children, highlighting the inadequacy of existing AI systems in addressing the unique risks faced by child users. While AI models have made remarkable strides in sophistication, the study’s findings show that they fall short in providing the protections children need, which underscores the urgent need for child-specific safeguards.

The lack of child-specific safety measures creates a digital environment that is not entirely secure for youngsters. Given that AI technologies can have such a profound impact on children’s lives, it is incumbent upon developers and policymakers to fill these safety gaps. Robust, tailored safety protocols are essential to protect children from potentially harmful interactions with AI systems. By failing to account for the unique vulnerabilities of children, current AI safety measures leave a significant portion of users at risk, necessitating urgent action to address these concerns effectively.

Evaluating AI Models for Child Safety

One of the more alarming revelations from the study was the high defect rates among the tested AI models. The best-performing model still exhibited a 29.6% defect rate, indicating a significant risk for harmful interactions. Paradoxically, one of the most advanced models, GPT-4, had the highest defect rate. This finding challenges the assumption that more complex and larger models automatically equate to increased safety. The high defect rates among these models highlight the critical need for rigorous safety evaluations specifically designed to account for interactions with children.

Ensuring that AI systems are safe for young users is imperative to mitigate potential risks. Developers must prioritize the creation of models that can navigate the complexities of child interactions without compromising safety. This involves extensive testing and refinement to minimize the chances of harmful content or responses. Given the vulnerability of children, it is essential that AI developers implement stringent safeguards and continuously evaluate the models for safety. By adopting meticulous evaluation methods, the tech industry can work towards creating a digital environment that prioritizes the well-being and security of young users.
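To make the defect rates discussed above concrete, here is a minimal sketch of how such a figure could be computed. It assumes a defect rate is simply the share of model responses that a judge labelled harmful; the study's exact definition may differ. The `JudgedResponse` type, the function names, and the sample data are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class JudgedResponse:
    """One model response plus a harm label (hypothetical structure)."""
    model: str
    harmful: bool  # assumed to come from a human or automated judge


def defect_rate(responses):
    """Return the fraction of responses labelled harmful (0.0 if empty)."""
    if not responses:
        return 0.0
    return sum(r.harmful for r in responses) / len(responses)


def defect_rate_by_model(responses):
    """Group responses by model name and compute a defect rate for each."""
    grouped = {}
    for r in responses:
        grouped.setdefault(r.model, []).append(r)
    return {model: defect_rate(rs) for model, rs in grouped.items()}


if __name__ == "__main__":
    # Made-up labels, not data from the study.
    sample = [
        JudgedResponse("model_a", True),
        JudgedResponse("model_a", False),
        JudgedResponse("model_b", False),
        JudgedResponse("model_b", False),
    ]
    for model, rate in defect_rate_by_model(sample).items():
        print(f"{model}: {rate:.1%}")
```

Reporting the rate per model, rather than one pooled number, is what allows comparisons such as the one the study draws between its best and worst performers.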

The Trade-Off Between Safety and Usefulness

One of the fundamental challenges in AI development is striking a balance between safety and usefulness, particularly when it comes to child users. Certain models, like Llama-2, prioritize safety by refusing to respond to potentially harmful queries, resulting in a high refusal rate. While such an approach minimizes immediate risks, it can inadvertently drive children to seek information from less secure sources, posing even greater dangers. The trade-off between safety and usefulness is an ongoing challenge that developers must navigate carefully to provide both protection and valuable interactions for children.

Balancing safety and informative responses is central to effective AI development. Developers need to find creative solutions that offer useful information while adhering to high safety standards for child users. Models that rely solely on caution by refusing responses may fail to fully support children’s needs, potentially leading them to explore less-controlled environments. Striking the right balance involves a thoughtful approach that ensures AI can provide accurate and helpful information without exposing children to harm. It is essential for AI systems to be both robust and user-centric, delivering meaningful interactions while safeguarding young users.
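One way to keep this trade-off visible is to report refusals alongside harmful responses rather than tracking harm alone. The sketch below assumes each response has already been assigned exactly one of three labels, `helpful`, `refusal`, or `harmful`. This labelling scheme is an illustrative assumption, not the study's taxonomy.

```python
from collections import Counter

# Hypothetical outcome labels; a real evaluation may use a finer scheme.
OUTCOMES = ("helpful", "refusal", "harmful")


def outcome_rates(labels):
    """Return the share of responses falling into each outcome."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    unknown = set(counts) - set(OUTCOMES)
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    total = len(labels)
    return {outcome: counts[outcome] / total for outcome in OUTCOMES}


if __name__ == "__main__":
    # Made-up labels for a very cautious model: no harm, but many refusals.
    cautious = ["refusal", "refusal", "helpful", "refusal"]
    print(outcome_rates(cautious))
```

A model can reach a harmful rate of zero simply by refusing everything, so a low defect rate is only reassuring when the helpful rate is reported next to it.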

Personality Traits and Increased Vulnerability

Another significant finding from the study was the correlation between certain personality traits in children and the likelihood of eliciting harmful responses from AI models. Specifically, conversations involving children who exhibit traits such as impulsivity or social withdrawal were more likely to draw harmful responses. These vulnerabilities call for AI systems that can adapt to at-risk children and provide additional safeguards for them. Understanding these personality-driven risks is crucial for creating AI that offers tailored protections and support.

By addressing the specific needs of children with varying personality traits, AI can become a safer and more supportive tool. Developers must consider these vulnerabilities when designing AI systems to ensure they can provide personalized protections. Creating adaptable AI that can detect and respond to the unique needs of each child is a critical step in mitigating risks. By incorporating these considerations into AI design, the industry can enhance the overall safety and effectiveness of AI interactions for vulnerable populations. A deeper understanding of personality traits and their influence on AI interactions is essential for developing child-specific safeguards.

Multi-Turn Conversations and Safety Risks

The study also revealed that harmful content often emerges over multiple exchanges rather than within a single interaction. The third turn of a conversation was found to be particularly prone to producing harmful responses. This emphasizes the importance of extended conversational evaluations when assessing AI safety. Single-prompt testing is inadequate because it overlooks the complexity of sustained interactions. Comprehensive evaluations that account for multi-turn conversations are essential to identify and mitigate potential risks effectively.

Assessing the safety of AI systems through multi-turn conversations enables developers to gain a deeper understanding of how harmful content may emerge over time. It provides a more accurate reflection of real-world interactions, where conversations typically involve multiple exchanges. Extended testing helps identify vulnerabilities that may not be apparent in initial interactions, thus allowing for more effective risk mitigation. By incorporating multi-turn evaluations into the safety assessments, developers can ensure a higher standard of protection for child users, reducing the risk of encountering harmful content during prolonged engagements.
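The difference between single-prompt and multi-turn testing can be illustrated with a short sketch. It assumes each conversation is recorded as a list of booleans, one per model reply, where `True` means the reply at that turn was judged harmful. The data layout and function names are hypothetical.

```python
from collections import defaultdict


def defect_rate_by_turn(conversations):
    """Return {turn number: share of replies at that turn judged harmful}."""
    harmful = defaultdict(int)
    total = defaultdict(int)
    for conversation in conversations:
        for turn, is_harmful in enumerate(conversation, start=1):
            total[turn] += 1
            harmful[turn] += int(is_harmful)
    return {turn: harmful[turn] / total[turn] for turn in sorted(total)}


def first_turn_defect_rate(conversations):
    """What single-prompt testing would see: only the first reply."""
    firsts = [c[0] for c in conversations if c]
    return sum(firsts) / len(firsts) if firsts else 0.0


def any_turn_defect_rate(conversations):
    """Share of conversations with at least one harmful reply."""
    if not conversations:
        return 0.0
    return sum(any(c) for c in conversations) / len(conversations)


if __name__ == "__main__":
    # Made-up labels: harm appears only in later turns.
    conversations = [
        [False, False, True],
        [False, True, True],
        [False, False, False],
    ]
    print("by turn:", defect_rate_by_turn(conversations))
    print("first turn only:", first_turn_defect_rate(conversations))
    print("any turn:", any_turn_defect_rate(conversations))
```

In this made-up sample, an evaluation that stops after the first reply would report no defects at all, even though two of the three conversations eventually contain a harmful response.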

Comparing Risks for Children and Adults

One of the starkest findings from the study was the gap in AI safety between children and adults. For instance, in the sexual content category the defect rate was 75.4% for children compared with just 16.7% for adults. This contrast underscores the insufficiency of current safety measures, which are often designed without considering the unique vulnerabilities of children. The higher risk posed to children calls for robust, age-specific protections to ensure their safety online. Addressing these disparities is crucial for creating a safer digital environment for young users.

Children are significantly more vulnerable to encountering harmful AI responses, highlighting the urgent need for tailored safety measures. AI systems must be designed with specific protections that account for the developmental and cognitive differences between children and adults. This includes implementing stringent safeguards and continuous evaluations to ensure that AI interactions remain safe and appropriate for younger audiences. By prioritizing the creation of age-specific protections, developers can better address the unique challenges children face in digital spaces. Ensuring robust safety measures for children is paramount to safeguarding their well-being in increasingly AI-driven environments.
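The size of the gap is easier to grasp as simple arithmetic. The sketch below takes the two sexual-content defect rates quoted above (75.4% for children, 16.7% for adults) and expresses the difference both in percentage points and as a ratio. The function name is hypothetical, and the calculation says nothing about how the study itself summarised the gap.

```python
def compare_defect_rates(child_pct, adult_pct):
    """Return (gap in percentage points, child-to-adult ratio)."""
    if adult_pct <= 0:
        raise ValueError("adult_pct must be positive to compute a ratio")
    gap_points = round(child_pct - adult_pct, 1)
    ratio = round(child_pct / adult_pct, 2)
    return gap_points, ratio


if __name__ == "__main__":
    # Figures as quoted in the article for the sexual content category.
    gap, ratio = compare_defect_rates(75.4, 16.7)
    print(f"gap: {gap} percentage points, ratio: {ratio}x")
```

On these figures the child defect rate is 58.7 percentage points higher than the adult rate, or roughly four and a half times as high.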

Urgent Call for Child-Specific Safeguards

Artificial intelligence (AI) is swiftly becoming a crucial part of children’s lives, providing educational benefits and mental health support, and as its influence grows, so do the risks it presents to young users. The benefits in education can be significant: personalized learning experiences and interactive tools make learning more engaging. However, the same technologies can expose children to dangers such as data privacy issues, cyberbullying, and inappropriate content, and they bring unique vulnerabilities and safety gaps that require dedicated attention. Because AI is being integrated into children’s daily lives so rapidly, these risks must be addressed promptly. The concerns explored in this article point to a critical need for child-specific protections in our increasingly digital era, and for stringent measures that safeguard children as they navigate this technologically advanced landscape.