The intricate dance of billions of microscopic transistors within a single sliver of silicon ranks among the most sophisticated achievements of modern engineering, setting the pace of technological progress and defining the boundaries of what software can achieve. As the primary engine of any computing system, the Central Processing Unit has grown from a simple arithmetic and logic device into a heterogeneous powerhouse that often integrates specialized silicon for artificial intelligence, graphics, and secure enclave processing. This evolution is not merely a story of miniaturization but of architectural ingenuity, as designers have repeatedly found ways to extract more performance from a given area of silicon. It has been driven by a relentless pursuit of efficiency, in which every milliwatt of power and every nanosecond of latency is scrutinized to meet the demands of an increasingly digital society.

In the broader technological landscape, the CPU remains the cornerstone of innovation, even as specialized accelerators like GPUs and TPUs gain prominence. While specific tasks are offloaded to these specialized units, the CPU retains its role as the orchestrator of the entire system, managing the complex interactions between hardware and software. The relevance of this technology is underscored by its ubiquity; it powers the massive server farms that facilitate global connectivity and the tiny embedded sensors that monitor critical infrastructure. Understanding the principles that govern processor design is essential for grasping how the next decade of computing will unfold, particularly as the industry reaches the physical limits of traditional silicon manufacturing.

The Foundations of Processor Technology

At the heart of every processor lies the Metal-Oxide-Semiconductor Field-Effect Transistor (MOSFET), a switch so small that on a leading-edge process hundreds of millions of them fit in an area the size of a pinhead. Transistors are combined into logic gates, the fundamental units of digital logic, which implement the binary language of ones and zeros underlying all modern computation. At its core, CPU design means arranging these gates to perform Boolean operations, which are then composed into circuits that carry out arithmetic and more complex functions. This foundation was established decades ago, and the basic operating principle has not changed, even as feature sizes have shrunk from micrometers to tens of nanometers, with some layers only a few atoms thick.

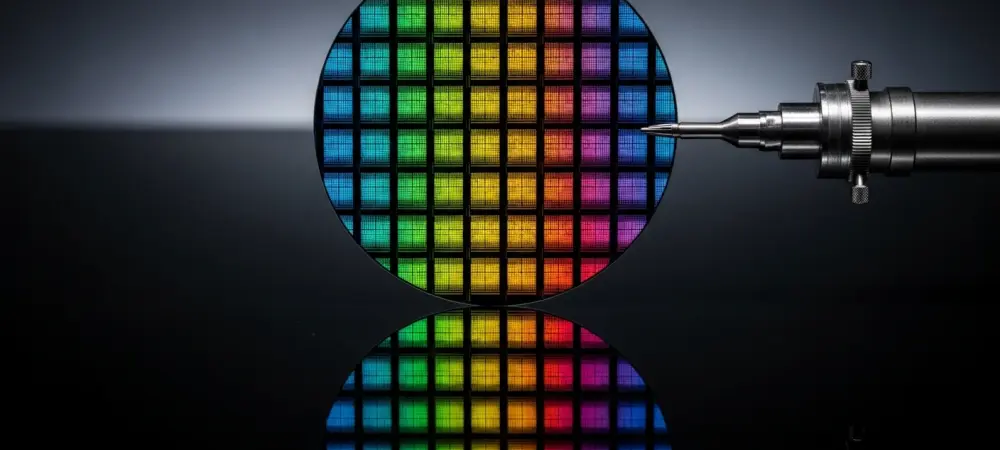

The production of these components is a feat of extreme precision: the most advanced process nodes rely on extreme ultraviolet (EUV) lithography, which uses 13.5 nm light to pattern silicon wafers at resolutions far beyond the reach of visible light. This manufacturing context is critical because it dictates the thermal and electrical characteristics of the final product. As the industry has evolved, the focus has shifted from merely increasing the number of transistors to optimizing how they are arranged, minimizing the distance data must travel. This shift marks the transition from simple logic gates to sophisticated integrated circuits whose physical layout matters as much as the speed of the switches themselves, ensuring that the processor can handle the vast amounts of data required by contemporary applications.

Architectural Milestones and Engineering Principles

The Fetch-Decode-Execute Cycle and Digital Logic

The fundamental operation of a processor is governed by a repetitive sequence known as the fetch-decode-execute cycle, the rhythmic heartbeat of all computing tasks. During the fetch phase, the processor retrieves the next instruction from memory (in practice, usually from its instruction cache). This step has become harder as the gap between CPU speed and memory latency has widened. To hide this delay, modern architectures employ prefetchers that predict which instructions and data will be needed next and pull them into the fast on-chip caches before the program explicitly requests them. This stage is crucial because the whole processor can only run as fast as it can be fed with instructions and data.
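To make the prefetching idea concrete, here is a minimal sketch of a stride prefetcher, one common family of prediction scheme. The class name, the `degree` and `confirmations` parameters, and the policy itself are illustrative assumptions rather than any particular processor's design. Once the same gap between consecutive addresses has been seen twice in a row, it proposes the next few addresses along that gap.

```python
from typing import List, Optional


class StridePrefetcher:
    """Toy stride prefetcher (illustrative, not any real CPU's design).

    It watches the sequence of demand addresses. Once the same non-zero
    stride has been seen `confirmations` times in a row, it predicts the
    next `degree` addresses along that stride so they can be fetched early.
    """

    def __init__(self, degree: int = 2, confirmations: int = 2) -> None:
        self.degree = degree                # addresses to fetch ahead (assumed)
        self.confirmations = confirmations  # repeats needed before trusting a stride
        self.last_addr: Optional[int] = None
        self.last_stride: Optional[int] = None
        self.repeats = 0                    # consecutive times last_stride was seen

    def access(self, addr: int) -> List[int]:
        """Record one demand access and return the addresses to prefetch."""
        prefetches: List[int] = []
        if self.last_addr is not None:
            stride = addr - self.last_addr
            # Count how many times in a row this exact stride has occurred.
            self.repeats = self.repeats + 1 if stride == self.last_stride else 1
            self.last_stride = stride
            if stride != 0 and self.repeats >= self.confirmations:
                prefetches = [addr + stride * k for k in range(1, self.degree + 1)]
        self.last_addr = addr
        return prefetches


# Walking an array of 64-byte elements produces a constant stride.
pf = StridePrefetcher()
for a in (100, 164, 228, 292):
    print(a, pf.access(a))
```

Real hardware prefetchers track many streams at once, typically keyed by instruction address or memory region, and issue requests at cache-line granularity. This sketch follows a single stream.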

Once an instruction is fetched, the decoding stage translates its raw binary encoding into the control signals that direct the rest of the processor. In x86 architectures, this often involves breaking complex instructions into simpler micro-operations, which lets a decades-old instruction set run on a streamlined internal engine. The execution phase then carries out the actual work, whether adding two integers or comparing values to resolve a branch. The cycle is paced by an internal clock, but a processor’s performance depends not only on its clock frequency. It also depends on how many instructions the processor completes per clock tick on average, a measure known as instructions per cycle (IPC). To a first approximation, throughput is IPC multiplied by clock frequency.
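The cycle can be illustrated with a toy interpreter. The sketch below assumes a made-up four-register machine with five hypothetical instructions (`LI`, `ADD`, `SUBI`, `BNZ`, `HALT`) stored as text. Each loop iteration performs one fetch, one decode, and one execute. Real hardware overlaps these phases in a pipeline rather than running them strictly one after another.

```python
from typing import Dict, List


def run(program: List[str], max_steps: int = 10_000) -> Dict[str, int]:
    """Toy fetch-decode-execute loop for a made-up five-instruction machine.

    Hypothetical instruction set (operands separated by spaces):
      LI   rd imm     rd <- imm
      ADD  rd rs      rd <- rd + rs
      SUBI rd imm     rd <- rd - imm
      BNZ  rs target  if rs != 0, jump to instruction index `target`
      HALT            stop and return the register file
    """
    regs = {f"r{i}": 0 for i in range(4)}  # four general-purpose registers
    pc = 0                                 # program counter
    for _ in range(max_steps):
        # FETCH: read the instruction the program counter points at.
        instr = program[pc]
        pc += 1  # by default, continue with the next instruction
        # DECODE: split the instruction into an opcode and its operands.
        op, *args = instr.split()
        # EXECUTE: carry out the operation and write back any result.
        if op == "LI":
            regs[args[0]] = int(args[1])
        elif op == "ADD":
            regs[args[0]] += regs[args[1]]
        elif op == "SUBI":
            regs[args[0]] -= int(args[1])
        elif op == "BNZ":
            if regs[args[0]] != 0:
                pc = int(args[1])
        elif op == "HALT":
            return regs
        else:
            raise ValueError(f"unknown opcode {op!r}")
    raise RuntimeError("step limit reached without HALT")


# Sum 5 + 4 + 3 + 2 + 1 into r0 with a counting loop.
prog = [
    "LI r0 0",     # 0: accumulator
    "LI r1 5",     # 1: loop counter
    "ADD r0 r1",   # 2: r0 += r1
    "SUBI r1 1",   # 3: r1 -= 1
    "BNZ r1 2",    # 4: repeat from index 2 while r1 != 0
    "HALT",        # 5
]
print(run(prog)["r0"])  # 15
```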

Internal Workings of the Modern Processor Core

A closer look at the anatomy of a core reveals several distinct execution units. The Arithmetic Logic Unit and the Floating-Point Unit handle the heavy lifting of mathematical computations, while the Load/Store unit manages the constant flow of data between registers and the cache hierarchy. Modern cores follow a “superscalar” design, meaning they contain multiple copies of these execution units so that several instructions can be issued in the same clock cycle. Scheduling logic dispatches ready instructions to free units. This keeps the units busy and reduces the idle time that would otherwise result from waiting for a single instruction to finish.
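The effect of multiple execution units on instructions per cycle can be estimated with a simple model. The sketch below assumes an in-order superscalar core in which `width` stands for the number of identical execution units and every instruction has a fixed latency. An instruction may issue only once its source registers hold valid values. The instruction tuples and register names are hypothetical.

```python
from typing import Dict, List, Tuple

# (destination register, source registers, latency in cycles); hypothetical format
Instr = Tuple[str, Tuple[str, ...], int]


def simulate(program: List[Instr], width: int) -> int:
    """Cycles until the last result is ready on a toy in-order superscalar core.

    Up to `width` instructions issue per cycle (one per execution unit), in
    program order. An instruction issues only when all of its source
    registers are ready. Register renaming and unit types are ignored.
    """
    ready_at: Dict[str, int] = {}  # register -> cycle its value is available
    cycle = i = finish = 0
    while i < len(program):
        issued = 0
        while i < len(program) and issued < width:
            dest, srcs, latency = program[i]
            if any(ready_at.get(r, 0) > cycle for r in srcs):
                break  # in-order: a stalled instruction blocks those behind it
            ready_at[dest] = cycle + latency
            finish = max(finish, cycle + latency)
            issued += 1
            i += 1
        cycle += 1
    return finish


# Four independent operations, then two that combine their results.
prog = [
    ("a", ("x",), 1),
    ("b", ("y",), 1),
    ("c", ("x",), 1),
    ("d", ("y",), 1),
    ("e", ("a", "b"), 1),
    ("f", ("c", "d"), 1),
]
for w in (1, 2, 4):
    cycles = simulate(prog, w)
    print(f"width {w}: {cycles} cycles, IPC {len(prog) / cycles:.2f}")
```

Widening from one to two units halves the cycle count here only because the first four instructions are independent. A chain of dependent instructions gains nothing from extra units, which is why cores also look further ahead for independent work, as described next.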

To further enhance performance, modern processors use out-of-order execution. The chip examines a window of upcoming instructions and executes each one as soon as its inputs are available, rather than strictly following the order written in the program. This requires substantial bookkeeping, typically a reorder buffer that retires results in the original program order, so that the final outcome is identical to sequential execution. Out-of-order execution is paired with branch prediction, in which the CPU guesses the outcome of a conditional “if-then” statement and speculatively continues down the predicted path, discarding that work if the guess proves wrong. Together, these techniques keep the execution units supplied with work across branches and slow memory accesses. Their significance lies in hiding the inherent latencies of the hardware, making the system feel much faster and more responsive to the user.
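Branch predictors come in many forms, and a classic textbook building block is the saturating counter. The sketch below compares a 1-bit and a 2-bit counter on a hypothetical loop branch that is taken nine times and then falls through, with that pattern repeated ten times. It is a simplification that tracks a single branch with no history tables. The function name and the outcome pattern are illustrative assumptions.

```python
from typing import Sequence


def predictor_accuracy(outcomes: Sequence[bool], bits: int = 2) -> float:
    """Fraction of branch outcomes correctly predicted by one n-bit
    saturating counter (illustrative model of a single branch)."""
    max_state = (1 << bits) - 1
    threshold = 1 << (bits - 1)   # states >= threshold predict "taken"
    state = threshold             # start in the weakest "taken" state
    correct = 0
    for taken in outcomes:
        if (state >= threshold) == taken:
            correct += 1
        # Move one step toward the actual outcome, saturating at both ends.
        state = min(state + 1, max_state) if taken else max(state - 1, 0)
    return correct / len(outcomes)


# A loop branch: taken 9 times, then not taken once, repeated 10 times.
loop_branch = ([True] * 9 + [False]) * 10
print(predictor_accuracy(loop_branch, bits=1))  # 0.81
print(predictor_accuracy(loop_branch, bits=2))  # 0.9
```

The 2-bit counter mispredicts only the loop exit. The 1-bit version also mispredicts the first iteration after every exit. This tolerance for a single surprise is why the 2-bit scheme is the usual starting point. Modern predictors combine many such counters with records of recent branch history.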

The Transition from 16-bit to 64-bit Computing

One of the most transformative milestones in architectural history was the shift to 64-bit computing, a transition that fundamentally altered the memory landscape of the industry. In the 16-bit and 32-bit eras, processors were limited by the amount of memory they could directly address. A 32-bit address can name only 2^32 bytes, so each program was confined to a four-gigabyte address space. (Extensions such as x86 Physical Address Extension let a system install more physical memory, but a single process still saw at most four gigabytes.) As software became more resource-intensive and datasets grew, this limit became a significant bottleneck for professional workstations and consumer machines alike. The x86-64 standard, pioneered by AMD and later adopted by Intel, removed it by widening addresses to 64 bits. This gives a theoretical space of 2^64 bytes (16 exbibytes, roughly 17 billion gigabytes). Current implementations expose 48-bit or 57-bit virtual addresses, which is still far more than typical machines install.
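The address-space figures follow directly from powers of two. The short sketch below assumes byte-addressable memory and binary units (1 KiB = 1024 bytes). It prints the space reachable with 16-, 32-, 48-, 57-, and 64-bit addresses. The helper name is illustrative.

```python
UNITS = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]


def human_size(n_bytes: int) -> str:
    """Format a power-of-two byte count using binary (1024-based) units."""
    unit = 0
    while n_bytes >= 1024 and unit < len(UNITS) - 1:
        n_bytes //= 1024  # exact for powers of two
        unit += 1
    return f"{n_bytes} {UNITS[unit]}"


# A byte-addressable machine with b-bit addresses can name 2**b bytes.
for bits in (16, 32, 48, 57, 64):
    print(f"{bits}-bit: {human_size(2 ** bits)}")
# Prints, one per line:
# 16-bit: 64 KiB
# 32-bit: 4 GiB
# 48-bit: 256 TiB
# 57-bit: 128 PiB
# 64-bit: 16 EiB
```

In gigabyte terms, 2^64 bytes is 2^34 GiB, or 17,179,869,184 GiB.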

This transition was about more than memory. x86-64 also doubled the number of general-purpose registers from eight to sixteen, which directly improved the efficiency of many algorithms. With more “workspaces” inside the CPU itself, compilers could keep more values in registers instead of constantly moving data to and from slower main memory. Crucially, x86-64 preserved backward compatibility: a 64-bit operating system can still run 32-bit applications in a compatibility mode, so the transition did not alienate the existing user base. Today, this standard is the bedrock of PC and server computing, enabling everything from high-fidelity gaming to complex scientific simulations that would have been impossible under the old memory constraints.

Recent Innovations and Emerging Trends

The current landscape of processor design is characterized by a move away from monolithic chips toward a modular philosophy known as chiplet architecture. Instead of manufacturing a single, massive piece of silicon, companies now combine several smaller dies in a single package, connected by high-speed interconnects. The key advantage is manufacturing yield. Defects land randomly on a wafer, so a large die is more likely to contain one, and a defective small chiplet wastes far less silicon than a scrapped large die. Chiplets also let designers mix manufacturing processes, using the most advanced nodes for the compute cores while building input-output controllers and memory interfaces on more mature, cost-effective nodes.
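The yield argument can be quantified with the simplest textbook model. This model assumes defects are scattered randomly (Poisson-distributed), so that the fraction of defect-free dies is Y = exp(-A × D0) for die area A and defect density D0. The sketch below compares one hypothetical 600 mm² monolithic die with four 150 mm² chiplets at an assumed D0 of 0.1 defects/cm². All numbers are illustrative. The model ignores packaging cost, die-to-die interface overhead, and the common practice of salvaging partly defective dies.

```python
import math


def poisson_yield(area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies with zero defects: Y = exp(-A * D0).
    Area is converted from mm^2 to cm^2 to match the defect density."""
    return math.exp(-(area_mm2 / 100.0) * defects_per_cm2)


def silicon_per_good_product(die_mm2: float, dies: int, d0: float) -> float:
    """Expected wafer area (mm^2) spent per working product, assuming every
    die is tested and bad ones are discarded individually before packaging."""
    return dies * die_mm2 / poisson_yield(die_mm2, d0)


D0 = 0.1  # defects per cm^2 (assumed, for illustration)
mono = silicon_per_good_product(600.0, 1, D0)   # one large die
chips = silicon_per_good_product(150.0, 4, D0)  # four chiplets, same total area
print(f"monolithic yield {poisson_yield(600.0, D0):.1%}, "
      f"chiplet yield {poisson_yield(150.0, D0):.1%}")
print(round(mono), round(chips))  # 1093 697
```

Under these assumptions, the chiplet design consumes roughly a third less wafer area per working product, because each defect scraps only 150 mm² of silicon instead of 600 mm².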

Another significant trend is heterogeneous computing, in which different types of cores share the same chip so that each workload runs on the hardware best suited to it. “Hybrid” architectures pair high-performance cores with high-efficiency cores. The operating system, often guided by hints from the hardware, moves tasks between them according to how much speed or power they demand. This matters most in mobile devices and laptops, where battery life is a primary concern. In addition, dedicated Neural Processing Units have become common in mobile and laptop processors. They provide hardware acceleration for artificial intelligence tasks such as image recognition, language translation, and real-time noise cancellation. Together, these trends point toward a more specialized and efficient future in which the CPU is a collection of diverse tools rather than a one-size-fits-all engine.
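The idea of steering work between core types can be sketched as a placement policy. The function below is a hypothetical greedy heuristic, not the algorithm of any real operating system or hardware scheduler. Each task carries an assumed demand score between 0 and 1. Demanding tasks prefer performance (P) cores, while light tasks prefer efficiency (E) cores. Tasks spill over to the other core type when their preferred type is fully occupied.

```python
from typing import Dict, List, Tuple


def assign(tasks: List[Tuple[str, float]], p_cores: int, e_cores: int,
           threshold: float = 0.5) -> Dict[str, str]:
    """Hypothetical one-shot placement of tasks onto P-cores and E-cores.

    Tasks with demand >= threshold prefer a P-core, others an E-core.
    If the preferred type is full the task falls back to the other type,
    and if no core is free it is marked "queued".
    """
    free = {"P": p_cores, "E": e_cores}
    placement: Dict[str, str] = {}
    # Most demanding tasks choose first, so they get first pick of P-cores.
    for name, demand in sorted(tasks, key=lambda t: t[1], reverse=True):
        preferred = "P" if demand >= threshold else "E"
        fallback = "E" if preferred == "P" else "P"
        for kind in (preferred, fallback):
            if free[kind] > 0:
                free[kind] -= 1
                placement[name] = kind
                break
        else:  # no core of either type is free
            placement[name] = "queued"
    return placement


tasks = [("game", 0.9), ("compile", 0.8), ("mail-sync", 0.1),
         ("music", 0.2), ("indexer", 0.3)]
print(assign(tasks, p_cores=2, e_cores=2))
# {'game': 'P', 'compile': 'P', 'indexer': 'E', 'music': 'E', 'mail-sync': 'queued'}
```

Real schedulers re-evaluate placement continuously as load and thermal conditions change. This one-shot version only illustrates the prefer-then-spill structure.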

Real-World Applications and Sector Deployment

The deployment of advanced CPU architectures has had a profound impact on the datacenter sector, where the demand for compute density and energy efficiency is at an all-time high. Modern server processors offer dozens of cores per socket, enabling cloud providers to host hundreds or even thousands of virtual machines in a single rack. In this environment, hardware-assisted virtualization and security extensions are critical, because they let many tenants share the same hardware while keeping their workloads isolated from one another. This has fueled the growth of software-as-a-service and cloud computing, making substantial computational power accessible to businesses of all sizes without significant on-site infrastructure.

In the automotive industry, the evolution of the CPU has been a primary driver behind autonomous driving and advanced infotainment systems. Modern vehicles increasingly resemble computers on wheels, requiring processors that can handle massive streams of sensor data from cameras, radar, and lidar in real time. These chips must withstand extreme temperatures and vibration. They must also meet stringent functional-safety standards, such as ISO 26262, that do not apply to consumer electronics. With the shift toward software-defined vehicles, a car’s capacity for over-the-air updates and future features has become a major selling point for manufacturers. That capacity depends on the headroom of its processors. These use cases show that processor technology is no longer confined to the desk but is woven into the fabric of transportation and infrastructure.

Technical Hurdles and Industry Constraints

Despite the rapid pace of innovation, the industry faces a looming challenge known as the “power wall”: the heat generated by densely packed transistors becomes impossible to dissipate efficiently. As transistors shrink, leakage current grows as a share of total power. At the same time, switching power rises with both clock frequency and the square of the supply voltage, so pushing clock speeds higher quickly leads to thermal throttling. This has forced a shift in focus from raw frequency to architectural efficiency and multi-core performance. Managing this heat requires increasingly complex cooling, ranging from vapor chambers in laptops to liquid cooling in enterprise servers, adding cost and complexity to the overall system design.
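The link between clock speed and heat can be made concrete with the standard first-order formula for the switching (dynamic) power of CMOS logic, P = α·C·V²·f. Here α is the fraction of capacitance switched per cycle, C is the switched capacitance, V is the supply voltage, and f is the clock frequency. The sketch below uses made-up values for a hypothetical chip and models dynamic power only, not leakage.

```python
def dynamic_power(alpha: float, cap_farads: float, volts: float, hz: float) -> float:
    """First-order CMOS switching power in watts: P = alpha * C * V^2 * f."""
    return alpha * cap_farads * volts ** 2 * hz


# Hypothetical operating point: 20% activity, 50 nF switched, 1.1 V, 4 GHz.
base = dynamic_power(0.2, 50e-9, 1.1, 4.0e9)
# Lowering frequency usually permits a lower voltage; scale both by 80%.
scaled = dynamic_power(0.2, 50e-9, 1.1 * 0.8, 4.0e9 * 0.8)
print(f"{base:.1f} W -> {scaled:.1f} W ({scaled / base:.3f}x)")
```

Cutting frequency and voltage by 20% roughly halves switching power (0.8³ ≈ 0.51) while giving up only 20% of clock speed. This is why spreading work across more, slower cores became more attractive than raising frequency.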

Furthermore, the industry is grappling with the physical limits of silicon itself. As dimensions approach the scale of individual atoms, quantum tunneling lets electrons slip through insulating barriers that are supposed to block them, increasing leakage and making device behavior harder to control. Regulatory issues and geopolitical tensions also play a role. Advanced semiconductor manufacturing is concentrated in a few locations, which leaves the global supply chain vulnerable to disruption. To push past these limits, researchers are exploring new materials such as gallium nitride and carbon nanotubes. They are also developing techniques like 3D stacking, in which layers of logic and memory are placed on top of one another to increase density without expanding the chip’s footprint.

The Future Trajectory of Computing Power

Looking ahead, the trajectory of computing power is likely to be defined by even more radical specialization and the integration of non-traditional technologies. One potential breakthrough is optical computing, beginning with photonic interconnects in which light rather than electricity carries data within and between chips. Optical links could reduce the energy spent moving data and offer much higher bandwidth between the processor’s components. Although on-chip photonics remains largely experimental, it could ease many of the thermal bottlenecks facing the industry and open the way to faster computing beyond the limits of copper wiring.

Another major development on the horizon is the rise of open instruction set architectures such as RISC-V, whose specification anyone can implement without paying licensing fees or royalties. This could spark a wave of innovation in the IoT and embedded space, as more manufacturers can afford to create silicon tailored to their specific needs. We may also see hybrid quantum-classical systems, in which a traditional CPU works in tandem with a quantum co-processor on problems that are currently intractable for classical machines alone. The long-term impact on society could be profound, as these advances enable more sophisticated artificial intelligence, more accurate climate modeling, and ever more pervasive digital connectivity.

Conclusion and Strategic Assessment

This review of CPU architectural evolution reveals a technological landscape defined by constant adaptation and the relentless pursuit of efficiency. The progression from early 16-bit designs to today’s chiplets and AI-integrated systems-on-chip (SoCs) shows that performance gains have come as much from clever engineering as from physical miniaturization. The shift toward heterogeneous computing and specialized accelerators suggests a future in which the CPU acts as a versatile coordinator rather than a solitary worker. While the industry faces significant thermal and physical constraints, ongoing work on new materials and 3D integration offers promising paths forward.

The technology is now highly mature, with modern processors delivering unprecedented performance per watt that has fueled the growth of the mobile and cloud sectors. The impact of these advancements is visible across every major industry, from automotive safety to scientific discovery. Future growth is likely to come from architectural specialization and the adoption of open standards, allowing more customized solutions in niche markets. As the industry moves beyond the limits of traditional scaling, system-level optimization is becoming the primary driver of value, ensuring that the processor remains a central pillar of global technological progress.