Global enterprises are currently navigating a high-stakes digital landscape where the unyielding pursuit of generative intelligence often stands in direct opposition to the fundamental requirement for absolute data privacy. This tension, often referred to as the privacy-performance paradox, forces leadership teams to choose between the expansive capabilities of public cloud resources and the localized security of on-premises environments. While the early years of the current decade favored the agility of public hyperscalers, the necessity of protecting trade secrets and massive proprietary datasets is triggering a significant reevaluation of where AI workloads should reside. The concept of sovereignty is quickly becoming the primary driver for this architectural shift, as organizations move beyond the experimental phase into production-grade generative AI deployment. By regaining control over the entire stack, companies are finding they can achieve the performance required for massive model training without exposing their intellectual property to external entities. This analysis explores how the strategic adoption of VMware Cloud Foundation (VCF) 9.1 is facilitating this transition, offering a roadmap for cost-reduction breakthroughs and the operational readiness necessary to support the impending era of autonomous, agentic AI.

The Strategic Shift Toward Enterprise AI Sovereignty

Market Momentum and Adoption Statistics: The Sovereignty Mandate

A profound transformation is occurring in the data center market, with current data indicating that over 50 percent of global organizations now intend to run AI inferencing within private cloud environments. This movement is fueled by the realization that data exposure risks in shared environments represent an existential threat to competitive advantages. Consequently, the industry is witnessing a rapid transition from legacy perpetual licensing toward subscription-based models, a change that reflects a broader move toward continuous value delivery and deeply integrated software ecosystems.

This pivot is further evidenced by the massive growth in Kubernetes-native workloads that are now being integrated directly into traditional virtualized environments. Modern IT departments are no longer satisfied with silos; instead, they seek a unified fabric that can manage containerized AI microservices alongside established virtual machine infrastructures. This convergence allows for more agile resource allocation, ensuring that the heavy computational demands of large language models do not disrupt standard business operations, while maintaining a single point of governance.

Practical Applications and Technical Implementations: Unified Infrastructure

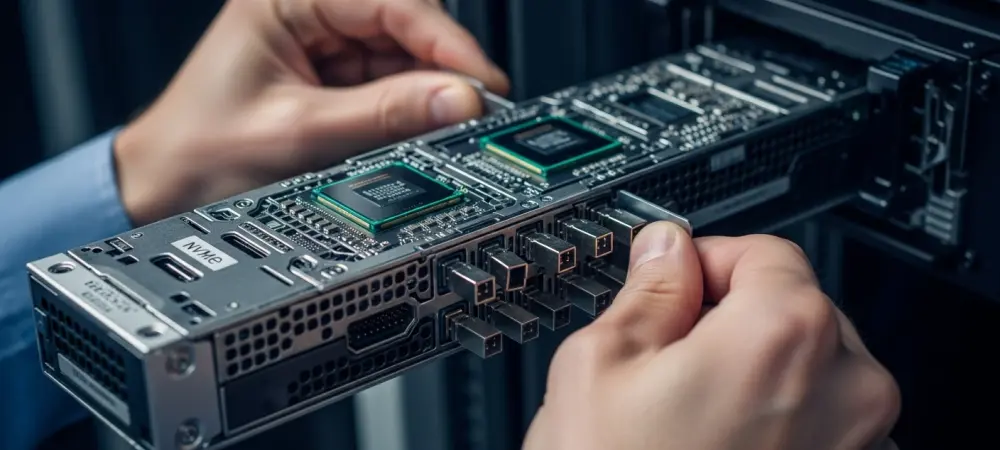

The arrival of VCF 9.1 provides a singular platform capable of managing mixed compute infrastructure across a diverse array of hardware providers, including Nvidia, AMD, and Intel. By abstracting the complexities of underlying silicon, the platform enables organizations to leverage the specific strengths of various GPU and CPU architectures without requiring specialized management tools for each. This level of technical cohesion is vital for high-performance computing environments where even minor bottlenecks can lead to significant delays in model output or training timelines.

Technical innovations like vSphere topology-aware scheduling and NVMe memory tiering are playing a critical role in reducing latency for data-intensive AI operations. Furthermore, the implementation of parallel processing of DRS vMotion allows for the simultaneous migration of multiple virtual machines, ensuring that clusters remain balanced even during resource-heavy training sessions. These advancements represent more than just incremental updates; they are the fundamental building blocks of a high-velocity data center designed for the modern era.
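To make the idea of topology-aware scheduling concrete, the following is a minimal sketch of the general technique, not VMware's implementation. It assumes a hypothetical host model in which each NUMA node reports its free CPU cores and free GPUs, and it places a workload only on a node that can satisfy both requests locally, so that compute and accelerator traffic does not cross node boundaries. The names `NumaNode`, `Workload`, and `place` are illustrative and do not correspond to any vSphere API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class NumaNode:
    """Hypothetical view of one NUMA node on a host (not a vSphere object)."""
    node_id: int
    free_cores: int
    free_gpus: int


@dataclass
class Workload:
    """Hypothetical resource request for a VM or container."""
    name: str
    cores: int
    gpus: int


def place(workload: Workload, nodes: list[NumaNode]) -> Optional[int]:
    """Return the id of a node that can hold the whole workload, or None.

    Among nodes that fit, pick the one with the fewest leftover cores
    (best fit), so larger nodes stay available for larger requests.
    """
    candidates = [
        n for n in nodes
        if n.free_cores >= workload.cores and n.free_gpus >= workload.gpus
    ]
    if not candidates:
        # Spanning several nodes is out of scope for this sketch.
        return None
    best = min(candidates, key=lambda n: (n.free_cores - workload.cores, n.node_id))
    # Reserve the resources on the chosen node.
    best.free_cores -= workload.cores
    best.free_gpus -= workload.gpus
    return best.node_id
```

A production scheduler would also weigh memory locality, PCIe or interconnect distance between devices, and the cost of migrating running workloads; the sketch only shows the core constraint that a request is satisfied within a single topology domain.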

Expert Perspectives on Cost Optimization and Security

The financial narrative surrounding private clouds has shifted dramatically, with recent industry evaluations suggesting that integrated platforms can reduce the total cost of ownership for server hardware by as much as 40 percent. This reduction is not merely a result of lower capital expenditure but stems from the dramatic improvement in resource utilization and the consolidation of management overhead. By optimizing how software interacts with physical assets, enterprises are successfully transforming their infrastructure from a traditional cost center into a high-velocity engine for innovation.

Security architects are simultaneously advocating for zero-trust frameworks specifically tailored to the unique vulnerabilities of AI model weights and data pipelines. In a private cloud context, zero-trust principles ensure that every interaction between a model and a dataset is verified, protecting the integrity of the AI’s decision-making process. This granular level of control is essential for industries like finance and healthcare, where the accuracy of an autonomous model is just as important as the privacy of the data it processes.
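The principle that every model-to-dataset interaction is verified can be illustrated with a small sketch. It assumes a hypothetical setup in which each request carries an HMAC signature over the caller and dataset names, and an explicit allow-list decides which pairs are permitted. The names `ALLOWED`, `sign`, and `authorize`, and the example identifiers `fraud-model` and `transactions`, are invented for illustration and are not part of VCF or any specific zero-trust product.

```python
import hashlib
import hmac

# Hypothetical policy table: which caller may read which dataset.
ALLOWED = {("fraud-model", "transactions")}


def sign(key: bytes, caller: str, dataset: str) -> str:
    """Produce an HMAC-SHA256 signature binding a caller to a dataset."""
    msg = f"{caller}:{dataset}".encode()
    return hmac.new(key, msg, hashlib.sha256).hexdigest()


def authorize(key: bytes, caller: str, dataset: str, signature: str) -> bool:
    """Verify the request on every call; nothing is trusted by default."""
    expected = sign(key, caller, dataset)
    # Constant-time comparison avoids leaking signature bytes via timing.
    if not hmac.compare_digest(expected, signature):
        return False
    # A valid signature is not enough: the pair must also be allowed by policy.
    return (caller, dataset) in ALLOWED
```

In this sketch a request is rejected either when its signature does not match or when the caller is not explicitly permitted to touch the dataset. A real deployment would add request expiry, replay protection, key rotation, and audit logging, none of which are shown here.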

The Future of Agentic AI and Autonomous Infrastructure

The evolution from static models to agentic AI systems marks a turning point for infrastructure design, as these autonomous agents require a resilient and highly responsive foundation to function. Unlike traditional chatbots, agentic systems act independently to solve complex problems, necessitating a private cloud that can dynamically reallocate resources in real time. This demand for responsiveness is pushing the boundaries of software-defined data centers, forcing a shift toward more intelligent and self-healing systems that can anticipate the needs of autonomous software.

Furthermore, a trend of reverse cloud migration is gaining traction as the long-term benefits of private infrastructure begin to outweigh the initial agility of public alternatives. Organizations are finding that by maximizing the lifecycle of their physical assets through intelligent software optimizations, they can achieve better long-term predictability in their budgets. While challenges such as the technical talent gap and the complexity of managing unified platforms at scale remain, the movement toward specialized, high-performance private environments continues to accelerate.

Strategic Summary and the Path Forward

The development of VCF 9.1 and its associated ecosystem provides a bridge between the demands of cutting-edge AI and the strict constraints of corporate security. By reconciling the need for massive performance with the reality of limited budgets, these platforms offer a sustainable path for production-grade intelligence. The strategic shift toward sovereignty is not merely a reaction to security threats but a proactive choice to treat infrastructure as a competitive asset.

Enterprises that prioritize the integration of mixed hardware and zero-trust security are better positioned to deploy autonomous agents while limiting the risk of data leakage. The lesson of this period is that the future of enterprise technology favors those who can maintain control over their data pipelines. Ultimately, a successful transition to private AI infrastructure requires a fundamental rethink of how data centers are managed, leading to a more resilient and efficient digital foundation for the decade ahead.