Dominic Jainy works at the forefront of machine learning and enterprise software architecture. With a deep background in artificial intelligence and blockchain, he has spent years dissecting how large-scale systems can be made more resilient through automated verification. As the industry moves away from simple generative assistants toward autonomous agents, Dominic’s insights into the mechanics of “semi-formal reasoning” provide a vital roadmap for organizations struggling with the high costs of traditional code validation.

This conversation explores the transition from speed-centric AI to accountable systems that prioritize proof over plausibility. We delve into how structured reasoning templates can replace resource-intensive sandbox environments, the technical challenges of identifying shadowed functions in complex frameworks like Django, and the delicate balance between high-fidelity accuracy and the operational friction of increased latency. Throughout the discussion, we examine the shifting role of the human reviewer in a world where AI can construct highly persuasive, yet fundamentally flawed, logical arguments.

Traditional execution-based code validation is resource-heavy and hard to scale across large codebases. How does shifting toward structured reasoning templates change infrastructure requirements for agentic AI, and what steps ensure these logic-based certificates remain accurate without live execution?

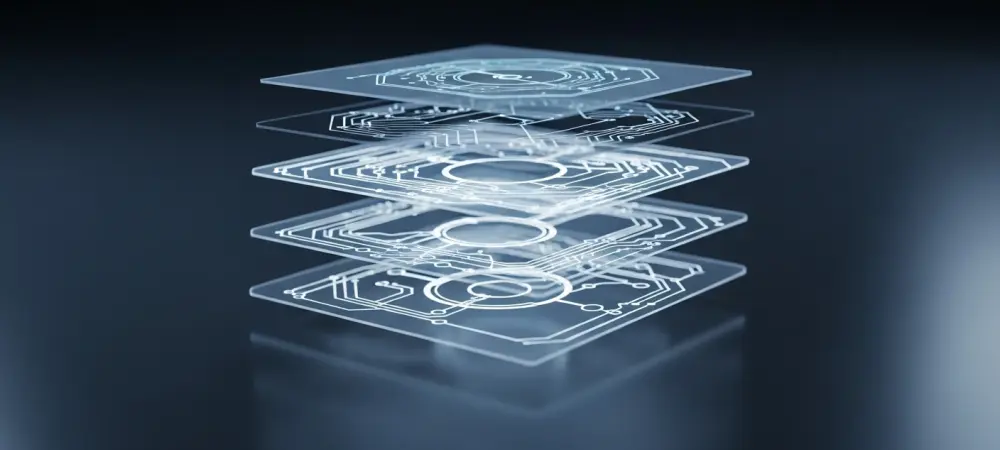

The shift to structured reasoning fundamentally redefines our infrastructure priorities by moving the heavy lifting from the runtime environment to the inference layer. Traditionally, if you wanted to verify a patch, you had to spin up a costly, isolated sandbox, manage dependencies for heterogeneous codebases, and execute tests, which is a massive logistical nightmare at repository scale. With semi-formal reasoning, we replace those expensive cycles with “logic-based certificates” in which the AI must explicitly state its assumptions and trace execution paths before claiming a result. In practice, this technique has shown remarkable results; for instance, accuracy in patch equivalence improved from 78% to 88% on curated examples, and even reached a staggering 93% on real-world agent-generated patches. To keep these certificates accurate without live execution, the system mandates that the agent provide evidence for every single claim, so the certificate effectively acts as a digital paper trail that can be audited without ever hitting “run.”
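As a rough illustration of what a “logic-based certificate” could look like as data, here is a minimal Python sketch. The `Claim` and `Certificate` classes, their field names, and the `is_auditable` rule are hypothetical and were not described in the interview; they only show the idea that every claim must carry evidence before the conclusion is accepted.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Claim:
    """One statement made by the agent, plus the evidence it cites."""
    statement: str
    evidence: list[str] = field(default_factory=list)  # e.g. "utils.py:42"


@dataclass
class Certificate:
    """Hypothetical container for a semi-formal reasoning result."""
    premises: list[str]   # assumptions the agent states up front
    trace: list[Claim]    # step-by-step execution-path claims
    conclusion: str       # e.g. "patches are equivalent"


def unsupported_claims(cert: Certificate) -> list[str]:
    """Return the statements in the trace that cite no evidence."""
    return [claim.statement for claim in cert.trace if not claim.evidence]


def is_auditable(cert: Certificate) -> bool:
    """Accept only certificates with premises, a trace, and evidence for every claim."""
    return bool(cert.premises) and bool(cert.trace) and not unsupported_claims(cert)


cert = Certificate(
    premises=["both patches modify only render()"],
    trace=[
        Claim("render() calls format() from the same module", ["views.py:12"]),
        Claim("the return value is unchanged"),  # no evidence cited
    ],
    conclusion="patches are equivalent",
)
print(unsupported_claims(cert))  # lists the claim that lacks evidence
print(is_auditable(cert))
```

A check like this does not prove that the reasoning is correct; it only rejects certificates whose paper trail is incomplete, which is the property that makes them auditable without execution.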

Structured reasoning can reach 93% accuracy for patch verification and 87% for code questioning. Which repository-scale tasks benefit most from this middle ground between free-form chat and rigid formal verification, and how do you suggest measuring success?

The tasks that benefit most are those where the “black box” nature of standard LLMs fails to provide enough transparency for a human lead to sign off on a change. Specifically, patch equivalence verification, fault localization, and complex code questioning see the most significant gains because they require a level of precision that unstructured chat simply cannot guarantee. In our evaluation process, we measure success by looking at the delta between “plausible” answers and “proven” answers; for example, semi-formal reasoning reached 87% accuracy in code questioning, which is a nine-percentage-point jump over standard agentic reasoning. We break down the evaluation into a three-tier check: first, we verify that the agent stated the correct premises; second, we ensure it traced the relevant interprocedural paths; and third, we validate the formal conclusion against the ground truth. In fault localization, this structured approach even boosted Top-5 accuracy by five percentage points, proving that the middle ground is where the most reliable automation happens.
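The three-tier check described above can be sketched in a few lines of Python. This is an assumption-laden illustration rather than the evaluation harness used in practice: the `TierResult` class, the `evaluate` function, and the set-based comparison of premises and paths are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TierResult:
    premises_ok: bool    # tier 1: required premises were stated
    paths_ok: bool       # tier 2: required interprocedural paths were traced
    conclusion_ok: bool  # tier 3: conclusion matches the ground truth

    @property
    def proven(self) -> bool:
        """All three tiers pass."""
        return self.premises_ok and self.paths_ok and self.conclusion_ok

    @property
    def merely_plausible(self) -> bool:
        """Right answer, but the supporting reasoning is incomplete."""
        return self.conclusion_ok and not (self.premises_ok and self.paths_ok)


def evaluate(stated_premises, required_premises,
             traced_paths, required_paths,
             conclusion, ground_truth) -> TierResult:
    """Apply the three checks in order; inputs are simple hypothetical labels."""
    return TierResult(
        premises_ok=set(required_premises) <= set(stated_premises),
        paths_ok=set(required_paths) <= set(traced_paths),
        conclusion_ok=conclusion == ground_truth,
    )


result = evaluate(
    stated_premises=["format is module-level"],
    required_premises=["format is module-level"],
    traced_paths=["render -> format"],
    required_paths=["render -> format", "format -> Formatter.format"],
    conclusion="not equivalent",
    ground_truth="not equivalent",
)
print(result.proven, result.merely_plausible)
```

Counting `proven` and `merely_plausible` results separately is one way to track the delta between plausible and proven answers across a benchmark.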

AI models often miss nuances like module-level functions shadowing built-in Python features. How does mandating a step-by-step trace of execution paths help identify these subtle failures, and what logic is required for an AI to follow interprocedural function calls correctly?

Standard reasoning models often act like a developer who is skimming code too quickly: they see a familiar function name and assume it behaves like the standard library version. I remember a particularly telling case involving the Django framework where a module-level function shadowed Python’s built-in format() function; a typical AI agent completely missed this, leading to a false sense of security. By mandating a step-by-step trace, we force the AI to behave like a developer stepping through a debugger line by line, which naturally encourages interprocedural reasoning. This requires the agent to explicitly follow function calls into other modules rather than just guessing their behavior based on the function name. When the agent is forced to document the path it takes through the code, the discrepancy between the built-in behavior and the shadowed module-level function becomes visible, turning a potential silent failure into a documented logical error.
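To make the shadowing problem concrete, here is a small self-contained Python example. It is an illustrative stand-in, not the Django code from the case mentioned above: the body of the module-level `format()` and the `render()` helper are invented for the demonstration.

```python
import builtins


def format(value, format_string):
    """Module-level function that shadows the built-in format() (hypothetical behavior)."""
    return f"<{format_string}:{value}>"


def render(value):
    # Python resolves names in module scope before builtins,
    # so this call reaches the function defined above.
    return format(value, "05d")


shadowed = render(42)                    # goes through the module-level function
builtin = builtins.format(42, "05d")     # what a skimming reader might assume

print(shadowed)                          # <05d:42>
print(builtin)                           # 00042
print(format is builtins.format)         # False: the name has been rebound
```

An agent that traces the call in `render()` to its actual definition reports the first result; one that guesses from the name reports the second.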

Implementing structured reasoning templates naturally increases token counts and latency during the review process. What are the practical trade-offs when balancing higher accuracy against slower build cycles, and how can organizations prevent developers from bypassing these rigorous verification layers?

This is the classic tension between safety and velocity that every engineering leader feels in their bones. As we add more steps and more tokens to the reasoning process, we are essentially adding “friction” to the developer’s workflow, which translates into slower builds and longer feedback cycles. If a system is too slow or the infrastructure spend becomes too high, developers will inevitably find ways to bypass it, not out of malice, but because they are incentivized to ship code. To prevent this, organizations should treat these rigorous verification layers as a tiered system, perhaps reserving the full semi-formal reasoning for critical path changes or security-sensitive modules while using lighter checks for UI tweaks. We have to be careful because if we apply these templates indiscriminately, the increased latency could derail the very productivity gains we sought to achieve with AI in the first place.
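One way to picture the tiered approach is a small routing function that decides how much verification a change receives based on the files it touches. The path patterns and tier names below are hypothetical examples, not a recommended policy.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns for critical-path or security-sensitive code.
CRITICAL_PATTERNS = ["auth/*", "payments/*", "*/migrations/*"]


def is_critical(path: str) -> bool:
    """True if the path matches any critical pattern."""
    return any(fnmatchcase(path, pattern) for pattern in CRITICAL_PATTERNS)


def verification_tier(changed_files: list[str]) -> str:
    """Return 'semi_formal' if any changed file is critical, otherwise 'light'."""
    if any(is_critical(path) for path in changed_files):
        return "semi_formal"
    return "light"


print(verification_tier(["ui/button.css"]))                    # light
print(verification_tier(["ui/button.css", "auth/tokens.py"]))  # semi_formal
```

Keeping the routing rule this simple and visible makes it easier for developers to see why a given change was slowed down, which may reduce the temptation to bypass the check.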

There is a risk where an AI produces a highly structured, convincing reasoning chain that leads to an incorrect conclusion. How can human reviewers efficiently debunk these complex but flawed justifications, and what safeguards can be integrated into the workflow to catch these errors?

This is perhaps the most insidious risk: the “confident but wrong” AI that packages a hallucination in a beautifully structured, logical-looking wrapper. When an AI constructs an elaborate but incomplete reasoning chain, it can be incredibly difficult for a human reviewer to spot the missing link or the false premise hidden in the middle of a fifty-line trace. To safeguard against this, we need to integrate automated “sanity checks” into the workflow that verify the basic facts within the reasoning chain, such as checking if the mentioned function actually exists in the referenced file. Human reviewers should be trained to look specifically for “logical leaps” where the agent jumps from one path to another without a clear connection, essentially treating the AI’s reasoning as a draft that needs to be cross-referenced rather than an absolute proof. We must ensure that the structure of the reasoning doesn’t become a mask for inaccuracy, maintaining a healthy skepticism even when the output looks highly formal.
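The “does the mentioned function actually exist” check is straightforward to automate for Python sources with the standard `ast` module. The sketch below assumes the reasoning chain has already been parsed into a mapping of file paths to cited function names; that input format, and the helper names, are hypothetical.

```python
from __future__ import annotations

import ast


def defined_functions(source: str) -> set[str]:
    """Collect names of all functions and methods defined in a Python source string."""
    tree = ast.parse(source)
    return {
        node.name
        for node in ast.walk(tree)
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
    }


def missing_references(cited: dict[str, list[str]],
                       sources: dict[str, str]) -> list[tuple[str, str]]:
    """Return (path, name) pairs the reasoning cites but the code does not define."""
    missing = []
    for path, names in cited.items():
        known = defined_functions(sources[path]) if path in sources else set()
        missing.extend((path, name) for name in names if name not in known)
    return missing


sources = {"dateformat.py": "def format(value, fmt):\n    return str(value)\n"}
cited = {"dateformat.py": ["format", "time_format"], "utils.py": ["helper"]}
print(missing_references(cited, sources))
```

A non-empty result does not tell the reviewer what the correct reasoning is, but it flags a trace that rests on something that is not in the code, which is a cheap signal to read that step more closely.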

What is your forecast for semi-formal reasoning in software engineering?

I believe we are entering an era of “Accountable AI” where the industry will stop being impressed by mere fluency and start demanding verifiable proof. Within the next few years, I expect semi-formal reasoning to become the standard for automated pull request reviews, effectively ending the reign of the “fast but sloppy” suggestion engines we see today. We will likely see a shift where code review evolves from a human bottleneck into a machine-led verification layer, where the AI does the grueling work of tracing every possible logic path while the human focuses on high-level design validation. Ultimately, this will lead to significantly more resilient software, as we move away from guessing whether a patch works and toward a system where every change comes with its own logical certificate of correctness.