The global semiconductor landscape is witnessing a seismic shift as Arm Holdings moves beyond its traditional role as a silent architect to become a direct competitor in data center hardware. This transition marks a fundamental departure from a business model that served the industry for over three decades, during which the firm exclusively licensed its intellectual property to other chipmakers. By launching the Arm AGI CPU, the company is stepping onto the field as a vendor of its own finished silicon, signaling a bold attempt to capture the high-value market for artificial intelligence infrastructure. This development is not merely a product launch but a structural transformation that repositions a foundational technology company as a direct participant in the hardware supply chain.

The primary objective of this discussion is to clarify the motivations, technical specifications, and market implications of this significant pivot. Throughout this exploration, readers will learn about the specific engineering choices that define the AGI CPU and how these decisions address the unique bottlenecks of modern AI agents. Furthermore, the article examines the complex relationship between Arm and its long-term partners, who now find themselves in a state of cooperation and competition. By understanding the scalability and efficiency of this new hardware, one can better grasp the direction of global computing as it adapts to the staggering power demands of the current technological era.

Key Questions Regarding the Shift in Semiconductor Strategy

Why Is Arm Moving From Licensing to Direct Hardware Sales?

For the majority of its existence, Arm functioned as a neutral provider of blueprints, allowing companies like Nvidia and Apple to build their own custom silicon. However, the rise of generative artificial intelligence has created massive demand for finished, high-performance chips that can be deployed quickly into data centers. By selling its own physical chips, the firm can realize significantly higher revenue per unit than the relatively modest royalties earned through intellectual property licensing. This move allows the company to capitalize more directly on the AI boom, shifting its financial profile from that of a licensing business toward that of a product-driven hardware vendor.

This strategic shift also addresses a gap in the market for specialized AI agent infrastructure. While many hyperscalers have the resources to design their own chips using Arm blueprints, many other large enterprises require a pre-built, optimized solution that offers high efficiency without the multi-year development overhead. By entering the hardware market, the company provides a middle path for these organizations, offering a “turnkey” processor that is specifically tuned for the needs of modern workloads. Moreover, this evolution enables the company to exert greater control over the entire technology stack, ensuring that its hardware and software integrations are as seamless as possible for the end-user.

What Technical Innovations Define the Architecture of the AGI CPU?

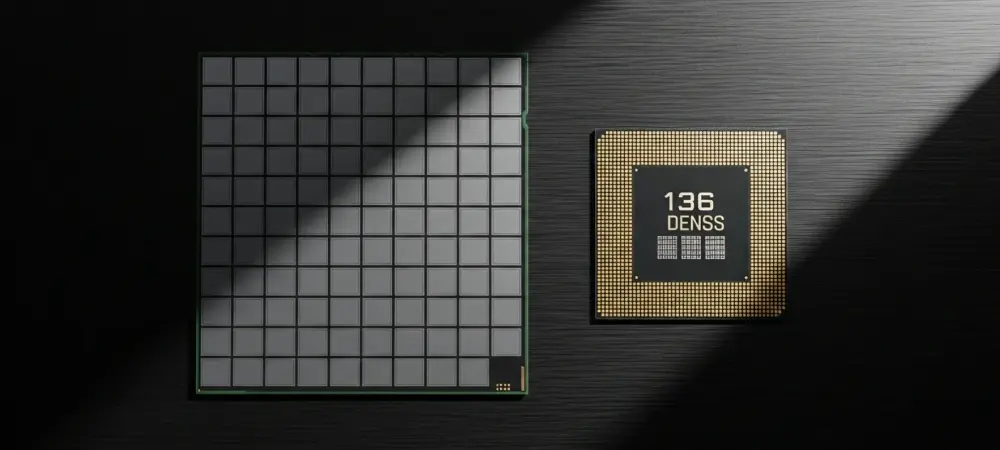

The engineering behind the AGI CPU is designed to overcome the memory and latency bottlenecks that frequently plague traditional server chips. Built on the Neoverse V3 core architecture, each processor can feature up to 136 cores, with every core dedicated to a single program thread. This design philosophy is critical because it avoids the contention that arises under simultaneous multithreading, where two threads share one core's execution resources and caches. By ensuring that every thread has its own dedicated physical core, the processor maintains high performance even under the continuous, heavy loads typical of large-scale artificial intelligence operations.

In addition to core density, the memory subsystem represents a major leap forward in performance standards. The CPU provides 6 GB/s of memory bandwidth per core, coupled with a latency profile that remains under 100 nanoseconds. The chip is fabricated on TSMC’s advanced 3nm process, which allows for greater transistor density and energy efficiency. The inclusion of 96 lanes of PCIe Gen6 and support for CXL 3.0 further enhances the chip’s ability to communicate with external accelerators and expanded memory pools. These technical choices collectively ensure that the hardware can handle the massive data throughput required by autonomous agents and complex neural networks.
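To put the per-core figure in context, the short sketch below multiplies it out to a whole-processor total. It assumes, which the article does not state, that all 136 cores can draw their 6 GB/s at the same time, so the result is best read as an upper bound rather than a measured number. The function and constant names are illustrative only.

```python
# Figures quoted in the article for a fully populated AGI CPU.
CORES_PER_PROCESSOR = 136
BANDWIDTH_PER_CORE_GB_S = 6  # GB/s per core


def aggregate_bandwidth_gb_s(cores: int = CORES_PER_PROCESSOR,
                             per_core_gb_s: float = BANDWIDTH_PER_CORE_GB_S) -> float:
    """Upper-bound memory bandwidth if every core draws its full share at once.

    Assumption (not stated in the article): the per-core figure holds
    simultaneously across all cores.
    """
    return cores * per_core_gb_s


print(aggregate_bandwidth_gb_s())  # 816
```

Under that assumption, a 136-core part would move roughly 816 GB/s in aggregate.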

How Do Strategic Partnerships Influence the Competitiveness of This Hardware?

The successful entry into the hardware market is heavily bolstered by high-profile collaborations, most notably with Meta. As the lead development partner, Meta has helped shape the specifications of the AGI CPU to ensure it meets the real-world demands of hyperscale social media and AI operations. This partnership provides an immediate, large-scale customer base and serves as a powerful validation of the hardware’s capabilities. When a company of such magnitude adopts a new architecture, it creates a ripple effect throughout the industry, encouraging other data center operators to consider the platform as a viable alternative to established incumbents.

Despite the fact that this move places the firm in direct competition with some of its licensees, the broader industry has shown a surprising level of public support. Major players like Broadcom and Nvidia have endorsed the launch because a stronger Arm ecosystem ultimately benefits all participants using the same foundational architecture. If the AGI CPU can prove its superiority over traditional x86 alternatives, it strengthens the market position for every other company building on the same instruction set. This complex dynamic suggests that the market for AI infrastructure is currently large enough to support both the licensor and its customers simultaneously.

What Are the Power and Scalability Implications for Data Centers?

As global power demand for data centers is projected to rise significantly toward the end of the decade, energy efficiency has become one of the most important metrics for hardware success. The AGI CPU operates at a 300-watt Thermal Design Power, which is highly competitive given its core density. When these chips are integrated into rack-level systems, the efficiency gains become even more apparent. For instance, a liquid-cooled configuration developed in partnership with Supermicro can house 336 processors in a single 200kW rack. This setup supports over 45,000 cores, offering more than double the performance per rack of contemporary high-end server configurations.
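The rack-level figures above can be checked with simple arithmetic. The sketch below uses only the numbers quoted in this section (336 processors, 136 cores and 300 W TDP per processor, a 200kW rack); the `RackConfig` class and its property names are hypothetical, and treating TDP as the CPUs' actual power draw is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackConfig:
    """Rack parameters as quoted in the article (names are illustrative)."""
    processors: int = 336
    cores_per_processor: int = 136
    cpu_tdp_watts: int = 300
    rack_power_watts: int = 200_000

    @property
    def total_cores(self) -> int:
        return self.processors * self.cores_per_processor

    @property
    def cpu_power_watts(self) -> int:
        # Simplification: treats TDP as the power each CPU actually draws.
        return self.processors * self.cpu_tdp_watts

    @property
    def cpu_share_of_rack_power(self) -> float:
        return self.cpu_power_watts / self.rack_power_watts

    @property
    def rack_watts_per_core(self) -> float:
        # Full rack budget (CPUs, memory, networking, cooling) per core.
        return self.rack_power_watts / self.total_cores


rack = RackConfig()
print(f"{rack.total_cores:,} cores")                  # 45,696 cores
print(f"{rack.cpu_power_watts / 1000:.1f} kW of CPU TDP")
print(f"{rack.cpu_share_of_rack_power:.1%} of the rack budget")
print(f"{rack.rack_watts_per_core:.2f} W of rack power per core")
```

The total of 45,696 cores matches the "over 45,000" figure. On these assumptions the processors account for about half of the 200kW budget, with the remainder available for memory, networking, power conversion, and cooling.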

The focus on air and liquid cooling flexibility allows for rapid deployment across a variety of existing data center environments. By optimizing the “compute-per-watt,” the architecture helps operators manage their total cost of ownership while adhering to increasingly strict environmental regulations. Moreover, the integration of standard interconnects ensures that these systems can be scaled up to meet the needs of the largest training clusters in the world. This focus on scalability ensures that the hardware remains relevant not just for current tasks, but for the increasingly complex workloads that will define the next several years of computing.

Summary: The Evolution of Arm’s Role in Global Computing

The launch of the AGI CPU marks a turning point for the semiconductor industry, characterized by a shift toward integrated, high-performance hardware solutions. By moving from a pure licensing model to selling finished chips, the company aims to capture a larger share of the value chain within the AI data center market. The technical specifications of the new processor, including its high core count and massive memory bandwidth, set a new benchmark for performance in agent-based computing. These innovations are further supported by strategic alliances with major hyperscalers, ensuring that the hardware is both validated by the market and optimized for real-world applications.

Energy efficiency and rack-level density remain the core pillars of this new hardware strategy, addressing the most pressing challenges faced by modern infrastructure operators. The ability to deploy tens of thousands of cores in a single liquid-cooled rack offers a glimpse into the future of high-density computing. As the industry continues to move toward specialized architectures, the success of this hardware validates the idea that performance-per-watt is the ultimate differentiator in the race for AI supremacy. This progression confirms that the company is no longer just a designer of blueprints but a central architect and builder of the physical systems powering the modern world.

Final Thoughts: Navigating a New Era of Specialized Infrastructure

The emergence of the AGI CPU redefines the boundaries between chip designers and chip vendors, showing that established business models must evolve to meet the demands of artificial intelligence. It has become clear that the historical reliance on general-purpose processing is insufficient for the low-latency requirements of modern autonomous agents. By choosing to build its own silicon, the firm demonstrates a commitment to vertical integration that prioritizes specific workload performance over broad compatibility. This decision is prompting many industry observers to reconsider how hardware and software should be co-designed for maximum efficiency.

Taken as a whole, this transition reflects a significant migration of workloads toward architectures that emphasize parallel processing and high-speed interconnectivity. Organizations that recognize the importance of power efficiency early are better placed to scale their operations sustainably than those that remain tethered to legacy systems. Moving forward, the focus will likely remain on how specialized hardware can continue to reduce the environmental footprint of global computing while simultaneously increasing its intelligence. This shift serves as a reminder that in a rapidly changing technological landscape, the willingness to disrupt one’s own business model is often the key to long-term relevance.