Escaping the Terrestrial Trap: Why AI Compute is Heading to Sea

The unrelenting appetite for computational power to support massive artificial intelligence models is redrawing the global map of digital infrastructure, forcing developers to look beyond the physical constraints of dry land. As high-performance computing clusters grow in both physical size and thermal intensity, the industry is colliding with a “triple threat” of dwindling real estate in major tech hubs, overtaxed electrical grids, and the cooling limitations of traditional air-based systems. This mounting pressure has transformed the idea of water-based data centers from a niche experimental concept into a strategic necessity for the next phase of the digital revolution.

Moving compute infrastructure to the water, whether on repurposed barges, in seafloor capsules, or on modular floating platforms, offers a radical departure from the limitations of traditional brick-and-mortar facilities. By positioning these server farms at the intersection of maritime logistics and digital connectivity, operators can sidestep many of the bureaucratic and physical hurdles that often stall terrestrial projects for years. This shift represents a fundamental reimagining of what a data center looks like, moving away from static urban warehouses toward dynamic, buoyant assets that can be deployed wherever deep water and high-speed fiber meet.

Industry analysts suggest that the maritime shift is less about escaping the land and more about finding the most efficient path toward scalability. Traditional sites are often located in high-cost, high-regulation environments where every kilowatt of power and square foot of space is a subject of intense political debate. In contrast, the ocean provides a vast, underutilized frontier where industrial cooling and power delivery can be integrated more naturally. As the industry moves toward 2027 and beyond, the ocean floor and coastal waters are poised to become the new primary real estate for the engines of artificial intelligence.

Decoding the Nautical Advantage: Architecture, Energy, and Engineering Realities

Submerged Sustainability: Reimagining Thermal Management Through Seawater Exchange

The extreme heat generated by the latest generation of AI chips is rapidly exceeding the physical cooling capacity of conventional air-cooled data centers. High-density server racks now require liquid cooling solutions that can handle unprecedented thermal loads, and water-based infrastructure provides a massive leap in efficiency by utilizing surrounding seawater or river water for heat exchange. These maritime systems often achieve Power Usage Effectiveness (PUE) ratings as low as 1.15, a figure that is significantly better than the global average for terrestrial facilities, which typically hovers around 1.5 or higher.
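To make the PUE comparison concrete: PUE is the ratio of total facility energy to the energy delivered to IT equipment, so a value of 1.0 would mean zero overhead. The sketch below is a minimal illustration, not a metering tool. It compares the two figures quoted above for a hypothetical 1,000 kWh IT load; the function names and the load value are assumptions made for the example.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness = total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_kwh(it_equipment_kwh: float, pue_value: float) -> float:
    """Energy spent on cooling, power conversion and other overhead at a given PUE."""
    return it_equipment_kwh * (pue_value - 1.0)


if __name__ == "__main__":
    it_load_kwh = 1000.0  # hypothetical IT load, chosen only for illustration
    for label, value in [("maritime (1.15)", 1.15), ("terrestrial (1.5)", 1.5)]:
        print(f"{label}: {overhead_kwh(it_load_kwh, value):.0f} kWh of overhead")
```

Under these assumptions, the overhead falls from roughly 500 kWh to roughly 150 kWh for every 1,000 kWh delivered to the servers.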

Unlike land-based evaporative cooling towers, which at large sites can lose millions of gallons of water a day to evaporation, these maritime systems keep the server-side coolant in a closed loop and reject heat through heat exchangers. The facility pulls in cool water from the environment, uses it to absorb heat from the servers across a physical barrier, and then returns the slightly warmer water to the source. This process provides high-density cooling without consuming local freshwater supplies, making it an attractive option for regions facing chronic water scarcity. Case studies of operational floating barges have already shown that this method satisfies the rigorous thermal requirements of modern hardware while maintaining a minimal environmental footprint.
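The heat exchange described above follows the standard sensible-heat relation Q = ṁ · c_p · ΔT, which links the server heat load to the water flow and its temperature rise. The sketch below estimates the seawater flow needed for a given load. The property values are approximate textbook figures for seawater, and the 1 MW load and 5 K temperature rise are hypothetical inputs, not data from any deployed facility.

```python
SEAWATER_CP_J_PER_KG_K = 3990.0  # approximate specific heat of seawater (assumption)
SEAWATER_DENSITY_KG_M3 = 1025.0  # approximate density of seawater (assumption)


def mass_flow_kg_s(heat_load_w: float, delta_t_k: float) -> float:
    """Mass flow needed to carry heat_load_w with a water temperature rise of delta_t_k."""
    if delta_t_k <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_load_w / (SEAWATER_CP_J_PER_KG_K * delta_t_k)


def volume_flow_l_s(heat_load_w: float, delta_t_k: float) -> float:
    """The same flow expressed in litres per second."""
    return mass_flow_kg_s(heat_load_w, delta_t_k) / SEAWATER_DENSITY_KG_M3 * 1000.0


if __name__ == "__main__":
    # Hypothetical case: 1 MW of server heat, water returned 5 K warmer.
    print(f"{volume_flow_l_s(1_000_000, 5.0):.1f} L/s")
```

With these inputs the required flow is on the order of 50 litres per second per megawatt. Halving the allowed temperature rise doubles the flow, which is the basic trade-off between pumping effort and how much warmer the returned water is.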

Furthermore, the natural temperature stability of deep water provides a consistent cooling baseline that traditional facilities cannot match. On land, ambient air temperatures fluctuate wildly between seasons and even between day and night, forcing cooling systems to work harder during peak summer hours. Submerged or floating units benefit from the thermal mass of the ocean, which remains relatively constant throughout the year. This stability not only reduces energy consumption but also extends the lifespan of sensitive electronic components by minimizing the stress of thermal cycling.

Navigating the Land Scarcity Crisis in Global Digital Hubs

In hyper-dense coastal markets such as Singapore and Silicon Valley, land has become a prohibitively expensive and politically sensitive commodity. The competition for space between residential housing, public infrastructure, and industrial zones has reached a boiling point, often leaving data center developers at the back of the line for new permits. Floating data centers allow these developers to bypass traditional land-use friction by repurposing underutilized riverfronts, industrial port zones, and nearshore areas that are unsuitable for other types of development.

This strategic shift moves the infrastructure debate away from crowded neighborhoods and toward maritime jurisdictions where heavy industrial operations are already the norm. By using modular designs, companies can manufacture data center components in specialized shipyards and then tow them to their final destination. This process can reduce deployment timelines from years to just a few months, effectively decoupling the growth of the digital economy from the sluggish pace of urban real estate development. The ability to “plug and play” capacity in a harbor environment offers a level of agility that terrestrial construction cannot match.

Moreover, the proximity to submarine fiber landings is a critical geographic advantage for maritime facilities. Most of the world’s internet traffic travels through undersea cables that emerge at specific coastal landing points. By situating data centers directly in the water near these junctions, operators can reduce the physical distance data must travel, thereby minimizing latency. This is particularly crucial for real-time AI applications that require millisecond-level responsiveness. As coastal cities continue to grow, the water will likely become the only viable place to house the massive server arrays required to keep these urban centers connected.
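The latency argument can be checked with simple arithmetic: light in optical fiber travels at roughly the vacuum speed of light divided by the fiber's refractive index. The sketch below estimates propagation delay only. The refractive index of 1.47 is a typical approximate value for silica fiber, and the function names and the 100 km distance are assumptions for illustration.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum
FIBER_REFRACTIVE_INDEX = 1.47      # typical approximate value for silica fiber (assumption)


def one_way_delay_ms(distance_km: float,
                     refractive_index: float = FIBER_REFRACTIVE_INDEX) -> float:
    """Propagation delay over a fiber run, in milliseconds."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    speed_in_fiber_km_s = SPEED_OF_LIGHT_KM_S / refractive_index
    return distance_km / speed_in_fiber_km_s * 1000.0


def round_trip_ms(distance_km: float) -> float:
    """Round-trip propagation delay, ignoring switching and queuing time."""
    return 2.0 * one_way_delay_ms(distance_km)


if __name__ == "__main__":
    # Hypothetical case: a facility sited 100 km closer to a cable landing point.
    print(f"{round_trip_ms(100.0):.2f} ms saved per round trip")
```

At roughly 5 microseconds per kilometre each way, every 100 km of avoided fiber saves about one millisecond per round trip. This covers propagation only; routing, switching, and queuing delays are not modeled.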

Powering the Future: The Convergence of Offshore Renewables and Direct Compute

One of the most disruptive innovations in the current maritime shift is the “compute-at-the-source” model, which seeks to integrate data centers directly with offshore energy production. Positioning server clusters on or near floating wind platforms or wave energy converters allows for a direct connection to large, largely untapped renewable sources. This arrangement addresses the AI power paradox by tapping into energy that is otherwise difficult or expensive to transport back to the land-based grid. By consuming power where it is generated, these “energy-compute islands” avoid much of the energy lost during long-distance transmission.

While delivering 100 MW of power to a floating vessel presents significant engineering challenges, the potential to create self-sustaining hubs is a powerful incentive for investment. These projects envision a future where data centers act as a flexible load for offshore wind farms, absorbing excess energy during periods of high production and stabilizing the local energy ecosystem. This synergy not only provides the reliable, high-capacity power required for AI training but also aligns the tech industry with global decarbonization goals. The convergence of energy and data at sea represents a scalable blueprint for the future of industrial-scale computing.
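The flexible-load idea can be sketched as a simple dispatch rule: in each interval, deferrable compute soaks up whatever wind output exceeds the firm demand, up to the capacity of the flexible cluster, and anything left over is curtailed. The code below is a toy illustration of that rule, not a description of any operator's scheduler. The function name and all megawatt figures are hypothetical.

```python
from typing import List, Tuple


def dispatch_flexible_load(wind_mw: List[float],
                           firm_load_mw: float,
                           max_flex_mw: float) -> List[Tuple[float, float]]:
    """For each interval, return (flexible MW served, MW curtailed).

    firm_load_mw is demand that must always be met; max_flex_mw is the
    capacity of deferrable compute (for example, batch training jobs).
    """
    schedule = []
    for available in wind_mw:
        surplus = max(0.0, available - firm_load_mw)
        flex = min(surplus, max_flex_mw)
        schedule.append((flex, surplus - flex))
    return schedule


if __name__ == "__main__":
    # Hypothetical wind output over three intervals, in MW.
    for flex, curtailed in dispatch_flexible_load([60.0, 100.0, 150.0], 80.0, 40.0):
        print(f"flexible load: {flex:.0f} MW, curtailed: {curtailed:.0f} MW")
```

A real system would also need to handle intervals where wind output falls below the firm load, which this sketch deliberately leaves out.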

However, the realization of this vision requires a significant leap in subsea cabling technology and marine power distribution. Current infrastructure is designed to send power from the sea to the land, not to distribute it among a network of floating consumers. Industry leaders are now exploring specialized underwater microgrids that can manage the variable output of renewable sources while providing the steady, “five-nines” reliability that data centers demand. If successful, this integration could turn the world’s oceans into a vast, green engine for the global intelligence network.
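To put the “five-nines” target in perspective, an availability of 99.999% translates into a fixed downtime budget per year. The short sketch below converts a number of nines into allowed minutes of downtime; the function name is an assumption, and a 365-day year is used for simplicity.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def allowed_downtime_minutes(nines: int) -> float:
    """Yearly downtime budget for an availability of `nines` nines (5 -> 99.999%)."""
    if nines < 1:
        raise ValueError("nines must be at least 1")
    unavailability = 10.0 ** (-nines)
    return MINUTES_PER_YEAR * unavailability


if __name__ == "__main__":
    for n in (3, 4, 5):
        print(f"{n} nines: {allowed_downtime_minutes(n):.2f} minutes per year")
```

Five nines leaves a budget of about 5.26 minutes of downtime per year, which is why a microgrid fed by variable renewable output would need substantial buffering or backup to meet it.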

Confronting the Maritime Frontier: Resilience, Vibration, and Corrosive Realities

Transitioning to a water-based environment introduces a unique set of engineering hurdles that do not exist in the terrestrial world. One of the most significant concerns is the impact of low-frequency motion and vibration on sensitive server equipment. While land-based hardware is designed to remain stationary, equipment on a floating platform must endure the rhythmic swaying and occasional jolts caused by waves and tides. To maintain operational integrity, maritime facilities must employ advanced isolation systems and specialized ballast tanks that can counteract the movement of the sea.
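One way to reason about isolation is the textbook transmissibility formula for a damped single-degree-of-freedom mount, which gives the fraction of base motion that reaches the equipment as a function of the ratio between the excitation frequency and the mount's natural frequency. The sketch below applies that simplified model; it is an assumption made for illustration, not a description of how any particular floating facility is engineered, and the frequencies and damping ratio are hypothetical.

```python
import math


def transmissibility(excitation_hz: float, natural_hz: float,
                     damping_ratio: float) -> float:
    """Motion transmissibility of a damped single-degree-of-freedom isolator.

    Values below 1.0 mean the mount attenuates the motion; values above
    1.0 mean it amplifies it (near resonance).
    """
    if natural_hz <= 0 or damping_ratio <= 0:
        raise ValueError("natural frequency and damping ratio must be positive")
    r = excitation_hz / natural_hz
    damping_term = (2.0 * damping_ratio * r) ** 2
    return math.sqrt((1.0 + damping_term) / ((1.0 - r ** 2) ** 2 + damping_term))


if __name__ == "__main__":
    natural_hz = 2.0  # hypothetical mount natural frequency
    for excitation_hz in (0.1, 2.0, 20.0):  # slow swell, resonance, machinery vibration
        t = transmissibility(excitation_hz, natural_hz, damping_ratio=0.1)
        print(f"{excitation_hz:5.1f} Hz -> transmissibility {t:.2f}")
```

In this model a mount only attenuates motion above about 1.4 times its natural frequency. Slow wave-driven sway sits far below that and passes through almost unchanged, which is consistent with the need to address it at the hull and ballast level rather than with rack mounts alone.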

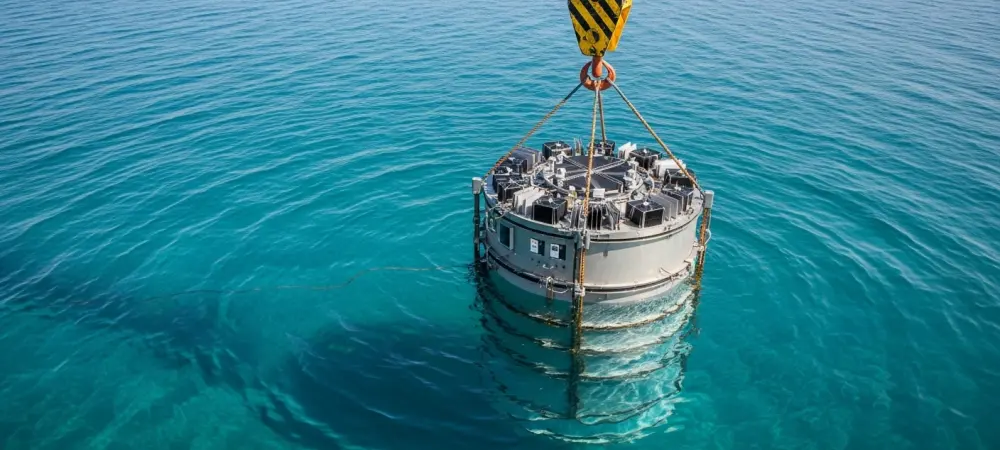

Furthermore, the harsh saltwater environment presents a constant threat in the form of corrosion and marine fouling. Salt air can be incredibly destructive to electronics if not properly managed, necessitating advanced filtration systems and airtight, pressurized server environments. Subsea capsules, like those tested in Microsoft’s Project Natick, have shown that a dry nitrogen atmosphere can actually lead to higher reliability than traditional air-cooled rooms by eliminating the corrosive effects of oxygen and humidity. Nevertheless, the cost and complexity of maintaining these specialized systems over a twenty-year lifecycle remain a primary point of debate among skeptics.

Security and logistics also take on a different dimension when infrastructure is moved offshore. Protecting a floating asset from physical threats or extreme weather events like hurricanes requires a specialized set of maritime security protocols and structural reinforcements. Accessing the facility for routine maintenance or hardware upgrades involves boats or helicopters, which can be more expensive and weather-dependent than a simple truck delivery on land. Despite these challenges, the industry is betting that the benefits of thermal efficiency and space availability will eventually outweigh the added costs of marine hardening.

Strategic Navigation for Industry Leaders: Adapting to Aquatic Infrastructure

To capitalize on the shift toward the water, stakeholders should prioritize investments in Tier 1 coastal markets where land and power constraints have reached critical levels. Industry leaders are encouraged to adopt a modular deployment strategy that utilizes refurbished barges or nearshore platforms as a bridge toward more permanent subsea or offshore installations. This approach allows companies to gain operational experience in the maritime environment without committing to the extreme engineering requirements of deep-sea deployments. By starting small and scaling horizontally, organizations can mitigate the risks associated with this new frontier.

Best practices for this transition include establishing deep partnerships with maritime engineering firms that understand the complexities of marine hardening and vibration mitigation. It is also essential to focus on “zero-water-consumption” cooling models to ensure long-term compliance with evolving environmental regulations and resource scarcity. Organizations that take the lead in developing standardized maritime data modules will likely set the technical benchmarks for the rest of the industry. Future-proofing infrastructure in this way requires a shift in mindset, treating the data center not as a building, but as a sophisticated marine vessel.

Anchoring the Digital Economy: Final Thoughts on the Offshore Compute Evolution

The rise of floating data centers marks a pivotal transition from the restrictive limits of the land to the expansive possibilities of the maritime frontier. This evolution is driven by the insatiable demands of AI, which are forcing the industry to reconsider every aspect of traditional infrastructure, from cooling methods to power procurement. While the ocean offers an elegant solution to the challenges of space and thermal management, the journey requires a fundamental mastery of the harsh realities of the marine environment. Floating facilities are unlikely to replace land-based centers entirely, but they could become an indispensable relief valve for the world’s most congested and power-hungry digital markets.

The integration of deep-sea cooling and offshore renewable energy is increasingly seen not merely as a feat of engineering, but as a requirement for a sustainable and scalable global intelligence network. The future of compute may depend on the ability to decouple digital growth from terrestrial resource competition. By moving toward the water, the industry is charting a path that balances the need for massive processing power with the growing mandates for environmental responsibility. This offshore evolution could provide the foundation for the next generation of artificial intelligence, allowing the digital world to continue expanding even as the physical world reaches its limits.