Artificial intelligence is running headlong into a wall built from copper and thermals, and the breathtaking market debut of Lightelligence showed how much capital believes light-based links can punch through that wall by lifting bandwidth, slashing latency, and cutting energy per bit across sprawling GPU fleets. The surge signaled conviction that interconnects, not compute, will set the pace of AI scale, and that photonics can reset the math of utilization and total cost.

Technology Overview and Industry Context

Optical interconnects move data as photons using modulators, waveguides, and detectors, while optical computing pushes some operations into analog photonic domains. Photonics matters because copper’s skin effect, crosstalk, and equalization overhead now exact steep penalties on power and reach.

Within AI clusters, thousands of GPUs synchronize via all-reduce, pipeline stages, and expert routing that hammer links with bursty, collective traffic. As supernodes swell, interconnect intensity rises faster than FLOPS, diverting budget and power from chips to data movement.

This shift refocuses value: realized utilization matters more than raw peak FLOPS. If optical links reduce queuing and retries, operators can buy fewer accelerators for the same job, or finish sooner within the same power cap.
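To make the utilization argument concrete, the sketch below applies the textbook cost model for a ring all-reduce and a deliberately simple utilization ratio that assumes no overlap between compute and communication. The function names, link rates, payload size, and compute time are illustrative assumptions, not figures from any deployment.

```python
def ring_allreduce_seconds(payload_bytes, n_gpus, link_bytes_per_s, hop_latency_s):
    """Textbook ring all-reduce cost: 2*(N-1) steps, each moving payload/N bytes."""
    steps = 2 * (n_gpus - 1)            # reduce-scatter phase + all-gather phase
    chunk_bytes = payload_bytes / n_gpus
    return steps * (chunk_bytes / link_bytes_per_s + hop_latency_s)


def utilization(compute_s, comm_s):
    """Share of a training step spent computing, assuming no compute/comm overlap."""
    return compute_s / (compute_s + comm_s)


# Hypothetical step: 2 GB of gradients across 1,024 GPUs, 50 ms of compute.
PAYLOAD_BYTES = 2e9
N_GPUS = 1024
COMPUTE_S = 0.050

for label, gbit_per_s in [("slower link", 400), ("faster link", 1600)]:
    link_bytes_per_s = gbit_per_s * 1e9 / 8
    comm_s = ring_allreduce_seconds(PAYLOAD_BYTES, N_GPUS, link_bytes_per_s, 2e-6)
    print(f"{label}: comm {comm_s * 1e3:.1f} ms, "
          f"utilization {utilization(COMPUTE_S, comm_s):.0%}")
```

Real training stacks overlap communication with compute, so this model overstates the penalty. It is still useful for seeing how the bandwidth term and the per-hop latency term trade off as the GPU count grows.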

Architecture and Enabling Components

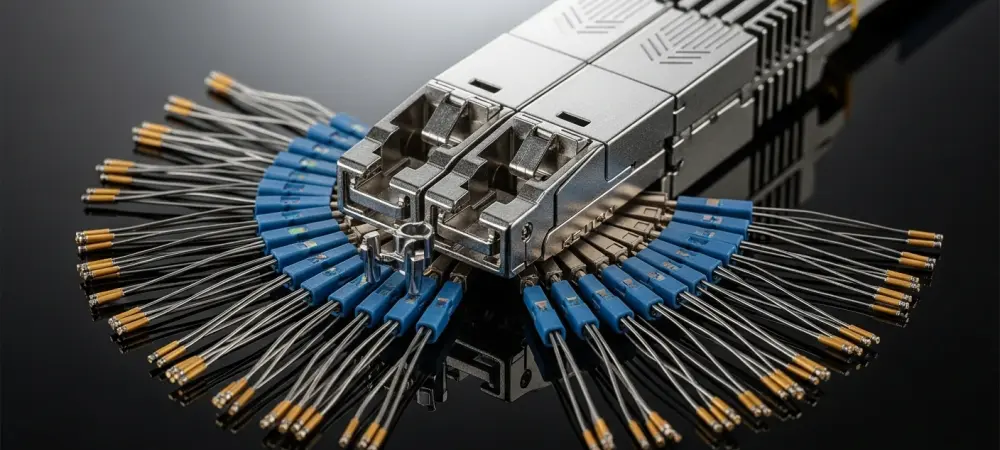

Photonic Transceivers and Modulation Schemes

Wavelength-division multiplexing stacks many colors on one fiber, multiplying throughput without adding lanes. PAM4 with direct detection offers lower cost and simplicity, while coherent optics bring longer reach and better receiver sensitivity at higher power and cost. Energy per bit trends below that of advanced electrical SerDes because optical links avoid long PCB traces and retimers. Error budgets improve as dispersion and crosstalk fall, translating to fewer retries and tighter application-level latency.
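The two relationships at work here are simple: aggregate throughput is the wavelength count times the per-wavelength rate, and link power is energy per bit times bit rate. The sketch below encodes both. The wavelength count, per-wavelength rate, and pJ/bit values are hypothetical placeholders, not vendor specifications.

```python
def wdm_aggregate_gbps(n_wavelengths, gbps_per_wavelength):
    """Aggregate fiber throughput when each wavelength carries an independent channel."""
    return n_wavelengths * gbps_per_wavelength


def link_power_watts(aggregate_gbps, pj_per_bit):
    """Power drawn by a link: (bits per second) * (joules per bit)."""
    bits_per_s = aggregate_gbps * 1e9
    joules_per_bit = pj_per_bit * 1e-12
    return bits_per_s * joules_per_bit


# Hypothetical fiber: 8 wavelengths at 100 Gb/s each.
total_gbps = wdm_aggregate_gbps(8, 100)

# Compare two assumed energy-per-bit figures on the same aggregate rate.
for label, pj in [("assumed electrical path", 10.0), ("assumed optical path", 5.0)]:
    print(f"{label}: {total_gbps} Gb/s at {pj} pJ/bit -> "
          f"{link_power_watts(total_gbps, pj):.1f} W")
```

Multiplied across every link in a fleet, a few pJ/bit of difference becomes a material share of the power budget, which is why energy per bit features so prominently in procurement discussions.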

Optical Circuit Switching and LightSphere X

Distributed optical circuit switching sets up light paths between GPU islands, trading packet-switched flexibility for deterministic, low-jitter pipes. Topologies shift toward reconfigurable fabrics that relieve east–west contention. Lightelligence claims an uplift of more than 50% in model FLOPS utilization, along with TCO cuts, for collective-heavy and mixture-of-experts workloads. Gains hinge on batching communications into scheduled circuits; chatty, fine-grained RPCs benefit less.
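Why batching matters can be seen from a simple amortization model: every circuit setup costs a fixed reconfiguration time, so the fraction of time spent moving data grows with transfer size. The sketch below is a minimal illustration. The 1 ms reconfiguration time and the 100 GB/s line rate are assumptions chosen for readability, not LightSphere X specifications.

```python
def circuit_efficiency(transfer_bytes, line_rate_bytes_per_s, reconfig_s):
    """Fraction of circuit hold time spent transferring data rather than reconfiguring."""
    transfer_s = transfer_bytes / line_rate_bytes_per_s
    return transfer_s / (transfer_s + reconfig_s)


LINE_RATE = 100e9      # bytes per second, hypothetical
RECONFIG_S = 1e-3      # seconds per circuit setup, hypothetical

# Small RPC-like messages versus large batched collectives.
for label, size_bytes in [("64 KB message", 64e3),
                          ("64 MB batch", 64e6),
                          ("4 GB collective", 4e9)]:
    eff = circuit_efficiency(size_bytes, LINE_RATE, RECONFIG_S)
    print(f"{label}: {eff:.1%} of circuit time is useful transfer")
```

Under these assumptions the small message spends almost all of its circuit time waiting on setup, while the large collective barely notices it. A scheduler that groups traffic into fewer, longer-lived circuits moves workloads toward the efficient end of this curve.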

Co-Packaged and Pluggable Optics

Pluggables win near term on serviceability and vendor neutrality. Co-packaged optics shorten electrical reaches to millimeters, unlocking higher rates but tightening thermal budgets and assembly complexity.

Board design becomes a thermal and signal-integrity puzzle: co-location reduces equalization power while forcing new cooling paths, stricter co-planarity, and cleaner supply rails.

Hybrid Optoelectronic Computing Elements

Interferometer arrays and thermo-/electro-optic phase shifters perform analog matrix multiplies at low pJ/MAC, then digitize results. Calibration tracks drift from heat and aging, demanding fast feedback loops and error models.
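As a rough illustration of the building block involved, the sketch below models a single 2x2 Mach-Zehnder interferometer as two ideal 50:50 couplers around one phase shifter, then shows how a small phase drift changes the output power split. This is a standard textbook model and an assumption on our part; the source does not describe the specific device architecture, and the drift value is hypothetical.

```python
import cmath
import math


def matmul2(a, b):
    """Multiply two 2x2 complex matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
            for i in range(2)]


def mzi(theta):
    """Transfer matrix of an ideal MZI: coupler, phase shift on arm 0, coupler."""
    s = 1 / math.sqrt(2)
    coupler = [[s, 1j * s], [1j * s, s]]
    phase = [[cmath.exp(1j * theta), 0], [0, 1]]
    return matmul2(coupler, matmul2(phase, coupler))


def output_powers(theta, field_in=(1, 0)):
    """Optical power at each output port for a given input field vector."""
    m = mzi(theta)
    out = [m[i][0] * field_in[0] + m[i][1] * field_in[1] for i in range(2)]
    return [abs(v) ** 2 for v in out]


target = math.pi / 2       # programmed phase: an even power split
drift = 0.05               # hypothetical thermal phase drift in radians

print("programmed:", [round(p, 4) for p in output_powers(target)])
print("drifted:   ", [round(p, 4) for p in output_powers(target + drift)])
```

The programmed phase acts as the analog weight, so any drift shifts the effective weight directly. That is why the calibration loop has to measure and correct phases continuously rather than once at startup.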

This complements digital compute by accelerating fixed-weight or low-precision kernels, while leaving control flow and training updates to GPUs.

Control Software, Telemetry, and Integration

Routing software must coordinate circuit schedules with NCCL and RDMA, aligning collectives with available light paths. At scale, operators require multi-vendor interoperability, rich counters, and fault isolation. Standards around management and optics control remain fluid, raising integration time.

Recent Developments and Market Signals

Lightelligence’s first-day jump of nearly 400% briefly implied a valuation of roughly US$10 billion against projected revenue of US$15.5 million, a sign that investors prize utilization and power savings over near-term sales.
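The scale of that gap is easier to judge as a multiple. The short calculation below divides the implied valuation by the projected revenue, using only the two figures quoted above; the variable names are ours.

```python
implied_valuation_usd = 10e9       # roughly US$10 billion, as quoted
projected_revenue_usd = 15.5e6     # US$15.5 million, as quoted

price_to_sales = implied_valuation_usd / projected_revenue_usd
print(f"implied price-to-sales multiple: about {price_to_sales:.0f}x")
```

Because the valuation figure is itself approximate, the result is best read as an order of magnitude: several hundred times projected revenue.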

The Hong Kong listing raised about HK$2.4 billion with heavy oversubscription and marquee backers including Alibaba, GIC, Temasek, BlackRock, Fidelity, Schroders, Hillhouse, Lenovo, and ZTE, underscoring demand for credible optical bets.

Vendors now publish interconnect-centric roadmaps: larger GPU memory fabrics, optical backplanes, and tighter NIC-accelerator coupling point to optics moving from leaf–spine links into the rack and package.

Deployments, Use Cases, and Customer Profiles

GPU Supernodes and AI Training Clusters

Deployments span clusters with several thousand GPUs, where LightSphere X targets the collective spikes that stall training. Measured wins map to all-reduce and MoE expert exchanges that monopolize bandwidth. Higher utilization converts directly into lower queue times and better job throughput, an outcome CFOs can price into ROI models.

Intra-Node vs Inter-Node Connectivity in China

By revenue, Lightelligence led independent providers of intra-node HPC optics in China with an 88.3% share of that independent segment, while Huawei held 98.4% of the overall market. Buyers that keep a non-Huawei option open preserve negotiating leverage and supply resilience.

This dynamic favors interoperability and fast qualification cycles, rewarding vendors that integrate cleanly with incumbent stacks.

Early Optical Computing Pilots

Pilot domains skew to inference pipelines and fixed-topology transforms where calibration hold times are manageable. Non-recurring engineering (NRE) fees fund custom front-ends, while near-term revenue remains centered on interconnects.

Productization depends on robust temperature compensation and tooling that hides analog quirks from ML engineers.

Challenges, Risks, and Constraints

Technical and Productization Hurdles

Insertion loss, switch reconfiguration times, and packaging yield set practical limits on scale. Software maturity is equally decisive: transparent NCCL hooks and failure handling must match the operational familiarity that teams already have with Ethernet.
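Insertion loss limits scale because every connector, switch stage, and fiber span subtracts from a fixed optical power budget. The sketch below computes the remaining margin for a link in the usual dB arithmetic. The launch power, per-element losses, and receiver sensitivity are hypothetical values picked for illustration.

```python
def link_margin_db(tx_power_dbm, losses_db, rx_sensitivity_dbm):
    """Margin left after subtracting all insertion losses from the power budget."""
    received_dbm = tx_power_dbm - sum(losses_db)
    return received_dbm - rx_sensitivity_dbm


# Hypothetical path: two connectors, fiber, and a number of optical switch stages.
TX_DBM = 0.0
RX_SENSITIVITY_DBM = -10.0
CONNECTOR_DB = 0.5
FIBER_DB = 0.5
SWITCH_STAGE_DB = 2.0

for stages in range(1, 6):
    losses = [CONNECTOR_DB, CONNECTOR_DB, FIBER_DB] + [SWITCH_STAGE_DB] * stages
    margin = link_margin_db(TX_DBM, losses, RX_SENSITIVITY_DBM)
    status = "ok" if margin >= 0 else "link fails"
    print(f"{stages} switch stage(s): margin {margin:+.1f} dB ({status})")
```

With these assumed numbers the margin shrinks by a fixed amount per switch stage until the link no longer closes, which is the mechanism by which insertion loss caps fabric depth.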

Manufacturing Scale and Supply Chain

Access to photonic CMOS lines, variability control, and high-throughput test define cost curves. Laser and modulator availability, along with thermal solutions, constrain ramp speed.

Assembly lines need precision alignment and hermeticity that many EMS providers are only now tooling for.

Financial Profile and Concentration Risks

Revenue climbed from RMB 38 million to RMB 106 million across three years, but losses widened to RMB 1.34 billion and the asset-liability ratio stood at 473%. One customer contributed 40.6% of sales.
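Two of these figures are easier to interpret after a little arithmetic. The sketch below computes the revenue growth multiple and what a 473% ratio implies for equity. It assumes the asset-liability ratio means total liabilities divided by total assets, which is the common definition but is not spelled out in the text.

```python
revenue_start_rmb_m = 38.0     # RMB million, as quoted
revenue_end_rmb_m = 106.0      # RMB million, as quoted
growth_multiple = revenue_end_rmb_m / revenue_start_rmb_m

# Assumption: ratio = total liabilities / total assets.
asset_liability_ratio = 4.73   # 473%
equity_per_unit_assets = 1.0 - asset_liability_ratio

print(f"revenue multiple over the period: {growth_multiple:.2f}x")
print(f"net equity per RMB 1 of assets: {equity_per_unit_assets:.2f}")
```

Under that reading, liabilities exceed assets several times over, so book equity is deeply negative before the listing proceeds are counted.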

Such concentration magnifies forecasting error and funding risk, forcing disciplined pipeline conversion and cash management.

Regulatory, Standards, and Competitive Pressures

Export controls and procurement policies shape addressable markets and timelines. Standards fragmentation slows multivendor rollouts.

Domestically, Huawei’s breadth pressures pricing; globally, photonics leaders contest transceivers, co-packaged optics, and control planes.

Outlook, Adoption Pathways, and Scenarios

Adoption Timelines and Inflection Points

Optics look set to become standard inside supernodes first, then propagate across racks as bandwidth per GPU surpasses what copper can serve within power caps. Cost-per-bit crossovers and serviceability tooling will trigger broader shifts. Mature orchestration that treats circuits as first-class resources will accelerate this turn.

Performance Roadmap and Metrics That Matter

Operators track bandwidth density, pJ/bit, and fabric latency alongside realized utilization.

Vendors that publish audited cluster-level gains, not just PHY specs, will win procurement committees.

Business Model Evolution and Go-to-Market

Expect a blend of hardware, software licenses for orchestration, and SLAs for availability. Partnerships with GPU vendors, OEMs, and clouds will shortcut qualification and service channels.

Bundles that guarantee utilization targets could command premium margins.

Competitive Scenarios and Strategic Options

Outcomes range from incumbent dominance to open ecosystems with swappable optics. M&A could fuse switching IP with transceiver scale, or marry photonics to accelerator roadmaps. Alliances that standardize telemetry and control APIs will compound advantage.

Synthesis and Overall Assessment

Interconnects have emerged as the next chokepoint, and photonics offers credible relief on bandwidth, latency, and energy, with Lightelligence positioned as an aggressive contender linking optical circuit switching to tangible utilization gains. The IPO enthusiasm meets fragile finances and concentration risk, creating a gap between technological promise and execution capacity.

The verdict is cautious optimism. Near-term credibility rests on repeatable supernode wins and clean NCCL integration. Medium-term upside depends on scaling manufacturing, diversifying customers, and proving TCO at fleet scale. Key watch items include audited utilization uplift, interoperability milestones, and a path to narrowing losses.