The traditional boundary between human intuition and machine execution in software operations has blurred as autonomous agents transition from mere script-runners to decision-making partners in cloud infrastructure. This evolution marks a departure from static automation toward dynamic systems that not only execute code but also interpret the complex state of global clusters. While DevOps has historically relied on rigid pipelines, the rise of large language models has introduced a layer of cognitive reasoning that allows systems to handle ambiguity. This technological leap addresses the growing complexity of microservice architectures, which has long since outpaced what human engineers can comfortably keep in their heads.

The Emergence of Autonomous DevOps Intelligence

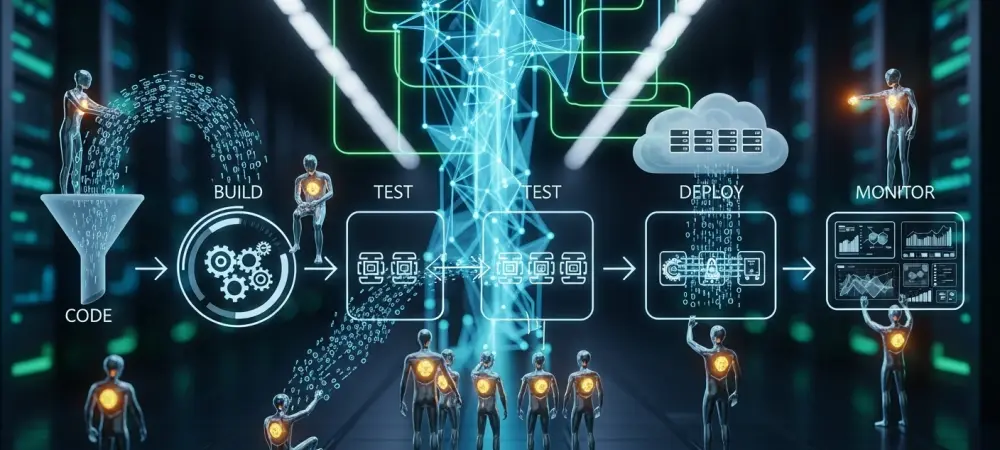

The emergence of these autonomous entities is a direct response to the explosion of telemetry data and the persistent burden of operational toil. In recent years, the industry has moved from simple continuous integration to a state where systems must reconcile disparate inputs across security, performance, and cost. The core principle of an AI agent lies in its ability to operate independently within a set of constraints, moving beyond the “if-this-then-that” logic of traditional scripts. By utilizing transformer-based architectures, these agents can process natural language requirements and translate them into infrastructure modifications, bridging the gap between developer intent and operational reality.

This technology has emerged within a landscape defined by a shortage of specialized site reliability engineering talent. As organizations scale, the frequency of deployments and the density of logs create a noise floor that human observers cannot effectively monitor. Autonomous DevOps intelligence functions as a filter and a force multiplier, allowing teams to manage vast environments without a linear increase in headcount. Its relevance is underscored by the shift toward platform engineering, where the agent serves as an intelligent interface that abstracts away the underlying complexity of the cloud provider.

Core Architectural Pillars and Technical Framework

Perception: Multi-Source Data Ingestion

The foundation of any functional AI agent in this space is its ability to ingest multi-source data with a high degree of fidelity. Unlike traditional monitoring tools that look for specific threshold breaches, AI agents practice what can be described as environmental perception. They aggregate unstructured logs, structured metrics, and trace data from distributed systems, creating a holistic view of the application lifecycle. This ingestion capability is crucial because it allows the agent to distinguish a localized glitch from a systemic failure.

Performance in this layer is measured by the agent’s ability to maintain context across massive datasets. The ingestion process involves real-time correlation of events across the stack—from the networking layer to the application layer. This allows the system to understand that a spike in database latency is not a standalone event but is likely caused by a specific deployment that occurred minutes prior. By centralizing this perception, the technology removes the data silos that typically prevent human teams from seeing the bigger picture during a high-pressure crisis.
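The correlation step described above can be sketched in a few lines. This is a minimal illustration, not a description of any particular product: the `Event` type, the event labels, the service names, and the 15-minute window are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    kind: str          # hypothetical labels: "deployment" or "latency_spike"
    service: str
    timestamp: datetime


def recent_deployments(spike: Event, events: list[Event],
                       window: timedelta = timedelta(minutes=15)) -> list[Event]:
    """Return deployments that happened within `window` before the spike, newest first."""
    candidates = [
        e for e in events
        if e.kind == "deployment"
        and timedelta(0) <= spike.timestamp - e.timestamp <= window
    ]
    return sorted(candidates, key=lambda e: e.timestamp, reverse=True)


# Illustrative data only: two deployments, then a database latency spike.
events = [
    Event("deployment", "checkout-api", datetime(2024, 1, 1, 11, 0)),
    Event("deployment", "orders-db-migrations", datetime(2024, 1, 1, 11, 52)),
    Event("latency_spike", "orders-db", datetime(2024, 1, 1, 12, 0)),
]
spike = events[-1]
suspects = recent_deployments(spike, events)
```

Here only the deployment made eight minutes before the spike falls inside the window, so it becomes the first suspect. A real agent would presumably weigh many more signals than time proximity; the point is only that events from different layers are joined on a shared timeline.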

Reasoning: Autonomous Decision-Making

Once the data is perceived, the reasoning component of the architecture takes center stage. This involves using high-parameter models to synthesize the gathered information and determine a logical path forward. The reasoning engine does not simply match patterns; it evaluates causal relationships within the system. For example, when an agent detects an increase in error rates, it queries the codebase, reviews recent configuration changes, and assesses the current resource utilization to hypothesize the root cause.

This autonomous decision-making process represents a significant technical hurdle, as the system must weigh the risks of various interventions. An agent might decide that a simple pod restart is sufficient, or it might determine that a full rollback is the only way to ensure system stability. This level of technical sophistication is what distinguishes an agent from an advisory chatbot. The agent’s ability to navigate these decisions in real-time dramatically reduces the time spent in troubleshooting meetings, although it necessitates a rigorous framework for governance and safety.
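One simple way to picture the trade-off between a pod restart and a full rollback is to score each candidate action on its estimated chance of success and its potential blast radius. The sketch below is an assumption-laden illustration: the `Intervention` fields, the numeric estimates, and the 0.5 risk limit are invented for the example and would in practice come from the agent's own analysis and the organization's policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Intervention:
    name: str
    success_probability: float  # estimated chance the action resolves the incident
    blast_radius: float         # 0.0 (no user impact) to 1.0 (risk of full outage)


def choose_intervention(options: list[Intervention],
                        max_blast_radius: float = 0.5) -> Optional[Intervention]:
    """Pick the most likely fix among the options whose risk stays within the limit."""
    allowed = [o for o in options if o.blast_radius <= max_blast_radius]
    if not allowed:
        return None  # nothing is safe enough, so the decision goes to a human
    # Prefer higher success probability; break ties with the smaller blast radius.
    return max(allowed, key=lambda o: (o.success_probability, -o.blast_radius))


# Hypothetical estimates for three candidate actions.
options = [
    Intervention("restart_pod", success_probability=0.55, blast_radius=0.05),
    Intervention("rollback_release", success_probability=0.90, blast_radius=0.30),
    Intervention("failover_region", success_probability=0.95, blast_radius=0.80),
]
choice = choose_intervention(options)
```

With these numbers the regional failover is excluded as too risky, and the rollback is chosen over the restart because it is more likely to work. Returning `None` when no option fits the risk limit is a deliberate design choice: it leaves room for the governance framework the text calls for.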

Current Trends: The Shift Toward Managed Autonomy

A prominent trend currently reshaping the sector is the move away from unconstrained autonomy toward a model of managed or bounded intelligence. Initial excitement surrounding fully autonomous agents has been tempered by the reality of production risks, leading to architectures where AI acts as a co-pilot with strict guardrails. Organizations are increasingly adopting human-in-the-loop systems where the agent performs the complex analysis and suggests a solution, but the final execution requires a human signature. This shift reflects a maturing understanding that machine intelligence excels at processing volume, while human intelligence excels at evaluating consequences.

Innovation is also moving toward specialized, domain-specific models rather than relying on general-purpose linguistic engines. These models are being fine-tuned on vast repositories of infrastructure-as-code and historical incident reports. This specialization improves the accuracy of the agent’s reasoning and reduces the likelihood of hallucinations, a critical requirement for any tool managing production environments. Moreover, there is a growing emphasis on agentic observability, where the logic used by the AI to reach a conclusion is logged and made transparent to the engineering team.
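The human-in-the-loop pattern and the logged reasoning described above can be combined in a small approval gate. The following is a rough sketch under stated assumptions: `Proposal`, `ApprovalGate`, the JSON log format, and the `run` callback are hypothetical names chosen for illustration, not part of any specific tool.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Callable, Optional


@dataclass
class Proposal:
    action: str
    reasoning: list[str]               # the agent's stated evidence, kept for audit
    approved_by: Optional[str] = None  # filled in only when a human signs off


@dataclass
class ApprovalGate:
    audit_log: list[str] = field(default_factory=list)

    def _record(self, event: str, proposal: Proposal) -> None:
        # Each entry is one JSON line so the reasoning stays inspectable later.
        self.audit_log.append(json.dumps({"event": event, **asdict(proposal)}))

    def propose(self, action: str, reasoning: list[str]) -> Proposal:
        proposal = Proposal(action, reasoning)
        self._record("proposed", proposal)
        return proposal

    def approve(self, proposal: Proposal, engineer: str) -> None:
        proposal.approved_by = engineer
        self._record("approved", proposal)

    def execute(self, proposal: Proposal, run: Callable[[str], str]) -> str:
        if proposal.approved_by is None:
            raise PermissionError("human approval required before execution")
        result = run(proposal.action)
        self._record("executed", proposal)
        return result


gate = ApprovalGate()
proposal = gate.propose("rollback_release", ["error rate rose after the latest deploy"])

blocked = False
try:
    gate.execute(proposal, run=lambda action: f"ran {action}")
except PermissionError:
    blocked = True  # the agent cannot act on its own

gate.approve(proposal, engineer="on-call-engineer")
result = gate.execute(proposal, run=lambda action: f"ran {action}")
```

The first call to `execute` is refused because nobody has signed off. After approval, the same call succeeds, and the log holds the proposal, the approval, and the execution together with the reasoning that led to them.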

Real-World Applications: Deployment Successes

In practice, AI agents are proving most effective in the realm of automated incident triage. Many enterprise organizations have deployed agents that monitor alert systems to provide immediate context when a page occurs. By the time a human responder is on the scene, the agent has already summarized the recent changes, pulled the relevant logs, and identified the primary suspect for the failure. This application has led to measurable reductions in Mean Time to Resolution, often cutting the initial investigative phase from thirty minutes down to mere seconds.

Another notable success is found in the governance of cloud costs and security compliance. Agents can continuously scan configuration manifests for deviations from organizational policy. If a developer attempts to deploy a resource that violates a security protocol or exceeds a budget threshold, the agent can automatically flag the Pull Request with a detailed explanation and a suggested fix. This proactive stance prevents issues from ever reaching the production stage, transforming the pipeline into a self-correcting loop that maintains high standards without slowing down the development cycle.
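A policy scan of this kind can be approximated as a function that walks the declared resources and returns findings with suggested fixes. The sketch below assumes a simplified manifest format: the keys `public_access` and `estimated_monthly_cost_usd`, the resource names, and the budget figure are invented for illustration, and posting the findings to a pull request is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    resource: str
    problem: str
    suggested_fix: str


def check_manifest(resources: list[dict], monthly_budget_usd: float) -> list[Finding]:
    """Return one finding per policy violation in a (hypothetical) parsed manifest."""
    findings = []
    for r in resources:
        # Assumed security policy: nothing may be publicly accessible.
        if r.get("public_access", False):
            findings.append(Finding(
                r["name"],
                "resource is publicly accessible",
                "set public_access to false",
            ))
        # Assumed cost policy: each resource must fit the per-resource budget.
        cost = r.get("estimated_monthly_cost_usd", 0.0)
        if cost > monthly_budget_usd:
            findings.append(Finding(
                r["name"],
                f"estimated cost ${cost:.2f} exceeds budget ${monthly_budget_usd:.2f}",
                "choose a smaller size or request a budget exception",
            ))
    return findings


# Illustrative manifest with one security violation and one budget violation.
resources = [
    {"name": "assets-bucket", "public_access": True, "estimated_monthly_cost_usd": 20.0},
    {"name": "analytics-db", "public_access": False, "estimated_monthly_cost_usd": 1200.0},
    {"name": "web-frontend", "public_access": False, "estimated_monthly_cost_usd": 80.0},
]
findings = check_manifest(resources, monthly_budget_usd=500.0)
```

Each finding pairs the problem with a suggested fix, which mirrors the flag-and-explain behaviour described above. In a real pipeline these findings would presumably be rendered as review comments rather than returned as a list.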

Critical Challenges: Technical Limitations

Despite the clear benefits, several critical challenges prevent the universal adoption of fully autonomous agents. The most significant technical limitation remains the reliability of causal reasoning in heterogeneous environments. Most AI agents perform exceptionally well in controlled cloud-native stacks but struggle when faced with the messy reality of legacy hardware and undocumented bespoke scripts. The lack of a unified data layer in many older enterprises creates blind spots that lead to inaccurate diagnoses and potentially dangerous actions.

Regulatory and security hurdles also play a major role in slowing deployment. There is a deep-seated concern regarding the security of the agents themselves, particularly if they have the authority to modify production infrastructure. A compromised agent could theoretically take down an entire global service or leak sensitive data during its ingestion process. Furthermore, the black-box nature of some AI decision-making processes conflicts with compliance requirements in highly regulated industries, where every change must be fully auditable and explainable.

Future Outlook: The Path to Full System Orchestration

Looking forward, the trajectory points toward a future of full system orchestration where the agent acts as the primary operator of the cloud. We are likely to see the development of multi-agent systems where specialized entities handle different parts of the lifecycle (one for security, one for performance, and another for cost), all coordinating under a master orchestrator. This would allow for a level of precision and speed in infrastructure management that is currently impossible for human-led teams to achieve.

The long-term impact on the industry will likely involve a redefinition of the DevOps role itself. Instead of managing servers, future engineers will focus on defining the policy and intent that the agents must follow. The breakthrough will occur when agents can not only fix problems but also predict them before they manifest, using advanced forecasting to scale resources or reroute traffic based on environmental signals. This proactive orchestration will move the industry from a reactive posture to a state of permanent optimization.
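The multi-agent arrangement described above is speculative, but its basic shape can be sketched: each specialized agent reviews a proposed change and returns a verdict, and the orchestrator proceeds only if none of them objects. Everything in this example is an assumption made for illustration, including the rules each agent applies, the shape of the `change` dictionary, and the unanimous-approval policy.

```python
from typing import Callable, NamedTuple


class Verdict(NamedTuple):
    agent: str
    approve: bool
    reason: str


def security_agent(change: dict) -> Verdict:
    # Assumed rule: only ports 80 and 443 may be opened.
    exposed = [p for p in change.get("open_ports", []) if p not in (80, 443)]
    reason = f"unexpected open ports: {exposed}" if exposed else "ports ok"
    return Verdict("security", not exposed, reason)


def performance_agent(change: dict) -> Verdict:
    # Assumed rule: at least two replicas for availability.
    ok = change.get("replicas", 0) >= 2
    return Verdict("performance", ok, "replica count ok" if ok else "fewer than 2 replicas")


def cost_agent(change: dict) -> Verdict:
    # Assumed rule: hourly cost must not exceed 10 USD.
    ok = change.get("hourly_cost_usd", 0.0) <= 10.0
    return Verdict("cost", ok, "within budget" if ok else "over hourly budget")


def orchestrate(change: dict,
                agents: list[Callable[[dict], Verdict]]) -> tuple[bool, list[Verdict]]:
    """Collect a verdict from every agent; approve only if all of them agree."""
    verdicts = [agent(change) for agent in agents]
    return all(v.approve for v in verdicts), verdicts


agents = [security_agent, performance_agent, cost_agent]
safe_change = {"open_ports": [443], "replicas": 3, "hourly_cost_usd": 4.5}
risky_change = {"open_ports": [22, 443], "replicas": 3, "hourly_cost_usd": 4.5}

approved, _ = orchestrate(safe_change, agents)
risky_approved, verdicts = orchestrate(risky_change, agents)
```

The second change is rejected because the security agent objects to port 22, even though the performance and cost agents are satisfied. Requiring unanimity is only one possible coordination policy; a real orchestrator might weigh verdicts or escalate conflicts to an engineer.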

Summary of Findings: Industry Impact

The review demonstrated that AI agents in DevOps transitioned from experimental prototypes to essential components of the delivery lifecycle. It was observed that while fully autonomous systems remained a distant goal for complex architectures, the practical application of agents in triage and governance provided immediate and significant value. The technology proved its worth by reducing the cognitive load on engineers and accelerating the feedback loops that are central to modern DevOps philosophy.

The path forward required a strategic focus on building trust through transparency and bounded autonomy. Organizations that successfully integrated these agents did so by treating them as sophisticated assistants rather than replacements for human judgment. The evolution of this technology suggested that the future of cloud operations would be defined by a collaborative intelligence, where the speed of AI is tempered by the oversight of experienced engineers. Ultimately, the industry moved toward a more resilient and efficient future where the complexity of the digital world is managed by the very tools it helped to create.