The digital landscape has shifted so fundamentally that the act of “searching” is rapidly being replaced by the act of “delegating” to autonomous AI agents that no longer simply point to websites but execute complex tasks on behalf of the user. While the previous decade was defined by the struggle to rank on the first page of a search engine, the current era is defined by the struggle to be the “trusted source” for a machine that prioritizes data integrity over marketing flair. As these agents evolve from simple chatbots into decision-making entities, the traditional methods of SEO—relying on keyword density and backlink profiles—are proving insufficient for a world where the primary consumer of web content is often an LLM-based agent rather than a human browsing a screen.

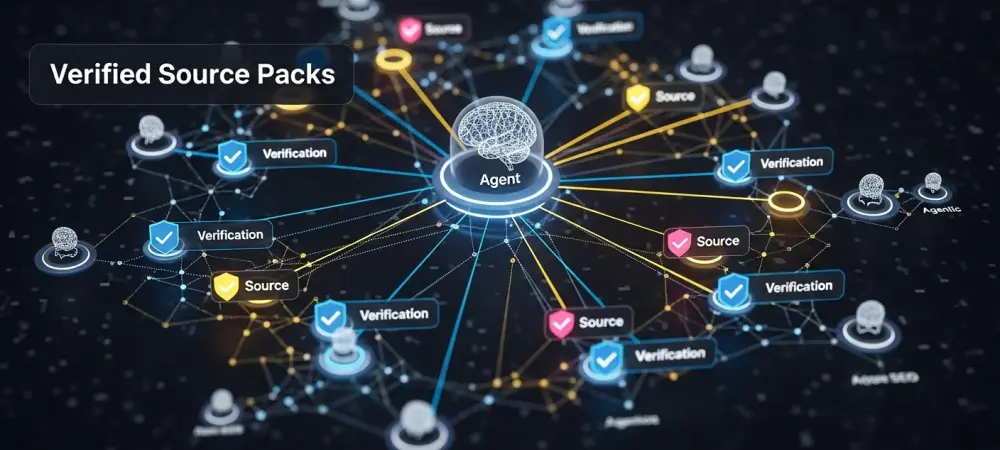

This shift has created a widening trust gap between what brands publish and what AI agents require to function safely. Agents are inherently risk-averse; if a model cannot verify the “official truth” of a product price, a warranty term, or a shipping exception, it will either hallucinate, hedge its response with vague language, or bypass the brand entirely in favor of a more structured competitor. To bridge this gap, a new infrastructure is emerging: Verified Source Packs. These are not just metadata or schema; they are comprehensive, machine-readable artifacts that package a brand’s operational reality into a format that agents can ingest, verify, and act upon with far greater confidence than scraped page content allows.

The evolution of these source packs represents the next critical layer of SEO infrastructure. By moving beyond simple link retrieval toward a model of autonomous execution, businesses must now treat their data as a product in itself. This analysis explores how these packs function, the technical standards currently being adopted by industry leaders, and why the transition from “content marketing” to “operational truth” is the most significant pivot for technical SEO leads in the current market.

The Evolution of Machine Trust: From Schema to Verified Source Packs

Data Trends: The Rise of Agentic Retrieval

The transition from structured data, like Schema.org, to Verified Source Packs marks a significant leap in how machines interact with the web. In the past, Schema was used primarily to help crawlers interpret the context of a page, for example by telling a search engine that a string of numbers was a price or a date. However, as agentic retrieval becomes a more common mode of interaction, agents need more than just context; they need a definitive, verifiable dataset that can be used for “inference-time” decision-making. LLM-driven interactions account for a growing share of web traffic, and traditional web scraping is poorly suited to complex, multi-step tasks that require high precision.

To meet this demand, the industry has seen the emergence of new conventions such as llms.txt, a proposed standard in which a site publishes a Markdown file at /llms.txt that gives AI models a curated map of its most important content. Platform providers like Yoast and Optimizely have already begun adopting these standards, signaling a move toward a more organized, machine-facing web. This trend suggests that the future of visibility is no longer about how well a page “looks” to a crawler, but how efficiently its core truths can be extracted and validated by an agent in real time.
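As a rough illustration of what such a curated map can look like, the sketch below renders a small llms.txt-style file. It assumes the general shape described in the public llms.txt proposal (a title, a short blockquote summary, then sections of annotated links); the brand, URLs, notes, and the `render_llms_txt` helper are hypothetical and not taken from any vendor's implementation.

```python
from dataclasses import dataclass


@dataclass
class Link:
    title: str
    url: str
    note: str = ""


def render_llms_txt(site_name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Render a Markdown document: H1 title, blockquote summary, H2 link sections."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for link in links:
            # The note is optional; append it after a colon only when present.
            suffix = f": {link.note}" if link.note else ""
            lines.append(f"- [{link.title}]({link.url}){suffix}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical brand and URLs, for illustration only.
EXAMPLE = render_llms_txt(
    "Example Outdoor Co.",
    "Official policies and product data for Example Outdoor Co.",
    {
        "Policies": [
            Link("Returns", "https://example.com/policies/returns.md", "30-day window, exclusions listed"),
            Link("Shipping", "https://example.com/policies/shipping.md"),
        ],
        "Catalog": [Link("Product feed", "https://example.com/catalog.json", "updated daily")],
    },
)

if __name__ == "__main__":
    print(EXAMPLE)
```

Generating the file from structured data rather than writing it by hand keeps the map in step with the pages it points to, which matters if agents treat it as a starting point.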

Real-World Applications: The “Official Truth” in Practice

In the ecommerce sector, the transformation is particularly visible as brands centralize their product catalogs, warranty terms, and shipping exceptions into machine-consumable artifacts. Instead of forcing an agent to scrape five different pages to determine a return policy, companies are publishing “truth packs” that offer a single, authoritative source. This moves the focus from traditional content marketing, which is often designed to persuade, to operational logic, which is designed to inform. By publishing explicit constraints, such as specific geographic limitations or eligibility rules, brands reduce the risk that models hallucinate and make promises the business cannot keep.

Technically, this is being achieved through OpenAPI specifications and the Model Context Protocol (MCP), which give AI agents a documented, predictable interface to brand data. Furthermore, companies are increasingly using C2PA-inspired provenance techniques to secure their data. By using cryptographic signatures and hashed manifests, a brand lets an agent verify that a retrieved piece of information has not been tampered with and originated from the official domain. This sets a technical bar that traditional SEO never required but that is now essential for maintaining brand integrity in an automated ecosystem.
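The hashed-manifest idea can be sketched with the Python standard library alone. The example below is a minimal sketch under stated assumptions: the manifest layout, the file name `returns.json`, and the policy fields are all hypothetical, and this is not the C2PA manifest format. It also covers only integrity. Proving origin would additionally require signing the manifest with a private key (for example Ed25519 via a cryptography library), which is omitted here.

```python
import hashlib
import json


def canonical_bytes(document: dict) -> bytes:
    """Serialize with sorted keys and fixed separators so the hash is stable."""
    return json.dumps(document, sort_keys=True, separators=(",", ":")).encode("utf-8")


def build_manifest(artifacts: dict[str, dict]) -> dict:
    """Map each artifact name to the SHA-256 digest of its canonical JSON."""
    return {
        "algorithm": "sha256",
        "artifacts": {
            name: hashlib.sha256(canonical_bytes(doc)).hexdigest()
            for name, doc in artifacts.items()
        },
    }


def verify_artifact(name: str, document: dict, manifest: dict) -> bool:
    """Return True only if the retrieved document matches the published digest."""
    expected = manifest["artifacts"].get(name)
    actual = hashlib.sha256(canonical_bytes(document)).hexdigest()
    return expected is not None and expected == actual


# Hypothetical truth-pack entry with explicit constraints.
RETURNS_POLICY = {
    "policy": "returns",
    "window_days": 30,
    "eligible_regions": ["US", "CA"],
    "exclusions": ["final-sale items"],
}
MANIFEST = build_manifest({"returns.json": RETURNS_POLICY})

# A copy that a third party has altered no longer matches the manifest.
TAMPERED = {**RETURNS_POLICY, "window_days": 90}

if __name__ == "__main__":
    print(verify_artifact("returns.json", RETURNS_POLICY, MANIFEST))
    print(verify_artifact("returns.json", TAMPERED, MANIFEST))
```

Canonical serialization is the design choice that matters here: without sorted keys and fixed separators, two semantically identical JSON documents could hash differently and fail verification for no real reason.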

Expert Perspectives on the Agentic SEO Landscape

The Technical SEO Pivot: Data Governance and Index Hygiene

Traditional SEO roles are undergoing a massive evolution, shifting away from simple keyword optimization toward complex data governance and version control. Technical SEO leads are now finding themselves acting as “index hygienists” for non-human users, ensuring that the machine-readable versions of their sites are as clean and updated as the user-facing ones. Industry experts argue that the currency of the modern web is no longer just “authority” in the sense of backlinks, but “structure, provenance, and freshness.” If a machine cannot determine the last time a piece of data was updated, it will likely treat that data as stale and unreliable.
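The freshness point can be made concrete with a small check an agent-side consumer might run. This is a sketch, not a documented behavior of any particular agent: the `last_updated` field name, the ISO 8601 timestamp format, and the 24-hour threshold are assumptions chosen for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def is_fresh(record: dict, max_age: timedelta, now: Optional[datetime] = None) -> bool:
    """Treat a record as fresh only if it carries a parseable, recent timestamp."""
    now = now or datetime.now(timezone.utc)
    stamp = record.get("last_updated")
    if not stamp:
        return False  # No timestamp at all: assume stale.
    try:
        updated = datetime.fromisoformat(stamp)
    except (TypeError, ValueError):
        return False  # Unparseable timestamp: assume stale.
    if updated.tzinfo is None:
        return False  # Without a UTC offset the moment is ambiguous.
    age = now - updated
    # Reject timestamps from the future as well as ones that are too old.
    return timedelta(0) <= age <= max_age


# Fixed reference time so the example is reproducible (hypothetical date).
NOW = datetime(2025, 1, 10, 12, 0, tzinfo=timezone.utc)

if __name__ == "__main__":
    record = {"sku": "TENT-2P", "last_updated": "2025-01-10T09:00:00+00:00"}
    print(is_fresh(record, timedelta(hours=24), now=NOW))
```

The function defaults to "stale" whenever the timestamp is missing or ambiguous, mirroring the behavior described above: data that cannot prove its age is treated as unreliable.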

Moreover, this shift requires a new level of collaboration between SEO, legal, and product teams. Since Verified Source Packs often contain legally binding information like terms of service or pricing logic, the “index” is no longer just a marketing asset; it is a legal one. Technical SEOs are now responsible for managing the “truth domains” of a company, ensuring that the information provided to agents matches the current operational state of the business. This necessitates a move toward “Contract Mode” endpoints, where agents can perform live validation of data rather than relying on cached, potentially outdated information.

Machine Trust vs. Human Branding: The New Authority Signals

While human branding relies on emotional resonance and visual identity, machine trust is built on a foundation of cryptographic certainty. Many leaders in the field suggest that we are currently in an “early sitemap” era, reminiscent of the early 2000s when those who adopted clean, structured signals gained a massive long-term advantage over those who did not. In the agentic era, the brands that provide the most “frictionless” data will be the ones that agents recommend most frequently. It is a pragmatic survival strategy: agents are designed to complete tasks efficiently, and they will naturally gravitate toward sources that make that completion easy and risk-free.

The distinction between persuading a human and informing an agent is critical. A human might be swayed by a beautiful layout or a compelling narrative, but an agent only cares about the accuracy of the underlying facts. Consequently, the “authority signals” of the future are becoming technical in nature. A signed manifest that proves a document’s origin is becoming more valuable to an AI agent than a thousand high-quality backlinks. This does not mean branding is dead, but it does mean that branding now has a technical prerequisite: if the machine doesn’t trust the data, the human will never see the brand.

The Future of Verified Source Packs: Challenges and Implications

Technological Integration: Cryptography and Real-Time Validation

Looking ahead, the integration of cryptographic signatures and hashed manifests is likely to become a standard requirement for ensuring content integrity against AI-generated misinformation. As the web becomes flooded with synthetic content, agents will require a way to distinguish between “official” brand data and “unofficial” third-party interpretations. The move toward “Contract Mode” endpoints allows for this by enabling live, secure validation of rapidly changing data like inventory levels or dynamic pricing. This helps keep an agent from recommending a product that is out of stock or quoting a price that has expired.
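One way to picture a “Contract Mode” check is as a function that compares what an agent is about to tell the user against the brand's live state. The sketch below is an assumption-laden illustration: “Contract Mode” is this article's term rather than a published specification, an in-memory dictionary stands in for a real HTTP endpoint, and every name, SKU, and reason code is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Quote:
    """What the agent believes: a price for a SKU, valid until a deadline."""
    sku: str
    price_cents: int
    expires_at: datetime


@dataclass(frozen=True)
class LiveState:
    """What the brand's system of record says right now."""
    price_cents: int
    in_stock: int


def validate_quote(quote: Quote, live: dict[str, LiveState], now: datetime) -> tuple[bool, str]:
    """Return (is_valid, reason) so the agent can explain a refusal."""
    state = live.get(quote.sku)
    if state is None:
        return False, "unknown_sku"
    if now >= quote.expires_at:
        return False, "quote_expired"
    if state.in_stock <= 0:
        return False, "out_of_stock"
    if state.price_cents != quote.price_cents:
        return False, "price_changed"
    return True, "ok"


# Hypothetical live catalog and reference time.
NOW = datetime(2025, 1, 10, 12, 0, tzinfo=timezone.utc)
LIVE = {
    "TENT-2P": LiveState(price_cents=19900, in_stock=4),
    "STOVE-1": LiveState(price_cents=4500, in_stock=0),
}

if __name__ == "__main__":
    quote = Quote("TENT-2P", 19900, NOW + timedelta(hours=1))
    print(validate_quote(quote, LIVE, NOW))
```

Returning a machine-readable reason alongside the boolean lets the agent choose a sensible next step, such as refreshing the quote after `price_changed` instead of simply abandoning the brand.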

The Model Context Protocol (MCP) is likely to play a central role in this integration, serving as a bridge between diverse brand ecosystems and various AI agents. By establishing a common language for these interactions, the industry can avoid a fragmented landscape of bespoke integrations. However, this also introduces a need for constant infrastructure maintenance. Unlike a traditional website that might sit unchanged for weeks, a Verified Source Pack requires an “owner” who manages the freshness of the data, handles credential renewals, and monitors for any breaks in the machine-readable path.

Industry-Specific Implications: Regulatory and Market Hurdles

Different industries face unique hurdles when implementing these source packs, particularly those with strict regulatory requirements. In healthcare, for instance, companies must navigate the delicate balance between providing automated clinical data and adhering to strict privacy laws like HIPAA. The packs in this sector must be carefully scoped to include provider credentials and service coverage without straying into patient-specific advice. Similarly, the finance industry must manage the volatility of interest rates and the legal necessity of non-negotiable disclosure language. For these sectors, the “truth pack” is not just a convenience; it is a mechanism for regulatory compliance in an automated world.

The broader market impact of this trend is the risk of “infrastructure decay” for those who fail to adapt. As AI agents become a primary way users interact with the web, businesses that do not provide machine-facing data may find their digital footprint shrinking. The need for dedicated owners of machine-facing data will likely lead to new corporate roles focused entirely on “AI Relations” or “Agentic Data Governance.” This shift will redefine the competitive landscape, where the winners are those who can provide the most reliable, verifiable truth to the algorithms that now manage human intent.

Strategic Summary: Preparing for the Agentic Era

The transition toward Agentic SEO is characterized by a fundamental reorganization of how digital information is packaged and delivered. At its core, a Verified Source Pack rests on four distinct pillars: Content, Structure, Provenance, and Discoverability. Content represents the operational truth of the business: facts that the company is willing to defend. Structure ensures that these facts are presented in a boring, predictable format like JSON or OpenAPI that machines can parse without error. Provenance uses cryptographic tools to show that the information is authentic and unmodified. Finally, Discoverability ensures that these packs are hosted in stable, predictable locations that agents can find reliably.

The distinction between the layers of the web becomes clearer in this light. Pages are designed to persuade humans, Schema clarifies the context of those pages, and Verified Source Packs package truth for agents. Technical SEO leads who recognize this early can begin inventorying their “truth domains” (such as pricing, policies, and inventory) before agentic behavior becomes the primary mode of web consumption. By establishing a canonical, machine-readable source of truth, organizations protect themselves against the risks of model hallucination and third-party misinformation.

Ultimately, the move toward Verified Source Packs is a move toward transparency and reliability. As the digital ecosystem becomes more automated, the value of a brand lies not just in its message but in its ability to provide high-fidelity data that machines can trust. This era calls for a departure from traditional marketing strategies in favor of technical rigor and data integrity. Organizations that bridge the trust gap by treating their data as a verifiable product position themselves to stay relevant in a world where the most important “customer” is often an AI agent looking for the truth.