Search visibility is no longer decided by a single spider; AI crawlers and real-time agents now shape how facts flow, how pages are cited, and who gets the credit, shifting the ground beneath every site that still audits only for Googlebot. That shift has practical stakes: Cloudflare estimates that 30.6% of web traffic is bots, and the AI share of that slice keeps rising, which means a growing portion of content discovery, interpretation, and attribution happens through systems that may neither execute JavaScript nor follow familiar directives. Technical teams can keep pace, but it requires broadening the audit lens to handle new user agents with different motives, capabilities, and referral behaviors.

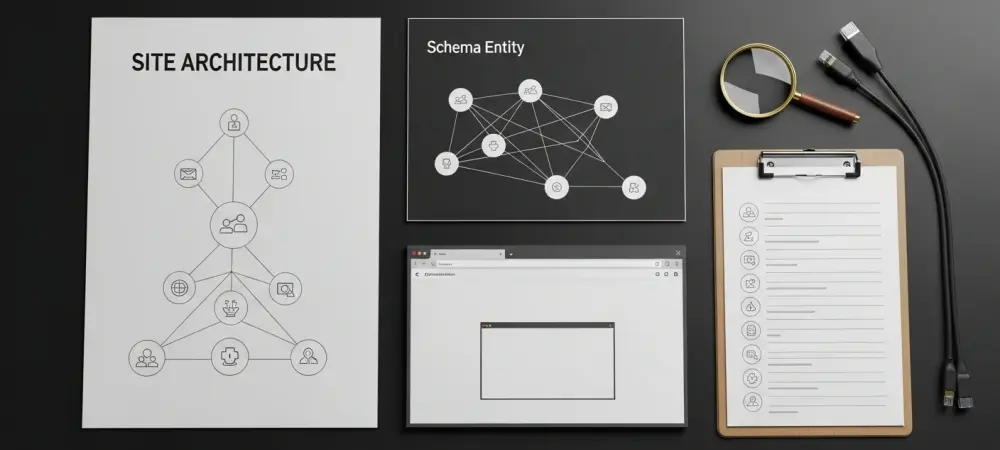

This research summary presents a pragmatic extension to the standard technical SEO audit so that sites remain discoverable, interpretable, and citable in AI-driven experiences. The work maps how today’s AI crawlers and agents interact with content to five concrete audit layers: explicit access policies, static HTML availability, robust structured data, semantic and accessible markup, and discoverability signals that influence whether machines find and credit key facts. The result is a blueprint that reuses core SEO skills while adapting them to a multi-agent web.

Central Thesis and Key Questions for Tech SEO in the AI Crawler Era

The central claim is straightforward: a traditional audit aimed at crawlability, indexability, performance, and basic schema must expand to account for AI crawlers that consume unrendered HTML and user-triggered agents that browse and summarize on demand. Where a single consumer once set the rules, a diverse ecosystem now applies different constraints, from non-rendering fetchers to authenticated proxies that disregard robots.txt. Without updating the audit, organizations risk invisibility in AI search, poor citation rates, and muddled identity resolution.

From that thesis flow several guiding questions. Which agents should be allowed, throttled, or blocked, and how should those choices be justified against referral value and brand risk? Can non-rendering crawlers access the critical text that defines products, pricing, and claims? Is site identity machine-resolvable, with connected entities and enough data density to support reliable citation? And, finally, how should traffic from agents be verified and measured so decisions reflect real patterns rather than spoofed signals?

The intended audience includes technical SEOs, developers, and digital leaders who own crawl management, rendering strategy, structured data, and analytics. The objective is to add five audit layers—each with tests, tooling, and decision rules—so teams can steer content toward machine readability and attribution, not just human usability in a browser.

Background, Market Shift, and Why It Matters Now

The makeup of automated traffic has changed meaningfully. AI-oriented user agents now include training crawlers such as GPTBot, ClaudeBot, and CCBot; AI search crawlers such as OAI-SearchBot and PerplexityBot; and user-triggered agents such as Google-Agent, ChatGPT-User, and Claude-User that fetch and summarize in real time. These categories differ in whether they render JavaScript, honor robots.txt, or send referral traffic, which means a single rule of thumb no longer suffices.

The data reinforce the urgency. With roughly 30.6% of traffic attributed to bots, quarter-over-quarter gains in AI agents are not a curiosity but a durable trend. Yet most audits still assume Googlebot’s behavior: deferred rendering is acceptable because Google executes scripts; identity is “nice to have” because ranking signals seem clear; and referrals are measured primarily through Search Console data. Those assumptions break when a crawler does not render, a proxy ignores robots.txt, or a summary engine cites only well-structured, unambiguous facts.

The stakes are concrete. Visibility depends on whether pages are accessible as static HTML and make it into AI search indexes. Attribution depends on whether identity is clear, schema is complete, and key facts are extractable as self-contained statements. Governance depends on balancing value and risk (some crawlers train models with little to no referral return), so policies must be deliberate, recorded, and revisited as behaviors evolve.

Research Methodology, Findings, and Implications

Methodology

The approach synthesized vendor documentation, standards, and traffic analyses into a modern audit blueprint that ties agent behaviors to specific technical controls and content structures. The research mapped categories—training crawlers, AI search crawlers, user-triggered real-time agents—to the levers that actually govern access, renderability, and extractability. Where reliable figures existed, they were used to anchor decision rules; where evidence was directional, recommendations were calibrated with caveats.

Data sources included official user agent documentation and verification methods; server-side logs and Cloudflare dashboards to gauge crawl-to-referral ratios; and industry research on accessibility and schema adoption. Because agent identity spoofing remains common, the study emphasized IP verification where supported, particularly for agents like Google-Agent that do not rely on robots.txt for access control.
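The IP verification step mentioned above can be sketched roughly as follows. This is a minimal illustration, assuming the operator has already obtained each vendor's published IP ranges. The CIDR blocks shown are documentation-reserved placeholders (RFC 5737), not real crawler ranges, and `is_verified_agent` is a hypothetical helper name.

```python
import ipaddress

# Placeholder CIDR blocks (RFC 5737 documentation ranges), NOT real crawler ranges.
# In practice these would be loaded from each vendor's published list.
PUBLISHED_RANGES = {
    "GPTBot": ["192.0.2.0/24"],
    "PerplexityBot": ["198.51.100.0/24"],
}


def is_verified_agent(user_agent: str, remote_ip: str) -> bool:
    """Return True only if the UA names a known agent AND the IP is in its ranges."""
    ip = ipaddress.ip_address(remote_ip)
    ua = user_agent.lower()
    for agent, cidrs in PUBLISHED_RANGES.items():
        if agent.lower() in ua:
            # A matching UA string alone is not trusted; the source IP must match too.
            return any(ip in ipaddress.ip_network(cidr) for cidr in cidrs)
    return False
```

A request that claims to be GPTBot but arrives from outside the listed ranges would be treated as spoofed and excluded from crawl-to-referral calculations.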

Testing leaned on practical, repeatable techniques. Rendering checks used curl, View Source, and text-only browsers such as Lynx to confirm static HTML availability of mission-critical text. Accessibility and semantics were audited with axe or Lighthouse and validated with accessibility snapshots via Playwright MCP, then spot-checked in VoiceOver or NVDA to mirror how agents consume the accessibility tree. JSON-LD was validated with structured data testing tools and Google’s Rich Results Test, with special attention to completeness and relationships.
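The rendering check can be automated along these lines. The sketch below assumes the raw HTML has already been fetched without executing JavaScript (for example with curl); `missing_from_raw_html` and the sample phrases are hypothetical names chosen for illustration, not part of any tool named above.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text nodes while skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.chunks.append(data)


def missing_from_raw_html(raw_html, required_phrases):
    """Return the required phrases that do NOT appear in the unrendered text."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace so phrases split across lines still match.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [p for p in required_phrases if p.lower() not in text]
```

An empty result means every mission-critical phrase is present in the static HTML; any returned phrase is content that a non-rendering crawler would likely not see.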

Findings

Five audit layers emerged as essential to AI-era readiness. First, access control must move from defaults to explicit, per-agent decisions. Grouping by purpose (training, AI search, user-triggered) clarifies trade-offs and informs policies in robots.txt for named crawlers, while acknowledging special cases like Google-Agent, which functions as a user proxy and ignores robots.txt. In those cases, server-side controls, IP allowlists, tokens, or authentication gates become the only reliable levers. Maintaining a living register of agents, IP ranges, and verification steps reduces guesswork and curbs spoofing.

Second, JavaScript rendering is a pass/fail gate for visibility. Many AI crawlers do not execute scripts; if critical text is missing from the raw HTML, those agents will not see it. The audit therefore favors server-side rendering (SSR), static site generation (SSG), or pre-rendering as pragmatic solutions. Frameworks such as Next.js, Nuxt, and Angular Universal make SSR/SSG feasible, while pre-rendering can bridge legacy single-page apps until a full rollout is viable.

Third, structured data needs depth and connectedness, not just presence. JSON-LD remains the preferred format, but the value comes from completeness (properties like price, availability, author, and publisher filled thoroughly) and from linking entities with sameAs to authoritative profiles. Evidence from industry leaders suggests that richer schema improves machine understanding and supports AI features, although a strict causal link to citation frequency has not been proven. Treat schema as scaffolding for identity and as groundwork for future, machine-verifiable claims.
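A completeness audit of JSON-LD along the lines described above might look like the following sketch. The `REQUIRED` property lists are hypothetical audit expectations chosen for illustration, not requirements stated by schema.org or by any validator, and `schema_gaps` is an assumed helper name.

```python
import json

# Hypothetical per-type expectations for this audit; adjust to the site's own policy.
REQUIRED = {
    "Product": ["name", "offers"],
    "Offer": ["price", "priceCurrency", "availability"],
    "Article": ["headline", "author", "publisher", "datePublished"],
    "Organization": ["name", "url", "sameAs"],
}


def schema_gaps(node, path="$"):
    """Recursively list missing or empty properties for every typed JSON-LD node."""
    gaps = []
    if isinstance(node, list):
        for i, item in enumerate(node):
            gaps += schema_gaps(item, f"{path}[{i}]")
    elif isinstance(node, dict):
        node_type = node.get("@type")
        if isinstance(node_type, str):
            for prop in REQUIRED.get(node_type, []):
                if node.get(prop) in (None, "", [], {}):
                    gaps.append(f"{path} ({node_type}): missing {prop}")
        # Descend into nested entities such as offers, author, or publisher.
        for key, value in node.items():
            gaps += schema_gaps(value, f"{path}.{key}")
    return gaps


doc = json.loads(
    '{"@context": "https://schema.org", "@type": "Product", "name": "Acme Widget",'
    ' "offers": {"@type": "Offer", "price": "19.99", "priceCurrency": "USD"}}'
)
print(schema_gaps(doc))  # ['$.offers (Offer): missing availability']
```

Because the check walks nested nodes, it flags thin entities wherever they sit in the graph, which is where copy-and-paste templates tend to leave gaps.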

Fourth, semantic HTML and the accessibility tree matter because many agents use that representation to navigate and act. A clickable div is not a button; a skipped heading level fractures document logic; unlabeled inputs become invisible. Clean landmarks, coherent headings, and native controls make the accessibility tree faithful and actionable. Automated checks catch common issues, but real assistive tech and accessibility snapshots surface structural flaws that trip up agents. ARIA should be used sparingly and correctly; misapplied roles often degrade semantics.

Fifth, discoverability signals tip the balance between being read and being cited. Monitoring AI bot activity, verifying agent identity, and separating spoofed from genuine requests guide governance and ROI calculations. Clear organizational and author entities anchor attribution, while content placed early on the page and phrased as self-contained, citable statements travels better in summaries. An llms.txt file may be harmless and cheap to add, but it should not distract from foundational improvements with proven impact.
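The three semantic failures named in the fourth finding (clickable divs, skipped heading levels, unlabeled inputs) can be caught with a small static lint. This is a rough sketch using only the Python standard library, not a substitute for axe, Lighthouse, or assistive-technology testing. `SemanticLint` and `lint_html` are hypothetical names, and the label check only recognizes `for`/`id` pairs and ARIA labels, not inputs wrapped inside a `<label>`.

```python
from html.parser import HTMLParser


class SemanticLint(HTMLParser):
    """Flags clickable non-buttons, skipped heading levels, and unlabeled inputs."""

    def __init__(self):
        super().__init__()
        self.issues = []
        self._last_heading = 0
        self._label_targets = set()
        self._inputs = []  # (id, has_aria_label)

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag in ("div", "span") and "onclick" in a and a.get("role") != "button":
            self.issues.append(f"clickable <{tag}> without button semantics")
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            level = int(tag[1])
            if self._last_heading and level > self._last_heading + 1:
                self.issues.append(
                    f"heading jumps from h{self._last_heading} to h{level}"
                )
            self._last_heading = level
        if tag == "label" and a.get("for"):
            self._label_targets.add(a["for"])
        if tag == "input" and a.get("type") not in ("hidden", "submit", "button"):
            has_aria = bool(a.get("aria-label") or a.get("aria-labelledby"))
            self._inputs.append((a.get("id"), has_aria))

    def report(self):
        unlabeled = [
            i for i, has_aria in self._inputs
            if not has_aria and i not in self._label_targets
        ]
        return self.issues + [f"input without label: id={i}" for i in unlabeled]


def lint_html(html):
    parser = SemanticLint()
    parser.feed(html)
    parser.close()
    return parser.report()
```

A lint like this is best treated as a first pass; accessibility snapshots and screen-reader spot checks remain the way to see what an agent actually receives.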

Implications

Practical implications are immediate. Sites gain AI search visibility when essential content exists in static HTML and when identity is explicit in schema and prose. Access choices influence brand reach and risk; organizations that document and periodically revisit allow/deny policies make trade-offs with eyes open and can adjust as referral patterns shift.

Theoretically, the findings recast technical SEO as machine-centered design. The discipline becomes less about coaxing a single indexer and more about signaling to a federation of automated readers that extract, rank, and act. Data density, connected entities, and semantic clarity reduce ambiguity and improve retrieval, which in turn support accurate citation and downstream recommendations.

Societally and organizationally, accessibility work now returns value on two fronts: inclusive user experiences and agent compatibility. As agent identities proliferate, governance maturity becomes a necessity. Policy exceptions, spoofing defenses, and consent-aware access models will be table stakes, especially for sectors handling sensitive or proprietary material.

Reflection and Future Directions

Reflection

Several realities shaped the blueprint. Agent labels, capabilities, and verification methods continue to evolve, so any fixed list decays quickly; the durable asset is a process for validating identity and recording policy choices. JavaScript-heavy stacks remain common, which makes phased SSR/SSG adoption—starting with high-value routes and fallback pre-rendering—a pragmatic path rather than an ideological shift.

Evidence quality also varied. Signals that schema and data density aid AI understanding are strong but mostly directional. This summary therefore prioritizes completeness and connections in JSON-LD while avoiding promises about direct citation gains. The thread running through all guidance is testability: if a measure cannot be verified in logs, snapshots, or raw HTML, it should not anchor a policy.

Challenges were predictable yet nontrivial. Spoofed traffic inflated perceived AI interest until IP validation or server-side checks filtered it. ARIA misuse, often introduced during hurried redesigns, undermined semantics even on visually polished pages. And hype-prone suggestions, such as serving raw markdown or relying on llms.txt as a silver bullet, distracted from structural work that consistently moves the needle.

Future Directions

Research opportunities remain open. Controlled experiments that vary schema completeness and relationship richness could illuminate their contribution to AI citation frequency. Benchmarks that correlate accessibility snapshots with agent navigation success would provide a shared yardstick for structure quality. Both would move the conversation from plausibility to measurable effect.

Standards and infrastructure are likely to mature. Machine-verifiable facts (publication dates, prices, policies) that are signed and versioned could enable agents to trust and act on information with fewer heuristics. Shared taxonomies for agent identity and verification would reduce spoofing and clarify consent signals. On the engineering side, token-based, consent-aware access for user-triggered agents could protect sensitive content while preserving utility.

Measurement will broaden. Cross-channel attribution models that include AI search and agent referrals will give teams a clearer picture of value created by access decisions. Open datasets of AI citations, tied to content placement and phrasing, would help editors design for extractability without sacrificing readability.

Research Methodology, Findings, and Implications

Methodology

To ground the audit layers in reproducible practice, the research combined hands-on tests with policy reviews. For rendering, curl against production URLs and View Source confirmed whether mission-critical text appeared in the initial HTML. For semantics, automated tools surfaced common violations, and accessibility snapshots exposed how an agent would “see” controls, labels, and headings. For structured data, validators checked syntax and coverage, while entity resolution was stress-tested by following sameAs links to authoritative profiles.

Bot analytics completed the loop. Server logs and Cloudflare dashboards identified which agents arrived, what paths they requested, and how behavior aligned with published capabilities. Where available, IP allowlists provided stronger proof of identity than user agent strings, which was particularly important for Google-Agent, which required server-side logic rather than robots.txt for control.
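The log review described above can be approximated with a short script. This sketch assumes access logs in the common "combined" format and classifies requests by the agent categories named earlier in this summary; `summarize_ai_hits` is a hypothetical helper, and because it matches on user agent strings alone, its counts include any spoofed traffic until IP verification is applied.

```python
import re
from collections import Counter

# Agent tokens and categories as grouped in this summary.
AI_AGENTS = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "CCBot": "training",
    "OAI-SearchBot": "ai_search",
    "PerplexityBot": "ai_search",
    "Google-Agent": "user_triggered",
    "ChatGPT-User": "user_triggered",
    "Claude-User": "user_triggered",
}

# Combined log format: "METHOD path proto" status bytes "referer" "user-agent"
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def summarize_ai_hits(log_lines):
    """Count requests per (category, agent) based on the user agent string."""
    hits = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue  # Skip lines that are not in the expected format.
        ua = match.group("ua").lower()
        for token, category in AI_AGENTS.items():
            if token.lower() in ua:
                hits[(category, token)] += 1
                break
    return hits
```

Grouping counts by category rather than by individual agent keeps the output aligned with how access policies are decided.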

Evaluation criteria emphasized clarity and completeness. Policies needed to be documented per agent category, rendering had to supply static HTML for essential text, JSON-LD required thorough properties and connected entities, semantic HTML had to produce a clean accessibility tree, and discoverability depended on explicit identity signals and extractable content placement.

Findings

The audit layers translated into concrete, testable steps. Access decisions became a matrix keyed to purpose and referral value, with training crawlers scrutinized more closely than AI search crawlers or user proxies. Rendering checks turned subjective debates into binary outcomes: if curl could not find the product name, price, or claim in the HTML, the page failed for non-rendering agents. Schema audits shifted focus from minimal markup to dense, relationship-rich JSON-LD, closing gaps that often stemmed from copy-and-paste templates.

Semantic corrections improved both human and machine experience. Replacing clickable divs with buttons, restoring heading order, and labeling forms reduced friction for screen readers and elevated agent comprehension. Discoverability work tied content craft to machine behavior: placing key facts in the top third of the page and phrasing them so they stood alone raised the odds of accurate extraction and citation, even when summaries were brief.
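The access matrix mentioned in these findings can be kept as data and rendered into robots.txt, so that the documented policy and the deployed file do not drift apart. The sketch below is one possible shape, not the study's tooling. The allow/block decisions in the usage example are purely illustrative, and `build_robots_txt` is a hypothetical helper. Agents that ignore robots.txt, such as the user proxies discussed earlier, would still need server-side controls.

```python
def build_robots_txt(policy, default="allow"):
    """Render a per-agent policy dict ({agent: "allow" | "block"}) as robots.txt text."""
    blocks = []
    for agent, decision in sorted(policy.items()):
        rule = "Disallow: /" if decision == "block" else "Allow: /"
        blocks.append(f"User-agent: {agent}\n{rule}")
    # Catch-all group for every crawler not named explicitly.
    fallback = "Disallow: /" if default == "block" else "Allow: /"
    blocks.append(f"User-agent: *\n{fallback}")
    return "\n\n".join(blocks) + "\n"


# Illustrative decisions only; real choices depend on referral value and brand risk.
example_policy = {"GPTBot": "block", "OAI-SearchBot": "allow"}
print(build_robots_txt(example_policy))
```

Keeping the policy in version control alongside the rationale for each decision gives teams the recorded, revisitable register that the audit calls for.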

Sequencing proved decisive. Addressing identity first, then structure, then content, and finally interaction minimized rework and kept improvements compounding. Sites that tried to optimize extractability before resolving organization and author entities often saw facts reused without proper attribution, a preventable failure given straightforward schema fixes.

Implications

For operations, the takeaway is that AI readiness belongs in the technical SEO remit because the tools and habits already fit the job. Crawl management, rendering verification, structured data design, semantic validation, and log analysis align cleanly with the five layers. Teams that codify policies, automate checks, and review logs on a cadence will outpace those chasing one-off hacks.

Strategically, access becomes a lever rather than a default. Organizations can accept reduced training exposure while improving AI search visibility, or invert that posture based on brand priorities and referral patterns. Either way, the decision is measured, tested, and revisited, not assumed.

Editorially, design and content teams can collaborate to surface the most citable facts early and phrase them with clarity that travels.

Ethically and legally, better identity and verifiable facts support transparency. As agents carry more of the browsing workload, clear ownership and provenance reduce misattribution and build trust. Accessibility investments serve both people and machines, knitting inclusion and discoverability into the same technical foundation.

Reflection and Future Directions

Reflection

The process underscored that the multi-agent web rewards fundamentals. Sites that succeeded did not chase novelty; they executed on server-rendered visibility, identity-rich schema, semantically honest HTML, and disciplined analytics. Spoofing remained a persistent irritant until verification tightened, after which patterns stabilized and policy debates could rely on evidence rather than impressions.

Migration paths mattered. Teams running heavy client-side applications gained traction fastest when they carved out content-critical routes for SSR or SSG, leaving complex interactive tools to evolve later. Pre-rendering bought time, especially for evergreen pages like docs, product overviews, and pricing. Treating this as a staged transformation, not a flip of a switch, reduced risk and improved outcomes.

Caution around unproven tactics paid dividends. Adding llms.txt caused no harm but delivered no measurable lift on its own; the real gains came from structure and semantics. Similarly, overusing ARIA in hopes of “helping AI understand layout” often backfired; native elements already supplied the semantics that agents and assistive tech rely on.

Future Directions

The next horizon likely involves machine-verifiable truth. Publishing signed, versioned facts (return policies, compatibility matrices, pricing tiers) would let agents act with confidence and minimize hallucinated inferences. Standards bodies and large platforms are signaling interest in these capabilities, which suggests audits will soon include checks for verifiable data endpoints.

Identity governance will tighten. Shared taxonomies for agent verification, together with token-based or consent-aware access models, could reduce spoofing and bring predictability to user-triggered proxies. Engineering patterns for incremental SSR/SSG adoption will mature into playbooks, making it easier for legacy single-page apps to expose content without a ground-up rebuild.

Measurement frameworks need to catch up. As AI search and agent referrals contribute more value, analytics will expand beyond classic organic metrics to include agent-led discovery and citation tracking. Open, anonymized datasets of AI citations could help the ecosystem learn which content structures earn credit and why, enabling more rigorous editorial strategies.

Conclusion and Contribution to Practice

The investigation found that a modern technical SEO audit benefited from five added layers aligned to how AI crawlers and agents actually consume the web: explicit access policies, static HTML availability, robust and connected JSON-LD, semantic and accessible markup, and discoverability signals that support extraction and attribution. Evidence from traffic patterns, vendor documentation, and hands-on testing showed that sites implementing these layers achieved clearer identity resolution, broader AI search presence, and cleaner citation paths.

Practically, the path forward involved codifying per-agent policies, verifying rendering with curl and View Source, enriching schema for completeness and relationships, validating semantics with accessibility snapshots and assistive tech, and measuring agent activity with verified identities. Sequencing work as identity → structure → content → interaction reduced rework and amplified gains. Future-facing teams also prepared for token-based access to user-triggered agents and explored machine-verifiable facts to strengthen trust.

Taken together, the research pointed teams toward an audit that treated machine readability and attribution as first-class outcomes. It shifted technical SEO from optimizing exclusively for a single crawler to enabling a network of automated readers that train, index, summarize, and act. By operationalizing these findings, organizations were positioned to secure durable visibility and accurate credit across AI-driven experiences while building a foundation ready for the next wave of standards and agent capabilities.