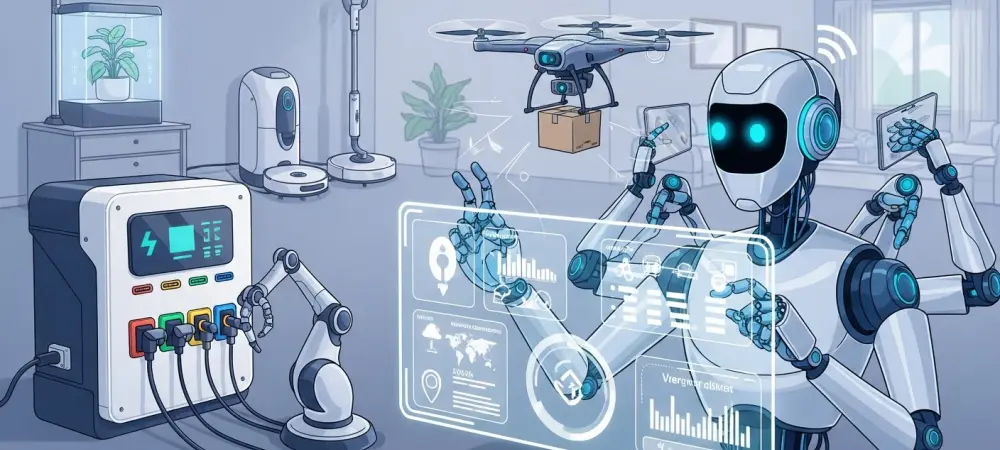

The rapid proliferation of autonomous software agents and automated procurement systems has fundamentally altered the global commercial landscape by moving the center of gravity away from human decision-makers toward highly efficient algorithmic entities that prioritize logic over emotion. For decades, the pillars of commerce were built on the foundation of human psychology, focusing on how to trigger a purchase through clever storytelling, aesthetic appeal, and the navigation of cognitive biases. However, the current shift toward an agentic economy means that a significant portion of purchasing power is now held by non-human actors that do not respond to traditional marketing tactics. This evolution necessitates a complete redesign of service architectures to accommodate these digital intermediaries, which are projected to drive more than half of all consumer purchasing activity by the end of the current decade. To remain competitive, organizations are forced to expand their strategic focus from traditional User Experience (UX) to include a robust Agent Experience (AX), ensuring that their brand remains visible and accessible to the machines that now act as the primary gatekeepers of commerce.

Mastering Machine Discovery and Evaluation

Data: The New Visibility

In the current era of automated commerce, visibility is no longer a matter of search engine optimization or high-profile billboard placement; it is defined almost entirely by machine readability and structured data availability. If a product’s essential specifications, real-time pricing, and current inventory levels are not presented in a highly structured format such as a standardized API or a specialized JSON feed, the product effectively ceases to exist in the eyes of an autonomous agent. While a human shopper might enjoy browsing a visually stunning website gallery to find the right item, a machine customer executes precise queries against databases to find the most efficient solution. Consequently, any friction encountered during this data exchange—whether it be an undocumented API endpoint or an inconsistent data field—acts as a hard barrier to entry that prevents a brand from even being considered in the initial discovery phase of the automated marketplace.
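
As a rough illustration of what a structured feed might look like, the sketch below serializes a product record to JSON and checks an incoming entry for missing fields. The field names (`sku`, `price_minor_units`, `in_stock`, and so on) and the helper names are assumptions made for this example, not an established schema.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class ProductRecord:
    """Hypothetical product record; field names are illustrative only."""
    sku: str
    name: str
    price_minor_units: int  # price in minor currency units, e.g. cents
    currency: str           # currency code such as "USD"
    in_stock: int           # units currently available


# Fields an agent would need before it can even consider the product.
REQUIRED_FIELDS = frozenset(
    {"sku", "name", "price_minor_units", "currency", "in_stock"}
)


def to_feed_entry(record: ProductRecord) -> str:
    """Serialize a record to a JSON string with stable key order."""
    return json.dumps(asdict(record), sort_keys=True)


def missing_fields(raw_entry: str) -> set:
    """Return the required fields that are absent from a JSON feed entry."""
    data = json.loads(raw_entry)
    return set(REQUIRED_FIELDS - set(data))
```

An entry that omits price or inventory fails this check immediately, which mirrors how an agent might discard an incomplete listing during discovery.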

Beyond the mere presence of data, the quality and granularity of that information determine how effectively a machine can categorize and evaluate a brand’s offerings against its competitors. For example, in the industrial procurement sector, an AI agent does not just look for a part number; it looks for metadata regarding material composition, shipping lead times, and compatibility with existing infrastructure. Brands that fail to provide this level of detail in a machine-friendly format find themselves excluded from the procurement cycle before a human ever sees their name. This shift requires companies to treat their data catalogs as dynamic, customer-facing assets rather than static back-office spreadsheets. By prioritizing technical legibility, organizations ensure that their products are not just “online,” but are actively “discoverable” by the sophisticated algorithms that now manage complex supply chains and personal shopping preferences.

From Persuasion to Verification

Marketing to non-human customers requires a fundamental pivot from the art of emotional persuasion to the science of objective verification. AI agents are entirely immune to the psychological triggers that have historically defined brand loyalty, such as catchy slogans, celebrity endorsements, or aspirational lifestyle imagery. Instead, these digital customers operate on a framework of strict verification, where every claim a brand makes must be backed by accessible and quantifiable proof. An autonomous agent will not “trust” a brand story about sustainability; it will query a blockchain or a third-party certification database to verify the actual carbon footprint of a specific SKU. In this environment, the experience is no longer about how a product makes a person feel, but rather about the accuracy and transparency of the data provided to support its performance claims.

The move toward verification means that the decision-making process for a purchase is now measured in milliseconds rather than days or weeks of research. When a machine is programmed to find the most reliable supplier for a specific component, it evaluates historical reliability data, compliance certifications, and real-time operational metrics. If a company cannot provide this evidence in a format that the agent can process and ingest immediately, it is eliminated from the selection matrix without a second thought. This creates a high-stakes environment where the “brand promise” must be mathematically provable. Organizations are now finding that their technical documentation and performance reports are their most valuable marketing collateral, as these are the only inputs that matter to the algorithmic evaluators that are increasingly responsible for executing multi-million dollar contracts and routine household purchases alike.

Building the Agent Experience (AX) Framework

The API as the Primary Interface

In the modern world of non-human commerce, the Application Programming Interface (API) has moved from a behind-the-scenes technical tool to the primary customer touchpoint, effectively replacing the traditional storefront for a large segment of the market. The quality of a brand’s Agent Experience (AX) is now measured by the stability, predictability, and clarity of its technical interfaces. Because AI agents are built on strict computational contracts, they require a high degree of consistency to operate effectively. If a brand changes its API structure without warning or experiences frequent, unpredictable downtime, the agent perceives this as a major operational risk. Unlike a human customer who might patiently refresh a page or wait for a site to come back online, an autonomous agent will immediately flag the service provider as unreliable and switch to a competitor that offers a more stable connection.

Building a superior AX also involves providing comprehensive and machine-readable documentation that allows agents to self-onboard and understand the parameters of the service without human intervention. This documentation must be treated with the same care as a flagship store’s interior design, as it is the first point of contact for the developer or the AI agent that will be interacting with the system. Predictability is the cornerstone of trust in this ecosystem; therefore, versioning strategies must be clearly communicated, and breaking changes must be avoided at all costs. By focusing on the reliability of the API as a core product feature, brands can foster long-term relationships with autonomous entities that prioritize seamless integration over all other factors. This shift highlights a new reality where the engineering team is as responsible for the customer experience as the marketing department, necessitating a deeper collaboration between these two historically separate functions.

Precision and Scalability in Data

One of the most significant challenges in designing for non-human customers is that machines lack the innate human ability to infer meaning from context or to resolve ambiguities through intuition. This makes explicit and precise data modeling a front-line business priority rather than a technical afterthought. Every unit of measurement, every metadata tag, and every piece of terminology used in a company’s data feed must be defined with absolute consistency across the entire organization. For instance, if one department lists weight in kilograms while another uses pounds, or if product categories are labeled differently across different regions, an autonomous agent will likely encounter errors that halt the transaction. Ensuring that data is standardized and unambiguous allows machines to process information with the speed and accuracy required in a high-velocity automated market.
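
The kilograms-versus-pounds problem can be handled by normalizing every weight to one canonical unit before it reaches the feed. The following is a minimal sketch under that assumption; the function name `to_kilograms` and the set of supported units are hypothetical choices for illustration.

```python
from decimal import Decimal

# Conversion factors to kilograms. The pound factor is exact by
# international definition (1 lb = 0.45359237 kg).
KG_PER_UNIT = {
    "kg": Decimal("1"),
    "g": Decimal("0.001"),
    "lb": Decimal("0.45359237"),
}


def to_kilograms(value: str, unit: str) -> Decimal:
    """Convert a weight given as a string to kilograms.

    Unknown units raise ValueError instead of being guessed, so that
    ambiguous data is rejected before it is published.
    """
    try:
        factor = KG_PER_UNIT[unit.strip().lower()]
    except KeyError:
        raise ValueError(f"unknown weight unit: {unit!r}") from None
    return Decimal(value) * factor
```

Rejecting unknown units outright, rather than passing them through, keeps the ambiguity on the publisher's side where it can be fixed, instead of surfacing as a failed transaction on the agent's side.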

In addition to precision, brands must invest in infrastructure that can handle the massive scale and extreme speed of machine-to-machine interactions. Unlike human shoppers who browse at a leisurely pace, AI agents can perform thousands of requests per second, searching for the best deals or checking for stock updates. To accommodate this, organizations must implement robust pagination for large data sets and ensure their systems support idempotent requests, which prevent accidental duplicate transactions if a network error occurs during a purchase. This level of scalability requires a move toward cloud-native architectures and highly optimized database management systems. By building a technical foundation that supports high-volume, automated traffic, companies can participate in the emerging “batch-buying” trends where agents aggregate demand and execute massive orders across multiple suppliers simultaneously, a process that would be impossible to manage through traditional human-centric web interfaces.
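
Both mechanisms mentioned above can be sketched in a few lines. This in-memory example assumes the client sends a unique idempotency key with each purchase and that large listings are read with a simple offset cursor; `OrderService`, `place_order`, and `paginate` are hypothetical names, and a real system would persist keys in durable storage.

```python
class OrderService:
    """Toy order service that deduplicates requests by idempotency key."""

    def __init__(self):
        self._orders_by_key = {}
        self._next_id = 1

    def place_order(self, idempotency_key, sku, quantity):
        # A retried request with the same key returns the original order
        # instead of creating a duplicate.
        if idempotency_key in self._orders_by_key:
            return self._orders_by_key[idempotency_key]
        order = {"order_id": self._next_id, "sku": sku, "quantity": quantity}
        self._next_id += 1
        self._orders_by_key[idempotency_key] = order
        return order


def paginate(items, cursor=0, limit=2):
    """Return one page of items plus the cursor for the next page.

    next_cursor is None when there are no further pages.
    """
    page = items[cursor:cursor + limit]
    end = cursor + limit
    next_cursor = end if end < len(items) else None
    return {"items": page, "next_cursor": next_cursor}
```

If a network error hides the response to a purchase, the agent can safely resend the request with the same key; the service answers with the existing order rather than charging twice.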

Trust and Organizational Evolution

Reimagining Credibility and Infrastructure

In a machine-to-machine ecosystem, the traditional concept of brand trust is being redefined as a function of infrastructure performance and operational transparency. While human customers might rely on social proof, reviews, or a long-standing history of a brand, machine customers evaluate credibility through live status endpoints, uptime statistics, and real-time performance benchmarks. Trust is no longer a nebulous feeling but a quantifiable metric that can be tracked in real-time. For example, an autonomous logistics agent might monitor the “health” of a shipping partner’s API to ensure that tracking updates are consistently delivered within a specific latency window. If the partner fails to meet these technical requirements, the trust is broken, and the agent may autonomously redirect future business to a more technically competent provider.
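
One way an agent might turn this kind of trust into a number is to check what share of recent responses arrived within an agreed latency window. The sketch below assumes the agent already holds a list of latency samples in milliseconds; the function name, the threshold, and the 95% default are illustrative assumptions, not an industry standard.

```python
def within_latency_window(samples_ms, threshold_ms, min_ok_ratio=0.95):
    """Return True if enough samples met the latency threshold.

    samples_ms:   observed response latencies in milliseconds
    threshold_ms: the maximum acceptable latency for one response
    min_ok_ratio: required share of samples at or under the threshold
    """
    if not samples_ms:
        # No evidence at all is treated as a failure, not a pass.
        return False
    ok = sum(1 for sample in samples_ms if sample <= threshold_ms)
    return ok / len(samples_ms) >= min_ok_ratio
```

A partner whose samples fall below the required ratio would fail the check, at which point the agent could start routing requests elsewhere.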

This shift toward infrastructure-based trust also forces organizations to be more transparent about their operational realities, including their supply chain ethics and environmental impact. Because machines can be programmed to prioritize specific criteria—such as a low carbon footprint or fair labor certifications—brands must expose this data through verifiable, machine-readable reports. If there is a gap between what a brand claims in its public marketing and what its actual performance data reveals, an algorithm will detect the discrepancy in an instant. This demand for transparency is leading to the adoption of technologies like distributed ledgers and real-time reporting dashboards that allow brands to “show their work” to automated auditors. As a result, maintaining a high-performance, transparent technical stack is becoming a form of brand equity that is just as valuable as a traditional reputation.

Breaking Internal Silos for Unified Design

Successfully navigating the transition to an agentic economy requires a radical reorganization of internal business structures to break down the silos that have traditionally separated technical and creative roles. To design effectively for non-human customers, Customer Experience (CX) leads must work in lockstep with data scientists, software engineers, and database architects. This convergence is necessary because the decisions made at the database level—such as how a product is categorized or how an API is structured—now have a direct and profound impact on the customer experience. Data governance can no longer be seen as a back-office compliance task; it is now a core capability that determines whether a brand can even enter the automated buying funnel.

When companies begin to treat their database architects as experience designers, they unlock the ability to create assets that are perfectly tuned for machine consumption. This organizational evolution often involves the creation of cross-functional teams dedicated to “Agent Experience,” where technical documentation is given the same level of attention and investment as high-end marketing collateral. By integrating these disciplines, brands can ensure that their digital identity is consistent and powerful across all interfaces, whether they are being viewed by a person or parsed by a piece of software. This collaborative approach also helps the organization respond more quickly to the rapidly changing requirements of the automated market, allowing them to iterate on their technical offerings with the same agility that they once applied to their seasonal advertising campaigns.

The Two-Speed Strategy for Future Growth

As we move deeper into this decade, organizations must master a “two-speed” strategy that allows them to satisfy two vastly different types of customers simultaneously without compromising the quality of either experience. This approach requires maintaining a high-touch, emotionally resonant interface for human users while concurrently providing a high-performance, logic-driven technical layer for autonomous agents. For instance, a luxury retail brand must continue to offer a visually stunning, immersive website that tells a story and builds an emotional connection with a person. At the same time, it must provide a lightning-fast, highly structured API that allows a personal shopping AI to check sizes, prices, and shipping options in an instant. The challenge lies in ensuring that these two paths are perfectly synchronized, as any inconsistency between the brand promise shown on the screen and the data provided in the API can lead to systemic failures and a loss of market share.

Managing this dual-track strategy involves a sophisticated approach to content and data management, where a single source of truth feeds both the human-facing and machine-facing channels. This ensures that when a price is updated or a new product is launched, the information is reflected accurately across all touchpoints in real-time. Brands that successfully navigate this complexity will find themselves with a significant competitive advantage, as they can capture the loyalty of human consumers while also becoming the “preferred provider” for the algorithms that manage an increasing share of global commerce. This two-speed model is not merely a temporary fix but the new standard for experience design in an automated world, where the most influential customers may no longer have a heartbeat, but they certainly have a strict set of requirements and the autonomy to act on them.
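
The single-source-of-truth idea can be illustrated with one catalog record that feeds both a human-readable string and a machine-readable payload. The catalog contents and the names `CATALOG`, `human_view`, and `machine_view` are invented for this sketch.

```python
# One shared record store; both channels read from it and nothing else.
CATALOG = {
    "SKU-1": {
        "name": "Leather Tote",
        "price_minor_units": 45000,  # price in cents
        "currency": "USD",
    },
}


def human_view(sku):
    """Render a display string for a storefront page."""
    item = CATALOG[sku]
    units, cents = divmod(item["price_minor_units"], 100)
    return f'{item["name"]}: {units}.{cents:02d} {item["currency"]}'


def machine_view(sku):
    """Return the structured payload an agent would receive."""
    return {"sku": sku, **CATALOG[sku]}
```

Because both views are derived from the same record at read time, a price update in `CATALOG` appears in the storefront text and the API payload together, which removes one common source of mismatch between the two channels.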

Over the previous decade, digital transformation focused largely on moving human interactions online; the shift toward the agentic economy demands a more fundamental change in how businesses operate. Organizations are moving beyond simple website optimization and prioritizing robust technical ecosystems that are legible to both people and machines. This transition requires significant investment in structured data, API stability, and cross-functional collaboration, effectively merging the worlds of marketing and engineering. By treating technical documentation as a primary customer-facing asset and infrastructure as a core component of brand equity, companies can secure their place in the automated procurement funnels that now dominate many sectors. Ultimately, the brands that thrive will be those that recognize that serving a non-human customer is not about replacing the human experience, but about extending their reach into a new, purely logical domain where verification and performance are the ultimate markers of success.