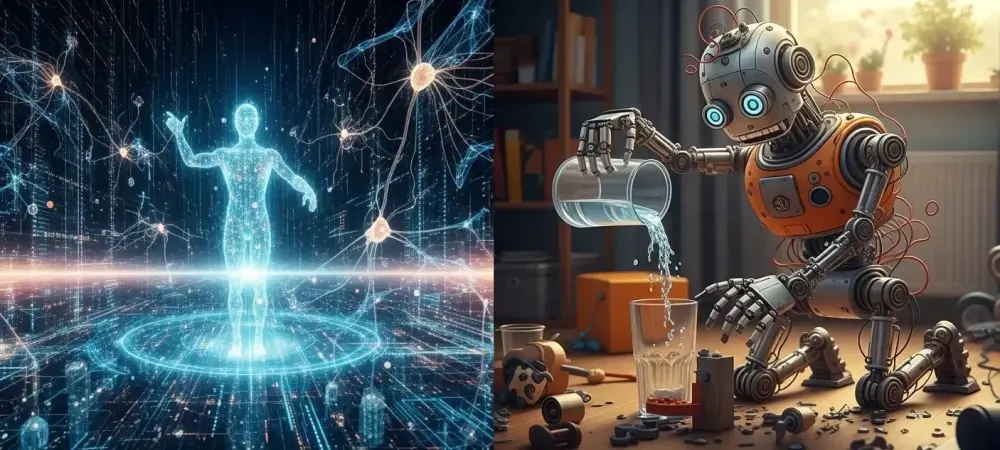

The rapid ascent of artificial intelligence into the realms of complex strategy and generative artistry has created a peculiar technological paradox where silicon minds outthink humans in abstract domains but stumble over the simple physics of a nursery. While a contemporary Large Language Model can synthesize vast quantities of legal precedent or compose a symphony in a specific historical style within seconds, that same intelligence often lacks the basic spatial awareness required to navigate a cluttered room without catastrophic failure. The burgeoning field of Physical AI highlights this chasm between raw computational power and practical, real-world utility, revealing that digital mastery does not inherently translate into physical competence. For a human, understanding that a glass will shatter upon impact with a hardwood floor is an intuition developed through early childhood exploration, yet for an AI, the spatial reasoning that even a small child uses to interact with their surroundings remains largely absent.

The Technical Gap in Understanding

The Fragility of Statistical Patterns

The primary reason for this profound physical illiteracy lies in what researchers often describe as the “jaggedness” of artificial intelligence, a phenomenon rooted in the fundamental way these models process information. Current systems do not learn through logic, sensory experience, or the internal building of a world model; instead, they operate almost exclusively through the lens of complex statistical correlation and pattern matching. Because these models are trained on massive datasets of text and images rather than the physical laws of cause and effect, they recognize which data points tend to appear together without ever understanding the underlying mechanics. This reliance on statistics represents a significant structural weakness, as it allows the model to be easily manipulated or confused by adversarial examples. In these scenarios, a specific, subtle pattern can trigger a nonsensical response because the AI lacks a logical foundation to verify its outputs against reality.

This disconnect becomes glaringly apparent when an AI successfully performs a high-level task, such as writing functional code, only to fail at a simple prediction regarding object permanence or gravity. When the technology succeeds, society is quick to credit it with a form of human-like intelligence, yet when it produces a bizarre physical error, the mistake is frequently dismissed as a mere hallucination. These failures are not random glitches but are instead symptomatic of a problem-solving methodology that is fundamentally alien to human reasoning. Because the AI does not perceive the weight, texture, or momentum of the world, its errors often appear counterintuitive and baffling to human observers who take these physical constants for granted. Bridging this gap requires the industry to move beyond the limitations of simple pattern recognition and toward the development of systems that respect the immutable laws of physics and causal relationships.

The Absence of Newtonian Intuition

A critical challenge in developing Physical AI is that modern models are largely confined to a digital vacuum where they process information without the benefit of experiencing the three-dimensional laws of nature. In the abstract world of digital data, there is no gravity, friction, or mass to contend with, allowing AI to excel in environments where the rules are strictly defined and purely symbolic. However, the transition to the physical world requires a deep understanding of Newtonian physics, a requirement that acts as the “table stakes” for any functional utility in robotics or automated engineering. Without an innate grasp of how objects interact in space, an AI remains a master of abstract symbols but a novice at any task requiring mechanical interaction. This lack of intuition prevents the technology from accurately simulating complex environments, which limits its ability to participate in the physical world in any meaningful way.

To overcome this deficiency, researchers are increasingly looking at how to embed physical constraints directly into the learning process of neural networks. For instance, rather than letting a model guess how a robotic arm should move based on video data, engineers are experimenting with hybrid systems that force the AI to adhere to mathematical equations governing motion and torque. This approach aims to create a “physical common sense” that would prevent the absurd errors seen in purely generative models. Nevertheless, the difficulty lies in the sheer complexity of real-world variables, where wind, lighting, and surface texture can all change in an instant. For an AI to truly understand the world, it must move past the stage of observing digital pixels and begin to represent the physical world as a collection of interacting forces. Only through this transition can AI evolve from a specialized calculator into a versatile agent capable of safe and effective physical labor.
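One way to picture such a hybrid system is a training loss that penalizes disagreement with an equation of motion as well as disagreement with observed data. The sketch below is illustrative only and is not drawn from any particular research system: it assumes a single rigid link modelled as a point mass at its tip, and the names `required_torque`, `hybrid_loss`, and the `weight` parameter are hypothetical.

```python
import math


def required_torque(theta, alpha, mass=1.0, length=0.5, g=9.81):
    """Torque needed at the pivot of a single link.

    Assumption: the link is a point mass at distance `length` from the
    pivot, and `theta` is measured from the horizontal (radians).
    """
    inertia = mass * length ** 2                      # point-mass moment of inertia
    gravity = mass * g * length * math.cos(theta)     # torque needed to hold against gravity
    return inertia * alpha + gravity


def hybrid_loss(predicted, observed, theta, alpha, weight=1.0):
    """Squared data error plus a weighted squared physics residual."""
    data_term = (predicted - observed) ** 2
    physics_term = (predicted - required_torque(theta, alpha)) ** 2
    return data_term + weight * physics_term


# A prediction that matches both the data and the physics costs nothing.
print(hybrid_loss(4.905, 4.905, theta=0.0, alpha=0.0))
# A prediction that matches the data but violates the physics is penalized.
print(hybrid_loss(10.0, 10.0, theta=0.0, alpha=0.0))
```

The second call shows the design intent: even when a guess agrees with a (possibly noisy) observation, the physics term still pulls the model back toward what the equation of motion allows.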

Bridging the Gap to Reality

Engineering Resilience in Unstructured Environments

In the competitive realm of robotics, developers are currently navigating a tension between using “first principles” (the simple, proven mathematical equations for motion) and the deployment of complex, end-to-end AI models. While simulation technologies have advanced significantly, allowing robots to practice tasks millions of times in digital environments before being deployed, these systems still struggle with the “long tail” of real-world conditions. This long tail consists of the rare, unpredictable edge cases that fall outside the common patterns found in training data, such as a sudden change in floor friction or an oddly shaped object. A robot might perform a repetitive task with near-perfect accuracy in a controlled laboratory setting, yet it often becomes remarkably unreliable when faced with the messy variables of a chaotic real-world environment.

Achieving reliability in these unstructured settings is less a matter of “brilliant thoughts” or massive parameter counts and more a challenge of rigorous engineering grit and execution at scale. The industry is currently shifting its focus toward reasoning models that can pause to evaluate the physical consequences of an action before executing it, rather than simply predicting the next most likely movement. This transition requires a massive investment in high-fidelity sensors and tactile feedback systems that allow the AI to “feel” the world in real time. By integrating these physical inputs with advanced reasoning, the goal is to create machines that can adapt to new settings without needing a specific dataset for every possible scenario. Success in this area will depend on the ability to translate digital logic into mechanical action with the same level of precision and predictability that we currently expect from traditional industrial machinery.
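The idea of pausing to evaluate consequences can be sketched as a small check that predicts an outcome from a measured physical quantity before committing to a motion. The example below is a simplified illustration under stated assumptions: an object pushed across a flat surface decelerates only through kinetic friction, the friction coefficient comes from a sensor estimate, and the names `sliding_distance`, `safe_to_push`, and `margin` are hypothetical.

```python
def sliding_distance(speed, friction, g=9.81):
    """Distance an object slides before friction stops it.

    Assumption: constant kinetic friction on a level surface, so the
    stopping distance is v^2 / (2 * mu * g).
    """
    return speed ** 2 / (2.0 * friction * g)


def safe_to_push(speed, distance_to_edge, friction, margin=0.05):
    """Approve the push only if the object stops short of the edge."""
    return sliding_distance(speed, friction) + margin <= distance_to_edge


# Same commanded push, two different surfaces.
print(safe_to_push(speed=1.0, distance_to_edge=0.3, friction=0.5))  # grippy surface
print(safe_to_push(speed=1.0, distance_to_edge=0.3, friction=0.1))  # slick surface
```

The same commanded speed is approved on the grippy surface and rejected on the slick one, which is the kind of friction change that a purely pattern-based policy would not anticipate unless it appeared in its training data.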

Evolutionary Steps Toward Physical Reasoning

As the focus of the technology sector shifts from purely generative digital tasks to the grueling work of physical integration, the nature of the labor market is poised for a significant transformation. The transition to a world where Physical AI is a standard component of infrastructure will likely change the roles of human workers, particularly in technical fields like computer science and mechanical engineering. Rather than just writing code for virtual environments, the next generation of professionals will need to focus on the wrangling and execution of physical systems at scale. This shift requires a workforce that can bridge the gap between high-level algorithmic design and the practical realities of hardware maintenance and spatial calibration. The objective is to manage this transition by ensuring that as AI becomes more predictable in its physical failures, the human workforce can adapt to oversee these increasingly sophisticated machines.

The path forward for the industry involves a move toward a more structured way of processing physical information, where the goal is to create systems that understand the world as naturally as the humans who designed them. Recent breakthroughs in multi-modal learning, where AI is trained simultaneously on text, video, and sensory data, suggest that the technology is beginning to form more cohesive world models. However, the true test of progress will not be found in a benchmark or a digital simulation, but in the ability of a robot to perform a task as simple as folding laundry or clearing a table without human intervention. These mundane activities are the true frontiers of intelligence because they require a synthesis of vision, touch, and physical reasoning that remains the ultimate challenge for contemporary engineering. By focusing on these tangible outcomes, the field of AI is finally moving beyond the screen and into the reality of everyday life.

Implementation Strategies for Future Systems

The integration of advanced reasoning into physical hardware was the primary focus of development throughout the recent cycle. It was determined that the most effective way to ensure safety in autonomous systems was to implement a layered architecture where a physics-based “governor” oversaw the more creative but less predictable outputs of the neural network. This method allowed machines to explore efficient ways to complete tasks while remaining strictly bounded by the known limits of their mechanical components. Engineers discovered that by prioritizing these physical boundaries, they could drastically reduce the frequency of catastrophic errors in factory settings and logistics hubs. This strategic shift away from purely statistical models toward a hybrid approach proved essential for building public trust and operational stability across various industries.
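A layered arrangement of this kind can be sketched as a thin, deterministic filter that sits between a learned policy and the motors. The code below is a minimal illustration rather than a description of any deployed system: it assumes a single joint with fixed angle and speed limits, and `JointLimits` and `govern` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JointLimits:
    min_angle: float   # radians
    max_angle: float   # radians
    max_speed: float   # radians per second


def govern(current_angle, proposed_angle, limits, dt):
    """Clamp a proposed joint target to the known mechanical limits.

    The proposal may come from any source, such as a neural network;
    the governor only enforces speed and range bounds.
    """
    max_step = limits.max_speed * dt
    step = proposed_angle - current_angle
    step = max(-max_step, min(max_step, step))        # limit speed
    target = current_angle + step
    return max(limits.min_angle, min(limits.max_angle, target))  # limit range


limits = JointLimits(min_angle=-1.0, max_angle=1.0, max_speed=2.0)
# The policy asks for an impossible jump; the governor allows one bounded step.
print(govern(current_angle=0.0, proposed_angle=5.0, limits=limits, dt=0.1))
```

Because the governor is a few lines of arithmetic rather than a learned component, its behavior can be verified exhaustively, while the policy above it remains free to propose any target within those bounds.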

Ultimately, the goal of creating a physical genius was addressed through the massive scaling of tactile data collection and the refinement of sim-to-real transfer protocols. Organizations that invested heavily in high-fidelity data from real-world interactions found that their models developed a much more robust understanding of material properties and spatial dynamics. These advancements allowed for the deployment of robots in more complex service roles, where they began to handle tasks that were previously thought to be the exclusive domain of human dexterity. By focusing on the grit of real-world engineering rather than just the elegance of digital algorithms, the industry successfully moved closer to a future where AI could navigate the physical world with the same ease as it navigates the digital one. This progress provided a clear roadmap for the continued evolution of intelligent systems in an increasingly automated society.