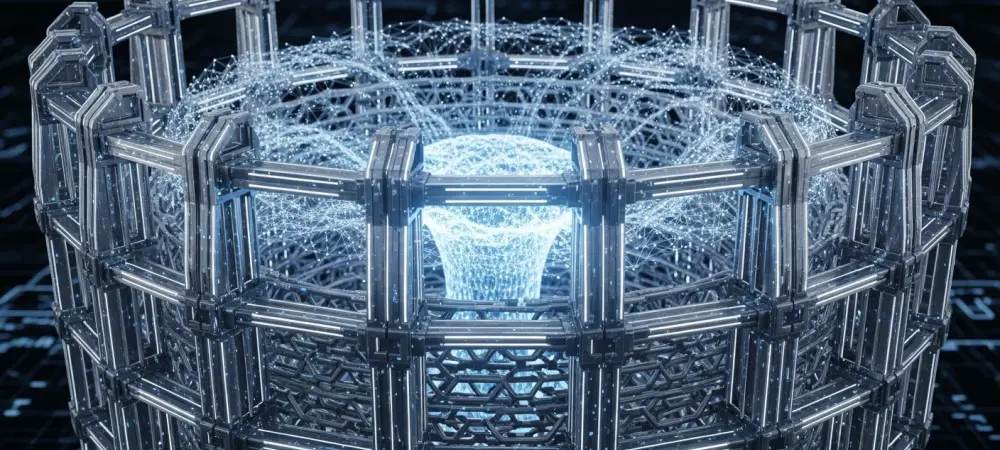

The era of typing prompts into a chat box and waiting for a clever response is rapidly fading into the background of technological history. While the initial wave of generative AI focused on human-to-machine conversation, the current landscape is dominated by an “Invisible Giant”: Agentic AI. These systems do not merely suggest text; they execute multi-step workflows, manage complex software ecosystems, and make operational decisions without a human ever touching a keyboard. This shift toward autonomy represents a monumental leap in productivity, but it also introduces a precarious level of risk that demands a new architecture for safety and reliability.

As these background-operating agents become the connective tissue of the modern enterprise, the stakes of their autonomy have never been higher. Unlike a chatbot that might hallucinate a fact in a poem, an agentic system tasked with inventory management or medical diagnostics could cause tangible, real-world damage if it deviates from its intended path. Consequently, the industry is making a rapid but methodical push to establish industry-specific guardrails. These frameworks are not just about filtering words; they define the boundaries of digital behavior so that the next generation of AI integration remains both powerful and predictable.

The Shift from Conversational to Agentic Workflows

Market Growth: The Transition to Autonomy

The enterprise sector is currently undergoing a massive pivot away from the human-in-the-loop “chatbot” model toward independent Agentic AI systems. Adoption statistics from the current year indicate that businesses are no longer satisfied with assistants that require constant hand-holding. Instead, capital is flowing toward background-operating workflows that handle mundane and complex tasks autonomously. This transition is driven by a measurable decline in preference for chat interfaces, as professionals realize that a conversation is often just another hurdle to getting actual work done.

Research insights suggest that the true value of AI lies in its ability to operate silently. When an AI can navigate an internal database, cross-reference it with market signals, and update a procurement order without being prompted, it transforms from a tool into a workforce. This move toward operational efficiency is the primary engine behind the current market expansion. However, as the human moves further away from the immediate feedback loop, the necessity for automated oversight and strict behavioral parameters becomes the top priority for developers and stakeholders alike.

Real-World Applications: From Operating Rooms to E-Commerce

In the medical field, agentic systems are already redefining surgical precision. Digital twins and autonomous agents provide data-driven support that goes far beyond traditional heuristics or “rules of thumb.” By analyzing large volumes of patient-specific data in real time, these systems can recommend implant placements or surgical maneuvers with a level of accuracy that accounts for anatomical variations human surgeons often miss. This is not just a digital assistant; it is a highly specialized partner that draws on a depth of data previously unavailable in the operating room.

In the realm of e-commerce, “Autopilot” systems are now managing massive product inventories and optimizing listings with zero manual intervention. These agents monitor global trends, adjust pricing, and rewrite product descriptions to maximize conversion rates across hundreds of thousands of items simultaneously. Similarly, in the broader enterprise landscape, invisible agents are monitoring corporate signals and executing complex business logic. These systems function as the quiet engine of the organization, making split-second decisions that keep supply chains moving and customer service pipelines flowing without the need for a single user-generated prompt.

Perspectives from the Frontier: Expert Insights on Reliability

The Black Box Challenge: Software Artifacts

Krishna Gade, a leader in AI observability, argues that the industry must stop treating AI as a mystical entity and start treating it as a first-class software artifact. The “Black Box” challenge arises when an agent makes a decision based on probabilistic logic that its human creators cannot easily trace. To mitigate this, rigorous monitoring and observability tools are being integrated directly into the agent’s architecture. By treating every autonomous action as a trackable piece of code, developers can ensure that when an agent deviates from its mission, the failure is caught and corrected before it cascades through the system.
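As a minimal illustration of treating each autonomous action as a trackable software artifact, the Python sketch below wraps an agent action in a tracing decorator. It is not Gade's method or any observability product's API; `traced`, `TRACE_LOG`, and `update_procurement_order` are hypothetical names. Each call is recorded with its arguments, outcome, and duration, and failures are re-raised so the workflow can stop instead of passing a bad result downstream.

```python
import functools
import time
from typing import Any, Callable

# Hypothetical in-memory trace store; a real deployment would export
# these records to an observability backend instead.
TRACE_LOG: list[dict[str, Any]] = []


def traced(action: Callable[..., Any]) -> Callable[..., Any]:
    """Record every call to an agent action as a structured trace entry."""

    @functools.wraps(action)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        entry: dict[str, Any] = {"action": action.__name__, "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            result = action(*args, **kwargs)
            entry["status"] = "ok"
            return result
        except Exception as exc:
            # Record the failure, then re-raise so the caller can halt the
            # workflow before the error cascades into later steps.
            entry["status"] = "error"
            entry["error"] = repr(exc)
            raise
        finally:
            entry["duration_s"] = time.perf_counter() - start
            TRACE_LOG.append(entry)

    return wrapper


@traced
def update_procurement_order(sku: str, quantity: int) -> str:
    """Hypothetical agent action, used only to demonstrate tracing."""
    if quantity <= 0:
        raise ValueError("quantity must be positive")
    return f"ordered {quantity} x {sku}"


update_procurement_order("SKU-123", 10)
try:
    update_procurement_order("SKU-123", 0)
except ValueError:
    pass  # the failure is already captured in TRACE_LOG
print([e["status"] for e in TRACE_LOG])  # ['ok', 'error']
```

The in-memory list only keeps the sketch self-contained; the design point is that no action runs without leaving a record that can be inspected later.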

The Autopilot Metaphor: The 95/5 Rule

Christian Umbach advocates for a specific philosophy known as the 95/5 rule to manage the relationship between humans and autonomous systems. In this framework, the AI handles 95% of the labor-intensive, repetitive, and data-heavy tasks, while humans retain the final 5% of control—the critical decision points and emergency overrides. This “Autopilot” metaphor suggests that while the machine flies the plane during the steady state, the human pilot is always present to manage the takeoff, landing, and any unforeseen turbulence. This balance ensures that efficiency does not come at the cost of ultimate accountability.
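One way to read the 95/5 rule in code is as a routing policy: routine actions run automatically, while actions marked as critical decision points are held for a human. The sketch below is an illustration under our own assumptions, not Umbach's implementation; the `Action.critical` flag, `dispatch`, and the `ask_human` callback are hypothetical, and deciding which actions count as critical is left to the deployment.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Action:
    name: str
    critical: bool  # hypothetical flag marking a human decision point


def dispatch(actions: list[Action],
             ask_human: Callable[[Action], bool]) -> dict[str, list[str]]:
    """Run routine actions automatically; route critical ones to a human."""
    outcome: dict[str, list[str]] = {"auto": [], "approved": [], "rejected": []}
    for action in actions:
        if not action.critical:
            outcome["auto"].append(action.name)      # the machine's share
        elif ask_human(action):
            outcome["approved"].append(action.name)  # human signs off
        else:
            outcome["rejected"].append(action.name)  # human override
    return outcome


queue = [
    Action("reprice_listing", critical=False),
    Action("restock_item", critical=False),
    Action("cancel_supplier_contract", critical=True),
]
# Stand-in reviewer that rejects every escalated action.
result = dispatch(queue, ask_human=lambda a: False)
print(result)
# {'auto': ['reprice_listing', 'restock_item'], 'approved': [], 'rejected': ['cancel_supplier_contract']}
```

Here the split is set by the `critical` flags rather than a fixed percentage; the 95/5 figure describes the intended balance, not a parameter.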

Regulatory Guardrails: Institutional Validation

Gilly Yildirim emphasizes that technical guardrails alone are insufficient; institutional oversight must play a foundational role. In high-stakes environments like healthcare, agencies such as the FDA play a crucial role in validating autonomous recommendations. Clearance from such bodies acts as a primary guardrail, confirming that an AI’s logic has been tested against rigorous safety standards. This external validation gives institutions the trust they need to deploy agentic systems in life-critical situations, because safety is demonstrated against a standardized regulatory framework rather than simply asserted by the developers.

The Death of the Prompt: Data-Driven Utility

Cyrus Roepers-Chamlou suggests that the most significant evolution in AI utility is the move away from text-based prompts. True agentic power comes from systems that “listen” to data signals rather than waiting for a user to type a command. When an AI can react to a drop in server performance or a shift in stock market volatility automatically, it provides a level of utility that a chat-based assistant never could. This shift marks the end of the prompt engineering era and the beginning of the era of proactive, signal-driven automation.
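To make signal-driven automation concrete, the sketch below reacts to a stream of server latency readings instead of a typed command. It assumes a simple rolling-mean threshold; `SignalTrigger`, the 200 ms threshold, and the three-sample window are hypothetical choices, and the breach handler only records the event where a real agent would start a remediation workflow.

```python
from collections import deque
from statistics import mean
from typing import Callable


class SignalTrigger:
    """Call a handler when the rolling mean of a metric crosses a threshold.

    No prompt is involved: the incoming data stream is the only input.
    """

    def __init__(self, threshold: float, window: int,
                 on_breach: Callable[[float], None]) -> None:
        self.threshold = threshold
        self.samples: deque[float] = deque(maxlen=window)
        self.on_breach = on_breach
        self.armed = True  # fire once per breach, then wait for recovery

    def observe(self, value: float) -> None:
        self.samples.append(value)
        # Wait for a full window so one noisy sample cannot trigger action.
        if len(self.samples) < self.samples.maxlen:
            return
        avg = mean(self.samples)
        if avg > self.threshold and self.armed:
            self.armed = False
            self.on_breach(avg)
        elif avg <= self.threshold:
            self.armed = True  # signal recovered; re-arm the trigger


# Hypothetical remediation: here it only records the breach.
breaches: list[float] = []
trigger = SignalTrigger(threshold=200.0, window=3, on_breach=breaches.append)
for latency_ms in [120.0, 130.0, 150.0, 260.0, 310.0, 330.0, 340.0]:
    trigger.observe(latency_ms)
print(breaches)  # [240.0]
```

The trigger fires once when the rolling mean first exceeds the threshold and stays quiet while latency remains high, which avoids flooding downstream systems with repeated actions.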

The Future Landscape: Navigating Probability and Risk

From Deterministic to Probabilistic Logic

The move from traditional software to AI agents represents a shift from deterministic logic to probabilistic reasoning. Traditional programs follow an “if this, then that” structure, but large language models operate on likelihoods. Managing this inherent uncertainty is the greatest technical challenge for the next wave of AI. Guardrails must be designed to contain the “hallucinations” of probabilistic models, ensuring that even when a model is guessing the next best step, it stays within a predefined corridor of safety. This requires a layer of logic that sits above the AI, acting as a filter for its autonomous outputs.
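The layer of logic that sits above the AI can be sketched as deterministic checks applied to whatever a model proposes, before anything executes. The example below assumes a pricing agent; the `Proposal` type, the action allowlist, and the 10% per-step corridor are hypothetical values chosen for illustration, not a standard.

```python
from dataclasses import dataclass

# Hypothetical policy values; a real deployment would load these from config.
ALLOWED_ACTIONS = {"set_price", "hold_price"}
MAX_PRICE_CHANGE = 0.10  # at most +/-10% per step


@dataclass(frozen=True)
class Proposal:
    """An action proposed by a probabilistic model, not yet executed."""
    action: str
    sku: str
    current_price: float
    new_price: float


def check(p: Proposal) -> tuple[bool, str]:
    """Deterministic corridor checks applied before any proposal runs."""
    if p.action not in ALLOWED_ACTIONS:
        return False, f"action '{p.action}' is outside the allowlist"
    if p.current_price <= 0 or p.new_price <= 0:
        return False, "prices must be positive"
    change = abs(p.new_price - p.current_price) / p.current_price
    if change > MAX_PRICE_CHANGE:
        return False, (f"price change of {change:.0%} exceeds "
                       f"the {MAX_PRICE_CHANGE:.0%} corridor")
    return True, "ok"


print(check(Proposal("set_price", "SKU-1", 20.0, 21.0)))
# (True, 'ok')
print(check(Proposal("set_price", "SKU-1", 20.0, 30.0)))
# (False, 'price change of 50% exceeds the 10% corridor')
print(check(Proposal("delete_listing", "SKU-1", 20.0, 20.0)))
# (False, "action 'delete_listing' is outside the allowlist")
```

However the model arrives at a proposal, only proposals that pass `check` reach execution; rejected ones can be logged or escalated to a human.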

The Visibility Paradox: Transparency vs. Efficiency

A significant risk in the rise of the “Invisible Giant” is the visibility paradox: the more efficient an agent becomes at working in the background, the harder it is to spot errors when they occur. “Invisible” errors can accumulate over time, leading to systemic failures that are difficult to diagnose. To counter this, the technical infrastructure of the future must prioritize transparency without sacrificing the speed of autonomy. This involves creating “human-readable” logs of AI decision-making processes, allowing for retrospective audits even if the agent operates without real-time human supervision.
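A minimal sketch of a human-readable decision log follows, assuming each agent step records what it observed, what it did, and why. `DecisionRecord`, `record`, and the example values are hypothetical. Each entry is rendered twice: as a plain sentence for an auditor and as JSON for tools that search or replay the trail.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One agent decision, captured for retrospective audit (hypothetical schema)."""
    agent: str
    trigger: str    # what the agent observed
    action: str     # what it decided to do
    rationale: str  # why, in plain language
    timestamp: str

    def to_human(self) -> str:
        return (f"[{self.timestamp}] {self.agent} saw '{self.trigger}', "
                f"did '{self.action}' because {self.rationale}.")


def record(agent: str, trigger: str, action: str, rationale: str) -> DecisionRecord:
    """Stamp a decision with the current UTC time."""
    ts = datetime.now(timezone.utc).isoformat(timespec="seconds")
    return DecisionRecord(agent, trigger, action, rationale, ts)


audit_trail = [
    record("inventory-agent", "stock of SKU-123 below 50 units",
           "reorder 200 units", "the reorder point is 50 units"),
]
for entry in audit_trail:
    print(entry.to_human())           # for a human auditor
    print(json.dumps(asdict(entry)))  # for tooling that replays the log
```

Recording the rationale at decision time, rather than reconstructing it afterwards, is what makes a later audit possible when no human was watching in real time.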

Conclusion: Balancing Autonomy with Accountability

The landscape of artificial intelligence is being transformed by the realization that visible, conversational interfaces were merely a stepping stone toward a more potent, silent form of labor. As agentic systems take over the heavy lifting in specialized sectors, the industry is shifting its focus from improving “talk” to refining “action.” It is becoming clear that the success of these autonomous entities depends not on their intelligence alone but on the strength of the technical and regulatory walls built around them. Organizations are discovering that true scalability requires a move away from manual prompting toward signal-based execution, provided that a robust layer of observability remains in place to manage the transition from deterministic code to probabilistic reasoning.

Moving forward, the primary objective for developers and policymakers must be the refinement of real-time intervention tools that can halt an agent the moment it drifts outside its operational bounds. Establishing global standards for agentic behavior will be essential to prevent a fragmented landscape in which safety protocols vary wildly between jurisdictions. This requires a collaborative effort to build “circuit breakers” into the very fabric of autonomous models, ensuring that as AI becomes more invisible, its accountability remains crystal clear. The ultimate mission has always been to create systems that can act on our behalf, but the enduring lesson is that even the most capable agents must remain tethered to human intent through a rigorous, multi-layered framework of oversight.
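The “circuit breaker” idea borrows from a familiar software resilience pattern: after repeated failures, stop calling a component until someone intervenes. The sketch below adapts it to an agent under our own assumptions; `AgentCircuitBreaker`, `max_violations`, and the step stream are hypothetical, and how a step is judged in or out of bounds is left abstract. Unlike many service circuit breakers, it never resets itself: only an explicit human reset re-enables the agent.

```python
class AgentCircuitBreaker:
    """Halt an agent once too many consecutive steps fall outside its bounds.

    There is no automatic half-open retry: once tripped, only an explicit
    human reset re-enables the agent.
    """

    def __init__(self, max_violations: int) -> None:
        self.max_violations = max_violations
        self.violations = 0
        self.open = False  # "open" means the agent is halted

    def allow(self) -> bool:
        return not self.open

    def report(self, within_bounds: bool) -> None:
        if within_bounds:
            self.violations = 0  # consecutive count resets on a good step
            return
        self.violations += 1
        if self.violations >= self.max_violations:
            self.open = True

    def human_reset(self) -> None:
        self.violations = 0
        self.open = False


breaker = AgentCircuitBreaker(max_violations=2)
executed: list[str] = []
# Hypothetical stream of agent steps: (name, within_bounds)
steps = [("step1", True), ("step2", False), ("step3", False), ("step4", True)]
for name, ok in steps:
    if not breaker.allow():
        print(f"halted before {name}; awaiting human review")
        break
    executed.append(name)
    breaker.report(ok)
print(executed)  # ['step1', 'step2', 'step3']
```

Setting `max_violations=1` halts the agent on its first out-of-bounds step, which matches the stricter reading of halting an agent the moment it drifts.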