The temperature reading on a graphics card often marks the invisible ceiling on its performance. Manufacturers set that boundary with standard hardware, and enthusiasts are determined to break through it by unconventional means. This conflict pits the reliable, factory-installed stock cooler against extreme, custom-built solutions, where practicality is sacrificed at the altar of raw thermal efficiency.

The Default and the Drastic: Understanding GPU Cooling Solutions

Every graphics card ships with a stock cooler, a solution born from compromise. Manufacturers must balance thermal performance with cost, size, and ease of installation to create a product that works for the vast majority of consumers in a wide array of PC cases. This default setup is designed to be adequate, keeping the GPU within safe operating temperatures under typical gaming loads without requiring any user intervention. It is the baseline: reliable, accessible, and broadly compatible.

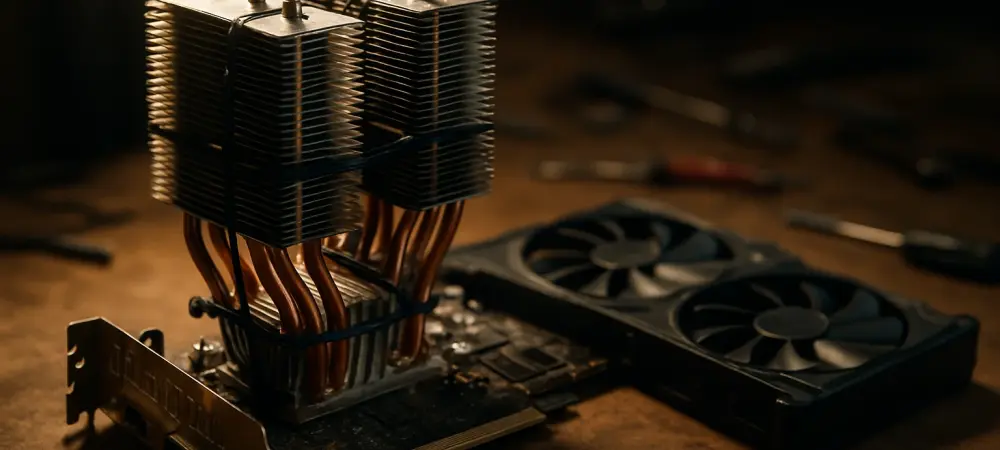

In stark contrast, extreme cooling methods abandon these compromises entirely. They exist to demonstrate the untapped thermal potential locked within a GPU. A compelling case study is the TrashBench experiment with an RTX 2060, where the standard cooler was replaced with oversized and unconventional hardware. This approach is not about creating a consumer product but about exploring the absolute limits of performance, revealing just how much thermal headroom is left on the table by standard, mass-market designs.

Performance Under Pressure: A Head-to-Head Comparison

Raw Thermal Efficiency and Temperature Reduction

When measured by pure cooling capability, the difference between stock and extreme solutions is staggering. The stock cooler on the Asus RTX 2060 allowed the card to reach a load temperature of 74°C, a perfectly acceptable figure for normal operation but one that leaves little room for aggressive overclocking or silent operation. This temperature serves as the benchmark against which all modifications are measured.

The experimental modifications showcase a performance gap that is nothing short of dramatic. By attaching a single, oversized Thermalright CPU tower cooler directly to the GPU die, the temperature plummeted by an incredible 27°C. Pushing the concept to its absolute limit, a second tower cooler was affixed to the back of the card, achieving a total temperature reduction of 31°C. Such a significant drop fundamentally changes the card’s thermal behavior, allowing for higher clock speeds at lower noise levels.
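To make the arithmetic behind these figures explicit, here is a minimal Python sketch. It assumes both reductions are measured against the 74°C stock load temperature quoted above; the variable and function names are illustrative only and do not come from the experiment.

```python
# Stock cooler load temperature reported for the Asus RTX 2060 (degrees Celsius).
STOCK_LOAD_C = 74

# Reported temperature drops for each modification (degrees Celsius).
# Assumption: both drops are relative to the same 74 °C stock baseline.
reductions_c = {
    "single tower cooler": 27,
    "dual tower coolers": 31,
}


def resulting_temp(baseline_c: int, drop_c: int) -> int:
    """Return the load temperature implied by a drop from the baseline."""
    return baseline_c - drop_c


# Implied load temperature for each configuration.
results = {
    name: resulting_temp(STOCK_LOAD_C, drop)
    for name, drop in reductions_c.items()
}

# Extra cooling contributed by the second tower on its own.
extra_from_second_tower = (
    reductions_c["dual tower coolers"] - reductions_c["single tower cooler"]
)

for name, temp in results.items():
    print(f"{name}: about {temp} °C under load")
print(f"second tower adds: {extra_from_second_tower} °C")
```

Under that assumption, the single tower implies a load temperature of about 47°C and the dual-tower setup about 43°C. The second cooler accounts for only 4°C of the total, so most of the gain comes from the first.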

Design, Size, and System Compatibility

Stock coolers are engineered for compatibility. Their compact, standardized designs ensure they fit within the established dimensions of most PC cases, from large towers to smaller form factors. They respect the boundaries of PCIe slots and do not interfere with other components, making system assembly a predictable and straightforward process. This universal fit is a core part of their design philosophy.

The extreme dual-tower solution, however, is a monument to impracticality. Its sheer size makes it incompatible with virtually any standard computer case, requiring an open-air test bench for operation. This oversized assembly obstructs adjacent slots and makes the GPU a fragile, unwieldy apparatus. This contrast highlights a crucial trade-off: achieving peak thermal performance often means sacrificing the real-world usability and hardware compatibility that stock coolers guarantee.

Cost, Effort, and Accessibility

From a user’s perspective, stock cooling is the path of least resistance. It is included with the GPU at no additional cost and requires zero effort or technical knowledge to use. The solution is pre-installed, covered by the manufacturer’s warranty, and ready to go right out of the box, making it the most accessible option by far.

Conversely, the extreme setup presents significant financial and technical barriers. It requires purchasing additional components that were never intended for this purpose, and adapting and assembling them without damaging the delicate GPU die demands considerable technical skill. Furthermore, this kind of modification typically voids the product warranty, placing the full risk of a costly mistake squarely on the user’s shoulders. It is an endeavor reserved for dedicated enthusiasts willing to invest time, money, and risk for the sake of performance.

Practicality vs. Possibility: Challenges and Limitations

Stock coolers, for all their convenience, have inherent limitations. In thermally constrained environments, such as poorly ventilated cases, they can struggle to dissipate heat effectively, potentially leading to thermal throttling where the GPU automatically reduces its performance to stay within a safe temperature range. For overclockers, the stock solution often becomes the primary bottleneck, preventing them from pushing the hardware to its full potential.

The extreme method, while thermally superior, is defined by its challenges. Its physical impracticality makes it unsuitable for daily use in a conventional PC. More importantly, the risk of permanently damaging the GPU is high: improper mounting pressure can crack the GPU die, rendering the entire card useless. Consequently, such a setup remains an enthusiast’s proof of concept, a demonstration of what is possible rather than what is practical for the average consumer.

The Verdict: Finding a Sensible Middle Ground

The analysis reveals a clear divide: stock coolers provide adequate, no-fuss performance but leave significant thermal headroom untapped, while extreme methods prove what is technically possible at the cost of practicality, safety, and warranty coverage. Neither approach is an ideal solution for the typical user seeking better performance.

Ultimately, the lessons from this experiment point toward a sensible middle ground. Rather than attempting a high-risk, impractical modification, users can achieve significant and practical temperature improvements through more accessible means. Improving case airflow, reapplying high-quality thermal paste, or even employing simple but effective mods, such as placing a fan over the GPU’s backplate for a reported 13°C drop, offer a much more balanced path to unlocking a GPU’s hidden potential. These methods combine the spirit of experimentation with the demands of daily usability.
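One rough way to gauge that middle ground is to ask what share of the extreme setup's gain a simple mod recovers. The sketch below assumes the 13°C backplate-fan figure and the 31°C dual-tower figure were measured against the same stock baseline, which the article does not state outright; `share_of_gain` is a hypothetical helper written for this illustration.

```python
def share_of_gain(mod_drop_c: float, reference_drop_c: float) -> float:
    """Return the fraction of a reference temperature drop achieved by a mod."""
    if reference_drop_c <= 0:
        raise ValueError("reference drop must be positive")
    return mod_drop_c / reference_drop_c


# Reported figures (degrees Celsius).
# Assumption: both drops are relative to the same stock baseline.
BACKPLATE_FAN_DROP_C = 13
DUAL_TOWER_DROP_C = 31

share = share_of_gain(BACKPLATE_FAN_DROP_C, DUAL_TOWER_DROP_C)
print(f"Backplate fan recovers about {share:.0%} of the dual-tower reduction")
```

If that assumption holds, the backplate fan delivers roughly two fifths of the dual-tower reduction while leaving the stock cooler in place.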