Semiconductors underpin much of modern technology, but designing them is intricate and time-consuming. To address this, semiconductor engineers at Nvidia have released a research paper showing how generative artificial intelligence (AI) can assist with semiconductor design. The research relies on Nvidia NeMo, a framework for building customized AI models, which the company presents as a source of competitive advantage in this field.

Challenges in semiconductor design

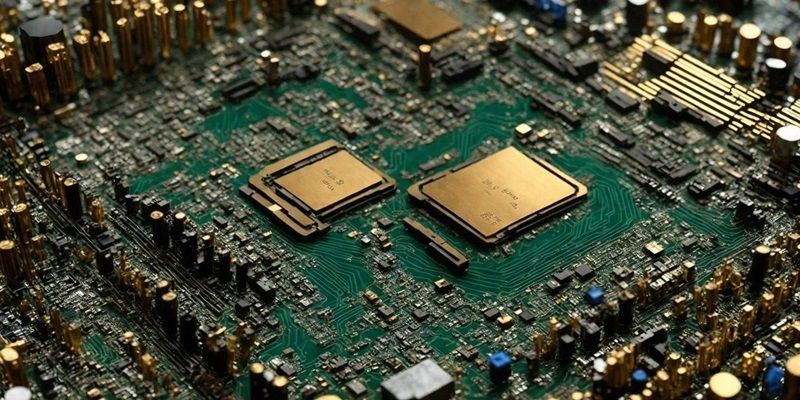

Semiconductor design is highly complex. Chips pack billions of transistors onto 3D circuit layouts that resemble the street map of a city, with connections thinner than a human hair. The density and sophistication of these designs pose a significant challenge for human designers, which is why generative AI has the potential to change how semiconductor chips are created.

Utilizing LLMs in semiconductor design

In the research, Nvidia chip designers describe an approach for applying large language models (LLMs) to the creation of semiconductor chips, with the aim of making the design process more efficient and accurate. The engineers developed a custom LLM named ChipNeMo, trained on the company’s internal data. ChipNeMo assists in generating and optimizing software and works alongside human designers.

Applications of ChipNeMo

One of the best-received use cases of ChipNeMo so far is an analysis tool that automates the time-consuming task of keeping bug descriptions up to date. Automating this previously laborious work reduces designers’ workload and frees them to focus on more critical aspects of the design. Accurate, current bug descriptions can also improve the overall quality of the design.

Gathering design data and creating a generative AI model

A significant part of Nvidia’s research paper covers the team’s work to gather design data and build a specialized generative AI model. By collecting a large amount of design data, the research team was able to train ChipNeMo on real-world examples, tuning the LLM for the tasks its designers actually face. This emphasis on data collection and model refinement shows why specialized generative AI models matter in semiconductor design.

Refining pretrained models with custom data

The research shows how a deeply technical team can refine a pretrained model with its own data to suit its specific requirements. The approach illustrates the adaptability of generative AI models and their potential to address specific challenges in semiconductor design. By starting from a pretrained model and continuing to train it on custom data, a team obtains a model tuned to its own domain, which can in turn help designers produce more optimized and efficient designs.
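The idea of continuing to train an existing model on domain data can be illustrated with a deliberately tiny sketch. This is not ChipNeMo or NeMo code: it assumes a toy bigram language model as a stand-in for an LLM, and the class `BigramLM`, the variable names, and all example sentences are hypothetical. The model is first trained on general text, then trained further on chip-design-flavored text, and its perplexity on an unseen domain sentence is compared before and after (lower means the model finds the sentence less surprising).

```python
import math
from collections import Counter


class BigramLM:
    """Toy add-one-smoothed bigram model (hypothetical stand-in for an LLM)."""

    def __init__(self):
        self.bigrams = Counter()   # counts of (previous word, word) pairs
        self.contexts = Counter()  # counts of each word used as a context
        self.vocab = set()

    @staticmethod
    def _tokenize(sentence):
        return ["<s>"] + sentence.lower().split() + ["</s>"]

    def train(self, sentences):
        """Accumulate counts; calling this again continues from the current state."""
        for sentence in sentences:
            tokens = self._tokenize(sentence)
            self.vocab.update(tokens)
            for prev, word in zip(tokens, tokens[1:]):
                self.bigrams[(prev, word)] += 1
                self.contexts[prev] += 1

    def perplexity(self, sentence):
        """Per-token perplexity of one sentence under add-one smoothing."""
        tokens = self._tokenize(sentence)
        v = len(self.vocab) + 1  # +1 reserves probability mass for unseen words
        log_prob = 0.0
        for prev, word in zip(tokens, tokens[1:]):
            p = (self.bigrams[(prev, word)] + 1) / (self.contexts[prev] + v)
            log_prob += math.log(p)
        return math.exp(-log_prob / (len(tokens) - 1))


# Made-up corpora, for illustration only.
general_corpus = [
    "the cat sat on the mat",
    "the weather is nice today",
    "we went to the market",
]
domain_corpus = [
    "the timing report shows a setup violation",
    "fix the setup violation in the clock path",
    "the clock path fails timing",
]
held_out = "the timing report shows a violation in the clock path"

model = BigramLM()
model.train(general_corpus)          # "pretraining" on general text
before = model.perplexity(held_out)

model.train(domain_corpus)           # continued training on domain text
after = model.perplexity(held_out)

print(f"perplexity before domain data: {before:.2f}")
print(f"perplexity after domain data:  {after:.2f}")
```

The second `train` call reuses everything learned from the general text and only adds domain knowledge on top. Real LLM adaptation updates neural network weights rather than counts, but the workflow has the same shape: start from an existing model, keep training on your own data, and measure the result on held-out domain examples.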

Insights and future possibilities

The semiconductor industry is only beginning to explore what generative AI can do. Nvidia’s research offers insight into how the technology could change semiconductor design. By automating time-consuming tasks and improving accuracy and efficiency, generative AI models like ChipNeMo could give companies a competitive edge.

NeMo framework for building custom LLMs

Enterprises interested in building their own custom LLMs can use the NeMo framework, developed by Nvidia. The framework is available on GitHub and in the Nvidia NGC catalog, and provides the tools and resources needed to develop and train customized generative AI models. With it, companies can tailor LLMs to their specific design needs, including semiconductor design.

Nvidia’s research highlights the potential of generative AI in semiconductor design. With ChipNeMo, a custom LLM built using Nvidia NeMo, the research shows how AI can streamline and improve the design process. By automating tasks, optimizing software, and building on pretrained models, designers can improve both the efficiency and the accuracy of their work. As the semiconductor industry continues to explore generative AI, the research offers useful insights and a starting point for future work in this field.