Containerization has become a core technology in modern software development and deployment pipelines. It simplifies application deployment and keeps environments consistent from a developer’s laptop to production. It also uses hardware efficiently, because containers share the host’s kernel instead of each running a full operating system. Docker, released in 2013, did not invent containers: earlier technologies such as FreeBSD jails, Solaris Zones, and LXC already existed. Docker made containers easy to build, share, and run, and it became the most widely adopted containerization tool. Running large numbers of containers at scale while keeping services highly available required a more sophisticated solution, and that need led to the rise of Kubernetes. Together, Docker and Kubernetes have changed the way applications are deployed and managed.

The need for advanced container management

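As software applications grew more complex, often split into many cooperating services, the number of containers to manage grew with them. Docker’s simple yet powerful approach to containerization solved many problems. However, its core workflow of building an image and running it on a single host does not by itself decide which machine in a cluster each container should run on. It also does not replace containers when a machine fails or keep a service at a target number of copies. At the same time, high availability and resilience became critical requirements for containerized systems. This need for more advanced container management led to the emergence of Kubernetes, an open-source container orchestration system originally developed at Google.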

Docker and Kubernetes

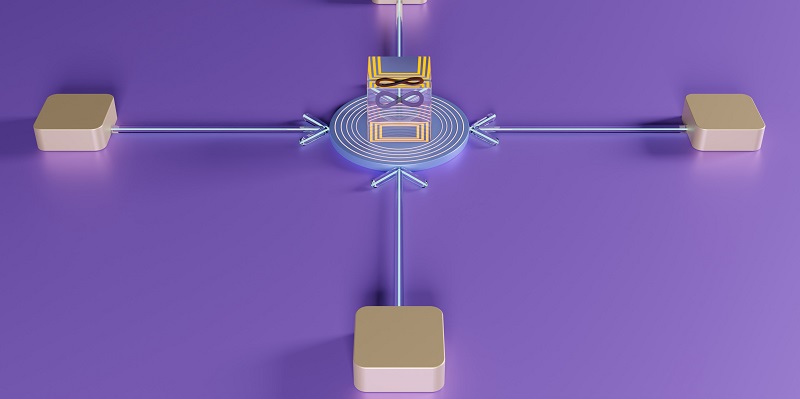

Docker and Kubernetes complement each other, and together they cover both containerization and orchestration. Docker lets developers build and package their applications into Docker images, which bundle the code with all the dependencies and configuration needed to run it. Kubernetes then deploys and manages containers created from those images, taking care of scaling, load balancing, and high availability. Docker images are compatible with the Open Container Initiative (OCI) standards, so Kubernetes can run them through any compatible container runtime, such as containerd or CRI-O, without needing Docker itself on the cluster nodes.
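To make the hand-off concrete, here is a minimal sketch of a Kubernetes Deployment that runs an image built with Docker. It builds the manifest as a Python dictionary and prints it as JSON, a format that `kubectl apply -f` accepts alongside YAML. The application name `myapp`, the image reference `registry.example.com/myapp:1.0`, the replica count, the port, and the helper `deployment_manifest` are all hypothetical and were chosen only for illustration.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int = 3, port: int = 8080) -> dict:
    """Return a minimal apps/v1 Deployment that runs `image` as `replicas` pods.

    `name`, `image`, `replicas`, and `port` are illustrative placeholders.
    """
    labels = {"app": name}  # the selector must match the pod template's labels
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,  # how many identical pods Kubernetes keeps running
            "selector": {"matchLabels": dict(labels)},
            "template": {
                "metadata": {"labels": dict(labels)},
                "spec": {
                    "containers": [
                        {
                            "name": name,
                            "image": image,  # the image built and pushed with Docker
                            "ports": [{"containerPort": port}],
                        }
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical image reference; substitute your own registry/repository:tag.
    manifest = deployment_manifest("myapp", "registry.example.com/myapp:1.0")
    # Save the output as myapp.json and apply it with: kubectl apply -f myapp.json
    print(json.dumps(manifest, indent=2))
```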

Building Docker images

Developers use Docker to build and package their applications into Docker images. A Dockerfile is a plain-text file of build instructions. In it, developers declare the base image, the application’s dependencies, its configuration, and the command that starts it. A single command, `docker build`, turns the Dockerfile into an image. Once built, the image carries the same runtime environment to a developer’s laptop, a CI server, or a production cluster. This keeps deployments consistent and makes applications easier to reproduce and test.
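A minimal Dockerfile for a hypothetical Python web application might look like the text produced below. The instructions are kept inside a small Python helper so they can be checked programmatically. The base image tag `python:3.12-slim`, the files `requirements.txt` and `app.py`, the image tag `myapp:1.0`, and the helper `render_dockerfile` are assumptions for illustration, not requirements of Docker.

```python
# Hypothetical Dockerfile for a small Python web app. Dockerfile comments must
# start a line, so the explanations live in Python comments instead.
DOCKERFILE_LINES = [
    "FROM python:3.12-slim",        # base image that supplies the Python runtime
    "WORKDIR /app",                 # working directory inside the image
    "COPY requirements.txt .",      # copy the dependency list on its own first
    "RUN pip install --no-cache-dir -r requirements.txt",  # install dependencies
    "COPY . .",                     # then copy the application source
    'CMD ["python", "app.py"]',     # command the container runs at start-up
]


def render_dockerfile(lines: list[str]) -> str:
    """Join the instructions into Dockerfile text, one instruction per line."""
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Save this output as `Dockerfile`, then build and tag the image with:
    #   docker build -t myapp:1.0 .
    print(render_dockerfile(DOCKERFILE_LINES), end="")
```

Copying `requirements.txt` and installing dependencies before copying the rest of the source is a common design choice. Docker caches each build step, so editing only the application code does not force the dependencies to be reinstalled on the next build.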

Scaling and Load Balancing with Kubernetes

One of Kubernetes’ most significant benefits is its ability to scale an application automatically. Kubernetes schedules containers in units called pods. A pod groups one or more containers that share a network address and storage volumes, and an application is scaled by running more or fewer replicas of its pod. When a HorizontalPodAutoscaler is configured, Kubernetes monitors a metric such as CPU utilization and adjusts the number of replicas to keep that metric near a target value. Load balancing is equally important. A Kubernetes Service gives a set of pod replicas one stable address and distributes incoming traffic across them, so that no single replica becomes overloaded.
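The HorizontalPodAutoscaler documents its scaling rule as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`. The sketch below applies that rule together with a tolerance band (0.1, the documented default) and min/max bounds. It also includes a plain round-robin picker to illustrate spreading requests across replicas. This is a simplified model. The real autoscaler also handles missing metrics, pods that are not yet ready, and stabilization windows. In its default iptables mode, kube-proxy picks a backend at random rather than strictly in turn. The function names and pod addresses are hypothetical.

```python
import math
from itertools import cycle
from typing import Iterator


def desired_replicas(current: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Apply the HPA rule ceil(current * current_metric / target_metric), clamped to bounds."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        # Close enough to the target: keep the current replica count.
        return current
    desired = math.ceil(current * ratio)
    return max(min_replicas, min(max_replicas, desired))


def round_robin(endpoints: list[str]) -> Iterator[str]:
    """Hand out endpoints in turn so each replica gets an equal share of requests."""
    return cycle(endpoints)


if __name__ == "__main__":
    print(desired_replicas(4, current_metric=90, target_metric=60))  # → 6
    print(desired_replicas(3, current_metric=63, target_metric=60))  # → 3
    # Hypothetical pod addresses behind a Service.
    picker = round_robin(["10.0.0.11:8080", "10.0.0.12:8080", "10.0.0.13:8080"])
    print([next(picker) for _ in range(4)])
```

For example, 4 replicas averaging 90% CPU against a 60% target give ceil(4 * 1.5) = 6 replicas. Three replicas at 63% stay at 3, because the ratio of 1.05 falls within the tolerance.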

Efficient Application Updates with Kubernetes

Kubernetes supports rolling updates and rollbacks, which make application updates efficient and reliable. During a rolling update, Kubernetes gradually replaces pods running the old version with pods running the new one. Two Deployment settings, maxSurge and maxUnavailable, limit how many extra pods may be created and how many may be missing at any moment. As a result, the application keeps serving traffic throughout the update, provided its readiness probes accurately report when new pods can accept requests. If the new version misbehaves, a rollback (for example, `kubectl rollout undo`) reverts the application to its previous revision in the same gradual way. Together, these features let organizations release updates frequently with minimal disruption, knowing that a bad deployment can be reverted quickly.
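The following toy simulation shows how maxSurge and maxUnavailable shape a rolling update, and how a rollback reuses the same process. It rests on simplifying assumptions. Each pod is represented only by its version string, and new pods are treated as ready the instant they start. The class `ToyDeployment` and its methods are hypothetical and are not part of any Kubernetes client library.

```python
from dataclasses import dataclass, field


@dataclass
class ToyDeployment:
    """Hypothetical toy model of a Deployment: each pod is just its version
    string, and new pods are assumed to become ready immediately."""
    replicas: int
    version: str
    max_surge: int = 1        # extra pods allowed above `replicas` during an update
    max_unavailable: int = 0  # pods allowed to be missing below `replicas`
    pods: list[str] = field(init=False, default_factory=list)
    history: list[str] = field(init=False, default_factory=list)

    def __post_init__(self) -> None:
        if self.max_surge == 0 and self.max_unavailable == 0:
            raise ValueError("max_surge and max_unavailable cannot both be 0")
        self.pods = [self.version] * self.replicas
        self.history = [self.version]

    def rolling_update(self, new_version: str) -> list[list[str]]:
        """Swap pods over to `new_version` a few at a time; return each intermediate state."""
        min_available = self.replicas - self.max_unavailable
        max_total = self.replicas + self.max_surge
        states = []
        while self.pods != [new_version] * self.replicas:
            # Scale down: drop old pods only while enough pods remain available.
            old_idx = [i for i, p in enumerate(self.pods) if p != new_version]
            n_remove = min(len(old_idx), max(0, len(self.pods) - min_available))
            for i in reversed(old_idx[:n_remove]):
                del self.pods[i]
            # Scale up: start new pods without exceeding the surge budget.
            missing = self.replicas - self.pods.count(new_version)
            self.pods += [new_version] * min(missing, max_total - len(self.pods))
            states.append(list(self.pods))
        self.version = new_version
        self.history.append(new_version)
        return states

    def rollback(self) -> list[list[str]]:
        """Roll back to the previous version, loosely mirroring `kubectl rollout undo`."""
        if len(self.history) < 2:
            raise RuntimeError("no previous version to roll back to")
        return self.rolling_update(self.history[-2])


if __name__ == "__main__":
    d = ToyDeployment(replicas=3, version="v1", max_surge=1, max_unavailable=0)
    for state in d.rolling_update("v2"):
        print(state)
    print("after rollback:", d.rollback()[-1])
```

With three replicas, a maxSurge of 1, and a maxUnavailable of 0, the update passes through states of four pods. In each step, one new pod is added before an old one is removed, so the count never drops below three running pods. Calling `rollback()` afterwards walks the deployment back to three `v1` pods.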

Profound impact on application deployment practices

The adoption of Docker and Kubernetes has had a profound impact on modern application deployment practices. These technologies have made deployments simpler, more consistent, more scalable, and more highly available. By adopting them, organizations can streamline their deployment processes, shorten time-to-market, and run their applications more reliably.

Portability of Containerized Applications

One of the key advantages of containerization is portability. A container image packages everything an application needs to run, including the runtime, libraries, and other dependencies, so the application no longer depends on what happens to be installed on the host. As a result, the same image can be deployed reliably on different machines or cloud platforms. Developers can build an application once and run it in many environments with consistent behavior. Two limits apply. First, containers share the host’s kernel, so a Linux image needs a Linux host (or a Linux virtual machine). Second, an image is built for a particular CPU architecture, such as amd64 or arm64, unless it is published as a multi-architecture image.

DevOps Enablement with Docker and Kubernetes

Docker and Kubernetes play a crucial role in enabling DevOps practices. DevOps is a collaborative approach that brings development and operations teams together to automate repetitive tasks and accelerate the software delivery lifecycle. Docker and Kubernetes give both teams a shared unit of work. Developers package their applications into container images, and the operations team deploys, scales, and manages those images with Kubernetes. Because the same image moves unchanged from testing to production, the steps in between can be automated, which supports continuous integration and continuous delivery (CI/CD).

Docker and Kubernetes have transformed application deployment practices and now sit at the center of many modern software development and deployment pipelines. Docker simplifies packaging applications into portable containers. Kubernetes provides container orchestration that delivers high availability, scalability, and efficient updates. The portability and DevOps support these technologies provide also help organizations improve their deployment processes, collaborate more closely, automate routine tasks, and accelerate software delivery. As containerization continues to evolve, its importance to software development and deployment is likely to keep growing.