Solid-state drives, or SSDs, have become increasingly popular in recent years because they are faster, more durable, and more reliable than traditional mechanical hard drives, or HDDs. SSDs use flash memory to store data and have no moving parts, which makes them less susceptible to physical damage. Even so, understanding the factors that affect SSD reliability is crucial to making an informed decision when choosing drives for your computer or enterprise storage needs. In this article, we explore SSD technology and discuss the critical factors that affect its reliability.

Explanation of SSD technology

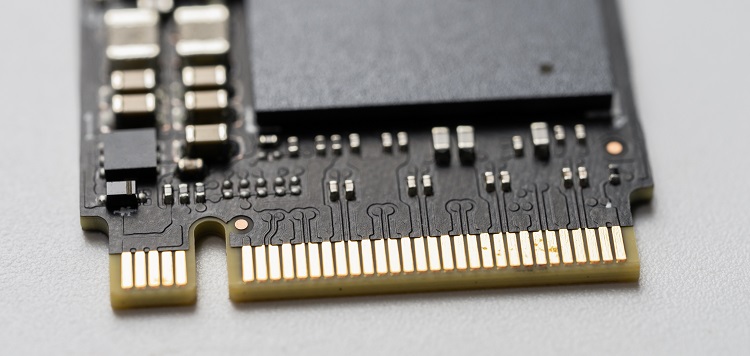

SSDs store data electronically using flash memory. Data is retained in NAND flash memory cells that are arranged in an array. These cells store charges on a floating gate to represent binary data that can be read or written using electrical pulses. NAND flash cells are commonly used in SSDs due to their high density, low cost, and fast write and read speeds. A controller chip manages the operations of the flash cells and acts as an interface between the computer’s operating system and the SSD.

Non-volatile memory in SSDs

SSDs use non-volatile memory, which means that stored data is retained even when the drive has no power. In addition to being much faster than mechanical hard drives, they therefore keep their contents across shutdowns and power outages.

SSDs have a DWPD (drive writes per day) rating, which states how many times the drive's full capacity can be written each day over the warranty period. It expresses how much write traffic the drive is designed to sustain without premature failure due to excessive wear on the flash cells.

Three main factors determine SSD reliability: the age of the SSD, the total terabytes written over time (TBW), and the drive writes per day (DWPD). Age is a critical factor in both performance and reliability: as an SSD ages, its flash cells may degrade, leading to data loss or system crashes. Total terabytes written, or TBW, is the total amount of data that can be written to the SSD over its lifetime. DWPD expresses the same endurance budget as a daily rate: how many times the drive's full capacity can be written each day within the warranty period.
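TBW and DWPD describe the same endurance budget in different units, so one can be converted into the other. The sketch below uses the commonly quoted relationship TBW = DWPD × capacity × 365 × warranty years. The function names and the example figures are illustrative assumptions, not values taken from any vendor datasheet.

```python
DAYS_PER_YEAR = 365


def dwpd_to_tbw(dwpd: float, capacity_tb: float, warranty_years: float) -> float:
    """Convert a DWPD rating into total terabytes written over the warranty."""
    return dwpd * capacity_tb * DAYS_PER_YEAR * warranty_years


def tbw_to_dwpd(tbw: float, capacity_tb: float, warranty_years: float) -> float:
    """Convert a TBW rating into the equivalent drive writes per day."""
    return tbw / (capacity_tb * DAYS_PER_YEAR * warranty_years)


if __name__ == "__main__":
    # Hypothetical 1 TB drive rated at 1 DWPD with a 5-year warranty.
    print(dwpd_to_tbw(1.0, 1.0, 5))
    # Hypothetical 1 TB drive rated at 600 TBW with a 5-year warranty.
    print(round(tbw_to_dwpd(600, 1.0, 5), 2))
```

Expressing both ratings in the same unit makes it easier to compare drives whose datasheets quote only one of them.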

In the IT industry, the lifespan of an SSD is usually expressed as its TBW (terabytes written) rating. A rating of around 256 TBW is a commonly cited figure, although actual ratings vary with capacity and drive class. For write-heavy workloads, it is therefore important to select an SSD with a higher TBW rating to ensure its longevity.
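To see what a TBW rating means in practice, it can be divided by the expected write volume. The following is a rough sketch that assumes a constant daily write load and decimal units (1 TB = 1000 GB); the function name and the 50 GB/day workload are hypothetical.

```python
def estimated_years(tbw_rating_tb: float, daily_writes_gb: float) -> float:
    """Estimate years until the TBW rating is used up at a steady write rate."""
    if daily_writes_gb <= 0:
        raise ValueError("daily_writes_gb must be positive")
    tb_per_year = daily_writes_gb / 1000 * 365  # GB/day -> TB/year
    return tbw_rating_tb / tb_per_year


if __name__ == "__main__":
    # A 256 TBW drive under an assumed 50 GB/day workload.
    print(f"{estimated_years(256, 50):.1f} years")
```

The result is only an estimate of write endurance; it says nothing about failures unrelated to wear.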

Endurance is commonly expressed in program/erase (P/E) cycles: the number of times a flash cell can be programmed and erased before it becomes unreliable. A higher P/E cycle rating indicates a longer lifespan for an SSD.
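A P/E cycle rating can be turned into a rough TBW figure. The sketch below uses a common back-of-the-envelope approximation, TBW ≈ capacity × P/E cycles ÷ write amplification. Write amplification (the ratio of data physically written to flash to data written by the host) is not discussed in this article and is introduced here as an assumption, as are the function name and the example numbers.

```python
def estimated_tbw(capacity_tb: float, pe_cycles: int,
                  write_amplification: float = 1.0) -> float:
    """Approximate host terabytes writable before the flash is worn out.

    write_amplification is an assumed workload-dependent factor; it is not
    a value published alongside the P/E rating.
    """
    if write_amplification <= 0:
        raise ValueError("write_amplification must be positive")
    return capacity_tb * pe_cycles / write_amplification


if __name__ == "__main__":
    # Hypothetical 1 TB drive, 3000 P/E cycles, write amplification of 2.
    print(estimated_tbw(1.0, 3000, 2.0))
```

Vendor TBW ratings are usually more conservative than this idealized figure, so it is best read as an upper bound.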

Inadequacy of MTBF in measuring SSD reliability

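The Mean Time Between Failures (MTBF) is widely used to describe the reliability of HDDs. However, it is not very meaningful for SSDs. SSDs fail in a different way than HDDs: their flash cells wear out according to how much data is written rather than how long the drive has been running, which makes MTBF a poor indicator of SSD lifespan.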

Comparison of errors in SSDs and HDDs

Even though SSDs are durable, they may show more errors than HDDs in less time. This can be attributed to the fact that NAND flash cannot be overwritten in place: a block must be erased before it can be written again. An interruption during a write, such as a power outage, can therefore corrupt data in a way that is harder to recover from than on an HDD.

Wear leveling distributes program/erase cycles evenly across the flash blocks of an SSD. It is a critical mechanism that prevents some blocks from being used far more heavily than others, and so avoids the early failure of the drive.
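The idea behind wear leveling can be illustrated with a toy model in which every write goes to the block that has been erased the fewest times. This is a simplified sketch and not how any particular controller is implemented; the class and function names are hypothetical, and real firmware also has to track logical-to-physical mappings and relocate rarely changed data.

```python
class WearLeveler:
    """Toy wear leveler: always writes to the least-erased block."""

    def __init__(self, num_blocks: int) -> None:
        self.erase_counts = [0] * num_blocks

    def pick_block(self) -> int:
        # Index of the block with the lowest erase count (ties: lowest index).
        return min(range(len(self.erase_counts)),
                   key=self.erase_counts.__getitem__)

    def write(self) -> int:
        block = self.pick_block()
        self.erase_counts[block] += 1  # model each write as one erase cycle
        return block


def simulate(num_blocks: int, num_writes: int) -> list[int]:
    """Run num_writes writes and return the per-block erase counts."""
    leveler = WearLeveler(num_blocks)
    for _ in range(num_writes):
        leveler.write()
    return leveler.erase_counts


if __name__ == "__main__":
    print(simulate(4, 10))  # [3, 3, 2, 2]
```

In this model the erase counts of any two blocks never differ by more than one, which is the even distribution that wear leveling aims for.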

In general, an asset with a higher MTBF value is considered more reliable. It is worth noting, however, that this parameter is suited to describing the reliability of HDDs rather than SSDs.
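To show what an MTBF figure expresses, it can be converted into an annualized failure rate (AFR). The sketch below assumes a constant failure rate (an exponential model) and 8760 powered-on hours per year. Both are modeling assumptions, and the MTBF value in the example is hypothetical.

```python
import math

HOURS_PER_YEAR = 8760  # assumes the drive is powered on all year


def annualized_failure_rate(mtbf_hours: float) -> float:
    """Probability that a drive fails within one year, given its MTBF.

    Assumes a constant failure rate, which ignores wear-out effects.
    """
    if mtbf_hours <= 0:
        raise ValueError("mtbf_hours must be positive")
    return 1.0 - math.exp(-HOURS_PER_YEAR / mtbf_hours)


if __name__ == "__main__":
    # Hypothetical MTBF of 1.5 million hours.
    print(f"{annualized_failure_rate(1_500_000):.2%}")
```

Because the model assumes failures are equally likely at any point in the drive's life, it cannot capture wear that accumulates with the amount of data written.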

In conclusion, SSDs are becoming increasingly popular for computer and enterprise storage needs because they are faster, more durable, and more reliable than HDDs. It is crucial to understand the factors that affect SSD reliability, such as age, TBW, and DWPD, when choosing an SSD. The MTBF metric is not a reliable measure of SSD reliability. Wear leveling is a critical mechanism that distributes program/erase cycles evenly across all of the SSD's flash blocks. Considering these factors will help you make an informed decision when selecting an SSD for your data storage needs.