Modern data centers are grappling with a thermal paradox: the rapid expansion of artificial intelligence demands more power than traditional infrastructure can effectively cool. As rack densities climb, the standard method of pushing massive volumes of chilled air through a room is proving both physically insufficient and economically draining. Operators are searching for a middle ground that provides the efficiency of liquid cooling without the high costs of completely submerging servers or redesigning every internal component. This analysis evaluates whether Rear-Door Heat Exchanger (RDHx) technology is the most efficient and pragmatic solution for today’s infrastructure. The following sections cover the mechanics, configurations, and strategic advantages of these systems, including how RDHx fits into the broader cooling landscape, its specific operational benefits, and the limitations that might influence a long-term deployment strategy.

Key Questions and Industry Insights

What Exactly Is a Rear-Door Heat Exchanger and How Does It Function?

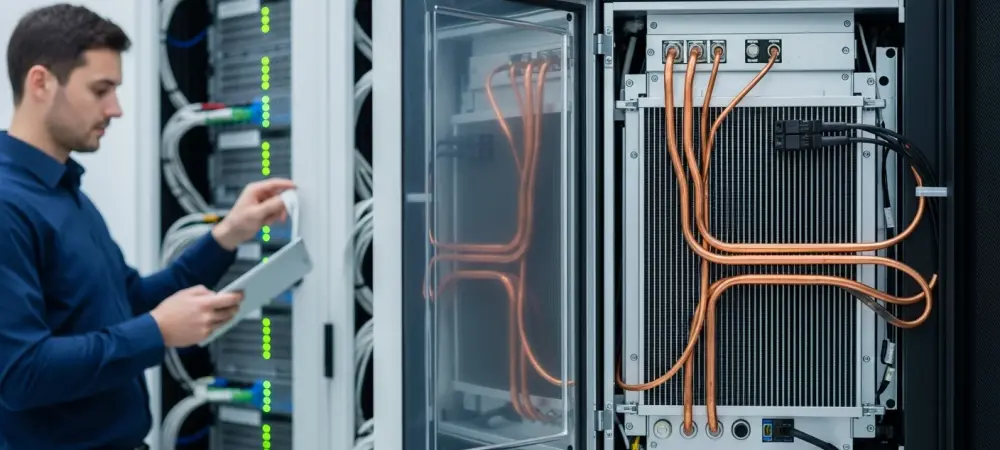

A Rear-Door Heat Exchanger is a specialized thermal management unit that replaces the standard rear door of a server rack to capture heat at the source. It functions by circulating a cooling medium, typically water or a glycol mixture, through a series of coils located directly in the path of the server exhaust. As hot air exits the servers, it passes over these finned, liquid-filled coils, which absorb the thermal energy before it ever enters the wider room. This process removes most of the heat from the exhaust, allowing the air to return to the data center floor at or near the ambient room temperature.
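The heat capture described above follows a basic energy balance: the heat absorbed by the liquid equals mass flow times specific heat times temperature rise (Q = m_dot * c_p * dT). The sketch below is illustrative only. The function name, the 30 kW rack load, and the 10 K water temperature rise are assumptions rather than vendor figures, and the fluid constants are approximate values for plain water (a glycol mixture would need different ones).

```python
# Approximate properties of plain water near room temperature (assumed values).
WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K)
WATER_DENSITY = 997.0         # kg/m^3


def water_flow_l_per_min(heat_load_w: float, water_delta_t_k: float) -> float:
    """Estimate the water flow needed to absorb a rack's heat load.

    Rearranges Q = m_dot * c_p * dT for the mass flow, then converts
    kg/s to litres per minute. Hypothetical helper, not a vendor formula.
    """
    if heat_load_w < 0:
        raise ValueError("heat load must be non-negative")
    if water_delta_t_k <= 0:
        raise ValueError("water temperature rise must be positive")
    mass_flow_kg_s = heat_load_w / (WATER_SPECIFIC_HEAT * water_delta_t_k)
    volume_flow_m3_s = mass_flow_kg_s / WATER_DENSITY
    return volume_flow_m3_s * 1000.0 * 60.0  # m^3/s -> L/min


if __name__ == "__main__":
    # Assumed example: a 30 kW rack with a 10 K rise across the coil.
    flow = water_flow_l_per_min(30_000.0, 10.0)
    print(f"Required flow: {flow:.1f} L/min")
```

Under these assumptions a 30 kW rack needs roughly 43 L/min of water. Doubling the allowed temperature rise halves the required flow, which is one reason warmer return water can reduce pumping effort.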

The primary advantage of this mechanism is the drastic reduction in the workload placed on the facility’s central computer room air conditioning (CRAC) units. By managing heat within the footprint of the rack itself, the system prevents the mixing of hot and cold air streams, a leading cause of inefficiency in older designs. Furthermore, because the heat is transferred to a liquid loop, it can be transported more efficiently to external heat rejection equipment like cooling towers or dry coolers, often enabling the use of warmer supply water and reducing the need for energy-intensive mechanical chilling.

What Are the Primary Differences Between Passive and Active RDHx Systems?

Passive RDHx units are designed for simplicity and rely entirely on the internal fans of the servers to move air through the cooling coils. Because they lack their own fans, these units do not consume additional electricity at the rack level, making them an attractive option for operators focused on minimizing the total power draw of their cooling hardware. However, this approach requires careful management of server airflow; the server fans must be powerful enough to overcome the static pressure created by the cooling coil without causing the hardware to overheat or significantly increasing internal fan speeds.

In contrast, active RDHx systems incorporate dedicated, high-efficiency fans within the door itself to pull exhaust air through the heat exchanger. This setup provides more consistent and predictable cooling performance, particularly in ultra-high-density environments where server fans alone might struggle to maintain optimal pressure. While active doors introduce a small additional power load, they often lead to a more efficient overall ecosystem by allowing server fans to run at lower, more stable speeds. This configuration is generally preferred for racks pushing the upper limits of air-cooling capacity, providing a safety net for mission-critical hardware.
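The passive-versus-active trade-off can be framed as a pressure budget: a passive door only works if the server fans have enough spare static pressure to push air through the coil. The sketch below is a rough screening aid, not a sizing method. It assumes the coil pressure drop scales with the square of airflow (a common simplification), and the function names, the 25% safety margin, and all numeric values are hypothetical.

```python
def coil_pressure_drop_pa(airflow_m3_s: float,
                          ref_airflow_m3_s: float,
                          ref_drop_pa: float) -> float:
    """Scale a reference coil pressure drop to another airflow.

    Assumes drop is proportional to airflow squared, which is a
    simplification; real coils should be checked against vendor data.
    """
    if ref_airflow_m3_s <= 0:
        raise ValueError("reference airflow must be positive")
    return ref_drop_pa * (airflow_m3_s / ref_airflow_m3_s) ** 2


def suggest_door_type(airflow_m3_s: float,
                      ref_airflow_m3_s: float,
                      ref_drop_pa: float,
                      fan_headroom_pa: float,
                      margin: float = 1.25) -> str:
    """Return "passive" if server fans can absorb the coil drop, else "active".

    fan_headroom_pa is the spare static pressure the server fans can deliver
    at the required airflow; margin is an assumed safety factor.
    """
    drop = coil_pressure_drop_pa(airflow_m3_s, ref_airflow_m3_s, ref_drop_pa)
    return "passive" if fan_headroom_pa >= drop * margin else "active"


if __name__ == "__main__":
    # Hypothetical door: 20 Pa drop at 1.0 m^3/s; servers offer 30 Pa headroom.
    print(suggest_door_type(1.0, 1.0, 20.0, 30.0))
    print(suggest_door_type(1.5, 1.0, 20.0, 30.0))
```

With these made-up numbers the first rack suits a passive door, while the second, which needs 50% more airflow, exceeds the fan headroom and points toward an active door.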

How Does RDHx Compare to Immersion or Direct-to-Chip Liquid Cooling?

When compared to more radical technologies like immersion cooling or direct-to-chip systems, RDHx stands out as a less invasive and more cost-effective bridge to liquid cooling. Direct-to-chip methods require specialized cold plates and intricate plumbing inside every server, while immersion cooling necessitates completely submerging hardware in dielectric fluid. Both require significant capital expenditure and often demand specialized server configurations. RDHx, however, offers a “drop-in” solution that works with standard, off-the-shelf server hardware, allowing operators to gain liquid cooling benefits without a total overhaul of their procurement processes.

Moreover, the implementation friction for RDHx is remarkably low. An operator can retrofit an existing data center largely by swapping rack doors and connecting them to a liquid loop, whereas moving to immersion usually requires a complete redesign of the floor layout and weight-loading calculations. While immersion and direct-to-chip systems offer higher thermal limits for extreme high-performance computing, RDHx provides a sufficient and much more affordable thermal ceiling for the vast majority of enterprise AI and cloud workloads. It serves as a pragmatic compromise that balances high-density requirements with the realities of existing facility constraints.

What Are the Main Challenges and Limitations of This Technology?

Despite its many benefits, RDHx is not a universal remedy and comes with specific logistical considerations. One of the most significant challenges involves the physical compatibility of the racks; non-standard or legacy rack dimensions can make it difficult to find a perfectly fitting door, potentially leading to air bypass and reduced efficiency. Additionally, the technology requires the installation of liquid manifolds and piping throughout the data center rows. For facilities that were originally designed as strictly air-cooled environments, introducing liquid to the white space can present a perceived risk of leaks, necessitating the use of leak detection systems and specialized hose couplings.

Another limitation is the point of diminishing returns in extremely varied environments. RDHx provides the best return on investment when deployed in high-density clusters where heat output is consistent. In a data center where power density is low or highly inconsistent across different racks, the fixed cost of the heat exchanger, often starting around five thousand dollars per unit, might not be justified compared to traditional aisle containment. Operators must also consider that for the absolute highest densities, such as experimental GPU clusters designed for direct liquid cooling, even a high-performance active RDHx may eventually reach its physical limit for heat rejection.
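The density argument above becomes clearer when the fixed door cost is spread over the heat each rack actually produces. The sketch below uses the roughly five-thousand-dollar starting price mentioned above; the function name and the 5 kW and 40 kW rack loads are illustrative assumptions, and the figure deliberately ignores piping, installation, and operating costs.

```python
def door_cost_per_kw(unit_cost_usd: float, rack_load_kw: float) -> float:
    """Capital cost of one RDHx door per kW of rack heat load.

    Hypothetical helper: covers only the door's purchase price, not
    manifolds, leak detection, installation, or energy.
    """
    if rack_load_kw <= 0:
        raise ValueError("rack load must be positive")
    return unit_cost_usd / rack_load_kw


if __name__ == "__main__":
    unit_cost = 5000.0  # starting price cited in the text, in USD
    for load_kw in (5.0, 40.0):  # assumed low- and high-density racks
        cost = door_cost_per_kw(unit_cost, load_kw)
        print(f"{load_kw:.0f} kW rack: ${cost:.0f} per kW")
```

On these assumptions the same door costs $1,000 per kW on a 5 kW rack but $125 per kW on a 40 kW rack, which is why low or uneven densities weaken the business case.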

Summary of Key Takeaways

The exploration of Rear-Door Heat Exchangers reveals a technology that is uniquely positioned to handle the current transition from traditional cloud computing to AI-driven high-density workloads. By capturing heat at the source, these systems significantly lower power usage effectiveness (PUE) and reduce the burden on facility-wide HVAC systems. The choice between passive and active configurations allows for flexibility based on server fan capacity and density needs. Most importantly, RDHx provides a scalable path to liquid cooling that avoids the extreme costs and hardware modifications required by immersion or direct-to-chip alternatives. This makes it an essential tool for operators who need to increase capacity within their existing physical footprint while maintaining a focus on energy efficiency.

Final Thoughts and Future Considerations

The decision to adopt RDHx technology marks a significant shift in how infrastructure managers approach thermal management. By moving away from the “brute force” method of room-level cooling, the industry is moving toward a more modular and precise philosophy. Organizations that implement these systems are better prepared for sudden spikes in power demand, because the rack-level approach allows incremental upgrades without disrupting the entire facility. Looking forward, the integration of smart sensors and automated fluid control will likely further refine these systems, making them even more responsive to real-time workload fluctuations. For any facility facing a density crisis, evaluating the compatibility of the existing plumbing and rack architecture with RDHx is the logical first step toward a more sustainable and high-performance future.