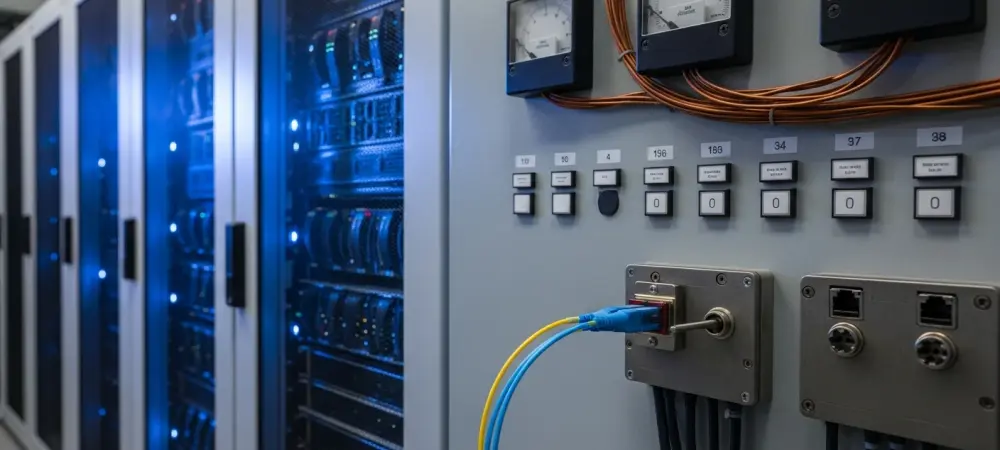

The silent operation of a power grid or water treatment facility often masks a terrifying technical debt that is rapidly becoming a liability in the face of quantum computing advancements. As 2026 progresses, the push for cryptographic readiness has moved from a theoretical discussion to a regulatory mandate, yet the physical reality of operational technology suggests a massive disconnect. Most industrial control systems were never designed with modern encryption in mind, let alone the complex requirements of post-quantum algorithms. This creates a situation where the digital defenses of critical infrastructure are increasingly out of sync with the physical hardware that actually keeps the lights on and the water flowing for millions of citizens. While Information Technology departments have spent the last few years rotating keys and upgrading servers, the Operational Technology side remains tethered to devices that prioritize physical reliability over digital agility. The resulting gap is not just a technical hurdle; it is a fundamental systemic vulnerability that leaves the very backbone of modern society exposed to sophisticated adversaries who are already planning for a post-classical computing era.

Architectural Constraints and Technical Barriers

Resource Limitations: The Constraints of Legacy Hardware

Many industrial controllers currently managing vital infrastructure operate under extreme resource constraints, sometimes with as little as 32KB of random-access memory. These devices lack the computational headroom and memory required to execute post-quantum cryptographic algorithms, whose keys, signatures, and working buffers are significantly larger than those of current elliptic curve standards. Because many of these systems were designed long before cybersecurity was a primary concern, they lack the flexibility to adapt to the heavy processing requirements of next-generation encryption. The silicon inside a typical programmable logic controller was never intended to perform the high-order polynomial multiplication or lattice-based calculations that define modern quantum resistance. Attempting to force these algorithms onto such limited hardware often results in unacceptable latency or total system failure, which is a non-starter for processes that require millisecond-level precision. This technical ceiling effectively anchors the security posture of critical infrastructure to the limitations of late-twentieth-century hardware design.
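To put the memory constraint in rough numbers, the following minimal sketch compares the stored key, ciphertext, and signature sizes of two NIST post-quantum parameter sets (ML-KEM-768 and ML-DSA-65) with their P-256 counterparts against a 32KB budget. The choice of parameter sets and the `footprint` helper are illustrative assumptions, and the figures cover only the objects themselves; the working memory an implementation needs during computation comes on top and varies by implementation.

```python
# Object sizes in bytes. The ML-KEM and ML-DSA figures are the published
# sizes for those parameter sets; P-256 figures use raw 64-byte encodings.
RAM_BUDGET = 32 * 1024  # the 32KB controller discussed above

SIZES = {
    "ECDH P-256 (classical)": {"public_key": 64, "private_key": 32},
    "ML-KEM-768": {
        "encapsulation_key": 1184,
        "decapsulation_key": 2400,
        "ciphertext": 1088,
    },
    "ECDSA P-256 (classical)": {"public_key": 64, "signature": 64},
    "ML-DSA-65": {"public_key": 1952, "signature": 3309},
}


def footprint(name):
    """Return (total bytes, share of RAM_BUDGET) for one scheme's objects."""
    total = sum(SIZES[name].values())
    return total, total / RAM_BUDGET


for name in SIZES:
    total, share = footprint(name)
    print(f"{name:26s} {total:5d} B  {share:6.1%} of RAM")
```

Under these assumptions, simply holding one ML-KEM-768 exchange or one ML-DSA-65 verification in memory consumes roughly a seventh of the device's RAM before any arithmetic begins, compared with well under one percent for the elliptic curve equivalents.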

Furthermore, the physical rigidity of these environments means that hardware replacement cycles are measured in decades rather than years. In a standard corporate office, a five-year-old laptop is considered ancient, but in a substation or a chemical plant, a controller installed at the turn of the century is often considered to be in the middle of its operational life. This longevity creates a massive stockpile of “un-patchable” assets that cannot be upgraded via a simple firmware push. Even if a vendor were to develop a quantum-resistant firmware update, the underlying processor might physically lack the registers or clock speed to execute it without crashing. The cost and logistical complexity of performing a “rip and replace” operation on a continental scale are so prohibitive that many asset owners are forced to look for external workarounds. These stop-gap measures, such as industrial firewalls or protocol converters, add complexity and potential points of failure without actually addressing the root cause: the hardware itself is cryptographically obsolete in a post-quantum world.

Visibility Gaps: The Challenge of Invisible Cryptography

A major hurdle in achieving readiness is the lack of visibility into where cryptographic functions actually reside within a complex and sprawling operational environment. Encryption is often buried deep within forgotten software libraries or hard-coded into firmware that is difficult, if not impossible, to update without physical access or direct vendor intervention. Without specialized tools to interrogate hardware and create an accurate inventory of existing cryptographic assets, infrastructure owners cannot effectively plan for a migration they cannot even map. Many organizations find themselves in a position where they are running proprietary code from vendors that may no longer exist, or using libraries that have not been audited in over a decade. This “shadow cryptography” makes it nearly impossible to determine which systems are vulnerable to quantum-assisted attacks and which are using standard-compliant protocols. The lack of a clear cryptographic bill of materials for industrial devices leaves security teams blind to the specific risks hidden within their own control networks.
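As a rough illustration of what a cryptographic bill of materials could look like for control equipment, the sketch below models inventory entries and sorts them into quantum-vulnerable, unknown, and other. The `CryptoAsset` fields, the device names, and the `triage` function are all hypothetical; a real inventory would be populated by discovery tooling rather than typed in by hand.

```python
from dataclasses import dataclass

# Public-key families that Shor's algorithm would break on a sufficiently
# large quantum computer.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "DH", "DSA"}


@dataclass
class CryptoAsset:
    device: str      # hypothetical asset tag
    firmware: str    # firmware version as observed in the field
    algorithm: str   # algorithm family, or "UNKNOWN" if not yet determined
    purpose: str     # e.g. "firmware signing", "session key exchange"


def triage(assets):
    """Group assets by how urgently they need post-quantum attention."""
    report = {"vulnerable": [], "unknown": [], "other": []}
    for asset in assets:
        family = asset.algorithm.upper()
        if family in QUANTUM_VULNERABLE:
            report["vulnerable"].append(asset)
        elif family == "UNKNOWN":
            report["unknown"].append(asset)   # the "shadow cryptography" bucket
        else:
            report["other"].append(asset)     # not broken by Shor; still review
    return report


# Hypothetical entries for illustration only.
inventory = [
    CryptoAsset("PLC-SUB-014", "2.3.1", "RSA", "firmware signing"),
    CryptoAsset("RTU-PUMP-07", "1.0.9", "UNKNOWN", "vendor remote access"),
    CryptoAsset("HMI-CTRL-02", "5.2.0", "AES", "historian link encryption"),
    CryptoAsset("GW-EDGE-01", "3.4.0", "ECDH", "session key exchange"),
]

report = triage(inventory)
for bucket, items in report.items():
    print(bucket, [a.device for a in items])
```

The "unknown" bucket is the point of the exercise: every entry there marks a device whose migration cannot be planned until someone determines what cryptography it actually runs.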

Moreover, the interrogation of these devices is often hindered by the very protocols they use to communicate. Traditional IT scanning tools can inadvertently crash sensitive industrial equipment by sending unexpected packets, leading to a culture of caution that prevents deep technical audits. Consequently, many asset owners rely on outdated documentation or manual inventories that fail to capture the reality of the firmware versions currently running in the field. This lack of transparency extends to the supply chain, where components from multiple sub-vendors are integrated into a single industrial device, each bringing its own set of cryptographic dependencies and vulnerabilities. To achieve true readiness, the industry must develop non-intrusive methods for discovering and analyzing embedded encryption. Until then, any plan for a post-quantum transition remains a theoretical exercise based on incomplete data. The inability to see the problem clearly ensures that the most critical vulnerabilities remain hidden until they are eventually exploited by an adversary with superior technical capabilities.

The Evolving Nature of Quantum Threats

Data Harvesting: The Long-Term Risks of HNDL Strategies

The threat of quantum computing is not a distant concern but a present-day reality through the “Harvest Now, Decrypt Later” (HNDL) strategy employed by sophisticated adversaries. State-sponsored actors are currently collecting vast amounts of encrypted traffic from critical infrastructure, banking on the future ability of quantum computers to break today’s encryption standards. This means that sensitive data stolen today acts as a “time bomb,” waiting for technology to catch up so it can be exploited in the coming years. For a utility provider, this could mean that network maps, authentication tokens, or operational schedules captured in 2026 become readable to an attacker once a sufficiently capable machine exists, possibly by the end of the decade. The value of this data does not expire quickly; the layout of a power grid or the logic of a water filtration plant remains relevant for many years. By the time quantum computers are powerful enough to run Shor’s algorithm against the public keys that protected that traffic, the historical data collected today could provide a blueprint for a physical attack on the infrastructure.

Building on this risk, the shift in adversary behavior suggests that the focus is moving from immediate disruption to long-term strategic positioning. When a threat actor successfully exfiltrates encrypted configuration files or administrative credentials, they are effectively securing a future key to the kingdom. Even if an organization upgrades its security protocols tomorrow, the historical data remains in the hands of the adversary, potentially revealing architectural secrets that cannot be changed without massive capital expenditure. This creates a persistent state of vulnerability where the “ghost” of past security failures haunts the future stability of the grid. The current inability to prevent data harvesting means that even if the industry reaches quantum readiness by 2030, the damage may have already been done during the mid-2020s. The long shelf life of industrial data makes it an ideal target for this type of delayed exploitation, turning today’s encryption into nothing more than a temporary delay for a well-funded and patient adversary.
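One common way to reason about this exposure is Mosca's inequality: if the number of years the data must stay secret (X) plus the years needed to migrate (Y) exceeds the years until a cryptographically relevant quantum computer exists (Z), some data will be exposed. The sketch below applies it; the function name and every input value are hypothetical placeholders, not estimates drawn from this article.

```python
def exposure_years(shelf_life, migration_time, years_to_quantum):
    """Years of exposure under Mosca's inequality (X + Y > Z), or 0 if none.

    shelf_life        X: how long the data must remain confidential
    migration_time    Y: how long the move to quantum-safe crypto will take
    years_to_quantum  Z: assumed time until a capable quantum computer exists
    """
    return max(0, shelf_life + migration_time - years_to_quantum)


# Hypothetical inputs: grid topology data that stays sensitive for 15 years,
# a 4-year migration, and a capable quantum computer assumed 10 years out.
print(exposure_years(shelf_life=15, migration_time=4, years_to_quantum=10))  # 9

# Short-lived data with a fast migration is not exposed under the same Z.
print(exposure_years(shelf_life=1, migration_time=2, years_to_quantum=10))   # 0
```

The long shelf life of industrial data dominates the result: even an instant migration (Y = 0) leaves traffic captured today exposed whenever X alone is larger than Z.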

Forged Trust: The Danger of Compromised Signing Keys

Beyond data theft, quantum advancements pose a significant risk to the integrity of system updates through the potential forgery of firmware signatures. An attacker who already holds a vendor’s private signing key needs no quantum computer at all; the quantum-specific danger is that the public verification keys and signed firmware images collected today could allow a future quantum computer to derive the matching private keys, letting the attacker sign malicious code that devices will accept as legitimate. This creates a permanent backdoor, enabling “ghost” access where an adversary can push updates to the grid without ever having to break through traditional perimeter defenses again. In an environment where trust is established through digital signatures, the ability to forge those signatures is the ultimate weapon. Once a malicious update is accepted by a controller, it can lie dormant for years, bypassing all traditional security monitoring because it is perceived as a “trusted” part of the system. This type of attack targets the very foundation of the OT security model, which relies on the assumption that only the manufacturer can modify the device’s core logic.

Furthermore, the centralized nature of firmware distribution means that a single compromise at the vendor level can have a cascading effect across thousands of utility providers. If a quantum-capable adversary can forge a signature for a widely used industrial controller, they can theoretically compromise an entire sector of the economy with a single update. This risk is exacerbated by the fact that many industrial devices do not have a mechanism to revoke compromised keys or verify the integrity of their firmware against a secondary source. The “Trust Now, Forge Later” scenario is particularly dangerous because it does not require the attacker to have a quantum computer today; they only need to collect the public keys and signed images now and wait for the hardware to catch up. This long-term threat to the integrity of the supply chain necessitates a move toward multi-signature schemes and more robust hardware-based roots of trust that are inherently resistant to quantum cryptanalysis. Without these changes, the entire update mechanism of critical infrastructure remains a glaring single point of failure.
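To illustrate what a signature that resists quantum cryptanalysis can look like, here is a minimal sketch of a Lamport one-time signature. Its security rests only on a hash function, not on the factoring or discrete-log problems that Shor's algorithm breaks. This is a teaching construction chosen for brevity, not a scheme the article names and not a production design: each key pair may sign exactly one message, and real deployments would use a standardized hash-based or lattice-based scheme. The firmware bytes are a hypothetical placeholder.

```python
import hashlib
import secrets


def _h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _bits(message: bytes):
    """The 256 bits of SHA-256(message), most significant bit first."""
    digest = _h(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(256)]


def keygen():
    # 256 pairs of random secrets; the public key is the hash of each secret.
    sk = [(secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(256)]
    pk = [(_h(zero), _h(one)) for zero, one in sk]
    return sk, pk


def sign(sk, message: bytes):
    # Reveal one secret per digest bit. A key must never sign a second message.
    return [sk[i][bit] for i, bit in enumerate(_bits(message))]


def verify(pk, message: bytes, signature) -> bool:
    if len(signature) != 256:
        return False
    return all(
        _h(revealed) == pk[i][bit]
        for i, (revealed, bit) in enumerate(zip(signature, _bits(message)))
    )


sk, pk = keygen()
firmware = b"hypothetical firmware image v2.1"
sig = sign(sk, firmware)

print(verify(pk, firmware, sig))                 # True
print(verify(pk, firmware + b" tampered", sig))  # False
```

The trade-off is size: this signature is 8,192 bytes and the public key 16,384 bytes, which shows why quantum-resistant verification presses hard against the memory limits of legacy controllers.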

Regulatory Misalignment and the Illusion of Safety

Paperwork Security: The Paradox of Empty Attestations

Current regulatory frameworks for cryptographic readiness are largely derived from IT standards, making them ill-suited for the unique constraints of the industrial world. When asset owners are forced to sign attestations of readiness without the proper tools to verify their own systems, it leads to “empty attestations” that prioritize administrative compliance over actual defense. This “check-the-box” mentality provides a false sense of security to the public while the actual technical vulnerabilities remain unaddressed on the plant floor. Regulators often demand an inventory of all cryptographic assets, but for an operator of a hundred substations filled with legacy gear, providing a truly accurate list is a technical impossibility with current tools. As a result, many organizations focus on the legal aspects of compliance—ensuring the paperwork is filed and the boxes are checked—rather than engaging in the difficult and expensive work of hardware remediation.

This trend toward “security by paperwork” creates a dangerous feedback loop where both the regulator and the regulated believe that progress is being made while the underlying risk remains static. If an auditor accepts a high-level summary as proof of readiness, the asset owner has no incentive to dig deeper into the actual firmware of their devices. This administrative layer acts as a buffer that hides the severity of the problem from policymakers who might otherwise allocate the funding necessary for a true technological transition. The reliance on IT-centric frameworks also means that many OT-specific nuances, such as the inability to patch without a scheduled outage, are ignored in favor of standardized reporting. To move past this illusion of safety, regulatory requirements must be grounded in the technical realities of the field, acknowledging that “ready” for a 1995-era controller looks very different from “ready” for a modern cloud server. Without this shift, the industry risks building a mountain of compliance documents that will provide zero protection when a real threat emerges.

Strategic Resilience: The Need for OT-Specific Solutions

Achieving true resilience against quantum threats requires a departure from the path of least resistance and a move toward specialized tools for industrial environments. Meaningful security can only emerge from long-term investment in hardware that supports new standards and from frameworks designed for the “un-patchable” nature of OT. One practical route is the protocol-agnostic security wrapper, which allows legacy devices to participate in modern cryptographic exchanges without internal hardware upgrades. By placing a quantum-resistant gateway in front of older controllers, engineers can create a “protected zone” that is updated as standards evolve without disrupting the underlying process. This approach recognizes that the physical equipment might last another twenty years, so the security layer has to be decoupled from the hardware lifecycle. The result is a more flexible and realistic defense posture.
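The gateway idea can be sketched as an envelope that authenticates an unmodified legacy frame and carries an algorithm identifier, so the protection can change without touching the controller. Everything here is an assumption for illustration: the envelope layout, the one-byte identifiers, and the pre-shared key. The sketch covers integrity only, with no confidentiality or replay protection, and a real gateway would establish its keys through a post-quantum key exchange rather than pre-sharing them.

```python
import hashlib
import hmac

# Registry of MAC algorithms keyed by a one-byte identifier (hypothetical IDs).
# Supporting a new algorithm means adding an entry here, on the gateway only.
ALGORITHMS = {
    1: lambda key, data: hmac.new(key, data, hashlib.sha256).digest(),
    2: lambda key, data: hmac.new(key, data, hashlib.sha512).digest(),
}


def wrap(alg_id: int, key: bytes, frame: bytes) -> bytes:
    """Envelope layout: alg_id (1 byte) | tag length (1 byte) | tag | frame."""
    tag = ALGORITHMS[alg_id](key, bytes([alg_id]) + frame)
    return bytes([alg_id, len(tag)]) + tag + frame


def unwrap(key: bytes, envelope: bytes) -> bytes:
    """Verify the envelope and return the original legacy frame unchanged."""
    if len(envelope) < 2:
        raise ValueError("envelope too short")
    alg_id, tag_len = envelope[0], envelope[1]
    if alg_id not in ALGORITHMS:
        raise ValueError("unknown algorithm identifier")
    tag = envelope[2:2 + tag_len]
    frame = envelope[2 + tag_len:]
    expected = ALGORITHMS[alg_id](key, bytes([alg_id]) + frame)
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed")
    return frame


key = bytes(32)  # placeholder pre-shared key; never use an all-zero key
legacy_frame = b"\x01\x03\x00\x00\x00\x02"  # illustrative request bytes

envelope = wrap(1, key, legacy_frame)
print(unwrap(key, envelope) == legacy_frame)  # True
```

Because the identifier is bound into the authenticated data, switching from algorithm 1 to algorithm 2 is a gateway configuration change, while the controller behind it keeps receiving exactly the frame it always did.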

Ultimately, the transition to post-quantum security in the industrial sector must be treated as a generational shift rather than a simple software patch. Stakeholders need to move away from generic IT templates and adopt specialized maturity models that account for the constraints of industrial control systems. These models should prioritize the visibility of cryptographic assets and the implementation of defense-in-depth strategies that do not rely on a single algorithm for protection. The industry also stands to benefit from closer collaboration between manufacturers and operators to ensure that future hardware is “crypto-agile,” allowing cryptographic primitives to be swapped without a full firmware rebuild. By addressing the technical and visibility gaps inherent in legacy systems, the infrastructure supporting modern life can begin to move toward a state of genuine readiness. Only then will the vital services upon which society depends stop operating in a state of unacknowledged peril and instead rest on a robust, adaptable digital foundation.