The glistening promise of an autonomous enterprise often shatters against the reality of a fragmented database that cannot distinguish a customer’s lifetime value from a simple transaction code. For several years, the technology sector has remained fixated on the sheer cognitive acrobatics of large language models, treating every incremental update to GPT or Claude as a definitive solution to complex business problems. This model-centric perspective suggests that the algorithm is the brain and therefore the most vital organ of the modern company. However, a sobering reality is currently sweeping through corporate boardrooms as organizations realize that even the most brilliant model is ineffective without a structural foundation.

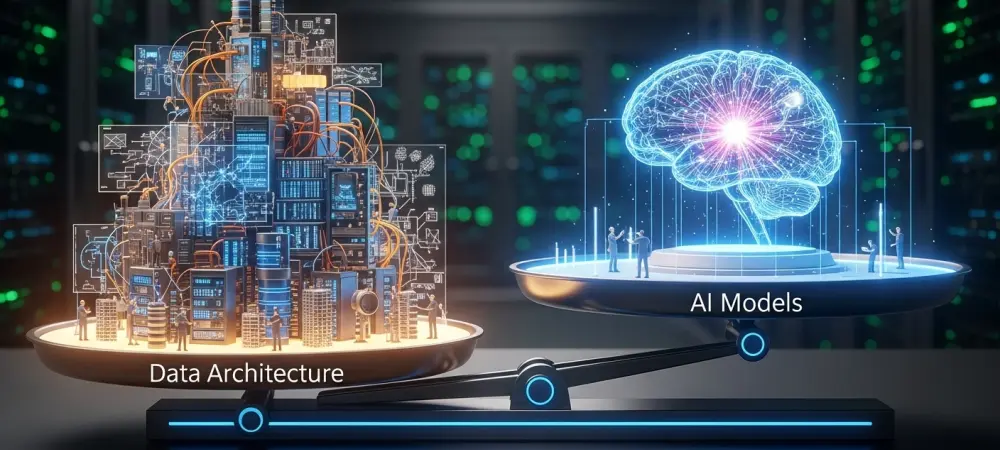

A sophisticated artificial intelligence model paired with a chaotic data foundation resembles a high-performance Ferrari engine mounted onto the frame of a rusted bicycle. While the engine possesses the theoretical capacity for incredible speed and torque, the underlying structure lacks the integrity to handle the power, leading to an inevitable and expensive mechanical failure. The true bottleneck in the current technological landscape is not a lack of mathematical intelligence, but rather the structural inability of traditional data systems to feed that intelligence the context it requires to function reliably.

The Illusion of the Intelligent Algorithm

The fascination with human-like reasoning in software has led many to believe that the model itself is the ultimate driver of efficiency. Business leaders often assume that deploying a state-of-the-art model will automatically fix deep-seated operational friction. This focus on the “magic” of the algorithm ignores the fact that a model is only as useful as the specific, high-quality information it can access in real time. Without a clean data pipeline, these models frequently generate confident but entirely fabricated answers, essentially hallucinating based on the noise within an unorganized system.

The cost of maintaining this illusion is becoming prohibitively high for those who ignore the plumbing of their digital estates. When a model attempts to reason through inconsistent datasets or poorly labeled metadata, it consumes excessive compute power to resolve contradictions. The industry is reaching a consensus that the “intelligence” perceived by the end-user is actually the byproduct of how well the data has been curated and served to the model, rather than just the number of parameters the model contains.

Why the Architecture-First Shift is Non-Negotiable

As early experimental pilots transition into widespread enterprise rollouts, many organizations are hitting a metaphorical wall. The excitement of a successful proof-of-concept often evaporates when the system is asked to handle the complexity of an entire global supply chain or a massive customer support database. This failure is rarely due to a deficiency in the AI model; instead, it stems from the fragility of unoptimized data pipelines that cannot sustain the rigors of production-level demands. Infrastructure that worked for a small test group often collapses under the weight of real-world scale.

The financial consequences of neglecting architecture are equally severe, with a significant percentage of initiatives being abandoned before they generate any return on investment. High compute costs and inefficient storage methods create a scenario where the operational expense of the AI exceeds the value it provides. Furthermore, the lack of a traceable architecture makes it nearly impossible to satisfy the strict requirements of contemporary privacy regulations like GDPR or HIPAA. Without a transparent data foundation, the AI remains a “black box” that fails internal audits and external compliance checks, posing a significant liability to the organization.

Deconstructing the Data Foundation: Beyond the Model

Understanding the dominance of architecture requires looking at the actual mechanics that drive reliability. A common misconception persists that simply increasing the volume of data will improve the intelligence of the system. In reality, massive data growth without a governing structure leads to the “Scaling Paradox,” where more information actually results in more confusion. When an AI ingests departmental silos that contain conflicting records, the output becomes unreliable. Architecture acts as the essential governor that prevents this digital sprawl from becoming a permanent liability.

A modern foundation also requires the decoupling of storage and compute to ensure elasticity. In older, monolithic systems, these two layers are joined, meaning an organization must pay to scale both even if it needs only more processing power. By separating them, companies can scale up compute resources during heavy reasoning tasks and scale down during idle periods, so that compute is billed only while it is in use and storage is billed on its own. This structural shift transforms the data environment from a rigid expense into a flexible asset that can adapt to the fluctuating needs of artificial intelligence workloads.
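To make the cost argument concrete, here is a minimal sketch comparing a coupled cluster, sized once for peak demand, with a decoupled setup billed per hour of actual use. All prices, node sizes, and the demand profile are hypothetical numbers chosen for illustration, not figures from any vendor.

```python
from dataclasses import dataclass
from math import ceil


@dataclass
class HourlyDemand:
    compute_units: int  # compute units needed during this hour
    storage_tb: int     # terabytes that must stay online during this hour


# Hypothetical prices, in cents per hour, to keep the arithmetic in integers.
COMPUTE_PRICE = 200   # per compute unit
STORAGE_PRICE = 5     # per terabyte

# Hypothetical coupled node: compute and storage are sold only as a bundle.
NODE_COMPUTE = 4
NODE_STORAGE_TB = 10


def coupled_cost(demand):
    """Fixed-size cluster provisioned for the peak of either resource."""
    nodes_for_compute = max(ceil(h.compute_units / NODE_COMPUTE) for h in demand)
    nodes_for_storage = max(ceil(h.storage_tb / NODE_STORAGE_TB) for h in demand)
    nodes = max(nodes_for_compute, nodes_for_storage)
    node_price = NODE_COMPUTE * COMPUTE_PRICE + NODE_STORAGE_TB * STORAGE_PRICE
    return nodes * node_price * len(demand)


def decoupled_cost(demand):
    """Compute and storage are billed separately, hour by hour."""
    return sum(
        h.compute_units * COMPUTE_PRICE + h.storage_tb * STORAGE_PRICE
        for h in demand
    )


# One day: 4 busy hours of heavy reasoning, 20 quiet hours, constant storage.
day = [HourlyDemand(40, 100)] * 4 + [HourlyDemand(4, 100)] * 20

print("coupled:  ", coupled_cost(day), "cents")
print("decoupled:", decoupled_cost(day), "cents")
```

In this toy profile the coupled cluster pays for peak compute around the clock, while the decoupled setup pays for it only during the four busy hours. Real savings depend entirely on how spiky the workload is.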

Expert Perspectives on Engineering vs. Intelligence

Field veterans are increasingly vocal about the reality that successful artificial intelligence implementation is roughly 80 percent data engineering and only 20 percent modeling. Pratik Jain, a prominent figure in the industry, has observed that the path toward functional AI is frequently blocked by the lack of a robust, elastic foundation. He points out that while the models receive the headlines and the public acclaim, the underlying infrastructure determines whether a project delivers actual savings or becomes a bottomless pit for the budget. On this view, “choked” pipelines (those struggling with unoptimized and messy data) are a far greater threat to success than the technical limitations of the models themselves.

This perspective shifts the focus from the search for the “perfect” algorithm to the construction of a reliable data highway. Research consistently indicates that organizations prioritizing their data architecture see a much higher rate of successful production deployments compared to those that focus solely on model selection. The consensus among engineers is that a mediocre model supported by a world-class data architecture will consistently outperform a world-class model supported by a mediocre data architecture. It is the quality and accessibility of the input, rather than the complexity of the processor, that defines the limit of what the system can achieve.

Strategies for Building a High-Performance AI Infrastructure

Constructing an architecture designed for longevity requires a fundamental change in strategy, moving from a model-first to a foundation-first mindset. One of the most effective methods involves implementing a hybrid Retrieval-Augmented Generation framework. Instead of the expensive and time-consuming process of constant model fine-tuning, this approach provides the AI with real-time context from existing databases. By using vector embeddings to represent unstructured information, the system can pinpoint relevant data points almost instantly. This reduces the number of calls to high-cost models and ensures the information provided to the user is both current and grounded in fact.

Strategic model routing is another pillar of a smart architecture. Not every internal query requires the power of a trillion-parameter reasoning engine; a well-designed system can route simpler tasks to smaller, more cost-effective models while reserving premium compute power for complex problem-solving.

Finally, establishing a governed access point through a semantic layer ensures that the AI only interacts with authorized data. This semantic layer provides the necessary business logic and context, allowing the AI to understand the “why” behind the numbers without needing to move or duplicate sensitive information.
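The retrieval and routing ideas above can be sketched in a few lines. This is an illustration under loud assumptions: `embed` is a toy bag-of-words stand-in for a real embedding model, `DOCUMENTS` is made-up sample data, and the model names and the word-count threshold in `route` are hypothetical. A production system would use learned embeddings, a vector database, and a far richer routing signal.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical knowledge base, embedded once up front.
DOCUMENTS = [
    "refund policy for enterprise customers",
    "warehouse shipping delays in the supply chain",
    "customer lifetime value by region",
]
INDEX = [(doc, embed(doc)) for doc in DOCUMENTS]


def retrieve(query, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(INDEX, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]


def route(query, word_limit=8):
    """Hypothetical routing rule: short queries go to the cheaper model."""
    return "small-model" if len(query.split()) <= word_limit else "large-model"


query = "what is the refund policy"
context = retrieve(query)       # grounding text to place in the prompt
model = route(query)            # which model tier should answer
print(model, "<-", context)
```

The retrieved text would be placed in the prompt as context, so the model answers from current records instead of from whatever it memorized during training. The routing rule is deliberately crude; the point is only that the decision happens in the architecture, before any expensive model is called.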

The organizations that emerge as leaders in the digital landscape do so by recognizing that the data foundation is the true source of competitive advantage. They move away from chasing the latest model iterations and instead focus on the meticulous engineering required to make their data accessible, clean, and secure. This shift in perspective allows them to bypass the financial and technical hurdles that stall their competitors. The transition toward a truly intelligent enterprise requires more than just selecting the right software vendor; it demands a total commitment to the structural integrity of the data itself. By treating architecture as the primary concern, these organizations ensure that their technological investments remain scalable and profitable long after the initial hype of the algorithm has faded.