Modern medical devices and autonomous transport systems rely on millions of lines of sophisticated code that must interact flawlessly with physical sensors and actuators under strict real-time constraints. The days of “fire and forget” firmware are over. A car or a diagnostic tool is now essentially a high-performance computer wrapped in specialized casing. As embedded codebases grow rapidly, the traditional hardware-first approach, in which software waits for finished hardware and is validated late, has reached a breaking point. The resulting friction often leads to costly recalls and missed market windows that can cripple a brand. Engineering teams now face a daunting challenge: maintaining the uncompromising safety of physical systems while adopting the fast delivery cycles of cloud software. The solution lies in a specialized adaptation of DevOps that bridges the gap between rigid silicon and fluid software.

The High Stakes of the Software-Defined Hardware Era

The transition to software-defined hardware has fundamentally altered the risk profile of industrial and consumer engineering. In the past, a hardware product was considered “done” once it left the factory floor, but the current market demands continuous improvement and ongoing security vigilance. When a vulnerability is discovered in a connected thermostat or an industrial controller, customers expect a patch almost immediately. Without a robust delivery pipeline, however, these updates can introduce regressions that cause catastrophic physical failures. As software and hardware converge, a software bug is no longer just a digital nuisance; it is a potential mechanical breakdown.

Furthermore, the economic pressures of global competition have squeezed development timelines to their absolute limit. Companies can no longer afford the luxury of sequential development where software waits for final hardware revisions. This environment necessitates a shift toward concurrent engineering where the digital and physical components evolve in a symbiotic loop. By adopting a DevOps mindset, organizations can treat firmware as a living entity, capable of evolving safely throughout the entire lifecycle of the product. This approach not only mitigates the risk of post-launch failures but also allows for the monetization of new features via over-the-air updates, turning a static piece of hardware into a platform for ongoing value.

Navigating the Legacy Constraints of Embedded Development

Embedded systems were long insulated from modern software practices because of their tight coupling to physical components and their strict regulatory oversight. The most significant obstacle has always been the hardware bottleneck: software development was held hostage by the availability of physical prototypes. This dependency led to the notorious phenomenon of integration hell, in which developers discovered critical timing issues or resource conflicts only in the final stages of a project. Because the software could not be fully tested without the physical board, the end of the development cycle became a high-stress period of manual troubleshooting and frantic code patching.

Beyond the hardware itself, the compliance burden in sectors like aerospace and automotive creates substantial administrative drag. Manual documentation and safety audits can significantly lengthen the time required to bring a product to market, in some cases doubling it. In these regulated environments, every change must be traced from a high-level requirement down to a specific line of code and its corresponding test result. This paper-heavy process was designed for a world of infrequent updates and is poorly suited to the modern need for rapid security patches. The rise of the Internet of Things has made purely manual validation cycles obsolete, because the window for addressing a security threat is now measured in hours rather than months.
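The requirement-to-code-to-test chain described above is the kind of bookkeeping a pipeline can check on every commit instead of leaving it to manual audits. The sketch below assumes a hypothetical `TraceLink` record (requirement ID, commit SHA, and per-test verdicts) exported by the team's requirements and CI tooling; the record layout and the `find_trace_gaps` helper are illustrative assumptions, not a standard schema or any specific tool's API.

```python
from dataclasses import dataclass, field


@dataclass
class TraceLink:
    """Hypothetical record linking one requirement to one commit and its test verdicts."""
    requirement_id: str
    commit_sha: str
    test_results: dict[str, bool] = field(default_factory=dict)  # test name -> passed?


def find_trace_gaps(requirements: list[str], links: list[TraceLink]) -> list[str]:
    """Return one message per broken requirement -> commit -> test chain (empty = fully traced)."""
    links_by_req: dict[str, list[TraceLink]] = {}
    for link in links:
        links_by_req.setdefault(link.requirement_id, []).append(link)

    gaps: list[str] = []
    for req in requirements:
        req_links = links_by_req.get(req, [])
        if not req_links:
            gaps.append(f"{req}: no linked commit")
            continue
        for link in req_links:
            if not link.test_results:
                gaps.append(f"{req}: commit {link.commit_sha} has no test evidence")
            for test_name, passed in sorted(link.test_results.items()):
                if not passed:
                    gaps.append(f"{req}: test {test_name} failed on {link.commit_sha}")

    # Links pointing at IDs missing from the requirements baseline are also a defect.
    for orphan in sorted(set(links_by_req) - set(requirements)):
        gaps.append(f"{orphan}: linked but not in the requirements baseline")
    return gaps


if __name__ == "__main__":
    requirements = ["REQ-001", "REQ-002", "REQ-003"]
    links = [
        TraceLink("REQ-001", "a1b2c3d", {"test_watchdog_reset": True}),
        TraceLink("REQ-002", "e4f5a6b", {"test_adc_range": False}),
    ]
    for gap in find_trace_gaps(requirements, links):
        print(gap)
```

Run on every merge, a non-empty result could fail the pipeline, and the same records could be exported as the traceability report that auditors review.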

The Architectural Pillars of Embedded DevOps

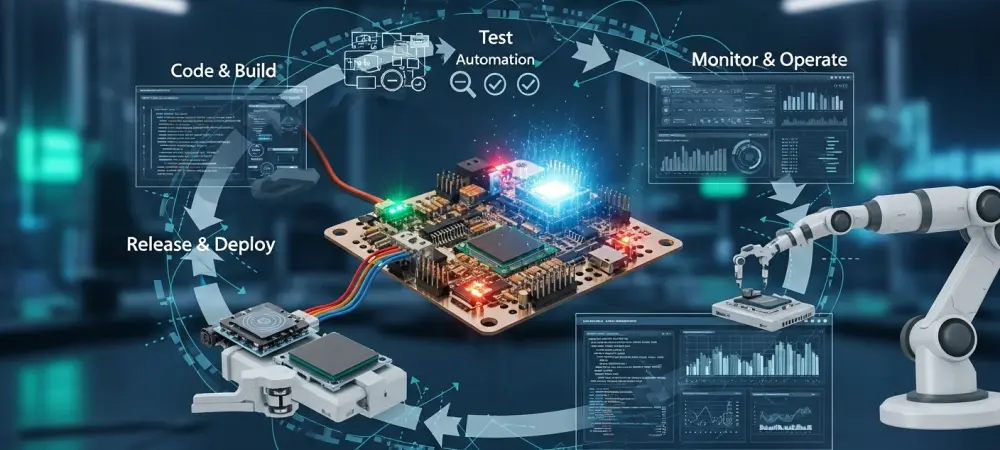

Applying DevOps to firmware requires more than installing a common continuous integration tool; it requires restructuring how code interacts with target hardware. One primary pillar is moving away from large, infrequent monolithic merges toward daily code integration. When firmware is built and cross-compiled for multiple hardware variants on every change, teams can identify architectural drift before it becomes a structural problem. This automation helps keep the software portable and resilient even as the underlying silicon changes through board revisions or supplier switches.

Another essential pillar is automated validation through virtualization. Modern teams use simulation environments and digital twins to replace manual lab testing with automated regression suites. These virtual targets let developers run thousands of tests in parallel in the cloud long before a physical prototype is manufactured. To ensure the integrity of these tests, practitioners use deterministic build environments based on containerized toolchains. Pinning the compiler, libraries, and build tools inside a container image makes builds far more reproducible and removes the common “it works on my machine” discrepancy that plagues distributed engineering teams.

Finally, a digital thread must be established to provide automated traceability, linking requirements to commits and test artifacts so that regulatory demands can be satisfied without manual intervention.
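The reproducibility promised by containerized toolchains can be verified by the pipeline itself: build the same commit twice in independent runs and compare the outputs byte for byte. The sketch below assumes each run writes its artifacts to its own directory; `compare_builds` and `sha256_of` are illustrative helpers, not part of any particular CI product. Mismatches typically point at non-deterministic inputs such as C's `__DATE__`/`__TIME__` macros or unpinned tool versions.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash an artifact in chunks so large firmware images do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def compare_builds(first_dir: Path, second_dir: Path) -> dict[str, str]:
    """Compare two build output trees; return {relative path: problem} for every mismatch."""
    first = {p.relative_to(first_dir): p for p in first_dir.rglob("*") if p.is_file()}
    second = {p.relative_to(second_dir): p for p in second_dir.rglob("*") if p.is_file()}
    problems: dict[str, str] = {}
    for rel in sorted(first.keys() | second.keys()):
        if rel not in second:
            problems[str(rel)] = "missing from second build"
        elif rel not in first:
            problems[str(rel)] = "missing from first build"
        elif sha256_of(first[rel]) != sha256_of(second[rel]):
            problems[str(rel)] = "content differs"
    return problems


if __name__ == "__main__":
    # Simulate two pipeline runs that produced identical firmware images.
    with tempfile.TemporaryDirectory() as a, tempfile.TemporaryDirectory() as b:
        for out_dir in (a, b):
            (Path(out_dir) / "firmware.bin").write_bytes(b"\x7fELF-demo")
        mismatches = compare_builds(Path(a), Path(b))
        print("reproducible" if not mismatches else mismatches)
```

Running this check on a schedule, rather than only when something breaks, catches drift in the container image before it reaches a release.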

Technical Execution through Hardware-in-the-Loop Automation

Modern embedded pipelines use specialized infrastructure to provide real-time feedback on how code performs on actual silicon. Practitioners increasingly advocate connecting physical test benches directly to the continuous integration pipeline, a practice known as Hardware-in-the-Loop (HIL) automation. In this setup, the pipeline automatically flashes firmware onto real microcontrollers and monitors its behavior as soon as a developer pushes code. Because no human has to interface with the hardware manually, the feedback loop shrinks from days to minutes, enabling far more aggressive experimentation and refinement.
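A minimal sketch of one HIL pipeline step, assuming a hypothetical `HilTarget` interface that wraps a bench's flashing probe and serial console. On a real bench, `flash` would invoke the team's flashing tool and `read_line` would read from the board's UART; here a `FakeTarget` stands in so the logic runs without hardware. Treating an expected boot banner as the pass criterion is an assumed convention, not a standard.

```python
import time
from typing import Optional, Protocol


class HilTarget(Protocol):
    """Hypothetical bench interface: a debug probe for flashing plus a serial console."""

    def flash(self, image: bytes) -> None: ...

    def read_line(self, timeout_s: float) -> Optional[str]: ...


def run_boot_check(target: HilTarget, image: bytes, expected: str,
                   timeout_s: float = 10.0) -> tuple[bool, list[str]]:
    """Flash the image, then watch the console until `expected` appears or time runs out.

    Returns (passed, captured_log) so the CI job can attach the log as an artifact.
    """
    target.flash(image)
    log: list[str] = []
    deadline = time.monotonic() + timeout_s
    while (remaining := deadline - time.monotonic()) > 0:
        line = target.read_line(timeout_s=remaining)
        if line is None:  # console went quiet within the remaining window
            break
        log.append(line)
        if expected in line:
            return True, log
    return False, log


class FakeTarget:
    """Stand-in for real hardware that replays canned console output."""

    def __init__(self, boot_output: list[str]):
        self._pending = list(boot_output)
        self.flashed: Optional[bytes] = None

    def flash(self, image: bytes) -> None:
        self.flashed = image

    def read_line(self, timeout_s: float) -> Optional[str]:
        return self._pending.pop(0) if self._pending else None


if __name__ == "__main__":
    bench = FakeTarget(["ROM bootloader", "clock init ok", "APP READY build=abc123"])
    passed, log = run_boot_check(bench, b"\x00" * 16, expected="APP READY")
    print("PASS" if passed else "FAIL", log)
```

Keeping the bench behind a small interface lets the same test logic run against a simulator early in the project and against real boards once prototypes exist.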

These automated benches do more than check for logic errors; they also capture real-time telemetry. By automatically collecting power consumption, memory usage, and timing data, teams can identify performance regressions long before the product reaches the field. This insight is crucial for battery-operated devices, where a small increase in processor utilization can significantly shorten battery life. The same infrastructure also supports a shift-left security model. Identifying vulnerabilities during initial coding rather than during final quality assurance is widely cited as cutting the cost of a fix by roughly an order of magnitude. Automated static analysis and fuzzing become non-negotiable pipeline steps that help harden the device against attack from the very first build.
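The telemetry-based regression gate described above can be sketched as a comparison against a stored baseline. The metric names, percentage budgets, and the `Budget`/`find_regressions` helpers are assumptions for illustration; a real bench would fill `current` from its power analyzer, linker map, and timing traces. `battery_life_hours` is a deliberately simple duty-cycle model (it ignores self-discharge and temperature) that shows why small utilization changes matter.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Budget:
    """Allowed growth over the baseline for one metric (assumed policy; tune per product)."""
    max_increase_pct: float


def find_regressions(baseline: dict[str, float], current: dict[str, float],
                     budgets: dict[str, Budget]) -> list[str]:
    """Flag metrics that grew beyond budget. Assumes lower is better and baselines are > 0."""
    failures: list[str] = []
    for name, budget in sorted(budgets.items()):
        if name not in current:
            failures.append(f"{name}: not reported by this run")
            continue
        base = baseline[name]
        growth_pct = (current[name] - base) / base * 100.0
        if growth_pct > budget.max_increase_pct:
            failures.append(f"{name}: +{growth_pct:.1f}% (budget {budget.max_increase_pct:.1f}%)")
    return failures


def battery_life_hours(capacity_mah: float, active_ma: float, sleep_ma: float,
                       duty: float) -> float:
    """Estimate runtime from the duty-cycle-weighted average current draw."""
    avg_ma = duty * active_ma + (1.0 - duty) * sleep_ma
    return capacity_mah / avg_ma


if __name__ == "__main__":
    baseline = {"avg_current_ma": 0.11, "ram_bytes": 20480, "isr_latency_us": 12.0}
    current = {"avg_current_ma": 0.21, "ram_bytes": 20600, "isr_latency_us": 12.1}
    budgets = {"avg_current_ma": Budget(5.0), "ram_bytes": Budget(2.0),
               "isr_latency_us": Budget(10.0)}
    print(find_regressions(baseline, current, budgets))
    for duty in (0.01, 0.02):
        print(f"duty {duty:.0%}: {battery_life_hours(1000, 10, 0.01, duty):,.0f} h")
```

With these hypothetical figures (a 1,000 mAh cell, 10 mA active and 0.01 mA sleep current), raising the duty cycle from 1% to 2% cuts the estimate from about 9,100 hours to about 4,770 hours, which is why average current deserves a tight budget.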

Strategies for an Incremental Organizational Transition

Shifting an entire engineering culture is a marathon rather than a sprint, and success depends on a measured approach to modernization. The first step for any team is to establish an automated build baseline: automating compilation and generating a Software Bill of Materials (SBOM) for every release, so that every third-party dependency is visible.

Once the build is stable, the focus can shift toward connecting siloed departments. Breaking down the walls between firmware engineers, testers, and security experts makes compliance a shared responsibility from day one rather than a final hurdle cleared by a separate team.

In the embedded world, teams must also prioritize predictability over velocity. Web-oriented DevOps often emphasizes raw deployment speed, but the goal for firmware is complete visibility into the product's state at any given moment. That predictability supports more confident decision-making and reduces the chaos often associated with complex hardware launches.

Finally, the documentation pipeline must evolve from manual report writing to automated evidence collection. When audit logs and traceability reports are generated as standard pipeline outputs, the organization moves toward continuous compliance, and the engineering team can stay focused on innovation while the pipeline handles much of the heavy lifting of regulatory alignment.
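As a sketch of the build-baseline step, the function below emits a minimal SBOM in the CycloneDX JSON format. Only a small subset of CycloneDX fields is used (`bomFormat`, `specVersion`, `serialNumber`, `version`, `components`); the `Dependency` record, the generic package URLs, the example dependency names, and deriving the serial number from a release ID are all assumptions. In practice the dependency list would come from the build system or a dedicated SBOM generator rather than being written by hand.

```python
import json
import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    """Hypothetical record for one third-party component linked into the firmware."""
    name: str
    version: str
    license_id: str  # SPDX license identifier, e.g. "MIT"


def build_sbom(release_id: str, deps: list[Dependency]) -> dict:
    """Assemble a minimal CycloneDX-style SBOM for one firmware release."""
    components = [
        {
            "type": "library",
            "name": dep.name,
            "version": dep.version,
            "purl": f"pkg:generic/{dep.name}@{dep.version}",
            "licenses": [{"license": {"id": dep.license_id}}],
        }
        # Sorting keeps the output stable, so identical inputs yield identical SBOMs.
        for dep in sorted(deps, key=lambda d: (d.name, d.version))
    ]
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.5",
        # uuid5 is deterministic: the same release ID always gives the same serial number.
        "serialNumber": f"urn:uuid:{uuid.uuid5(uuid.NAMESPACE_URL, release_id)}",
        "version": 1,
        "components": components,
    }


if __name__ == "__main__":
    deps = [
        Dependency("vendor-hal", "2.1.0", "BSD-3-Clause"),
        Dependency("app-crypto", "0.9.1", "Apache-2.0"),
    ]
    print(json.dumps(build_sbom("firmware-1.0.0", deps), indent=2))
```

Publishing this file alongside every release image makes the dependency inventory part of the pipeline's standard outputs rather than a document assembled at audit time.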

As the industry moved toward a fully integrated model, the focus shifted to self-healing pipelines and advanced hardware abstraction layers. Engineering leads prioritized modular test frameworks that worked across different processor architectures, which simplified the transition between hardware generations. Standardized metadata for every software component allowed compliance packages to be assembled quickly, significantly reducing the time required for safety certification. Organizations that embraced these automated workflows reported a drastic reduction in late-stage integration errors, and secure remote diagnostics became a standard feature of new products. The final step involved training teams to treat infrastructure as code, so that the entire development environment could be replicated with a single command. Together, these efforts narrowed the gap between software agility and hardware reliability and set a new standard for resilient engineering.