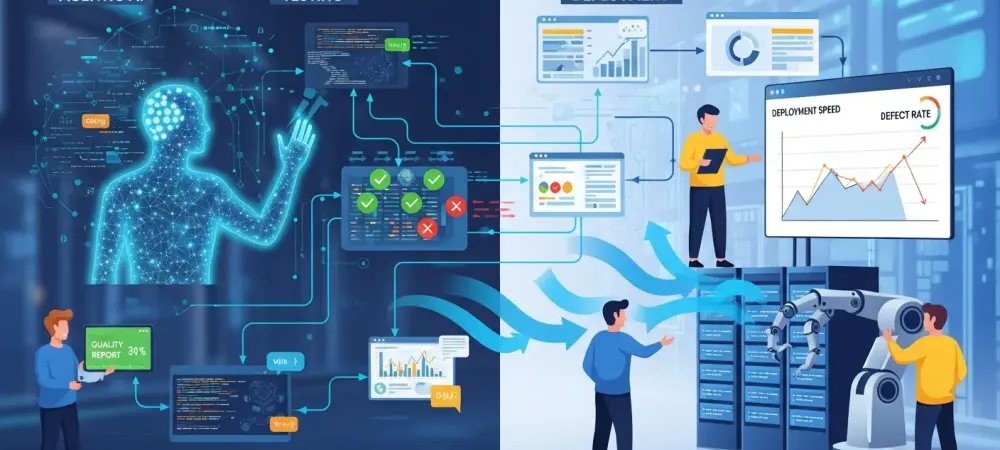

The shift from traditional automated testing to a largely autonomous software development lifecycle may prove to be one of the most significant advances in engineering productivity since the widespread adoption of the cloud. The traditional Continuous Integration and Continuous Deployment (CI/CD) pipeline is evolving into a more autonomous ecosystem that no longer requires constant human supervision. Standard automation has streamlined testing and deployment for years, but it still relies heavily on manual intervention to resolve the issues it uncovers during the build process. The integration of agentic AI aims to close this gap, moving beyond the identification of defects toward a self-healing infrastructure. This enables true “shift-left” strategies, in which problems are caught and autonomously corrected at the earliest possible stages of the development lifecycle, reducing the technical debt that builds up when fixes are deferred.

Bridging the Gap Between Detection and Remediation

The Human Bottleneck: Navigating Modern Pipelines

The current DevOps landscape is often hindered by a persistent “human bottleneck” in which developers spend long hours triaging alerts and writing repetitive fixes for flagged vulnerabilities. Although modern tools can easily spot a buffer overflow or a coding standard violation, they have historically lacked the agency to correct these errors without a human writing and submitting the fix. This creates a significant lag in the release cycle, as engineering teams become bogged down by the volume of maintenance work required to keep a growing codebase healthy and secure. Instead of focusing on new features, senior engineers are frequently relegated to reviewing routine pull requests that address findings from static analysis tools. This manual triage not only slows delivery but also introduces the risk of human error during remediation, especially when teams are under pressure to meet tight deadlines.

Autonomous Interventions: Achieving True Shift-Left Strategies

By integrating agentic AI into the development workflow, organizations are beginning to realize the long-promised potential of self-healing software systems that operate without fatigue. These autonomous agents do not merely report a failure; they analyze the root cause, explore the surrounding codebase for context, and propose verified changes that align with project standards. This capability turns the “shift-left” philosophy from a theoretical goal into a practical reality, because code quality is maintained continuously rather than checked only at specific milestones. When a security flaw is detected at the initial commit, an agentic system can immediately draft a patch and verify it against the existing test suite before a human developer even reviews the ticket. This proactive approach lowers the cost of bug fixes, since resolving an issue during development is generally far cheaper than addressing it after a full production deployment.

Harnessing Agentic Reasoning for Technical Excellence

Test-Time Scaling: Implementing Context Protocols

Agentic AI brings the concept of “test-time scaling” into the pipeline: a model is allowed to spend more computation at inference time, iterating on a complex logic problem until it reaches a viable solution. Unlike traditional generative tools that provide a single static suggestion, agentic systems can use the Model Context Protocol (MCP), an open protocol for connecting models to external tools and data sources, to interact directly with specialized software analysis tools and local environments. This allows the AI to function as the “brain” of the pipeline: it can independently draft a code fix, execute a comprehensive test suite to verify the result, and refine its approach based on the error logs it receives. This iterative process mimics the workflow of a human engineer but operates at much higher velocity and scale. By scaling computation during inference, the agent can explore multiple candidate paths for a single bug fix, which makes it more likely that the final solution is not just functional but also sound in performance and maintainability.
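The draft, test, and refine cycle described above can be sketched as a small loop. This is a minimal illustration under stated assumptions, not a reference implementation: `repair_loop`, `Attempt`, `propose_fix`, and `run_tests` are hypothetical names, the model and the test runner are replaced by toy stand-ins, and `max_attempts` stands in for the inference-time compute budget.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Attempt:
    patch: str
    passed: bool
    log: str


def repair_loop(
    propose_fix: Callable[[str, list[Attempt]], str],
    run_tests: Callable[[str], tuple[bool, str]],
    bug_report: str,
    max_attempts: int = 5,
) -> tuple[Optional[str], list[Attempt]]:
    """Draft a patch, test it, and retry with earlier failures as feedback.

    max_attempts is the budget: a larger value lets the agent spend more
    inference-time compute on the same bug.
    """
    history: list[Attempt] = []
    for _ in range(max_attempts):
        patch = propose_fix(bug_report, history)  # the model sees past failures
        passed, log = run_tests(patch)            # deterministic verification
        history.append(Attempt(patch, passed, log))
        if passed:
            return patch, history
    return None, history  # budget exhausted: hand the bug back to a human


# Toy stand-ins (assumptions) so the sketch runs without a model or CI system.
def toy_propose_fix(bug_report: str, history: list[Attempt]) -> str:
    candidates = ["return a - b", "return a * b", "return a + b"]
    return candidates[min(len(history), len(candidates) - 1)]


def toy_run_tests(patch: str) -> tuple[bool, str]:
    namespace: dict = {}
    exec(f"def add(a, b):\n    {patch}", namespace)
    result = namespace["add"](2, 3)
    if result == 5:
        return True, "ok"
    return False, f"add(2, 3) returned {result}, expected 5"


patch, history = repair_loop(toy_propose_fix, toy_run_tests, "add() is wrong")
```

In a real pipeline the two callables would wrap a model call and a test runner; the loop itself stays the same, and raising `max_attempts` is the simplest form of spending more compute per bug.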

Reliable Automation: Integrating AI With Proven Guardrails

This transition from static automation to dynamic agents requires a sophisticated synergy between artificial intelligence and the established software guardrails that have protected codebases for decades. To prevent common AI pitfalls such as hallucinations or the introduction of logical regressions, these agents must operate within a closed loop where unit tests and static analysis tools serve as the ultimate arbiters of truth. By embedding agentic AI within these rigorous frameworks, organizations ensure that every autonomous change is strictly verified against existing security standards and functional requirements before it reaches the main branch. The AI acts as the creative engine for problem-solving, while the CI/CD pipeline provides the deterministic environment that validates those solutions. This symbiotic relationship creates a robust safety net, allowing teams to trust autonomous systems with critical infrastructure changes while maintaining the high reliability required for enterprise-grade software.
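One way to picture this closed loop is a gate that accepts an agent's patch only when every deterministic check passes. The sketch below is illustrative only: `gate`, `CheckResult`, the `parse_int` example, and both toy checks are assumptions made for this example, and a real pipeline would call its actual static analyzer and test runner in a sandbox instead.

```python
import ast
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CheckResult:
    name: str
    passed: bool
    detail: str = ""


Check = Callable[[str], CheckResult]


def gate(source: str, checks: list[Check]) -> tuple[bool, list[CheckResult]]:
    """Run every check; accept the change only if all of them pass."""
    results = [check(source) for check in checks]
    return all(r.passed for r in results), results


def no_eval_check(source: str) -> CheckResult:
    """Toy static-analysis rule: reject any direct call to eval()."""
    try:
        tree = ast.parse(source)
    except SyntaxError as exc:
        return CheckResult("static-analysis", False, f"syntax error: {exc.msg}")
    for node in ast.walk(tree):
        is_call = isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
        if is_call and node.func.id == "eval":
            return CheckResult("static-analysis", False, "call to eval() found")
    return CheckResult("static-analysis", True)


def unit_test_check(source: str) -> CheckResult:
    """Toy unit test: the patched parse_int must turn '42' into 42."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # a real gate would run this in a sandbox
        ok = namespace["parse_int"]("42") == 42
    except Exception as exc:
        return CheckResult("unit-tests", False, repr(exc))
    return CheckResult("unit-tests", ok, "" if ok else "parse_int('42') != 42")


unsafe_patch = "def parse_int(text):\n    return eval(text)\n"
safe_patch = "def parse_int(text):\n    return int(text)\n"

checks = [no_eval_check, unit_test_check]
unsafe_ok, unsafe_results = gate(unsafe_patch, checks)
safe_ok, safe_results = gate(safe_patch, checks)
```

The unsafe patch passes the unit test but fails the static rule, so the gate rejects it. That is the point of pairing the two kinds of check: a functionally correct change can still violate a security standard.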

Governance and the Evolution of Engineering Roles

Strategic Deployment: Risk-Managed Integration

Adopting agentic AI is most effective when approached as a goal-driven, incremental process that gradually builds trust within engineering teams through consistent and measurable results. Organizations typically begin this journey by assigning agents to low-risk tasks, such as correcting minor deviations from style guides or patching well-documented security flaws that follow predictable patterns. As the system proves its reliability over several release cycles, its responsibilities can expand to include more complex duties, such as generating comprehensive unit tests to meet specific code coverage targets or monitoring for subtle performance regressions. This phased implementation allows developers to familiarize themselves with the agent’s behavior and ensures that the AI’s logic aligns with the specific architectural nuances of the project. By setting clear coverage goals, management can treat the agent as a dynamic resource that actively seeks out and fills gaps in the testing suite, ensuring the software remains resilient.
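The phased expansion of responsibilities can be expressed as a simple policy that maps an agent's track record to the riskiest task tier it may take on. This is a rough sketch: the tiers, the `TrackRecord` fields, and every threshold are hypothetical values chosen for illustration, not recommendations.

```python
from dataclasses import dataclass
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1     # style-guide fixes, well-documented security patches
    MEDIUM = 2  # generating unit tests toward a coverage target
    HIGH = 3    # performance-regression work and other complex changes


@dataclass(frozen=True)
class TrackRecord:
    release_cycles: int
    changes_merged: int
    changes_reverted: int

    @property
    def revert_rate(self) -> float:
        # With no merged changes there is no evidence, so treat as untrusted.
        if self.changes_merged == 0:
            return 1.0
        return self.changes_reverted / self.changes_merged


def max_allowed_risk(record: TrackRecord) -> Risk:
    """Return the riskiest tier the agent has earned (assumed thresholds)."""
    if record.release_cycles >= 6 and record.revert_rate <= 0.01:
        return Risk.HIGH
    if record.release_cycles >= 3 and record.revert_rate <= 0.05:
        return Risk.MEDIUM
    return Risk.LOW


def may_assign(task_risk: Risk, record: TrackRecord) -> bool:
    """True when the task's tier is within what the agent has earned."""
    return task_risk <= max_allowed_risk(record)


new_agent = TrackRecord(release_cycles=0, changes_merged=0, changes_reverted=0)
proven_agent = TrackRecord(release_cycles=4, changes_merged=200, changes_reverted=6)
```

Under these assumed thresholds, `new_agent` is limited to low-risk work, while `proven_agent` (four release cycles, a 3% revert rate) may also take medium-risk tasks such as test generation but not yet high-risk ones.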

Privacy and Security: Prioritizing Agentic Infrastructure

As enterprises scale their AI capabilities from 2026 to 2028, concerns about data privacy and the protection of proprietary source code are becoming paramount for security officers. The prevailing trend is a move away from public, cloud-hosted models toward on-premises or private cloud environments that keep intellectual property within the corporate firewall. This localized infrastructure lets companies use agentic workflows while maintaining strict control over their most sensitive data and meeting rigorous compliance standards. Furthermore, by hosting these models internally, organizations can fine-tune them on their own historical data and coding patterns, producing agents that are better attuned to the company’s specific technical requirements. This move toward sovereign AI infrastructure helps ensure that the productivity gains of autonomous DevOps do not come at the expense of corporate security or expose trade secrets to third-party providers.

Strategic Oversight: Shifting Management Roles

Ultimately, the role of DevOps management shifts from the daily supervision of individual code changes to the high-level monitoring of quality trends and overall system performance metrics. Through dashboards and reporting tools, managers track how effectively agents are meeting organizational goals such as security compliance and test coverage. This shift can increase productivity and reduce operational costs by turning the CI/CD pipeline into a self-optimizing environment that delivers high-quality software at scale. To implement these systems successfully, organizations need clear audit trails that maintain transparency and accountability for every autonomous modification. Moving forward, engineering leaders should prioritize training their staff to oversee these agentic systems, so that human expertise remains focused on high-level architecture and strategic decision-making. By embracing this autonomous future, teams can reach a more sustainable development pace in which technical excellence is an inherent property of the pipeline.
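An audit trail for autonomous modifications can be as simple as an append-only log in which each entry is hashed together with its predecessor, so that later tampering is detectable. The following is a minimal sketch under stated assumptions: `AuditLog`, its field names, and the sample agent and commit identifiers are all hypothetical, and a production system would also need durable storage and access control.

```python
import hashlib
import json
from dataclasses import dataclass, field

FIELDS = ("agent", "commit", "action", "checks_passed")


def _digest(payload: dict, prev_hash: str) -> str:
    """Hash an entry's payload together with the previous entry's hash."""
    body = json.dumps(payload, sort_keys=True) + prev_hash
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, agent: str, commit: str, action: str, checks_passed: bool) -> dict:
        """Append one autonomous modification to the chain."""
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        payload = {
            "agent": agent,
            "commit": commit,
            "action": action,
            "checks_passed": checks_passed,
        }
        entry = {**payload, "prev_hash": prev_hash, "hash": _digest(payload, prev_hash)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = ""
        for entry in self.entries:
            payload = {key: entry[key] for key in FIELDS}
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(payload, prev_hash):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("style-agent", "abc123", "reformatted imports", True)
log.record("security-agent", "def456", "patched input validation", True)
intact = log.verify()
```

Because each hash covers the previous one, rewriting an old entry invalidates that entry and everything after it, which gives reviewers a cheap integrity check on the agent's history.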