The transition from experimental artificial intelligence pilots to integrated corporate infrastructure has forced modern enterprises to abandon the pursuit of raw power in favor of specialized, cost-effective performance metrics that align with specific operational goals. Success in the current landscape no longer depends on selecting the model with the highest parameter count, but on finding the architecture that balances computational efficiency with task-specific accuracy. This shift reflects a broader movement toward architectural maturity, in which decision-makers treat large language models like any other critical piece of industrial equipment: subject to rigorous due diligence, performance auditing, and a clear understanding of the total cost of ownership over several years.

Technical Performance and Resource Allocation

Balancing Hardware Constraints and Processing Speed

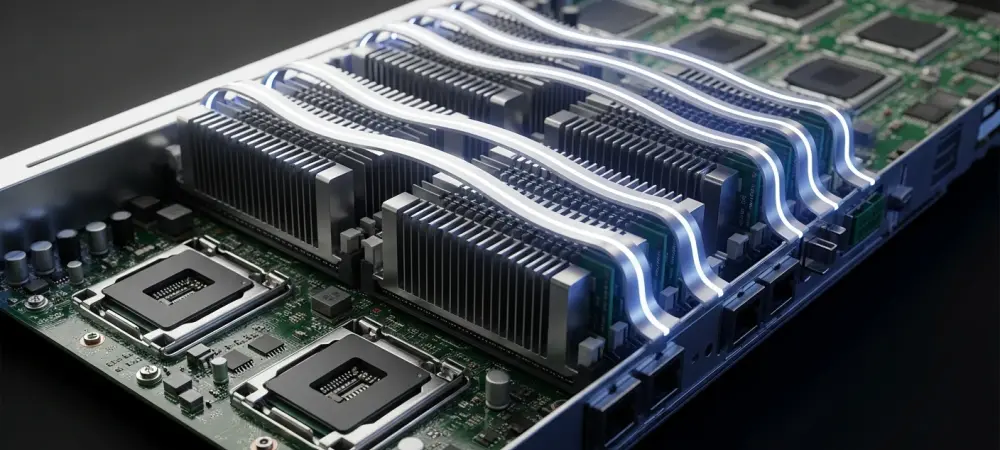

The physical reality of hosting and running large-scale models remains one of the most significant barriers to entry for organizations looking to maintain localized control over their data. Consequently, developers are increasingly turning to quantized models and smaller, distilled architectures that offer roughly eighty percent of the performance at a fraction of the memory footprint. This allows smoother deployment on existing high-performance computing clusters without constant hardware expansion, turning the “chore” of infrastructure management into a predictable operational expense.
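As a rough illustration of why quantization shrinks the memory footprint, the sketch below estimates the space needed to store model weights at several precisions. The 7-billion-parameter size and the helper name `weight_memory_gb` are illustrative assumptions, and the estimate covers weights only: activations, the key-value cache, and quantization metadata would add to it in a real deployment.

```python
# Back-of-the-envelope estimate of the memory needed to hold model weights.
# Assumptions: weights only (no activations or KV cache), 1 GB = 10**9 bytes.

BITS_PER_WEIGHT = {"fp32": 32, "fp16": 16, "int8": 8, "int4": 4}


def weight_memory_gb(num_params: int, precision: str) -> float:
    """Return the approximate weight storage in gigabytes."""
    bits = BITS_PER_WEIGHT[precision]
    return num_params * bits / 8 / 1e9


if __name__ == "__main__":
    params = 7_000_000_000  # hypothetical 7B-parameter model
    for precision in BITS_PER_WEIGHT:
        print(f"{precision}: {weight_memory_gb(params, precision):.1f} GB")
```

Under these assumptions, halving the bits per weight halves the storage requirement, which is why a quantized model can fit on hardware that the full-precision version would exceed.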

Beyond the raw hardware requirements, the definition of performance has branched into several distinct metrics, most notably latency (how long a single request takes to return) and throughput (how much total work the system completes in a given time). Modern procurement strategies now map specific business functions to these performance profiles, reserving low-latency models for user-facing tasks while high-throughput, batch-processing engines handle the heavy lifting of data transformation. This tiered approach prevents over-provisioning and directs computational resources to where they provide the most tangible value.
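The tiered mapping described above could be sketched as a simple routing rule. Everything here is hypothetical: the `Workload` fields, the tier names, and the two-second cutoff are placeholders that an organization would replace with its own service-level targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    user_facing: bool     # is a person waiting on the response?
    max_latency_ms: int   # acceptable time for a single response


def choose_tier(workload: Workload, interactive_cutoff_ms: int = 2000) -> str:
    """Send interactive, tight-deadline work to the low-latency tier and
    everything else to the batch tier (cutoff is an assumed default)."""
    if workload.user_facing and workload.max_latency_ms <= interactive_cutoff_ms:
        return "low-latency"
    return "high-throughput-batch"


if __name__ == "__main__":
    jobs = [
        Workload("support chat", user_facing=True, max_latency_ms=1500),
        Workload("nightly document transformation", user_facing=False,
                 max_latency_ms=3_600_000),
    ]
    for job in jobs:
        print(f"{job.name} -> {choose_tier(job)}")
```

Keeping the rule explicit like this makes over-provisioning visible: any workload routed to the low-latency tier has to justify it with a real response-time requirement.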

Understanding Contextual Memory and Reasoning

The functional utility of a language model is increasingly determined by its context window, which functions as the system’s active short-term memory during a single interaction. Organizations must carefully evaluate whether a task truly requires a massive context window or if the same results can be achieved through more efficient data retrieval methods, as paying for unused memory capacity is a common pitfall in early-stage artificial intelligence adoption.

Coupled with memory capacity is the model’s ability to engage in multi-stage reasoning, a feature that separates basic text generators from sophisticated problem-solving agents. The trade-off between “fast” intuitive responses and “slow” deliberative reasoning is a central theme in model selection for 2026. Operational stability also remains a concern, as even the most advanced models can suffer from occasional divergence or “statistical madness” when pushed beyond their training boundaries. A model that stays grounded and follows instructions consistently is often more valuable in production than one with a higher score on a theoretical reasoning benchmark, which leads many firms to prioritize stability and predictability over raw intellectual flexibility.

Data Integration and Specialized Functionality

Managing Data Freshness and Model Customization

One of the primary challenges in deploying large language models is the inherent limitation of their knowledge cutoff, the point at which their training concluded and their native understanding of the world froze. To work around this, many organizations have turned to Retrieval-Augmented Generation (RAG), a technique that connects the model to live, internal databases so that current information can be supplied at query time. This approach allows the foundation model to act as the reasoning engine while the external database serves as the library of record.
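The retrieval step described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: it ranks documents with a simple bag-of-words cosine similarity instead of the learned embeddings and vector database a production system would likely use, the sample documents are invented, and the resulting prompt would still need to be sent to a model, which is not shown.

```python
import math
import re
from collections import Counter
from typing import List


def tokenize(text: str) -> Counter:
    """Lowercase the text and count its alphanumeric tokens."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(documents, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: List[str], k: int = 1) -> str:
    """Place the retrieved text in front of the question for the model."""
    context = "\n".join(retrieve(query, documents, k))
    return (f"Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {query}")


if __name__ == "__main__":
    docs = [  # invented stand-ins for an internal knowledge base
        "The travel reimbursement limit is 200 dollars per day.",
        "Office hours are 9 to 5 on weekdays.",
    ]
    print(build_prompt("What is the travel reimbursement limit?", docs))
```

The division of labor matches the text: the document store holds the current facts, and the model only reasons over whatever the retrieval step hands it.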

For specialized industries, the general-purpose nature of base models is often insufficient, leading to a surge in the use of fine-tuning techniques to instill domain-specific expertise. By focusing on customization, companies can often achieve better results with a smaller, more efficient fine-tuned model than with a massive, untuned alternative. This strategy not only improves the quality of the output but also creates an intellectual property asset tailored to the organization’s competitive advantages and operational workflows.

Expanding Beyond Text with Multimodality

The current landscape of enterprise artificial intelligence has moved far beyond simple text-to-text interactions, with multimodality becoming a standard requirement for comprehensive workflow automation. A model’s ability to “see” and understand visual inputs directly, rather than relying on a separate optical character recognition layer, drastically reduces error rates and simplifies the overall technical stack.

Beyond visual parsing, the rise of agentic workflows represents the next frontier in model utility, where the AI acts as an autonomous agent capable of executing multi-step tasks across different software platforms. This requires a model that not only understands language but also excels at “tool use” or function calling, allowing it to interact with external APIs, search the internet for missing data, or update entries in a corporate database. When a model is trusted to act on its own, the choice between one that is “sycophantic” and aims to please the user and one that is “Socratic” and asks clarifying questions becomes a subtle but critical distinction.
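The function-calling pattern mentioned above could look roughly like the following sketch. It assumes the model emits a JSON object with `name` and `arguments` fields; that format, the `tool` decorator, and the `update_record` function are all hypothetical, since each provider defines its own schema.

```python
import json
from typing import Any, Callable, Dict

# Registry of functions the model is allowed to call (hypothetical design).
TOOLS: Dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function under its own name so it can be dispatched."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def update_record(record_id: int, status: str) -> str:
    # Stand-in for a real database write.
    return f"record {record_id} set to {status}"


def dispatch(model_output: str) -> Any:
    """Parse the model's structured request and run the matching tool.
    Unknown tool names are rejected rather than guessed at."""
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call["arguments"])


if __name__ == "__main__":
    request = ('{"name": "update_record", '
               '"arguments": {"record_id": 42, "status": "closed"}}')
    print(dispatch(request))
```

Restricting execution to an explicit registry is one way to keep an autonomous agent inside the boundaries the organization has approved.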

Governance, Ethics, and Long-Term Strategy

Addressing Legal Risks and Compliance Standards

As the legal framework surrounding artificial intelligence becomes more defined, the provenance of training data has shifted from a theoretical concern to a major corporate liability. This has led to a market preference for model providers that can offer detailed audits of their training sets and provide contractual indemnification for their users.

In addition to intellectual property concerns, strict adherence to global regulatory standards like GDPR, HIPAA, and emerging AI-specific laws is non-negotiable for firms in the healthcare, finance, and legal sectors. Compliance often dictates where the model must physically reside and how it processes personally identifiable information. Transparency requirements also mean that some businesses must choose models that can “explain” their reasoning in a human-readable format, providing an audit trail for automated decisions.

Evaluating Sustainability and Model Portability

The environmental footprint of artificial intelligence has moved to the forefront of corporate social responsibility discussions, as the immense power required for both training and inference continues to grow. Forward-thinking companies are now evaluating models based on their carbon efficiency, often preferring those that are optimized for “green” processing. The energy cost per million tokens is quickly becoming a standard financial metric in the evaluation of high-volume AI deployments, reflecting a mature understanding of the relationship between digital technology and physical resources.
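One way to arrive at an energy cost per million tokens is to combine average power draw, sustained generation speed, and the electricity price. The sketch below assumes those three inputs are known and constant; the function name and the sample figures are illustrative placeholders, not measurements of any real system, and the calculation leaves out cooling and other facility overhead.

```python
def energy_cost_per_million_tokens(avg_power_watts: float,
                                   tokens_per_second: float,
                                   price_per_kwh: float) -> float:
    """Estimate the electricity cost of generating one million tokens.

    Assumes constant power draw and throughput; ignores cooling and
    other data-center overhead.
    """
    seconds = 1_000_000 / tokens_per_second
    kwh = avg_power_watts * seconds / 3_600_000  # watt-seconds (joules) to kWh
    return kwh * price_per_kwh


if __name__ == "__main__":
    # Placeholder inputs: 720 W draw, 1,000 tokens/s, 0.10 per kWh.
    cost = energy_cost_per_million_tokens(720, 1000, 0.10)
    print(f"{cost:.4f} per million tokens")
```

With these placeholder inputs the hardware runs for 1,000 seconds and consumes 0.2 kWh, so doubling throughput at the same power draw would halve the energy cost per token.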

Finally, the strategic choice between proprietary and open-source models involves a careful consideration of long-term portability and vendor lock-in. Unlike proprietary services, open-source models with publicly available weights offer a “guaranteed lifespan,” as they can be hosted locally and maintained indefinitely even if the original developer ceases operations. This portability is especially critical for projects with a multi-year horizon, where the cost of migrating to a new model architecture could be prohibitive.

Strategic Integration and Future Considerations

The assessment of large language models for enterprise use was historically dominated by a focus on raw reasoning scores and linguistic fluency. However, the practical application of these technologies across diverse industries has shown that operational success is found at the intersection of technical performance, legal safety, and resource efficiency. Organizations that prioritized “best-in-class” benchmarks often found themselves struggling with unsustainable hardware costs and unpredictable model behavior in production.

A look back at the deployment strategies used during the initial expansion phases makes clear that the most successful implementations were those that maintained a high degree of architectural flexibility. By avoiding total dependence on a single proprietary provider and instead opting for a mix of specialized open-source and high-performance commercial models, firms protected themselves against market volatility and service interruptions. Ultimately, the maturity of the AI sector was marked by this transition from experimentation to specialized utility, where the “feel” and “quirks” of a model were as important as its parameter count. Decision-makers who treated the selection process with the same rigor as any other critical infrastructure procurement were the ones who successfully turned artificial intelligence into a reliable driver of business value.