Intel has officially responded to consumer concerns about stability issues with its latest high-end processors. Users had been experiencing system crashes, especially during gaming, which prompted a wave of complaints from affected owners. Intel’s acknowledgment comes after early communication on the issue went mostly to hardware partners, leaving some consumers in the dark.

Intel’s Official Response

Understanding the Stability Problems

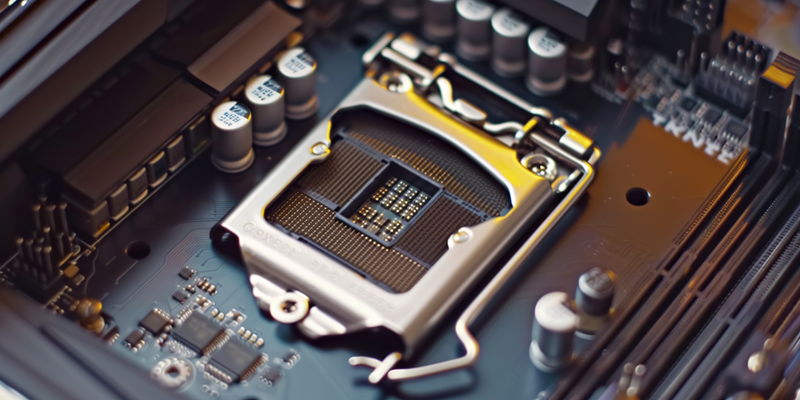

Intel’s investigation into these stability issues found that motherboard manufacturers had implemented ‘Intel Baseline Profile’ BIOS settings that do not match Intel’s ‘Default Settings’. According to Intel, these baseline profiles are remnants of previous power delivery guidelines and restrict power excessively. A CPU limited in this way may not operate within its designed power and thermal envelope, which hurts performance and can make the system unstable.

Intel’s official guidance now steers users away from the motherboard makers’ baseline settings, particularly for its Raptor Lake CPUs. With ‘Intel Default Settings’, users can expect a balance of stable performance and efficient power usage. These settings are calibrated to match the capabilities of the motherboard, so that the processor operates as intended.

Recommended BIOS Configurations

For enthusiasts with high-end motherboards designed to handle increased power, Intel is advising more customized configurations. Users are encouraged to select the ‘highest power delivery profile’ that is compatible with their specific hardware. This allows systems to operate with enhanced performance features, although it does come with increased power demands.

Intel’s advice also distinguishes between motherboard tiers and levels of technical expertise. For average users with standard motherboards, Intel’s recommended defaults are the safest choice for stable system performance. Power users with premium motherboards can enable higher performance settings, at the cost of higher energy consumption. Those who prefer not to modify BIOS settings can rely on Intel’s default options for stable operation.
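The rule of choosing the highest power delivery profile a board supports can be sketched in a few lines of Python. This is only an illustration: the profile labels and the `pick_profile` function are hypothetical placeholders, not Intel’s terminology or any vendor’s BIOS interface, and actual menu names vary between manufacturers.

```python
from typing import Sequence

# Hypothetical profile labels, ordered from lowest to highest power delivery.
# These are placeholders for illustration; real BIOS menus use vendor-specific names.
PROFILE_ORDER = ("standard", "performance", "extreme")


def pick_profile(board_supported: Sequence[str],
                 order: Sequence[str] = PROFILE_ORDER) -> str:
    """Return the highest-ranked profile that the board reports as supported."""
    supported = set(board_supported)
    # Walk from the highest profile down and stop at the first supported one.
    for name in reversed(order):
        if name in supported:
            return name
    raise ValueError("board supports none of the known profiles")
```

Under these assumptions, a board that lists only the lowest profile gets that profile, while a premium board that lists all of them gets the highest one, which mirrors the split between standard and high-end motherboards described above.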

The Importance of Proper BIOS Configurations

For Standard Motherboard Users

For consumers who have invested in Intel’s high-end CPUs, understanding BIOS configuration is crucial, because BIOS settings play a pivotal role in overall system stability and performance. Following Intel’s guidance, standard motherboard users are advised to use the ‘Intel Default Settings’. These settings are designed to provide a stable and reliable computing experience without requiring complex adjustments to the system’s BIOS.

Users who are not familiar with BIOS configuration should know that incorrect settings can lead to system instability and crashes, particularly under high-load scenarios like gaming or content creation. Intel’s recommendation to adhere to its default settings helps ensure that its processors function within their intended parameters, avoiding the power limitations that were inadvertently introduced by the baseline profiles from motherboard manufacturers.

For Advanced Motherboard Users

Advanced users with high-end motherboards that can support higher power loads have different considerations. Intel suggests exploring more tailored settings that take advantage of what premium motherboards can deliver. These customized settings offer the potential for better performance, with the trade-off that power consumption may increase.

Intel’s guidance aims to cater to both segments of its user base, those seeking maximum stability and those aiming for higher performance, while addressing the compatibility between CPUs and motherboards to avoid undue power restrictions. Proper configuration based on the capabilities of both the motherboard and the CPU remains key to balancing system stability and performance.