Artificial intelligence (AI) and deep learning have emerged as driving forces behind technological innovation in sectors ranging from autonomous vehicles to natural language processing. Research and development in these fields have advanced rapidly. However, translating that progress into scalable, real-world applications presents a new set of challenges. Latency issues, computational bottlenecks, and precision errors often hinder the practical deployment of AI models, which makes optimizing AI infrastructure and development workflows pivotal to efficient deployment.

Key Challenges in AI Deployment

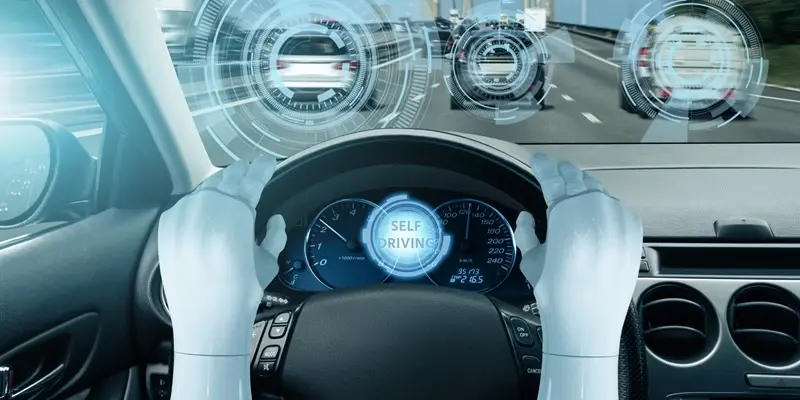

AI applications, particularly in critical sectors like autonomous vehicles, demand real-time decision-making capabilities. This requirement often brings latency issues to the forefront, where even a few milliseconds of delay in AI inference can disrupt the functionality and reliability of the application. Slow inference times can impede the ability of AI models to process vast amounts of data swiftly, making it difficult to meet the demands of real-time operations. Moreover, computational bottlenecks, which occur when the hardware and software pipelines are not adequately optimized, further exacerbate the problem, leading to slower processing speeds.
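To make the latency concern concrete, the following is a minimal sketch of how per-call inference latency might be measured and summarized. The names `measure_latency_ms`, `summarize`, and `fake_model` are hypothetical and introduced only for illustration; `fake_model` is a stand-in for a real inference call, not anything described in this article.

```python
import statistics
import time


def measure_latency_ms(infer, inputs, warmup=5):
    """Time each call to `infer` and return per-call latencies in milliseconds."""
    for x in inputs[:warmup]:
        infer(x)  # warm-up calls are not timed
    latencies = []
    for x in inputs:
        start = time.perf_counter()
        infer(x)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def summarize(latencies_ms):
    """Return the median and an approximate 99th-percentile latency."""
    ordered = sorted(latencies_ms)
    p99_index = min(len(ordered) - 1, int(0.99 * len(ordered)))
    return {"p50_ms": statistics.median(ordered), "p99_ms": ordered[p99_index]}


def fake_model(x):
    # Hypothetical stand-in for a real inference call.
    return sum(i * i for i in range(1000)) + x


stats = summarize(measure_latency_ms(fake_model, list(range(200))))
print(stats)
```

Reporting a tail percentile alongside the median matters for real-time systems, because occasional slow calls are the ones that miss a deadline even when the typical call is fast.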

Another significant challenge is maintaining model precision. Reducing numerical precision, for example by shifting from FP32 to FP16, can introduce accuracy errors that then require extensive validation and debugging. This trade-off between computational efficiency and precision creates a complex scenario for developers and engineers. Keeping models accurate while making them computationally efficient requires meticulous optimization and robust validation protocols, which often consume considerable time and resources.
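The precision trade-off can be illustrated with a small sketch that rounds values to half precision and checks the error against a tolerance. It uses only the standard library's `struct` module (format code `e` is IEEE 754 half precision). The sample weights and the `TOLERANCE` threshold are assumptions chosen for illustration; a real validation protocol would compare model outputs, not individual weights.

```python
import struct


def to_fp16(value):
    """Round a Python float to the nearest IEEE 754 half-precision value."""
    return struct.unpack("<e", struct.pack("<e", value))[0]


def max_relative_error(values):
    """Largest relative error introduced by rounding each value to FP16."""
    return max(abs(to_fp16(v) - v) / abs(v) for v in values if v != 0.0)


# Illustrative values only; not taken from any real model.
weights = [0.1, 0.333333, 1.0001, 123.456, 2049.0]
for w in weights:
    print(w, "->", to_fp16(w))

TOLERANCE = 1e-3  # assumed acceptance threshold for this sketch
print("within tolerance:", max_relative_error(weights) <= TOLERANCE)
```

Half precision keeps roughly three significant decimal digits, so small differences between weights can vanish after rounding. That is why a precision change is usually paired with an explicit accuracy check.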

Expertise in AI Optimization

Srinidhi Goud Myadaboyina stands out as an expert in addressing the challenges associated with AI deployment. Through his work with industry giants like Cruise LLC, Amazon AWS, and Cisco, Myadaboyina has demonstrated his prowess in optimizing AI infrastructure. His emphasis on enhancing the efficiency of machine learning models has resulted in faster and more scalable solutions, thereby benefiting enterprise applications across various sectors. By focusing on fine-tuning the AI infrastructure, Myadaboyina has played a crucial role in ensuring that AI models can be deployed more effectively, overcoming the common pitfalls of latency, computational bottlenecks, and precision errors.

AI Optimization in Autonomous Vehicles

At Cruise LLC, a leader in autonomous driving technology, Myadaboyina reduced AI model rollout times by 66% and sped up deep learning models by up to 100 times. These gains came from refining inference pipelines, automating deployment workflows, and adopting advanced architectures such as FasterViT for camera-based object detection. Together, these techniques kept latency low in real-time decision-making for self-driving cars, improving their reliability and performance.

Myadaboyina’s expertise with tools like TensorRT and CUDA graphs played a significant role in accelerating the performance of AI models. By collaborating with NVIDIA, he worked on debugging and profiling TensorRT pipelines, ensuring that Cruise’s AI stack remained optimized for real-time inference. His contributions ensured that the AI-driven decision-making processes in autonomous vehicles could keep up with the rapid pace of real-world conditions, making driving systems safer and more efficient.

Advancements at Amazon AWS

Before his work at Cruise, Myadaboyina worked at Amazon AWS, where he focused on AI model training speed and cloud service efficiency. He helped achieve a fivefold improvement in AI model evaluation speeds by optimizing GPU utilization and streamlining multithreaded AWS S3 access. These optimizations reduced training bottlenecks, allowing AI models to be trained faster and more efficiently. He also played a pivotal role in SageMaker Edge AI deployments, helping AI-driven applications operate smoothly across diverse cloud infrastructures.
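As a rough illustration of why multithreaded object-store access helps, the sketch below overlaps many I/O-bound downloads with a thread pool. It is not the AWS implementation described above: `fetch_object` is a hypothetical stand-in that simulates network wait with `time.sleep` rather than calling S3, and the key names are invented.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch_object(key):
    """Hypothetical stand-in for an object-store download (simulated I/O wait)."""
    time.sleep(0.02)
    return key, f"payload-for-{key}".encode()


def fetch_all(keys, max_workers=8):
    """Download many objects concurrently; results keep the order of `keys`."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fetch_object, keys))


keys = [f"shard-{i:03d}" for i in range(16)]

start = time.perf_counter()
serial = [fetch_object(k) for k in keys]
serial_s = time.perf_counter() - start

start = time.perf_counter()
parallel = fetch_all(keys)
parallel_s = time.perf_counter() - start

print(f"serial {serial_s:.2f}s, threaded {parallel_s:.2f}s")
```

Because the work is dominated by waiting on I/O rather than computing, threads can overlap the waits, which keeps the GPU from idling while data arrives.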

His contributions extended to the TVM stack, where he enabled support for quantized models. This innovation reduced computational requirements while maintaining accuracy, allowing AI models to run more efficiently on various hardware platforms. Myadaboyina’s work at AWS demonstrated how strategic optimization of AI model architectures and cloud infrastructure could enhance efficiency and reduce latency, enabling businesses to deploy AI-powered solutions more effectively and cost-efficiently.
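The idea behind quantized models can be sketched with generic symmetric 8-bit quantization. This is an illustrative scheme written from scratch, not TVM's API or the specific method used at AWS; the function names and sample weights are assumptions.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map quantized integers back to approximate float values."""
    return [q * scale for q in quantized]


# Illustrative weights only.
weights = [0.82, -1.27, 0.005, 0.4, -0.63]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

Each weight is stored in one byte instead of four, and the reconstruction error stays within about half of `scale`. That bound is what lets a quantized model cut memory and compute while staying close to the original accuracy.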

The Future of AI Deployment

AI deployment is on the cusp of a significant transformation, moving towards self-optimizing models and federated learning architectures. These advancements herald an era where AI systems can continuously learn, adapt, and optimize themselves based on real-world conditions without human intervention. Such dynamic capabilities promise to make AI systems more robust and adaptable, ensuring they remain effective even as the data landscape evolves. By enabling AI models to self-optimize, the industry can overcome many of the current challenges related to latency, computational bottlenecks, and precision errors, paving the way for more seamless and reliable AI applications.

Federated learning architectures also represent a paradigm shift in AI deployment. By allowing AI models to learn from data distributed across multiple locations without centralized training data, federated learning enhances privacy and reduces the need for extensive data transfers. This decentralized approach ensures that AI systems can adapt to diverse data conditions and provide more personalized and context-aware solutions, setting the stage for future advancements in AI deployment.
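A minimal sketch of federated averaging shows the mechanics: each client trains on its own data, and only the resulting model weight is combined centrally. The one-parameter model `y = w * x`, the client datasets, and the hyperparameters are all assumptions for illustration, and the clients are simulated in a single process.

```python
def local_update(weight, data, lr=0.1, epochs=20):
    """Fit y = w * x on one client's data with plain gradient descent."""
    w = weight
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(global_w, client_datasets):
    """One round: clients train locally, then weights are averaged by sample count."""
    total = sum(len(d) for d in client_datasets)
    updates = [(local_update(global_w, d), len(d)) for d in client_datasets]
    return sum(w * n for w, n in updates) / total


# Each inner list is the private data of one simulated client.
clients = [
    [(1.0, 2.0), (2.0, 4.1)],
    [(1.5, 2.9), (3.0, 6.2), (0.5, 1.0)],
    [(2.5, 5.0)],
]

w = 0.0
for round_id in range(5):
    w = federated_average(w, clients)
print(round(w, 3))
```

In a real deployment the local updates would run on separate devices, so only the updated weights, never the raw `(x, y)` pairs, would cross the network.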

Building Next-Generation AI Efficiency

The transition from research to real-world, scalable applications remains the central challenge for AI and deep learning. Latency, computational bottlenecks, and precision errors frequently obstruct the practical deployment of AI models, so optimizing AI infrastructure and refining development processes are crucial to effective implementation. Addressing these issues can help AI technologies reach their full potential and provide tangible benefits across diverse sectors. The focus lies not only on creating sophisticated models but also on ensuring their efficiency and reliability in practical use. Balancing innovation with operational robustness remains key to integrating AI into everyday applications.