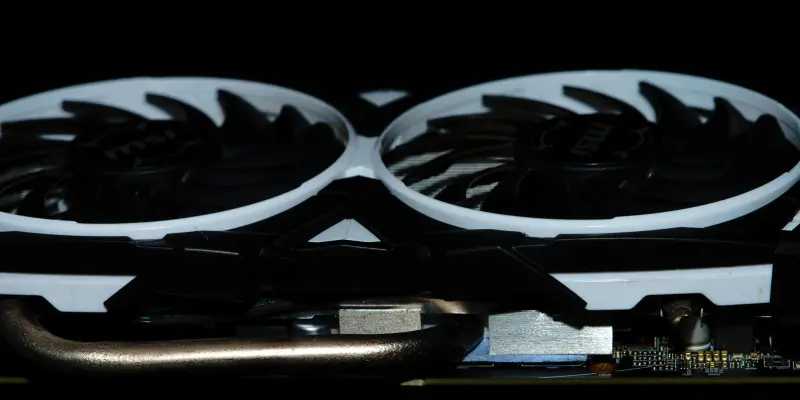

Gigabyte recently faced scrutiny over thermal gel leaking from its RTX 50 series graphics cards, especially the RTX 5080. The issue came to light when a customer reported significant thermal gel leakage after just one month of use. The problem was traced to an excessive amount of thermally conductive gel applied during early production. Although the leak raised concerns about performance degradation, Gigabyte was quick to clarify that the problem is cosmetic: the appearance may be off-putting, but the functionality and longevity of the GPUs are not compromised. The company emphasized that the excess gel does not indicate a defective product, because the gel is formulated to tolerate high temperatures without affecting the GPU’s reliability.

Gigabyte’s Response and Adjustments

Acknowledging the customer feedback, Gigabyte reduced the volume of thermal gel used in subsequent production batches and said it had revised the application process to prevent further leakage. The company also confirmed that the gel can withstand temperatures as high as 150°C, so it remains stable and does not contribute to overheating. Gigabyte has not yet said whether the issue is covered under warranty, which leaves it unclear what support or remedy is available to customers who already own cards from the affected batches. The company nevertheless maintains that its core focus is on the performance and reliability its customers expect.

Consumer Assurance and Future Outlook

Gigabyte’s handling of the thermal gel issue reflects a broader effort to strengthen consumer trust. By correcting the production lapse quickly and refining its manufacturing process, the company seeks to assure buyers that its products are reliable. The incident also shows why quality control matters and why preventive measures are needed to keep similar problems from recurring. Although Gigabyte maintains that the issue is merely cosmetic, the episode underscores the value of transparent communication and a prompt response to customer concerns. The company has assured customers that future GPU models will not be affected by excess gel, and it remains focused on customer satisfaction as it works to hold its position in the competitive graphics card market.