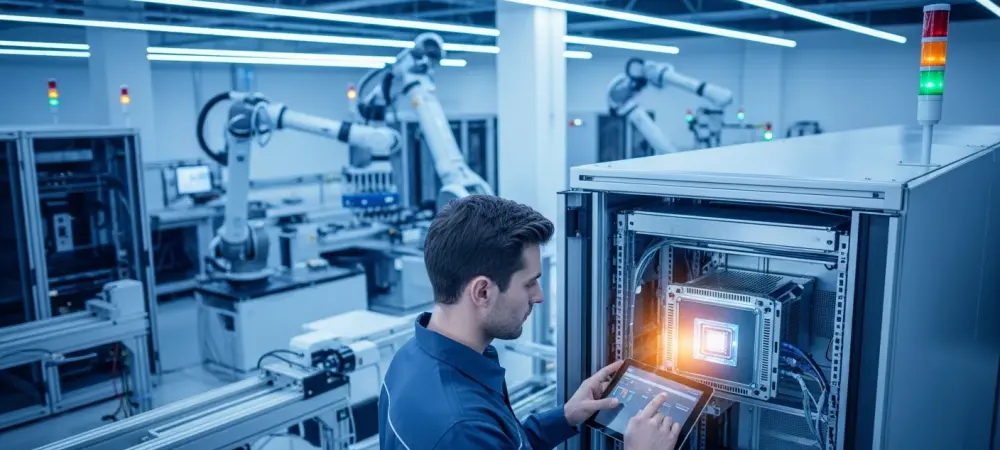

The transition of artificial intelligence from a collection of experimental digital novelties to a fundamental pillar of global industrial operations has reached a critical turning point where reliability matters more than hype. While the preceding years were dominated by the visibility of generative chatbots and creative tools, the focus in 2026 has shifted toward “embedded AI,” where machine learning functions as a silent but vital heartbeat within the infrastructure of modern society. This evolution moves artificial intelligence away from the cloud-based isolation of consumer software and into the high-stakes environments of manufacturing floors, medical operating rooms, and autonomous transport corridors. However, as organizations attempt to weave these intelligent systems into the fabric of their essential operations, they are encountering a formidable obstacle that separates theoretical potential from practical reality. This obstacle is not a lack of imaginative use cases or algorithmic innovation, but rather a profound shortage of the specialized engineering capacity required to deploy, manage, and sustain these complex systems across diverse and demanding global environments.

Transforming Traditional Industries Through Embedded Intelligence

Enhancing Safety and Security in Modern Infrastructure

The automotive industry provides perhaps the most visible example of this industrial transformation, as vehicles are no longer viewed as mechanical assemblies but as high-performance mobile computing platforms. In 2026, the primary objective has moved beyond basic driver assistance toward sophisticated sensor fusion, where a vehicle must synthesize massive streams of data from cameras, LIDAR, and radar in a fraction of a second to ensure passenger safety. This requirement places an immense burden on edge computing, as processing must occur locally with near-zero latency to be effective in safety-critical scenarios. Engineering teams are currently focused on achieving a level of fault tolerance that was previously reserved for aerospace applications, recognizing that an AI failure in a high-speed environment can have catastrophic consequences. Consequently, the development process has become a permanent, long-term commitment involving continuous over-the-air updates and exhaustive audit trails to satisfy the rigorous safety standards set by international regulatory bodies.
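To make the idea of sensor fusion concrete, the sketch below combines independent distance estimates from several sensors by inverse-variance weighting, so that less noisy sensors count for more. This is a deliberately simplified illustration, not how any production vehicle stack works: the `RangeReading` type, the `fuse_ranges` function, and the variance figures are all hypothetical, and real systems typically use tracking filters over time rather than a single weighted average.

```python
from dataclasses import dataclass
from typing import Sequence, Tuple


@dataclass(frozen=True)
class RangeReading:
    """One distance estimate to the same obstacle (hypothetical type)."""
    sensor: str        # e.g. "camera", "lidar", "radar"
    distance_m: float  # estimated distance in metres
    variance: float    # assumed measurement noise variance, must be > 0


def fuse_ranges(readings: Sequence[RangeReading]) -> Tuple[float, float]:
    """Fuse independent estimates by inverse-variance weighting.

    Returns (fused_distance_m, fused_variance).
    """
    if not readings:
        raise ValueError("at least one reading is required")
    if any(r.variance <= 0 for r in readings):
        raise ValueError("variances must be positive")

    # Each sensor is weighted by 1/variance: noisier sensors contribute less.
    weights = [1.0 / r.variance for r in readings]
    total_weight = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total_weight
    # Under the independence assumption, the fused variance is 1 / sum(weights).
    return fused, 1.0 / total_weight


# Illustrative values only; the variances are made up for the example.
readings = [
    RangeReading("camera", 25.4, 4.0),
    RangeReading("lidar", 24.9, 0.25),
    RangeReading("radar", 25.1, 1.0),
]
distance, variance = fuse_ranges(readings)
```

Under the independence assumption, the fused variance is never larger than that of the best single sensor, which is one reason combining modalities is attractive in safety-critical settings.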

Home security and public safety systems are also experiencing a major shift by moving away from reactive motion detection toward proactive, context-aware interpretation of human intent. The goal for modern security infrastructure is to eliminate the persistent problem of “noise”—false alarms triggered by weather or animals—while identifying genuine threats with high precision. Achieving this requires deep integration of on-device video analytics that can distinguish between a delivery person and a potential intruder based on behavioral patterns. However, this increased intelligence creates a wider attack surface for cyber threats, necessitating a secondary layer of engineering focused entirely on defensive security measures. Engineers must ensure that as these systems become more autonomous, they do not become vulnerabilities that hackers can exploit to gain access to private data or physical spaces. This delicate balance between advanced interpretation and hardened security is now a core requirement for any commercial or residential security deployment.

Optimizing Production and Healthcare Efficiency

Agriculture and food production sectors are increasingly turning to AI to combat the volatility of climate change and labor shortages, yet they face the unique challenge of “real-world messiness.” Unlike a controlled laboratory or a clean data center, agricultural environments are characterized by unpredictable lighting, variable weather, and the inherent inconsistency of organic products. In 2026, successful implementations of computer vision for quality grading or autonomous machinery for planting must be treated as rugged operations engineering rather than experimental science. This shift in mindset allows companies to build resilient systems that can operate in the mud and dust of a farm while providing the same level of analytical precision found in a corporate office. The engineering challenge lies in creating models that are robust enough to handle these environmental fluctuations without requiring constant manual recalibration, effectively turning AI into a reliable tool for stabilizing global food supply chains.

In the healthcare sector, the integration of intelligent systems is being handled with extreme caution due to the high stakes of medical diagnostics and patient care. AI is now a standard component in radiology departments, where it assists clinicians in identifying subtle anomalies in medical imaging that might be missed by the human eye. The engineering burden in this space is uniquely heavy, requiring an intense focus on data privacy, validation, and explainability to meet strict legal and ethical requirements. Rather than attempting to replace medical professionals, these systems are designed to automate tedious workflows and provide predictive risk models for patient readmission, allowing doctors to focus on complex cases. Maintaining these systems requires a highly structured development lifecycle where every update is rigorously documented and tested against diverse patient datasets to prevent bias. This disciplined approach ensures that AI remains a supportive and transparent partner in the clinical environment rather than a “black box” that operates without accountability.

Overcoming the Engineering Bottleneck to Achieve Scale

Building the Talent Ecosystem and Execution Capacity

A comprehensive analysis of current industry trends reveals that the most significant bottleneck to widespread AI adoption is no longer a lack of vision but a critical shortage of human capital and specialized engineering infrastructure. Building a functional industrial AI feature is a complex multidisciplinary effort that extends far beyond the capabilities of a single machine learning specialist. It requires a robust ecosystem of data engineers who can build pipelines to ingest and clean massive datasets, backend engineers to integrate intelligent features with legacy enterprise software, and MLOps teams to monitor performance. These systems are prone to “model drift,” where the accuracy of an AI degrades over time as real-world conditions change, making continuous monitoring a non-negotiable requirement. Organizations are finding that without this comprehensive engineering support structure, even the most innovative AI prototypes will fail to provide sustained value or achieve the scale necessary to justify their initial investment.
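One common way to watch for model drift is to compare the distribution of a live input feature against a reference sample captured at training time. The sketch below uses the Population Stability Index (PSI) for that comparison. It is a minimal illustration under stated assumptions: the function names are hypothetical, the equal-width binning is the simplest possible choice, and the 0.2 alert threshold is a widely quoted rule of thumb rather than a value taken from this article.

```python
import math
from typing import List, Sequence


def population_stability_index(
    reference: Sequence[float], live: Sequence[float], bins: int = 10
) -> float:
    """PSI between a reference sample and a live sample of one feature."""
    if not reference or not live:
        raise ValueError("both samples must be non-empty")

    lo, hi = min(reference), max(reference)
    # Fall back to a width of 1.0 if the reference sample is constant.
    width = (hi - lo) / bins or 1.0

    def bucket_shares(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the reference range into the edge bins.
            idx = max(0, min(bins - 1, idx))
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_shares = bucket_shares(reference)
    live_shares = bucket_shares(live)
    return sum(
        (cur - ref) * math.log(cur / ref)
        for ref, cur in zip(ref_shares, live_shares)
    )


def drift_detected(
    reference: Sequence[float], live: Sequence[float], threshold: float = 0.2
) -> bool:
    """Flag drift when PSI exceeds the (assumed) alert threshold."""
    return population_stability_index(reference, live) > threshold


# Synthetic data: the live sample is shifted toward the upper half of the range.
training_sample = [i / 100 for i in range(100)]
shifted_sample = [0.5 + i / 200 for i in range(100)]
```

In practice a check like this would run on a schedule for each monitored feature, with alerts routed to the MLOps team responsible for retraining.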

To address this persistent talent shortage, many leading enterprises are moving away from the traditional model of maintaining entirely in-house development teams for every component of their AI strategy. In 2026, the use of strategic partnerships and offshore development centers has evolved from a simple cost-saving measure into a vital necessity for gaining the execution capacity needed to remain competitive. These specialized centers provide the “engineering muscle” required to handle the labor-intensive aspects of data hygiene, model training, and routine system maintenance without distracting the core team from primary product innovation. This hybrid approach allows companies to scale their technical operations rapidly, ensuring that the transition from a single successful pilot program to a global rollout does not collapse under the weight of its own complexity. By leveraging global talent pools, organizations can maintain the high tempo of iteration required to stay ahead in a market where technical agility is the primary differentiator between leaders and laggards.

Managing the Continuous Operational Lifecycle

The operational reality of industrial AI in 2026 is that it functions as a continuous, iterative loop rather than a project with a clearly defined completion date. Success in this environment is built on a foundation of strict data hygiene, as early failures showed that poor data quality is the most frequent cause of model failure. Engineers must ensure that intelligent systems remain compatible with legacy infrastructure, which often requires building sophisticated middleware to bridge the gap between modern algorithms and decades-old machinery. Once a system is deployed, the work shifts toward constant monitoring and safe rollouts that use feature flags to limit risk in live environments. This lifecycle approach acknowledges that as user behavior and environmental factors evolve, the underlying AI models must be retrained and updated to maintain their accuracy and safety. This ongoing maintenance is what transforms a fragile experimental tool into a resilient piece of industrial equipment that can be trusted with critical tasks.
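A feature-flag rollout of the kind described above is often implemented by hashing a stable identifier into a fixed bucket and enabling the new behavior only for buckets below a configured percentage. The sketch below shows that pattern using only the standard library. The flag name, device identifiers, and function names are hypothetical, and a real deployment would usually rely on a dedicated flag service rather than this hand-rolled check.

```python
import hashlib


def rollout_bucket(flag: str, unit_id: str) -> int:
    """Map a (flag, unit) pair to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{flag}:{unit_id}".encode("utf-8")).digest()
    # The first 8 bytes of the hash give a stable integer for bucketing.
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(flag: str, unit_id: str, percent: int) -> bool:
    """Return True if the flag is on for this unit at the given rollout percent."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return rollout_bucket(flag, unit_id) < percent


# Hypothetical fleet of devices and a hypothetical flag name.
devices = [f"device-{n}" for n in range(1000)]
enabled_at_10 = [d for d in devices if is_enabled("new-vision-model", d, 10)]
enabled_at_50 = [d for d in devices if is_enabled("new-vision-model", d, 50)]
```

Because the bucket depends only on the flag name and the identifier, a device that receives the new model at 10 percent keeps it as the rollout widens to 50 percent, and setting the percentage back to 0 acts as an immediate rollback.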

The companies that dominate their respective sectors in 2026 are those that have stopped viewing AI as an optional add-on and started treating it as a living, permanent part of their corporate infrastructure. They have invested heavily in the “machine that builds the machine,” creating standardized, repeatable processes for data engineering and model deployment that allow for rapid scaling across global locations. For organizations still struggling to move past the pilot phase, the path forward involves a rigorous commitment to engineering discipline and the abandonment of the “innovation lab” mindset in favor of operational excellence. The most effective strategy for the coming years is to prioritize the construction of robust data pipelines and to invest in MLOps talent so that AI features remain secure and reliable throughout their entire lifespan. By focusing on these foundational engineering requirements, enterprises can close the gap between the promise of artificial intelligence and the reality of an intelligent industrial landscape that delivers measurable value every day.