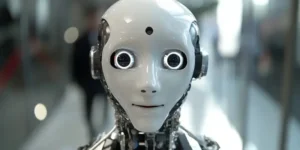

Artificial Intelligence (AI) and the Internet of Things (IoT) are each transformative in their own right, and both have reshaped a wide range of industries. Their fusion, termed AIoT (Artificial Intelligence of Things), heralds a new era of industrial advancement and efficiency gains. AIoT represents the latest milestone in the ongoing industrial revolution, promising optimized processes, innovative business models, and competitive advantages.